Can an algorithm anticipate a flood or help a farmer better irrigate their crops? The answer is yes, and there are eight teams in Latin America that are already proving it.

Climate change is not a problem of the future. It is a present reality that displaces families, destroys crops, damages infrastructure and puts biodiversity at risk. In this scenario, technology, and specifically the combination of open data and artificial intelligence, is a powerful tool for building smarter, faster and more effective solutions.

In this post we present the eight projects selected within the framework of the Open Data and AI Innovation Challenge (Data2AIChallenge), an initiative promoted by the Open Data Charter (ODC) with the support of the Patrick J. McGovern Foundation and the governments of Colombia and Uruguay. The eight teams, chosen from all the proposals submitted, will receive six months of specialized mentoring to turn their ideas into reality.

What is Data2AIChallenge?

The Data2AIChallenge is a regional call focused on climate action that seeks to support the development of projects that reuse open public data and apply artificial intelligence to respond to specific environmental challenges in Colombia and Uruguay.

Its objectives are:

- Encourage citizen participation.

- Promote ethical and innovative uses of AI and open data.

- Showcase solutions with real impact.

The call accepted proposals from students, developers, journalists, activists and researchers. A multidisciplinary jury (made up of specialists in open government, climate change and digital transformation from institutions such as the Development Bank of Latin America, the Agency for Electronic Government and the Information and Knowledge Society of Uruguay and the Ministry of ICT of Colombia) evaluated the proposals according to criteria of innovation, relevance and methodological rigor.

Of these, eight projects were selected that demonstrate that open data can be a lever for change in the environmental sector.

The eight selected projects

1. Alerta Yí: early warnings of floods with citizen science

The Yí River basin, in Uruguay, is an area recurrently affected by floods. The Alerta Yí team proposes a participatory early warning system that integrates open data, artificial intelligence models and citizen science. The aim is both to anticipate risk and to build community resilience, i.e. for communities themselves to be an active part of the surveillance and response system.

This type of hybrid approach between technology and citizen participation is especially valuable in contexts where institutional resources are limited and local knowledge is essential.

2. Minga Abierta: community mapping to prevent risks in Medellín

The slopes of Medellín (Colombia) concentrate popular neighborhoods with high exposure to landslides and floods. The Pluriverse Narrative Collective, responsible for the Minga Abierta project, combines community cartography, citizen science and predictive models to anticipate climate risks.

The name of the project is no coincidence: "minga" is a word of Quechua origin that refers to collective work. The proposal understands that data without community is not enough, and that risk prevention is also an act of social organization.

3. AgroClima Platform: smart irrigation prescriptions for family farming

Water stress directly threatens the food security of small producers in the Municipality of Magdalena (Colombia). AgroClima Platform uses artificial intelligence and open-access satellite data to generate accurate irrigation prescriptions tailored to each plot.

This is a very clear example of the democratizing potential of open data: climate information that was previously available only to large agro-industrial farms can now be put at the service of the family farmers who most need to adapt to climate change.

4. Amenaza Roboto: AI to open environmental files

How many environmental impact assessment processes are buried in dense and inaccessible documents? Amenaza Roboto applies generative AI to transform these files into open data that any citizen can audit, understand and reuse.

This project defends a fundamental premise: transparency is not just about publishing data, but about making that data understandable and actionable. When citizens can understand what the environmental files say, accountability becomes real. Amenaza Roboto also has a track record: it was the winning team in an earlier challenge organized by the ODC itself in Uruguay in 2022.

5. Urban Light: light pollution maps to protect biodiversity

Light pollution is one of the least obvious forms of pollution, but with documented effects on biodiversity, the circadian cycles of animals and plants, and also on human health. Luz Urbana uses big data and artificial intelligence to cross-reference satellite images with urban data and generate maps of light pollution in Uruguay.

The project represents an innovative use of open geospatial data to address an environmental problem that is routinely left out of local political agendas.

6. Recyclables Observatory: climate decisions based on waste data

How can we compare the climate impact of different waste management policies? The Recyclables Observatory project, promoted by CEMPRE Uruguay, answers this question by applying open data, IPCC methodology and artificial intelligence to measure the climate impact of recycling decisions on a territorial scale.

The value of this proposal lies in transforming scattered data on waste into comparable and actionable indicators that can guide evidence-based public policies.

7. Slope Guardians: Actionable weather alerts against landslides

Colombia is one of the countries in the world with the highest incidence of landslides. Guardianes de la Ladera transforms open geospatial data into local climate alerts, using artificial intelligence to anticipate landslides with traceable evidence and communicate them in a way that can guide concrete decisions at the community level.

The proposal focuses on a classic problem of warning systems: the gap between general forecasts and local decisions.

8. BIO-AI: Data Journalism to Defend the Amazon

The Amazonian piedmont in Caquetá (Colombia) is home to a wealth of endemic species threatened by deforestation and habitat loss. BIO-AI combines artificial intelligence and data journalism to build conservation-oriented audiovisual experiences. The proposal understands that in order for scientific data to reach the public, they must be converted into stories that people can understand and that mobilize wills.

In a context where the Amazon continues to be subject to political disputes and economic pressures, projects like this show that knowledge, when communicated well, can be a tool for territorial defense.

What do these projects teach us?

Beyond their particularities, the eight selected projects share a series of features that are worth highlighting:

- Open data is the infrastructure for climate action. Without free access to satellite, climate, geospatial or waste data, none of these projects would be possible. The opening of public data allows citizen innovation to flourish.

- AI is a tool, not a magic bullet. All these teams use artificial intelligence at the service of a specific problem, with real data and clear objectives. AI is not the idea itself, but a means of obtaining better results.

- Citizen participation amplifies impact. Several of these projects integrate citizen science and community mapping. This not only improves the quality of the data; it also generates local ownership of solutions.

- Open data reduces gaps. Family farmers, hillside communities, flood zone dwellers: the selected projects put the most sophisticated tools at the service of those who need them most.

Conclusion: When Open Data Becomes Action

The eight projects of the Data2AIChallenge are a practical demonstration that the opening of public data, combined with artificial intelligence and citizen engagement, can generate concrete solutions to real climate problems. From the slopes of Medellín to the Amazonian foothills, from the fields of Magdalena to the illuminated nights of Uruguay, these initiatives show that change does not always come from large institutions or millionaire budgets: sometimes it is born from small teams, with good questions, access to open data and a willingness to transform their environment.

The challenge now is to continue expanding the availability, quality and usability of public climate data, and to accompany those who want to use it to build a more resilient world. Because open data is the starting point for everything that is to come.

When we talk about generative AI applied to data, we often stop at isolated examples: a graph, a query, a model. But, in practice, the job of an analyst is much broader: collecting data, cleaning it, understanding it, creating metric variables, and drawing useful conclusions.

Today, AI and data science can optimize data analytics. In this exercise, we are going to show how to do it through a real and reproducible case: a complete analysis of fuel prices in Spain, supported by generative AI in each phase of the workflow.

In this educational exercise, which is reproducible on Google Colab, we analysed more than 11,000 Spanish petrol stations using public data from the Ministry for Ecological Transition and Demographic Challenge to answer business questions such as:

- Which province has the most expensive fuels?

- Does geographic location affect price?

- Are there significant differences between brands?

- Can we predict future fuel prices?

Each step explains how GenAI accelerated the analysis, documenting real problems encountered and proposing reusable solutions.

Access the data lab repository on GitHub

Access the Google Colab notebook

Quick start

Google Colab (Recommended)

Click on the "Open In Colab" badge above. The notebook runs directly in the browser without the need to install anything.

Total Time: ~4 minutes

Local (Python 3.9+)

git clone <repo-url>

cd exercise-data-ia-copilot

pip install -r requirements.txt

jupyter notebook notebook/Analisis_Carburantes_v0_1.ipynb

Structure

exercise-data-ia-copilot/

├── notebook/

│ └── Analisis_Carburantes_v0_1.ipynb # 19 cells, 100% executable

│

├── prompts/ # Real problems + solutions

│ ├── ingestion/

│ │ ├── descargar_dataset.md # Robust APIs with fallbacks

│ │ └── explorar_estructura.md

│ ├── cleanup/

│ │ ├── validar_precios.md

│ │ └── normalizar_marcas.md

│ ├── visualization/

│ │ ├── precio_por_provincia.md # Interactive mapbox scatter

│ │ ├── distribucion_por_marca.md # Box plot top 10 brands

│ │ ├── ubicacion_vs_precio.md # Scatter mapbox 11k stations

│ │ ├── analisis_impacto_features.md # Correlation + trends

│ │ └── mejoras_visualizaciones_interactivas.md

│ └── features/

│ ├── crear_fin_semana.md

│ ├── distancia_punto_referencia.md

│ └── region_geografica.md

│

├── posts/

│ └── Reflexion_GenAI_Analisis_Carburantes.md # Reflection on the process

│

├── specs/ # Technical documentation

│ └── 001-fuels-ia/

│ ├── spec.md # Functional specification

│ ├── plan.md # Technical plan + lessons learned

│ └── checklists/

│

├── tests/ # Validation scripts

│ ├── test_descarga_local.py

│ └── test_notebook_completo.py

│

├── requirements.txt # pandas, matplotlib, scikit-learn

├── LICENSE # MIT

└── README.md # This file

Phases of the analysis

PHASE 0: Preparation

- Environment setup, imports, and metadata

- Notebook version and iteration counter

PHASE 1: Robust ingestion (T009-T010)

- Download from the API of the Ministry for the Ecological Transition and Demographic Challenge

- Triple fallback: requests → curl → demo data

- Handles SSL, timeouts, and IP blocks
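
The fallback chain described above can be sketched in a few lines. This is a minimal illustration, not the notebook's actual code: the function `load_with_fallbacks`, the three stand-in loaders and the field names `provincia` and `precio` are all hypothetical, and the loaders simulate failures instead of calling the real API.

```python
from typing import Callable, Iterable, List, Tuple


def load_with_fallbacks(
    loaders: Iterable[Tuple[str, Callable[[], list]]]
) -> Tuple[str, list]:
    """Try each loader in order; return (name, data) from the first that succeeds."""
    errors: List[str] = []
    for name, loader in loaders:
        try:
            data = loader()
            if data:  # an empty payload counts as a failure too
                return name, data
            errors.append(f"{name}: empty response")
        except Exception as exc:  # SSL errors, timeouts, blocked IPs, etc.
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all loaders failed: " + "; ".join(errors))


# Hypothetical stand-ins for the three stages (requests -> curl -> demo data).
def via_requests() -> list:
    raise ConnectionError("SSL handshake failed")


def via_curl() -> list:
    raise TimeoutError("timed out")


def demo_data() -> list:
    return [{"provincia": "MADRID", "precio": 1.55}]


source, stations = load_with_fallbacks(
    [("requests", via_requests), ("curl", via_curl), ("demo", demo_data)]
)
print(source, len(stations))
```

Returning the name of the source that succeeded lets the rest of the analysis flag clearly when it is running on demo data rather than live data.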

PHASE 2: Cleaning and validation (T014-T017)

- Price validation (realistic range)

- Brand normalization (non-standardized variants)

- Coordinate filtering (Spain bounding box)

- Null value detection
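
As a rough sketch of these four checks, the example below uses only the standard library. The bounding box is the one quoted in the lessons table; the price range and the field names (`marca`, `precio`, `lat`, `lon`) are assumptions made for illustration, not values taken from the notebook.

```python
import math

# Bounding box quoted in the lessons table of this exercise.
LAT_MIN, LAT_MAX = 27.5, 43.8
LON_MIN, LON_MAX = -18.2, 4.4
# Assumed "realistic" price range in EUR per litre (illustrative only).
PRICE_MIN, PRICE_MAX = 0.5, 3.0


def is_missing(value) -> bool:
    """True for None or a float NaN."""
    return value is None or (isinstance(value, float) and math.isnan(value))


def clean_station(row: dict):
    """Return a cleaned copy of the row, or None if it fails validation."""
    if any(is_missing(row.get(key)) for key in ("marca", "precio", "lat", "lon")):
        return None
    if not PRICE_MIN <= row["precio"] <= PRICE_MAX:
        return None
    if not (LAT_MIN <= row["lat"] <= LAT_MAX and LON_MIN <= row["lon"] <= LON_MAX):
        return None
    # Normalize brand variants such as " repsol " and "Repsol".
    return {**row, "marca": row["marca"].strip().upper()}


raw = [
    {"marca": " repsol ", "precio": 1.60, "lat": 40.4, "lon": -3.7},
    {"marca": "Cepsa", "precio": 0.0, "lat": 41.4, "lon": 2.2},  # unrealistic price
    {"marca": "BP", "precio": 1.58, "lat": 48.9, "lon": 2.3},  # outside the box
    {"marca": "Shell", "precio": float("nan"), "lat": 37.4, "lon": -6.0},  # null price
]
cleaned = [row for row in map(clean_station, raw) if row is not None]
print(cleaned)
```

With pandas, the brand step corresponds to the `.str.upper().str.strip()` pattern listed in the lessons table.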

PHASE 3: Exploratory Analysis (T020-T023)

4 visualizations with answers to business questions:

- Bar chart: average price by province (top 12)

- Scatter map: location vs price (peninsula ● / islands ▲)

- Histogram: price distribution (mean + median)

- Bar chart: top 8 brands (normalized, with counts)
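
The aggregation behind the first chart can be sketched as follows. This is an illustration under assumptions: the field names `provincia` and `precio` and the sample prices are invented, and the notebook may compute the ranking differently.

```python
from collections import defaultdict
from statistics import mean


def top_provinces_by_mean_price(rows, n=12):
    """Average the price per province and return the n most expensive."""
    by_province = defaultdict(list)
    for row in rows:
        by_province[row["provincia"]].append(row["precio"])
    averages = {prov: mean(prices) for prov, prices in by_province.items()}
    return sorted(averages.items(), key=lambda item: item[1], reverse=True)[:n]


# Made-up sample, for illustration only.
sample = [
    {"provincia": "MADRID", "precio": 1.60},
    {"provincia": "MADRID", "precio": 1.50},
    {"provincia": "BALEARES", "precio": 1.70},
    {"provincia": "SEVILLA", "precio": 1.40},
]
ranking = top_provinces_by_mean_price(sample, n=2)
print(ranking)
```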

PHASE 4: Variable Engineering (T028-T030)

- es_fin_semana: binary (0 = weekday, 1 = weekend)

- distancia_a_madrid: proximity to an economic hub

- Region: North/Central/South (based on latitude)
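
One possible way to derive these three variables is sketched below. The haversine formula is a standard choice for great-circle distance, but the source does not say which distance the notebook uses; the Madrid coordinates are approximate and the latitude thresholds for the regions are hypothetical.

```python
import math
from datetime import date

MADRID = (40.4168, -3.7038)  # approximate city-centre coordinates (assumption)


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points, in kilometres."""
    radius = 6371.0  # mean Earth radius in km
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * radius * math.asin(math.sqrt(a))


def region_from_latitude(lat, south_max=38.0, north_min=41.0):
    """Split into North/Central/South; the thresholds are illustrative guesses."""
    if lat >= north_min:
        return "North"
    if lat < south_max:
        return "South"
    return "Central"


def add_features(row, day):
    """Return a copy of the row with the three engineered variables added."""
    return {
        **row,
        "es_fin_semana": 1 if day.weekday() >= 5 else 0,  # Saturday=5, Sunday=6
        "distancia_a_madrid": haversine_km(row["lat"], row["lon"], *MADRID),
        "region": region_from_latitude(row["lat"]),
    }


station = {"lat": 41.3874, "lon": 2.1686}  # a point in Barcelona
enriched = add_features(station, date(2024, 6, 1))  # 1 June 2024 was a Saturday
print(enriched)
```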

PHASE 5: Features Impact Analysis (T034-T037)

3 additional visualizations showing the impact of each feature:

- Scatter plot: price vs distance to Madrid (geographical correlation)

- Comparative bar chart: weekend vs weekday price (time impact)

- Regional box plot: price distribution by north/central/south region
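
The "geographical correlation" in the first chart can be quantified with a Pearson coefficient. The sketch below implements it by hand so that it also runs on Python 3.9; the distances and prices are made-up numbers, not results from the notebook.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / (spread_x * spread_y)


# Made-up values: distance to Madrid (km) and price (EUR per litre).
distances = [0, 100, 200, 300]
prices = [1.50, 1.52, 1.54, 1.56]
r = pearson(distances, prices)
print(round(r, 3))
```

A coefficient close to zero would suggest that distance alone explains little of the price variation, which is itself a useful answer to the business question.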

Documented Technical Lessons

Each real problem encountered has a documented solution:

| # | Problem | Solution | Reusable for |
|---|---|---|---|
| 1 | SSL/IP blocking in API | Triple fallback (requests→curl→demo) | Spanish public APIs |
| 2 | Coordinates outside Spain | Bounding box [lat: 27.5 to 43.8, lon: -18.2 to 4.4] | Geographic analysis |
| 3 | Brand variants not standardised | .str.upper().str.strip() before grouping | Any aggregation |
| 4 | Similar values hard to tell apart visually | ax.set_xlim(min*0.95, max*1.05) | Tight ranges |
| 5 | ValueError: y contains NaN | Validate before train_test_split | ML pipelines |

Figure 1. Summary table of the solutions proposed for each problem in the development of the exercise. Source: own elaboration – datos.gob.es
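
Row 5 of the table is worth a small illustration. The sketch below shows one way to validate the target before splitting the data; the helper `drop_missing_targets` and the sample values are hypothetical, and in the real pipeline the cleaned lists would then be passed to scikit-learn's `train_test_split`.

```python
import math


def drop_missing_targets(features, targets):
    """Keep only the (x, y) pairs whose target is a real number."""
    kept = [
        (x, y)
        for x, y in zip(features, targets)
        if y is not None and not math.isnan(y)
    ]
    return [x for x, _ in kept], [y for _, y in kept]


# Made-up rows: [distance to Madrid in km, weekend flag] and price targets.
X = [[12.0, 0], [250.5, 1], [480.2, 0]]
y = [1.55, float("nan"), 1.61]

X_clean, y_clean = drop_missing_targets(X, y)
print(len(X), "->", len(X_clean))
```

Filtering features and targets together keeps the two lists aligned, which is the property that matters before any train/test split.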

Each solution is documented in prompts/ with:

- Original prompt: what we asked GenAI

- Result obtained: Code that worked

- Reflection: What we learned + reusable pattern

Conclusion

The interesting thing about this exercise is not so much the final result of the analysis as the path to get there. Working with real data is almost never an obvious, linear process. In this case, the process was full of small frictions that also define the actual work of any data analyst.

From the beginning, various problems appear: APIs that do not always respond stably, occasional crashes when trying to download information, or the need to design fallback mechanisms so as not to depend on a single data source.

On all these points, GenAI has helped to propose approaches and generate alternatives, but always within a process of constant validation by the analyst.

In summary, the main takeaway from this exercise is that the value lies in how the obstacles in the process are overcome, not in avoiding them.

The entire exercise, including the executable notebook in Colab, the code and prompts used in each phase, is available on GitHub to be able to reproduce it step by step.

Content created by Alejandro Alija, an expert in digital transformation and innovation. The content and views expressed in this publication are the sole responsibility of the author.

One of the biggest challenges of the open data ecosystem is its dissemination and the recognition of its value by society. Knowing that open data exists and understanding what it is for amplifies its impact. In an environment where algorithms are increasingly present in everyday life, data literacy has become a necessary civic skill. Civil rights are increasingly exercised in digital form and, in this context, digital rights emerge as an essential frame of reference to ensure that technological transformation leaves no one behind. Added to this is the rise of artificial intelligence, which amplifies the value of data, but also the risks derived from its biased or non-transparent use.

Incorporating data literacy into educational curricula from an early age is key to overcoming these challenges, as it provides students with technical knowledge and tools to participate in society in an informed way. The V National Open Data Meeting held in Pamplona on 8 May focused precisely on the role of data in the education sector under the slogan "Learn and undertake". The challenge of this edition has been EDUCA-DATA, a resource that brings open data to the classroom in a practical and accessible way, showing its value in understanding reality and generating opportunities.

What does the annual challenge of the National Open Data Meeting consist of?

The National Open Data Meeting poses a different challenge every year, in which experts from different fields work together to find solutions. The challenge is proposed by the organization and volunteers related to the field of data, linked to the academic world and the public administration, collaborate throughout the year to respond to the challenge. The conclusions are presented during the annual event and all the documentation generated is public.

What is EDUCA-DATA?

Data is a citizen tool to understand and transform the world. EDUCA-DATA is an educational project that facilitates learning about the use and reuse of public open data. It seeks to strengthen digital skills and critical thinking and to promote a culture of open knowledge.

EDUCA-DATA is mainly aimed at students and teachers of Compulsory Secondary Education, Baccalaureate and Vocational Training, but also at citizens in general. The educational material allows students to work on open data concepts in the classroom, contains resources that teachers can use as support in the classroom and makes it easier for anyone interested to learn independently about this topic.

To address this challenge, three coordinated and complementary documents have been prepared; each can be understood on its own, without consulting the other two. The content of each one is detailed below. All the materials are available in section 5 of the EDUCA-DATA challenge, at the bottom of the meeting page.

Presentation of open data, a collection of essential data

The centerpiece of the materials produced is a presentation in PowerPoint format. It is a 65-slide document that allows anyone without prior knowledge to approach open data. This document includes all the content that students will work on in the classroom together with the teachers and articulates the complete didactic sequence, from the introduction to the data to the benefits of its opening.

Theoretical document: concepts, examples and resources to deepen

All the material produced is based on a solid theoretical basis. The technical document develops in greater depth the concepts, definitions and examples that appear in the presentation, and acts as a reference when students or teachers need to delve deeper into a specific point.

With a didactic approach, it traces a path that allows us to understand how open data, correctly processed and published, provides significant value in areas as diverse as investigative journalism, science against climate change or citizen participation. The inclusion of real cases, explained in a clear and accessible way, makes it easier to understand their impact on our daily lives. The document is designed both to be read from start to finish, as a fluid and enjoyable read, and to be consulted ad hoc whenever you want to clarify a concept or expand on a specific aspect. In addition, it includes links to external resources for those who wish to delve into the different sections.

A teacher's guide, discovering the power of data

To enable teachers to work in the classroom, an exhaustive teaching guide has been developed, which allows them to manage their work with students autonomously. This document contains the curricular framework, the didactic guidelines and the necessary information for classroom practice. The guide is organized in two parts: the first includes the conceptual framework and the curricular fit, and the second contains the classroom materials.

At the curricular level, the material developed fits mainly into the following subjects: Digitalization, Economics, Geography and History and Mathematics.

The content is developed in five didactic units that allow you to gradually approach open data:

- Introduction. The world of data.

- Open Data. What are they and what defines them?

- The formats. The packaging of information.

- Licenses. The rules of the game.

- The benefits. Why does all this matter?

Figure 1. EDUCA-DATA teaching modules: open data in the classroom. Source: own work – datos.gob.es

In the teaching guide, teachers will find everything they need to teach the five teaching units and their activities autonomously, without the need for prior specific training in open data. Specifically:

- The conceptual and historical context that frames open data.

- Its fit into the LOMLOE curriculum of several subjects.

- The objectives and contents of each unit.

- The key ideas that should be transferred to the classroom.

- The most common errors and confusions of students.

- Proposals for rapid evaluation.

- References for further information.

The guide covers four areas:

- A conceptual and historical framework that provides teachers with the necessary context about open data. It is information that is not designed to be transferred to the classroom as it is.

- A curricular framework with the assessment of the fit in four subjects (Digitalization, Economics, Geography and History and Mathematics), a reasoned didactic recommendation, cognitive progression according to Bloom's taxonomy and detailed alignment tables with LOMLOE.

- The five didactic units. It is the core of this material and each one follows the same structure to facilitate the work of the teaching staff.

- Two integrating pieces that close the tour, in the form of practical application exercises: an integrative case study presented as a journalistic role-playing game and an advanced practical exercise to work with data.

In addition, a basic glossary and two in-depth studies on the concepts of distribution and licensing are included as transversal contents. The guide is presented as an open proposal that teachers can take as a reference and adapt it to their class, their students and their way of teaching.

Open data in the classroom, a commitment to the citizenship of the future

Bringing open data closer to students in Secondary, Baccalaureate and Vocational Training contributes to forming citizens capable of understanding the digital ecosystem of information, of contrasting what they read with public data and of exercising their right to transparency and participation. For this reason, these educational resources have a great value that goes beyond digital and data literacy, since, in their most civic dimension, they contribute to forming people who are more informed, more critical and better prepared to understand and transform the world in which they live.

Every time an asthmatic person checks the level of suspended particles before going for a run, or when a city council decides to close a playground due to a pollution episode, there is open data behind that decision. Open environmental and climate data – on air quality, water, biodiversity or extreme events – are no longer the exclusive preserve of scientists and institutions and have become a global civic infrastructure: accessible, reusable and, increasingly, generated by citizens themselves.

The question is no longer whether this data exists. It exists, and in unprecedented quantities. The question is: who uses it and for what? This article covers this ecosystem of information, from global platforms to local repositories, including citizen projects that have transformed our environment.

The citizen turn: from consumers to data producers

For decades, environmental data came mainly from state agencies, government satellites, and large laboratories. That panorama began to transform when sensors became cheaper, smartphones became massive, and organized communities understood that measuring their environment was also a way to protect themselves and their surroundings. In this way, the information generated by citizens is added to that of public bodies, expanding and enriching the collective understanding of the environment. Some examples are:

- iNaturalist, the citizen science platform for documenting biodiversity, accumulates more than 200 million observations, made by 3.3 million participants worldwide. Its data, integrated into GBIF (Global Biodiversity Information Facility), is used in conservation research, in monitoring the impacts of climate change and in biodiversity policies in dozens of countries.

- IQAir AirVisual is a global air quality network, with more than 30,000 stations and real-time data from more than 100 countries, including maps, 7-day forecasts, and recommendations for vulnerable groups.

- NASA's GLOBE Observer has been allowing anyone to record observations of clouds, temperature, ground cover and mosquito habitats from their mobile phone since 2016 – a critical indicator for detecting pockets of vector-borne diseases aggravated by global warming.

- Meteoclimatic is a collaborative network of automatic weather stations that share data in real time, focused on the Iberian Peninsula and nearby areas.

These projects show that citizens no longer only consume data: they also produce it, validate it and make it available to the public.

Cases that change policies: from citizen data to public decision

One of the most persistent prejudices about citizen science is that its data is too imprecise to have a real impact or even to be considered science. Several recent projects challenge that argument and are pushing for the belief to change.

The European COMPAIR project, funded by the Horizon Europe programme between 2021 and 2024, deployed citizen air quality sensors in five pilot locations: Athens, Berlin, Flanders, Plovdiv and Sofia. Citizen sensors are low-cost environmental measurement devices (air, noise, temperature, water, etc.) that citizens themselves install, maintain and use to generate open data on their immediate environment. These sensors were installed in neighbourhoods and spaces used by Roma communities, the elderly and schoolchildren. This choice aimed to make visible exposures to risk that are usually off the radar (for example, school routes with heavy traffic or insufficiently monitored peripheral neighborhoods) and to provide additional data that would allow administrations to design measures aimed at those who breathe the most polluted air. In Sofia, for example, the publication of pollution maps at school entrances led to a documented increase in the use of public school transport; a citizen data project that changed collective behavior.

Citizen data, moreover, when it respects methodological and legal conditions, can be admissible before the courts and contribute to policy improvement. It is precisely this type of use that is being developed by the Sensing for Justice (SensJus) project: a Marie Curie initiative – a prestigious European research funding programme – that uses networks of citizen sensors as evidence in environmental litigation and out-of-court mediations, with success stories documented in the United States and Italy.

Projects located in closer contexts

Environmental or climate data activism is not a distant phenomenon. Spain has a growing network of initiatives that take environmental measurement to the scale of the neighbourhood, river and roof.

Smart Citizen, created by the Barcelona Fab Lab of the Institute for Advanced Architecture of Catalonia (IAAC), is one of the world's leading projects in citizen sensing: it combines a low-cost sensor kit – air quality, temperature, humidity, noise, light – with an open real-time data platform. With more than 9,000 registered users and more than 1,900 sensors deployed in more than 40 countries, it demonstrates that citizen environmental monitoring can have a global reach based on a local initiative. SensaCitizens, on the other hand, is a Spanish network of low-cost environmental monitors, with LoRaWAN technology, aimed at generating useful data for local public policies on air quality and urban comfort.

In the field of everyday health, Planttes stands out, an app developed by the Autonomous University of Barcelona that allows citizens to map in real time the allergenic plants in their environment and indicate their phenological state. The result is a street-level allergy risk map that complements the official pollen information.

River surveillance, on the other hand, is manifested in the Cantabria Rivers Project, active since 2008, which involves volunteers who adopt river sections of about 500 meters and carry out biannual inspections to monitor the ecological status of the river. With 282 sections inspected and more than 300 documented actions, its data feeds the Administration's decisions on ecosystem conservation.

In the urban area there are also various initiatives. Vitoria-Gasteiz participated in the European CITI-SENSE project – together with eight other cities, such as Barcelona, Oslo and Vienna – with a specific initiative for the participatory design of public spaces, which combined noise, air quality and thermal comfort sensors. In total, the project generated more than 9.4 million observations in the participating cities.

At the citizen and local level, the recently presented SolData Spain (Universidad Autónoma de Madrid) offers an open-access geoportal to analyse the evolution of solar energy in Spain over almost three decades, by cross-referencing satellite irradiation data with historical meteorological records.

The following chart summarizes the projects mentioned so far:

Figure 1. Open data and citizen science in the fight against climate change. Source: own elaboration - datos.gob.es

Business is also taking action: climate adaptation with open data

The climate crisis is not only an environmental and social issue, but also an operational risk for businesses. According to the Smart Electric Power Alliance's Utility Transformation Profile report (2023), 62% of the utilities surveyed have developed a public carbon reduction plan (mitigation), but climate adaptation measures remain scarce and rarely quantified. However, we find some examples:

Meteoflow, from Iberdrola, is a weather forecasting platform recognised by the International Research Centre on Artificial Intelligence (IRCAI), linked to UNESCO, as one of the best AI projects for sustainability. Its function is to optimise the production of the company's wind and solar farms by anticipating weather conditions, although it also incorporates alert modules for extreme phenomena that allow it to manage risks. To do this, it uses open-access meteorological information together with its own data, both historical records and real-time production data.

Another example is dotGIS's Solarmap, which combines open data from cartography (CNIG/INSPIRE), solar radiation (AEMET/Copernicus) and geospatial big data to calculate the profitability per roof of installing solar panels anywhere in Spain.

These initiatives improve the operational resilience of the companies involved and can benefit the energy system as a whole. In the private sector, data (open or not) can also help reduce collective risks, such as avoiding blackouts or anticipating impacts on ecosystems, so its impact need not be limited to protecting corporate assets. Its civic potential multiplies when the results, methodologies and accessible datasets are integrated into data infrastructures shared with other public and social actors.

Where we're headed: three trends redefining security

The landscape of open environmental and climate data is changing, and three trends are on the near horizon.

- From mitigation to adaptation. For years, climate policy focused on reducing emissions. That focus is shifting to adaptation: anticipating risks, reducing vulnerabilities, and protecting communities from irreversible changes. The Basque Country is an example of this turn: the Basque Country Climate Change Strategy 2050 (KLIMA 2050) establishes adaptation as a cross-cutting axis with objectives by sector – health, water, biodiversity, energy – and Vitoria-Gasteiz leads commitments within the framework of the European Mission for Climate Neutral Cities. The leap is also an advance in open data: in addition to measuring and publishing open data on emissions, adaptation strategies are beginning to open and document data on vulnerabilities (heatwaves, floods, public health) and institutional and community response capacities, so that they can be reused by administrations, companies and citizens.

- Citizenship as a civic sentinel. Citizens increasingly act as civic sentinels: they collect, contrast and share environmental data that complement official measurements. When a city's neighborhood detects pollution levels that official stations don't record, or when an indigenous community documents changes in its ecosystem that satellites don't capture, a second layer of information critical to risk management is generated. Projects such as OpenTEK/LICCI of the ICTA-UAB integrate indigenous knowledge from Nepal, Thailand, Vietnam and Latin American countries as scientifically legitimate sources of data on climate variability, and make them available under FAIR and CARE principles, seeking to make them as open and reusable as possible without compromising the sovereignty of the communities that generate them.

- FAIR standards and European data spaces. The European Union promotes the Green Deal Data Space and the AD4GD initiative to integrate open environmental data under FAIR (findable, accessible, interoperable, reusable) standards, which facilitate its combined use by multiple actors. In this framework, the EU's Strategic Foresight Report 2025 identifies the climate transition and security as the two axes that exert the greatest pressure on Europe, and underlines the need for shared data infrastructures based on these principles to respond with resilience.

Open environmental and climate data are not just a technical issue or an aspiration for bureaucratic transparency. They are, increasingly, a condition for communities to be able to anticipate risks, demand accountability and make well-informed collective decisions in the face of the greatest challenge of our time. The infrastructure exists, and so do the citizens who use it, so we must keep working to ensure that its use is universal and equitable.

Appendix: Where is the data? A practical guide to repositories

For anyone who wants to explore, reuse, or combine open environmental and climate data, the ecosystem of repositories is vast and increasingly accessible. Here is a selection organized by scale:

At European level:

- data.europa.eu: European data catalogue, where you can find, among others, data on air, water, biodiversity, climate and energy, with documented use cases

- Copernicus C3S: historical climate data and projections; essential climate variables.

- Copernicus CAMS: real-time air quality and atmospheric composition data.

- ESA CCI Open Data: essential climate variables on glaciers, sea level, greenhouse gases, etc.

- European Environment Agency (EEA): environmental indicators, climate risk maps, biodiversity and water quality.

- INSPIRE: the central access point for locating, visualizing and accessing geographic information and harmonized spatial data of the member countries of the European Union.

You can find more repositories of interest in the article "10 climate-related public data repositories".

At the state level:

- datos.gob.es: National open data portal with a section on the environment.

- AEMET OpenData: historical climatological series, real-time weather data, REST API, etc.

- MITECO Open Data: data on air quality, water quality and emissions.

- IDEE Geoportal: geospatial data and national environmental cartography, among others.

Content produced by Miren Gutiérrez, PhD and researcher at the University of Deusto, an expert in data activism, data justice, data literacy and gender-based disinformation. The content and views expressed in this publication are the sole responsibility of the author.

Just a few months after the success of its first award, the Madrid City Council has opened the call for the second edition of the Open Data Reuse Awards. It is an initiative that seeks to recognize and promote innovative projects that use the datasets published on the datos.madrid.es portal. With a total endowment of 15,000 euros, these awards consolidate the municipal commitment to data culture, transparency and the creation of social and economic value from public information.

In this article we tell you some of the keys you must take into account to participate.

Two award categories to consider

The call establishes two categories, each with several prizes:

1) Web services, applications and visualizations: rewards projects that generate services, visualizations or web or mobile applications.

- First prize: €4,000

- Second prize: €3,000

- Third prize: €1,500

- Student prize: €1,500

2) Studies, research and ideas: focuses on research projects, analysis or description of ideas to create services, studies, visualizations, web or mobile applications. This category is also open to university end-of-degree and end-of-master's projects (TFG-TFM).

- First prize: €2,500

- Second prize: €1,500

- Third prize: €1,000

In both categories, at least one dataset from the municipal portal must be used; it can be combined with public or private sources from any territorial area. Projects can be recent or have been completed in the two years prior to the closing of the call.

Awards may be declared void if the minimum quality is not reached. In this case, the remaining amounts will be redistributed proportionally among the rest of the winners.

Requirements to participate

The call is open to natural and legal persons who are the authors of the projects or initiatives. The aim is for any person or entity with an interest in the reuse of data to be able to submit their proposal, regardless of their technical level. Therefore, professionals, companies, researchers, journalists and developers can participate, as well as hobbyists interested in data analysis and visualization.

In the case of the student prize, only individuals enrolled in official courses in the 2023/24, 2024/25 or 2025/26 academic years may participate.

On the other hand, the following are excluded from all categories:

- Projects already awarded, subsidized or contracted by the Madrid City Council.

- Projects that do not use any datasets from the municipal portal.

Process Phases

The municipal portal details the phases of the call, which include:

- Publication of the call. On March 3, the regulatory bases were published in the Official Gazette of the Madrid City Council.

- Submission of nominations. Applications can be submitted from March 4 to May 4 (both inclusive), either online or in person, as explained below.

- Analysis and correction. Until June 3, the review of the documentation submitted will be carried out. If necessary, applicants will be contacted to correct errors.

- Assessment and deliberation. A jury will evaluate all the admitted projects, according to the criteria established in the rules of the call. Their usefulness, economic value, social value and contribution to transparency will be taken into account, as well as their degree of innovation and creativity, the variety of datasets used from the Madrid Open Data Portal, and their technical quality. This phase will run until September 15.

- Resolution. In September and October, the award proposal will be drawn up and the resolution officially published.

- Awards ceremony. The awards will be presented at a public event, estimated for the month of November.

The official website will update dates and documentation as the process progresses.

How applications are submitted

As mentioned above, applications can be submitted electronically or in person:

- Online, through the electronic headquarters of the Madrid City Council. Identification and electronic signature are required for this.

- In person, at the registration assistance offices of the Madrid City Council, as well as at the registries of other public administrations.

Individuals may submit the application in both ways, while legal persons may only submit the application electronically.

In both cases, nominations must include:

- Official application form, to be downloaded from the Madrid City Council's electronic headquarters.

- Project report, based on a model to be downloaded from the aforementioned electronic office. This document will include the title, authorship and a detailed description, as well as the list of datasets used, the objectives, the target audience, the expected impact, the degree of innovation and the technology used.

- Responsible declaration.

- Collaboration agreement, when applying as a group.

Get inspired by the winning projects of the first edition

The second edition of the Open Data Reuse Awards comes on the heels of the success of the previous edition. In 2025, the Madrid City Council held the first edition of these awards, which brought together 65 nominations of great quality and diversity. Among them, proposals promoted by university students, startups, multidisciplinary teams and citizens committed to the intelligent use of public data stood out.

The award-winning projects demonstrated that open data can become real tools to improve urban life, boost transparency and generate useful knowledge for the city. In this article we summarize what these projects consisted of.

In summary, the II Open Data Reuse Awards 2026 are an opportunity to demonstrate how public data can be turned into real innovation. An invitation to develop projects that promote a smarter, more transparent and participatory Madrid.

On Wednesday, March 4, the Cajasiete Big Data, Open Data and Blockchain Chair of the University of La Laguna held a webinar to present the winning ideas of the Cabildo de Tenerife Open Data Contest: Reuse Ideas. An event to highlight the potential of public information when it is put at the service of citizens. The recording of the presentation is available here.

In this post we will review what each of the winning projects consists of (they are still ideas pending development as apps) and what challenges they would address.

Cultiva+ Tenerife: precision agriculture for the Tenerife countryside

The first prize-winning project was born from a very specific need that every farmer on the island knows well: to make the right decisions at the right time. Which crop is most profitable this season? What are the weather conditions forecast for the coming weeks? Is there a fair or event in the sector that should not be missed?

Cultiva+ Tenerife is an application designed specifically for the agricultural sector that integrates open data from the Cabildo to answer these questions in a simple and intuitive way.

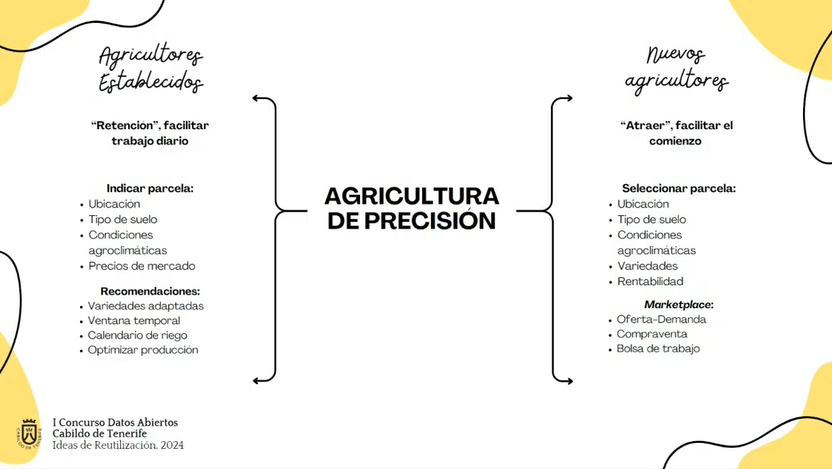

Specifically, it is aimed at both workers already established in the sector and new farmers. In the first case, the app would facilitate daily work through irrigation recommendations and other issues that improve production; while for new farmers the application would help to select the best plot to start an agricultural activity according to soil type, weather conditions, etc.

Figure 1. Possible uses of the Cultiva+ Tenerife application according to the type of user. Source: presentation by Cultiva+Tenerife in the Webinar "From data to innovation: Reuse ideas awarded in the I Open Data Contest of the Cabildo de Tenerife, Universidad de la Laguna".

The application would intuitively and clearly collect information such as:

- Price information: farmers can consult the evolution of market prices of different products, which allows them to plan what to grow based on the expected profitability.

- Weather conditions: the app crosses weather data with the specific needs of each type of crop, helping to anticipate irrigation, protection or harvests.

- Agenda of activities of interest: agricultural fairs, technical conferences, calls for grants... All relevant information for the sector, centralized in one place.

Figure 2. Visual structure of the Cultiva+Tenerife application. Source: presentation by Cultiva+Tenerife in the Webinar "From data to innovation: Reuse ideas awarded in the I Open Data Contest of the Cabildo de Tenerife, Universidad de la Laguna".

Something that was highlighted as valuable about this project in the webinar is its focus on a group that has historically had less access to digital tools: farmers in Tenerife. The proposal does not seek to complicate their day-to-day life with unnecessary technology, but to simplify decisions that today are often made by eye or with incomplete information. Precision agriculture is no longer just a matter for large farms: with open data and a good application, it can be within the reach of any local producer.

Analysis of trends and models on tourism in Tenerife: when the data reveal a crisis

The second winning project addresses one of the most complex and urgent issues in the reality of Tenerife: the relationship between tourism, housing and the labour market. An equation with multiple variables that directly affects the quality of life of residents and that, until now, was difficult to analyse rigorously without access to reliable data.

The starting point of the project is revealing: in June 2024, 35% of the new employment contracts signed in Tenerife corresponded to the hospitality sector. A figure that perfectly illustrates the structural dependence of the island's economy on tourism, but which also raises uncomfortable questions: to what extent is tourism growth transforming the housing market? Is it displacing long-term residents from certain areas? How will tourist arrivals evolve in the coming years?

This project proposes to answer these questions through an analysis and prediction model built with data science tools. Its developer proposes to use data such as the number of tourists staying in Tenerife according to category and area of establishment, available in datos.tenerife.es, to build models with Python and NumPy that allow identifying trends and projecting future scenarios.
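As a rough illustration of the kind of trend model mentioned above, the following sketch fits a straight line to a short series with NumPy's `polyfit` and extrapolates one period ahead. The figures are placeholders invented for the example, not data from datos.tenerife.es, and the winning idea may rely on a different technique; a real model would probably also need to handle seasonality.

```python
import numpy as np

# Placeholder series: tourist arrivals (in thousands) for five
# consecutive periods. These numbers are invented for illustration.
periods = np.arange(5)
tourists = np.array([100.0, 110.0, 120.0, 130.0, 140.0])

# Least-squares fit of a first-degree polynomial (a straight line).
slope, intercept = np.polyfit(periods, tourists, deg=1)


def project(period: int) -> float:
    """Extrapolate the fitted linear trend to a given period."""
    return float(slope * period + intercept)


print(f"Estimated change per period: {slope:.1f} thousand tourists")
print(f"Projection for period 5: {project(5):.1f} thousand tourists")
```

A linear trend is the simplest possible baseline; it is useful mainly as a reference against which more elaborate models can be compared.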

The objectives of the project are ambitious but concrete:

- Analyse the relationship between tourist demand and accommodation supply, identifying which areas of the island suffer the greatest pressure and at what times of the year.

- To develop a predictive model capable of estimating the future arrival of tourists and their impact on the tourist housing sector.

- Contribute to mitigating the housing crisis by providing data and analysis that allow us to understand how tourism is affecting the availability of housing for residents.

- To support business and urban planning, offering companies, investors and administrations an analysis tool that facilitates strategic decision-making.

In short, it is a matter of putting the intelligence of data at the service of one of the most current debates that Tenerife has on the table.

The university as a bridge between data and society

The choice of the Cajasiete Big Data, Open Data and Blockchain Chair of the University of La Laguna as a space to give visibility to the winners is in itself a message: the University has a key role in the construction of the open data ecosystem in Tenerife.

This chair has been working for years on the border between academic research and the practical application of technologies such as big data analysis, blockchain or the reuse of public information. Their involvement in this competition and in the dissemination of its results reinforces the idea that open data is also a valuable resource for training, research and local economic development.

The success of this first call has confirmed that there was a real demand for this type of initiative. So much so that the Cabildo has already launched the II Open Data Contest: APP Development, which gives continuity to the process by taking ideas to the next level: the development of functional applications.

If in the first edition ideas and conceptual proposals were awarded, in this second edition the challenge is to build real solutions, with code, user interface and proven functionalities. The economic endowment is 6,000 euros divided into three prizes.

Projects such as Cultiva+ Tenerife or the Analysis of the impact of tourism on housing show that there are ideas with the potential to become useful and sustainable tools. This second phase is the opportunity to materialize them.

"I'm going to upload a CSV file for you. I want you to analyze it and summarize the most relevant conclusions you can draw from the data". A few years ago, data analysis was the territory of those who knew how to write code and use complex technical environments, and such a request would have required programming or advanced Excel skills. Today, being able to analyse data files in a short time with AI tools gives us great professional autonomy. Asking questions, contrasting preliminary ideas and exploring information first-hand changes our relationship with knowledge, especially because we stop depending on intermediaries to obtain answers. Gaining the ability to analyze data with AI independently speeds up processes, but it can also cause us to become overconfident in conclusions.

Based on the example of a raw data file, we are going to review possibilities, precautions and basic guidelines to explore the information without assuming conclusions too quickly.

The file:

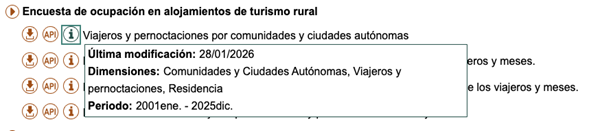

To show an example of data analysis with AI we will use a file from the National Institute of Statistics (INE) that collects information on tourist flows in Europe, specifically on occupancy in rural tourism accommodation. The data file contains information from January 2001 to December 2025. It contains disaggregations by sex, age and autonomous community or city, which allows comparative analyses to be carried out over time. At the time of writing, the last update to this dataset was on January 28, 2026.

Figure 1. Dataset information. Source: National Institute of Statistics (INE).

1. Initial exploration

For this first exploration we are going to use a free version of Claude, the AI-based multitasking chat developed by Anthropic. It is one of the most advanced language models in reasoning and analysis benchmarks, which makes it especially suitable for this exercise, and it is the most widely used option currently by the community to perform tasks that require code.

Let's think that we are facing the data file for the first time. We know in broad strokes what it contains, but we do not know the structure of the information. Our first prompt, therefore, should focus on describing it:

PROMPT: I want to work with a data file on occupancy in rural tourism accommodation. Explain to me what structure the file has: what variables it contains, what each one measures and what possible relationships exist between them. Also point out possible missing values or elements that require clarification.

Figure 2. Initial exploration of the data file with Claude. Source: Claude.

Once Claude has given us the general idea and explanation of the variables, it is good practice to open the file and do a quick check. The objective is to assess that, at a minimum, the number of rows, the number of columns, the names of the variables, the time period and the type of data coincide with what the model has told us.

If we detect any errors at this point, the LLM may not be reading the data correctly. If the error persists after trying in another conversation, it is a sign that something in the file makes it difficult to read automatically. In this case, it is best not to continue with the analysis, as the conclusions may look convincing but will be based on misread data.
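For readers comfortable with a little code, the quick check described above can also be done with pandas. The sketch below uses a tiny inline sample in place of the real file; the column names (`Community`, `Period`, `Total`), the semicolon separator and the values are assumptions made for the example, so they should be adapted to the actual download.

```python
import io

import pandas as pd

# Tiny inline stand-in for the real file. Column names, separator and
# values are assumptions made for this example.
sample = io.StringIO(
    "Community;Period;Total\n"
    "Andalucía;2001M01;1200\n"
    "Aragón;2001M01;800\n"
    "Andalucía;2001M02;1300\n"
    "Ceuta;2001M01;\n"
)
df = pd.read_csv(sample, sep=";")

# Basic facts to compare with what the model reported.
summary = {
    "rows": len(df),
    "columns": list(df.columns),
    "first_period": df["Period"].min(),
    "last_period": df["Period"].max(),
    "missing_total": int(df["Total"].isna().sum()),
}
print(summary)
print(df.dtypes)  # data type of each column
```

If any of these values disagree with the model's description, that is the signal to stop and investigate before going further.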

2. Anomaly management

Second, if we have discovered anomalies, it is common to document them and decide how to handle them before proceeding with the analysis. We can ask the model to suggest what to do, but the final decisions will be ours. For example:

- Missing values: if there are empty cells, we need to decide whether to fill them with an "average" value from the column or simply delete those rows.

- Duplicates: we have to eliminate repeated rows or rows that do not provide new information.

- Formatting errors or inconsistencies: we must correct these so that the variables are coherent and comparable. For example, dates represented in different formats.

- Outliers: if a number appears that does not make sense or is exaggeratedly different from the rest, we have to decide whether to correct it, ignore it or treat it as it is.
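The four checks above can be sketched in pandas as follows. The table is a toy example with invented values, the day-first reading of slash-separated dates is an assumption, and the 1.5 × IQR rule is only one common rule of thumb for flagging outliers. Note that the code detects and flags problems; what to do with them remains our decision.

```python
from datetime import datetime

import pandas as pd

# Toy table containing one problem of each kind; all values are invented.
df = pd.DataFrame({
    "date": ["2024-01-01", "01/02/2024", "2024-03-01",
             "2024-03-01", "2024-04-01", "2024-05-01"],
    "value": [10.0, 12.0, 11.0, 11.0, None, 500.0],
})


def parse_date(text: str) -> datetime:
    """Try each known format in turn (day first for slashes: an assumption)."""
    for fmt in ("%Y-%m-%d", "%d/%m/%Y"):
        try:
            return datetime.strptime(text, fmt)
        except ValueError:
            continue
    raise ValueError(f"Unrecognised date format: {text!r}")


# Formatting inconsistencies: bring all dates to a single type.
df["date"] = df["date"].map(parse_date)

# Duplicates: drop rows that repeat an earlier row exactly.
df = df.drop_duplicates().reset_index(drop=True)

# Missing values: count them before deciding whether to fill or drop.
n_missing = int(df["value"].isna().sum())

# Outliers: flag values beyond 1.5 * IQR from the quartiles, unchanged.
q1, q3 = df["value"].quantile([0.25, 0.75])
iqr = q3 - q1
df["is_outlier"] = (df["value"] < q1 - 1.5 * iqr) | (df["value"] > q3 + 1.5 * iqr)

print(df)
print("Missing values:", n_missing)
```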

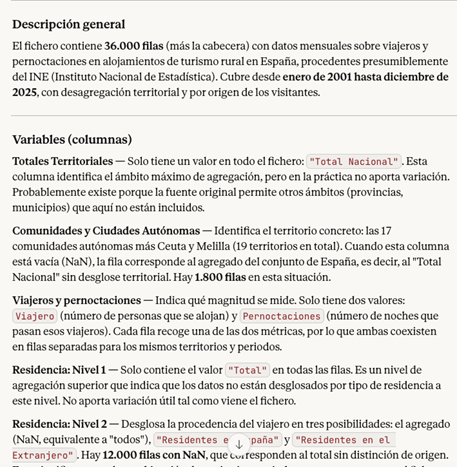

Figure 3. Example of missing values analysis with Claude. Source: Claude.

In the case of our file, for example, we have detected that in Ceuta and Melilla the missing values in the Total variable are structural, there is no rural tourism registered in these cities, so we could exclude them from the analysis.

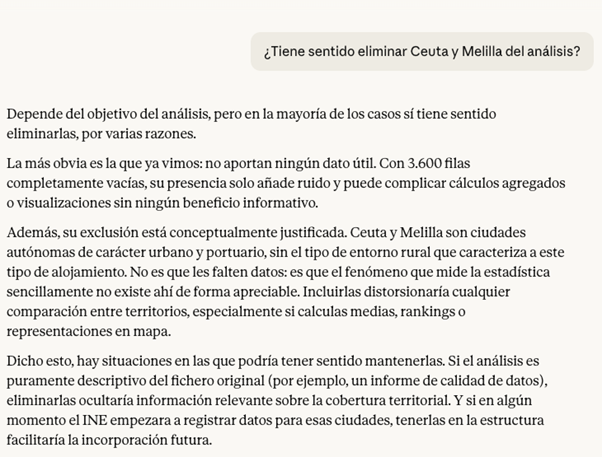

Before making the decision, a good practice at this point is to ask the LLM for the pros and cons of modifying the data. The answer can give us some clue as to which is the best option, or indicate some inconvenience that we had not taken into account.

Figure 4. Claude's analysis of whether or not to remove the values. Source: Claude.

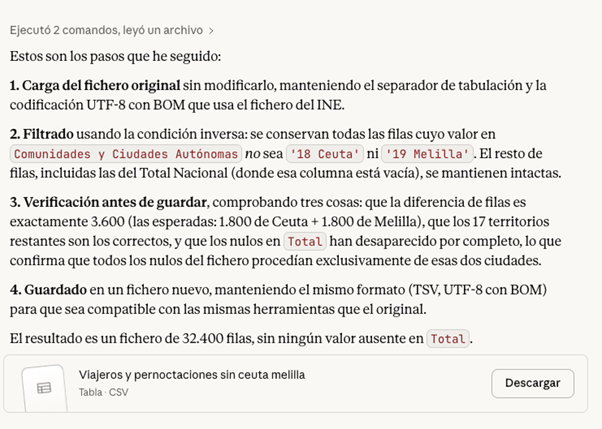

If we decide to go ahead and exclude the cities of Ceuta and Melilla from the analysis, Claude can help us make this modification directly on the file. The prompt would be as follows:

PROMPT: Remove all rows corresponding to Ceuta and Melilla from the file, so that the rest of the data remains intact. Also explain the steps you're following so that I can review them.

Figure 5. Step by step in the modification of data in Claude. Source: Claude.

At this point, Claude offers to download the modified file again, so a good checking practice would be to manually validate that the operation was done correctly. For example, check the number of rows in one file and another or check some rows at random with the first file to make sure that the data has not been corrupted.
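The validation described above can be sketched in pandas. The example below reproduces the filtering on a toy table (names and numbers are invented, and the `Community` column name is an assumption) and then runs the checks: row counts, absence of the excluded cities, and unchanged surviving rows.

```python
import pandas as pd

# Toy stand-in for the original file; names and numbers are invented.
original = pd.DataFrame({
    "Community": ["Andalucía", "Ceuta", "Aragón", "Melilla", "Andalucía"],
    "Total": [1200.0, None, 800.0, None, 1300.0],
})

excluded = ["Ceuta", "Melilla"]
filtered = original[~original["Community"].isin(excluded)]

# Check 1: row counts differ exactly by the number of excluded rows.
n_removed = int(original["Community"].isin(excluded).sum())
assert len(filtered) == len(original) - n_removed

# Check 2: the excluded cities no longer appear.
assert not filtered["Community"].isin(excluded).any()

# Check 3: the surviving rows are identical to those in the original.
assert filtered.equals(original.loc[filtered.index])

print(f"Removed {n_removed} rows; {len(filtered)} rows remain.")
```

With a file modified by the model, `filtered` would instead be loaded from the downloaded copy, and the same three checks would compare it against the original.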

3. First questions and visualizations

If the result so far is satisfactory, we can already start exploring the data to ask ourselves initial questions and look for interesting patterns. The ideal when starting the exploration is to ask big, clear and easy to answer questions with the data, because they give us a first vision.

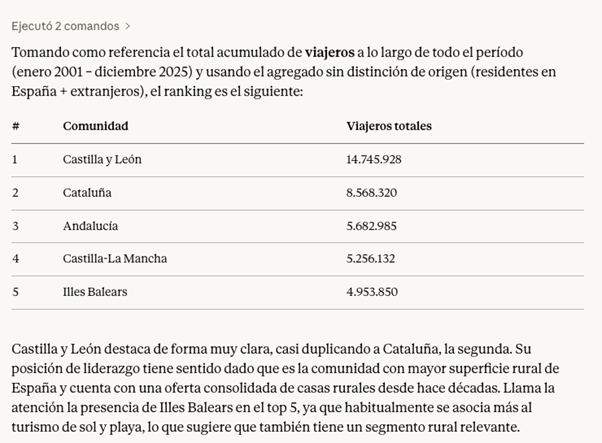

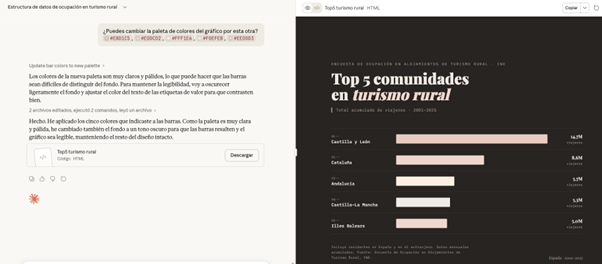

PROMPT: Work with the file without Ceuta and Melilla from now on. Which have been the five communities with the most rural tourism in the total period?

Figure 6. Claude's response to the five communities with the most rural tourism in the period. Source: Claude.
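An answer like this one is easy to cross-check independently. The following sketch shows the underlying operation in pandas: sum the totals per community over the whole period and keep the five largest. The community labels, figures and column names are placeholders, not values from the INE file.

```python
import pandas as pd

# Invented figures for six hypothetical communities over two periods.
df = pd.DataFrame({
    "Community": ["A", "B", "C", "D", "E", "F"] * 2,
    "Total": [50, 40, 30, 20, 10, 5, 55, 35, 30, 25, 10, 5],
})

# Sum over the whole period, then keep the five largest totals.
top5 = df.groupby("Community")["Total"].sum().nlargest(5)
print(top5)
```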

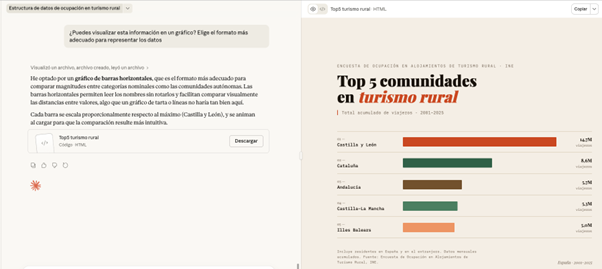

Finally, we can ask Claude to help us visualize the data. Instead of specifying a particular chart type, we give it the freedom to choose the format that best displays the information.

PROMPT: Can you visualize this information on a graph? Choose the most appropriate format to represent the data.

Figure 7. Graph prepared by Claude to represent the information. Source: Claude.

Here, the screen splits in two: on the left, we can continue with the conversation or download the file, while on the right we can view the graph directly. Claude has generated a very visual and ready-to-use horizontal bar chart. The colors differentiate the communities and the date range and type of data are correctly indicated.

What happens if we ask it to change the color palette of the chart to an inappropriate one? In this case, for example, we are going to ask for a series of pastel shades that are barely distinguishable from one another.

PROMPT: Can you change the color palette of the chart to this? #E8D1C5, #EDDCD2, #FFF1E6, #F0EFEB, #EEDDD3

Figure 8. Adjustments made to the graph by Claude to represent the information. Source: Claude.

Faced with the challenge, Claude intelligently adjusts the chart itself, darkening the background and changing the text on the labels to maintain readability and contrast.
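One way to quantify why this palette is problematic on a light background, and why a darker background helps, is the contrast ratio defined in the WCAG 2.x accessibility guidelines. The sketch below implements that published formula and applies it to the five requested colors; it is an independent check, not a description of what Claude does internally.

```python
def relative_luminance(hex_color: str) -> float:
    """Relative luminance of an sRGB color, as defined by WCAG 2.x."""
    hex_color = hex_color.lstrip("#")
    channels = [int(hex_color[i:i + 2], 16) / 255 for i in (0, 2, 4)]
    # Undo the sRGB gamma encoding of each channel.
    r, g, b = [
        c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4
        for c in channels
    ]
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(color_a: str, color_b: str) -> float:
    """WCAG contrast ratio: 1 for identical colors, 21 for black on white."""
    lum_a = relative_luminance(color_a)
    lum_b = relative_luminance(color_b)
    lighter, darker = max(lum_a, lum_b), min(lum_a, lum_b)
    return (lighter + 0.05) / (darker + 0.05)


palette = ["#E8D1C5", "#EDDCD2", "#FFF1E6", "#F0EFEB", "#EEDDD3"]
for color in palette:
    on_white = contrast_ratio(color, "#FFFFFF")
    on_black = contrast_ratio(color, "#000000")
    print(f"{color}: {on_white:.2f} on white, {on_black:.2f} on black")
```

All five shades fall well below the 3:1 ratio that WCAG suggests for graphical elements when placed on white, while against a dark background they clear it comfortably.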

All of the above exercise has been done with Claude Sonnet 4.6, which is not Anthropic's highest quality model. Its higher versions, such as Claude Opus 4.6, have greater reasoning capacity, deeper understanding and finer results. In addition, there are many other AI-based tools for working with data and visualizations, such as Julius or Quadratic. Although the possibilities they offer are almost endless, when we work with data it is still essential to maintain our own methodology and criteria.

Contextualizing the data we are analyzing in real life and connecting it with other knowledge is not a task that can be delegated; we need to have a minimum prior idea of what we want to achieve with the analysis in order to transmit it to the system. This will allow us to ask better questions, properly interpret the results and therefore prompt more effectively.

Content created by Carmen Torrijos, expert in AI applied to language and communication. The content and views expressed in this publication are the sole responsibility of the author.

Gasofinder is a modern and efficient web application designed to help users find the cheapest gas stations closest to their location in real time. Using official data and an interactive map, the application helps save money on every refuel. Specifically, it offers:

- Interactive Map:

  - Clear Visualization: uses OpenStreetMap maps with a clean design (Carto Voyager).

  - Smart Markers: 🟢 green for low prices, 🟡 yellow for average prices and 🔴 red for high prices.

  - Special Icons: quickly identifies the cheapest (⭐) and the most expensive (⚠️) gas station within the visible area.

- Savings and Real-Time Pricing: retrieves updated prices directly from the Ministry for the Ecological Transition.

  - Tank Fill Calculation: enter your tank capacity (default 55L) to see how much it will cost to fill it.

  - Savings Estimation: shows how much you save compared to the most expensive option in the area.

  - Price Thermometer: a visual bar that indicates whether a station’s price is good, average, or bad compared to local minimums and maximums.

- Customization and Filters:

  - Fuel Type: filter by Diesel A, Gasoline 95 E5, Gasoline 98 E5, or Premium Diesel.

  - Tank Size: adjustable for personalized calculations.

  - Charging Points Link: direct access to the official electric vehicle charging points map.

- Navigation and Location:

  - Geolocation: automatically detects your location (first approximately, then precisely).

  - Routes: automatically calculates the distance and travel time to the selected gas station.

  - GPS Integration: opens the location directly in Google Maps, Waze, or Apple Maps with a single click.

  - Lock Mode: allows you to "pin" a selected gas station so it does not change automatically while moving the map.

Icon Clarification

Location (📍) Shows your current position.

Star (⭐) The most affordable visible option.

Alert (⚠️) The most expensive visible option.
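The savings features described above come down to simple arithmetic. The following sketch shows one plausible way to compute them; the function names, the example prices and the one-third cut-offs for the marker colors are assumptions made for illustration, since the application's actual implementation is not described here.

```python
def fill_cost(price_per_litre: float, tank_litres: float = 55.0) -> float:
    """Cost of filling an empty tank (55 L is the default the app mentions)."""
    return price_per_litre * tank_litres


def savings_vs_most_expensive(price, visible_prices, tank_litres=55.0):
    """Money saved on a full tank versus the priciest visible station."""
    return (max(visible_prices) - price) * tank_litres


def thermometer_position(price, visible_prices):
    """Position between the local minimum (0.0) and maximum (1.0)."""
    low, high = min(visible_prices), max(visible_prices)
    if high == low:  # all stations share one price
        return 0.0
    return (price - low) / (high - low)


def marker_color(position: float) -> str:
    """Green, yellow or red marker; the cut-off points are an assumption."""
    if position < 1 / 3:
        return "green"
    if position < 2 / 3:
        return "yellow"
    return "red"


# Invented prices (euros per litre) for three visible stations.
prices = [1.40, 1.50, 1.60]
for p in prices:
    pos = thermometer_position(p, prices)
    print(f"{p:.2f} €/L: fill {fill_cost(p):.2f} €, "
          f"save {savings_vs_most_expensive(p, prices):.2f} €, "
          f"marker {marker_color(pos)}")
```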

Centraldecomunicacion.es is a Spanish platform specializing in company databases and company listings ready for use in B2B commercial prospecting, market analysis, segmentation by sector/province, and growth campaigns. They work with public signals and verifiable digital presence to build actionable business records: location, activity, online reputation, and contact channels when available. The data is delivered in compatible formats (Excel/CSV) for integration into standard workflows (CRM, email marketing, BI/Excel, automations).

The databases include fields such as: name, category/sector, description, full address, city/province, coordinates, Google Maps URL, as well as phone number, corporate email, website, and social media when publicly available. Reputation signals such as ratings, number of reviews, and in some cases, review text and hours of operation are also included.

A key differentiator is the focus on quality: verification methodologies and tools (including email verification) are published to reduce bounce rates and improve the actual usefulness of the data. In addition, open resources such as a list of postal codes and utilities related to categorization/segmentation are offered.

Introduction

Every year there are tens of thousands of accidents in Spain, in which thousands of people suffer injuries of varying severity, and which occur in very different circumstances, both in terms of the type of road and the type of accident.

Many of the statistics related to these parameters are collected in the databases of the Directorate General of Traffic (DGT) and some of them in the catalogue hosted in datos.gob.es.

In this exercise, we will examine the content of the DGT accident database for the year 2024 in order to make a series of basic visualizations that allow us to quickly and intuitively see the most notable facts about the incidence of accidents and their consequences in that year.

To do this, we are going to develop Python code that allows us to read and calculate basic metrics regarding the total number of victims, the particularities of the infrastructures as well as the different cases of accidents. And once we have this data available, we will visualize it using the Javascript D3.js library, which allows us both to represent data in its most traditional form and in more contemporary designs, common in the press, thus favoring a narrative that is fluid in style and coherent in content.

In the Python environment we will use widely used libraries such as NumPy, for basic calculations (sums, maximums and minimums), and Pandas, to structure the data intuitively, facilitating both its organization and its transformation. We will also work with Datetime, both to convert the input data into standard Python date types and to aggregate the data in an easy and intuitive way. In this way we will learn how to open a data file in .CSV format, structure it in an orderly way and carry out basic transformations and operations in a simple way.

In the Javascript environment we will develop notebooks in D3.js thanks to the use of Observable, an open and free initiative, to be able to execute Javascript code directly in a web interface, and without having to resort to local servers or complex installations. In different notebooks we will create classic visualizations, such as time series on Cartesian axes or maps, along with other proposals such as bubble distributions or elements stacked by categories.

In Figure 1 you can see the main stages of this exercise, from the reading of the data within the DGT file, to the operations and output variables in JSON format, which will in turn serve us in a Javascript environment to be able to develop the visualizations in D3.js.

Figure 1. Steps to be followed when performing this exercise, from reading the input CSV file, postprocessing the data with Python, creating an output in JSON format and ultimately displaying the information in D3.js

Access to the Github repositories, GoogleColab notebook and Observable notebooks is done via:

Access to the Github repository

Access to GoogleColab notebook

Access to Observable notebooks

Development Process

1. Reading the data file

The first step will be to read the DGT file containing all the accident records for the year 2024. This step will allow us to identify the fields of interest and especially in what format they are. We will be able to identify if any transformation is required, especially in the information of the date, as it is structured in the original file.

We will also see how to translate the codes of many of the categories offered by the DGT, so that we can make a real interpretation beyond the numbers of categories such as type of accident, type of road or ownership of the road.

Once we understand the structure and content of the data, we can start operating with it.
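A minimal sketch of this reading step is shown below. It uses a small inline sample rather than the DGT file itself, and every column name, the semicolon separator, the date format, the category codes and their labels are hypothetical; the real ones are defined in the DGT documentation and in the notebook that accompanies this exercise.

```python
import io

import pandas as pd

# Inline stand-in for the DGT file. Column names, separator, codes and
# labels are all hypothetical and chosen only for illustration.
sample = io.StringIO(
    "date;hour;road_type;victims\n"
    "03/01/2024;8;1;2\n"
    "03/01/2024;17;2;1\n"
    "04/01/2024;8;1;0\n"
)
df = pd.read_csv(sample, sep=";")

# Convert text dates into a proper datetime type (day/month/year assumed)
# and derive the day of the week from them.
df["date"] = pd.to_datetime(df["date"], format="%d/%m/%Y")
df["weekday"] = df["date"].dt.day_name()

# Translate numeric category codes into readable labels.
ROAD_TYPE_LABELS = {1: "Motorway", 2: "Conventional road"}  # hypothetical
df["road_type_label"] = df["road_type"].map(ROAD_TYPE_LABELS)

print(df)
```

Mapping codes through a dictionary keeps the original coded column intact, so the translation can be revised later without re-reading the file.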

2. Calculating Metrics

The Pandas Python library allows us to operate with the different columns of data and perform basic calculations that will be representative enough to gain a basic understanding of the circumstances of accidents on Spanish roads.

In this section, three types of calculations will be made.

- The first of these will be the calculation of the total number of victims per hour of the day for each of the days of the week. The DGT database is structured by day of the week, so we will also use this time scale to represent the data in a series. It should be noted that a victim is considered to be any person who has died or who is diagnosed as seriously or lightly injured.

- The second calculation will be the sum of the total of accidents for different categories, such as road ownership, type of accident or type of road. This will allow us to see which are the conditions in which accidents are most frequent.

- The third calculation will be the number of accidents per municipality. We will restrict the calculation to the province of Valencia as an example, although it would be applicable to any province or municipality of interest. Here we will observe the differences between urban and non-urban centers, as well as those municipalities through which the main communication routes pass.
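The three calculations above can be sketched with a few Pandas operations. This is only an illustration on made-up records: the column names (`deaths`, `serious`, `minor`, `road_type`, and so on) are hypothetical and do not correspond to the actual DGT field names.

```python
import pandas as pd

# Made-up records with hypothetical column names, one row per accident.
df = pd.DataFrame({
    "day": ["Monday", "Monday", "Monday", "Tuesday", "Tuesday"],
    "hour": [8, 8, 17, 8, 23],
    "deaths": [0, 1, 0, 0, 0],
    "serious": [1, 0, 0, 2, 0],
    "minor": [2, 0, 1, 1, 3],
    "road_type": ["Motorway", "Urban street", "Urban street",
                  "Motorway", "Urban street"],
    "province": ["Valencia", "Valencia", "Madrid", "Valencia", "Valencia"],
    "municipality": ["Valencia", "Gandia", "Madrid", "Valencia", "Sagunto"],
})

# A victim is any person who died or was seriously or lightly injured.
df["victims"] = df[["deaths", "serious", "minor"]].sum(axis=1)

# 1. Total victims per hour of the day for each day of the week.
victims_by_day_hour = df.groupby(["day", "hour"])["victims"].sum()

# 2. Number of accidents per category (here, type of road).
accidents_by_road_type = df["road_type"].value_counts()

# 3. Number of accidents per municipality, restricted to one province.
valencia = df[df["province"] == "Valencia"]
accidents_by_municipality = valencia.groupby("municipality").size()

print(victims_by_day_hour)
print(accidents_by_road_type)
print(accidents_by_municipality)
```

The same pattern (filter, group, aggregate) applies to any other category or province by changing the column or the filter value.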

3. Visualization Design

Once we have calculated the metrics of interest, we will develop five visualization exercises in D3.js. To do this, we will export the results of the metrics in JSON format and create notebooks in Observable. Specifically, we will create the following visualizations:

- Time series with the total number of casualties in each hour and day of the week, with an interactive drop-down menu to select the day of the week of interest. In addition to the curve that describes the number of victims, we will draw the uncertainty of all the days of the week on the background of the graph, so that the daily time series is framed in the context of the whole week as a reference.

- Map of the province of Valencia with the total number of accidents by municipality.

- Bubble diagram, with the different magnitudes of the different types of accidents with the total number of accidents in each case written in detail.

- Stacked dot diagram, where we accumulate circles or any other geometric shape for the different road ownership and its total number of accidents within the framework of each ownership.

- Mountain ridge diagram, where the height of each mountain represents the total number of victims on a logarithmic scale.
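The JSON export mentioned at the start of this section could look like the minimal sketch below. It assumes the metric is held in a small DataFrame with hypothetical column names; the actual structure of the files used in the Observable notebooks may differ.

```python
import json
import pandas as pd

# Hypothetical metric table: total victims per day of the week and hour.
victims = pd.DataFrame({
    "day": ["Monday", "Monday", "Tuesday"],
    "hour": [8, 17, 8],
    "victims": [4, 1, 3],
})

# The "records" orientation produces a list of flat objects, one per row,
# a shape that is convenient to iterate over on the JavaScript side.
payload = victims.to_json(orient="records")

# Passing a file path as the first argument would write the file instead:
# victims.to_json("victims_by_day_hour.json", orient="records")

# Round-trip check: the string parses back into plain Python objects.
records = json.loads(payload)
print(records[0])
```

A list of row objects is only one possible layout; a nested structure keyed by day of the week would work equally well if it suits the drop-down menu better.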

Viewing metrics

The result of this exercise can be seen graphically and explicitly in the form of visualizations made for the web format and accessible from a web interface, both for its development and for its subsequent publication. These visualizations are gathered as Observable notebooks here:

Access to Observable notebooks

In Figure 2 we have the result of the time series of the total number of victims with respect to the time of day for different days of the week. The time series is framed within the uncertainty of the total number of days of the week, to give an idea of the margin of variability that we can have depending on the time of day.

Figure 2. Time series of total accident casualties by time of day for all days of the week in 2024. The light blue background indicates the uncertainty associated with all the days of the week as context, with a drop-down menu to select the day of the week.
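One way to prepare the background band of Figure 2 is to compute, for each hour, the minimum and maximum number of victims across all days of the week. This is an assumption made for illustration: the original notebooks may define the band differently (for example, with a standard deviation), and the column names are hypothetical.

```python
import pandas as pd

# Made-up totals of victims per day of the week and hour.
victims = pd.DataFrame({
    "day": ["Monday", "Tuesday", "Wednesday"] * 2,
    "hour": [8, 8, 8, 17, 17, 17],
    "victims": [4, 3, 6, 1, 5, 2],
})

# Weekly envelope: lowest and highest value observed at each hour.
band = victims.groupby("hour")["victims"].agg(["min", "max"]).reset_index()

# Series for the day chosen in the drop-down menu, joined with the envelope
# so that the curve and its context travel together to the chart.
selected = victims[victims["day"] == "Tuesday"].merge(band, on="hour")

print(band)
print(selected)
```

By construction, the curve of any single day always lies inside this envelope, which is what lets the band act as a reference for the whole week.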

In Figure 3 we can see the map of the province of Valencia with a colour intensity proportional to the number of accidents in each municipality. Those municipalities in which no accidents have been recorded appear in white. Intuitively you can guess the layout of the main roads that cross the province, both the road to the west of the city of Valencia in the direction of Madrid and the inland road to the south of the city in the direction of Alicante.

Figure 3. Map of the number of accidents by municipality in the province of Valencia in 2024.

In Figure 4 each type of accident is represented by a circle, labelled with the number of accidents in that category. In this type of visualization, the most frequent accidents naturally gather around the center of the diagram, while minority or residual types occupy the perimeter, which also gives the whole set a rounded shape.

Figure 4. Bubble diagram of the number of accidents by accident type in 2024.

Figure 5 shows the traditional bar diagram, but this time broken down into smaller units, to refine the number of accidents associated with the ownership of the road where they have occurred. This type of diagram allows us to discern small differences between similar quantities, preserving the general message that we obtain from a calculation of these characteristics.

Figure 5. Bar diagram with dot discretization for the number of accidents by road ownership in 2024.

Figure 6 shows the total number of victims on a logarithmic scale based on the height of each mountain for each type of road.

Figure 6. Mountain ridge diagram, displaying the total number of victims by each type of road in 2024.
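To make the idea of a logarithmic height concrete, the sketch below maps a number of victims to a height that grows linearly with the base-10 logarithm of the value. The function name, the domain limits and the figures are all invented for the example; in the notebooks this role would be played by a D3.js scale.

```python
import math


def log_height(value, domain_min, domain_max, max_height):
    """Map a positive value to a height that is linear in log10(value)."""
    if value <= 0 or domain_min <= 0:
        raise ValueError("a logarithmic scale needs strictly positive values")
    span = math.log10(domain_max) - math.log10(domain_min)
    return (math.log10(value) - math.log10(domain_min)) / span * max_height


# Invented totals of victims per type of road.
victims_by_road_type = {"Motorway": 1000, "Conventional road": 100, "Track": 10}

# Heights in arbitrary units for a domain of 1 to 1000 victims.
heights = {
    road: log_height(total, 1, 1000, 90)
    for road, total in victims_by_road_type.items()
}
print(heights)
```

On such a scale every tenfold increase in victims adds the same height, so categories with very few victims remain visible next to the dominant ones.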

Lessons learned

Through these steps we learn a series of transversal skills for working with datasets delivered as columns in CSV format, a very popular format that lends itself to both analysis and visualization. Specifically, these lessons are:

- Universality of reading and structuring data: the use of tools such as Python, with its Numpy and Pandas libraries, allows access to data in detail and structured in an orderly and intuitive way with a few lines of code.

- Simple calculations in Pandas: the Python library itself allows simple but essential calculations for the preliminary interpretation of results.

- Datetime format: through this Python library we can become familiar with the standard date format, and thus perform all kinds of transformations, filters and selections that interest us the most in any time interval.

- JSON format: once we decide to give space to our visualizations on the web, learning the structure and use of the JSON format is very useful given its wide use in all types of applications and web architectures.

- Spectrum of D3.js possibilities: this JavaScript library allows us to explore from the most traditional and conservative to the most creative, thanks to its principles based on the most basic shapes, without templates or predefined diagrams.

Conclusions and next steps

We have learned to read and structure data according to the standards of the most widely used formats in the world of analysis and visualization. This exercise also serves as an introductory module to the world of D3.js, a very versatile, current and popular tool within the world of storytelling and data visualization at all levels.

In order to move forward in this exercise, it is recommended:

- For analysts and developers, it is possible to dispense with the Pandas library and structure the data with more elementary Python objects such as arrays and matrices, looking for which functions and which operators allow the same tasks that Pandas does to be performed but in a more fundamental way, especially if we think of production environments for which we need the fewest possible libraries to lighten the application.

- For the creators of visualizations, information on municipalities can also be projected onto existing cartographic databases such as OpenStreetMap and thus link the incidence of accidents to orographic features or infrastructures already reflected in these cartographic databases. For the magnitudes of the accident numbers, you can explore Treemap diagrams or Voronoi diagrams and see if they convey the same message as the ones presented in this exercise.
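As a starting point for the first recommendation, the following minimal sketch repeats two of the aggregations using only the Python standard library. The CSV excerpt and its column names are hypothetical; the point is simply that `csv`, `Counter` and `defaultdict` can cover basic counts and sums without Pandas.

```python
import csv
import io
from collections import Counter, defaultdict

# Hypothetical excerpt with one row per accident.
raw_csv = io.StringIO(
    "day,hour,victims,road_type\n"
    "Monday,8,3,Motorway\n"
    "Monday,8,1,Urban street\n"
    "Tuesday,23,3,Urban street\n"
)

accidents_by_road_type = Counter()       # number of accidents per category
victims_by_day_hour = defaultdict(int)   # total victims per (day, hour)

for row in csv.DictReader(raw_csv):
    # DictReader yields every field as a string, so numbers need casting.
    accidents_by_road_type[row["road_type"]] += 1
    victims_by_day_hour[(row["day"], int(row["hour"]))] += int(row["victims"])

print(accidents_by_road_type.most_common())
print(dict(victims_by_day_hour))
```

The trade-off is that type conversion, missing values and code translation have to be handled by hand, which Pandas would otherwise take care of.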

Areas of application

The steps described in this exercise can become part of the everyday toolbox of the following profiles:

- Data analysts: here are the basic steps for the description of a data file in CSV format and the basic calculations to be carried out both in the date field and operations between variables of different columns. These tools can be used to introduce you to the world of data analysis and help in those first steps when facing a dataset.

- Scientists and research staff: the tools described here are universal and apply to a wide variety of data sources, such as those found in experimental sciences and in observations or measurements of all kinds. These tools allow for a quick and rigorous analysis regardless of the field of knowledge in which you work.

- Web developers: the export of data in JSON format as well as the Javascript code offered in Observable notebooks are easily integrated into all types of environments (Svelte, React, Angular, Vue) and allow the creation of visualizations on a website in a simple and intuitive way.

- Journalists: covering the entire life cycle of a data file, from its reading to its visualization, gives the journalist or researcher independence when it comes to evaluating and interpreting the data on their own, without depending on external technical resources. The creation of the map by municipalities opens the door to using any other similar data, such as electoral processes, with the same output format to show geographical variability with respect to any type of magnitude.

- Graphic Designers: Handling visualization tools with a wide degree of freedom allows designers to cultivate all their creativity within the rigor and accuracy that data requires.