The Spanish Data Protection Agency (AEPD), through its own Innovation and Technology section, carries out an essential didactic task by providing a documentary corpus that translates the legal obligations of the General Data Protection Regulation (GDPR) into specific technological realities. Its value lies in its ability to offer legal certainty and technical guidelines in areas where regulations are still finding their practical fit, such as artificial intelligence or biometrics.

These are reference guides, articles and other teaching materials aimed especially at SMEs and entrepreneurs. In this post we present some of the most recent, ordered by sector and subject.

The new trends in artificial intelligence and its secure deployment

The evolution of artificial intelligence towards increasingly autonomous systems poses new challenges in terms of data protection. For this reason, the Spanish Data Protection Agency has developed various guides and documents aimed at facilitating a secure and responsible deployment of this technology. In general, AI is one of the areas of greatest document activity of the AEPD due to its transversal impact. The Agency's resources range from internal management to state-of-the-art technologies.

- Guide to agentic artificial intelligence from the perspective of data protection: so-called agentic AI is AI capable of making decisions and acting with a certain degree of independence. Unlike purely reactive models, agentic AI can carry out multiple tasks autonomously and make intermediate decisions during complex processes. This guide discusses the risks of loss of human control and sets out criteria to ensure that decision traceability is not lost in automation.

- General policy for the use of generative AI in AEPD administrative processes: generative artificial intelligence (GenAI, or IAG by its Spanish acronym) is a type of AI capable of producing new content, such as text, images, audio or code, from learned patterns. This document establishes an internal policy for its responsible use in administrative processes.

- Implementation annex of the AEPD's general generative AI policy: this annex to the above document includes the permitted use cases, the type of systems recommended (external, internal or ad hoc), the level of risk associated with each application and the specific obligations of review, human control, security and data protection.

- Basic summary of obligations and recommendations for the management of generative AI: this is a synthesized outline on aspects of governance, design and development of use cases, processing of personal data and sensitive information, transparency and explainability, and responsible use of tools, among others.

- Federated learning report: federated learning is an AI approach that allows models to be trained collaboratively without centralizing data, which improves privacy and eases alignment with the GDPR. The report explains what it consists of, where personal data may be processed and what benefits and challenges it brings for data protection.
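To make the idea of training "without centralizing data" more concrete, the following is a minimal sketch of federated averaging in plain Python. It is an illustration under stated assumptions, not the method described in the AEPD report: the toy linear model, the function names (`local_update`, `federated_average`, `train`) and the sample data are all hypothetical. Each client trains on its own records and only the model weights travel to the coordinator.

```python
from typing import List, Tuple

Weights = Tuple[float, float]          # (slope, intercept) of a toy linear model
Dataset = List[Tuple[float, float]]    # private (x, y) records held by one client

def local_update(weights: Weights, data: Dataset, lr: float = 0.1, epochs: int = 5) -> Weights:
    """Train on one client's private data with gradient descent on squared error."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)

def federated_average(updates: List[Weights], sizes: List[int]) -> Weights:
    """Combine client models, weighting each one by its number of records."""
    total = sum(sizes)
    w = sum(u[0] * n for u, n in zip(updates, sizes)) / total
    b = sum(u[1] * n for u, n in zip(updates, sizes)) / total
    return (w, b)

def train(clients: List[Dataset], rounds: int = 50) -> Weights:
    """Coordinator loop: raw records never leave the clients, only weights do."""
    global_weights: Weights = (0.0, 0.0)
    for _ in range(rounds):
        updates = [local_update(global_weights, data) for data in clients]
        global_weights = federated_average(updates, [len(d) for d in clients])
    return global_weights

# Hypothetical private datasets, both generated from y = 2x + 1.
clients = [
    [(-1.0, -1.0), (0.0, 1.0), (1.0, 3.0)],
    [(-0.5, 0.0), (0.5, 2.0)],
]
slope, intercept = train(clients)
```

Note that the exchanged weights can still leak information about the underlying records, which is one reason the question of where personal data is processed remains relevant even in a federated setup.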

To complement this information, users can also visit the AEPD's blog, which serves as a trend observatory where the visible and invisible risks of consumer technologies are analyzed. Some of the topics covered are:

- Image and voice processing: Analyses have been published on AI voice transcription and the use of services that convert photos to other formats (such as animations). These articles warn about the processing of biometric data and the ownership of data in the cloud.

- Algorithmic literacy: resources such as "Addressing AI Misconceptions" seek to raise the level of critical judgment of users and managers in the face of the opacity of algorithms.

- Balance of rights: the analysis of the protection of minors in the digital environment and the design of public contracts that integrate privacy by design stands out.

European Digital Identity Wallet

The evolution towards an interconnected Europe requires robust identity standards and security measures accessible to all levels of business.

Building a secure, interoperable and trustworthy digital identity is one of the pillars of digital transformation in Europe. The future European Digital Identity Wallet is a project that aims to allow citizens to identify themselves electronically and share personal attributes in a controlled way across multiple services, both public and private.

To analyse its implications from the point of view of privacy, the Spanish Data Protection Agency has published a series of four monographic articles throughout 2025. In them, the Agency breaks down the relationship between the new digital identity wallet and the GDPR.

These contents address key issues such as:

- Data minimisation and the principle of proportionality in information exchange: explains how the eIDAS2 Regulation drives the European Digital Identity Wallet. This regulation establishes a framework for secure, interoperable and user-centric electronic identification, aligned with the GDPR to ensure the control and protection of personal data across the EU.

- The risks associated with interoperability between systems: delves into how to prevent the use of the European Digital Identity Wallet from tracking citizens when they present credentials in different public or private services, highlighting the need for advanced cryptographic solutions.

- The need to ensure user control over their credentials: examines identification threats in digital identity wallets under eIDAS2, highlighting that, without strong safeguards such as pseudonymization and unlinkability, even selective disclosure of data can allow for the improper identification and profiling of users.

- The security measures needed to prevent misuse or data breaches: examines the threats of inaccuracy in digital identity wallets under eIDAS2, highlighting how outdated data or linkable cryptographic mechanisms can lead to erroneous decisions and compromise privacy. To solve this, it stresses the need for solutions that guarantee both reliability and plausible deniability (that is, that there is no technical evidence to prove that a person has carried out a specific action with their wallet or digital credential).
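The points above about selective disclosure and linkability can be illustrated with a small sketch. It assumes a salted-hash scheme in which the issuer commits to each attribute separately and the holder reveals only what a service asks for. This is a hypothetical simplification written for this post, not the mechanism specified for the European Digital Identity Wallet, and the issuer's signature over the digests is deliberately left out.

```python
import hashlib
import secrets
from typing import Dict, List, Tuple

Disclosure = Tuple[str, str]  # (salt, value) for one attribute

def commit(name: str, value: str, salt: str) -> str:
    """Salted digest of a single attribute; the salt prevents guessing hidden values."""
    return hashlib.sha256(f"{salt}|{name}|{value}".encode("utf-8")).hexdigest()

def issue_credential(attributes: Dict[str, str]) -> Tuple[List[str], Dict[str, Disclosure]]:
    """Issuer side: one digest per attribute (a real issuer would sign this list)."""
    disclosures = {name: (secrets.token_hex(16), value) for name, value in attributes.items()}
    digests = sorted(commit(name, value, salt) for name, (salt, value) in disclosures.items())
    return digests, disclosures

def present(disclosures: Dict[str, Disclosure], requested: List[str]) -> Dict[str, Disclosure]:
    """Holder side: reveal only the requested attributes (data minimisation)."""
    return {name: disclosures[name] for name in requested}

def verify(digests: List[str], presentation: Dict[str, Disclosure]) -> bool:
    """Verifier side: every revealed attribute must match one of the issued digests."""
    return all(commit(name, value, salt) in digests
               for name, (salt, value) in presentation.items())

# Hypothetical credential: the service only needs to know the holder is an adult.
digests, disclosures = issue_credential(
    {"name": "Ana", "birth_date": "1990-05-01", "over_18": "true"}
)
presentation = present(disclosures, ["over_18"])
is_valid = verify(digests, presentation)
```

In this sketch the same list of digests accompanies every presentation, so two services that compare notes could link them to the same person even though only `over_18` was revealed. That is the kind of tracking risk that makes unlinkable cryptographic mechanisms necessary.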

This series provides a progressive overview that helps to understand both the potential of European digital identity and the challenges posed by its implementation from a data protection perspective.

Encryption to protect personal data in SMEs

For many small and medium-sized businesses, ensuring the security of personal data remains a challenge, especially due to a lack of technical resources or specialized knowledge. In this context, encryption is presented as a fundamental tool to protect the confidentiality and integrity of information.

With the aim of bringing this concept closer to a non-expert audience, the Spanish Data Protection Agency has published the Encryption Guide for the self-employed and SMEs, accompanied by an explanatory infographic.

These resources explain in a clear and practical way:

- What is encryption and why is it important in data protection?

- What types of encryption exist and in which cases they are applied.

- How to implement encryption measures in common situations, such as sending emails or storing information.

- Which tools can be used without the need for advanced knowledge.

Scientific research and the European legal framework

For profiles that require a more in-depth and academic analysis, the Agency has promoted the publication of scientific articles in various international media, which connect technology with ethics and law. Some examples are:

- Addictive patterns: analysis of how interface design affects human behavior.

- Neurotechnology: study on the risks of brain-computer interfaces.

- Algorithmic governance: A comprehensive analysis that aligns the GDPR with the European Artificial Intelligence Regulation (AI Act), the Digital Services Act (DSA), and the Cyber Resilience Act.

The didactic value of these materials lies in their ability to offer a 360-degree view of the data. From cutting-edge academic research to encryption infographics for a small business, the AEPD provides the building blocks for innovation that doesn't sacrifice privacy.

Together, these materials shared by the Spanish Data Protection Agency help to incorporate effective security measures and comply with the requirements of the General Data Protection Regulation in a proportionate and accessible way. All of them, and some others, are compiled and ordered by theme on its website, available here.

The digital ecosystem around data has evolved rapidly in recent years. While the debate once focused mainly on volume and speed, today we face a far more complex landscape in which generative artificial intelligence, governance, ethics, and interoperability have become central priorities.

This report identifies and analyses four major trends that are currently shaping the data ecosystem, along with the challenges they present and the key lines of action needed to address them.

Figure 1. Four key trends in the world of data. Source: own elaboration - datosgob.es.

Each of these is summarized below.

1. Generative artificial intelligence: a new paradigm in the use of data

The emergence of generative AI has redefined the role of data, not only as raw material for training models, but also as a product. This transformation poses great opportunities when it comes to automating tasks or enriching public services but also challenges in terms of quality and possible ethical biases, as well as in terms of traceability and human monitoring capacity. The new European legislation, especially the AI Act, establishes a robust regulatory framework that classifies systems according to their level of risk and imposes minimum requirements such as impact assessments, or obligations in terms of transparency and human control. Spain reinforces this approach with initiatives such as the creation of the Spanish Agency for the Supervision of AI (AESIA) and the adoption of new quality guidelines and standards.

2. Ethics and digital rights: placing people at the center

In a context where personal data feeds a large part of digital systems, the protection of fundamental rights becomes an unavoidable obligation. The General Data Protection Regulation (GDPR) continues to be the main regulatory pillar, promoting good practices in terms of data minimisation, portability or algorithmic transparency. In addition to this, there are other initiatives such as the EU Declaration of Digital Rights and Principles and the Charter of Digital Rights in Spain, which strengthen the social and humanistic approach to digital transformation. Thanks to all this, a new organisational culture is beginning to consolidate where ethical aspects are integrated in a transversal way in the processes of design, development and deployment of digital solutions.

3. Data spaces: building the new information ecosystems

The European Data Spaces represent a strategic commitment to build a common data ecosystem in key sectors such as health, energy, mobility or tourism. These spaces facilitate controlled and secure access to public and private data, expanding on the traditional model of open data portals. The ultimate goal is to achieve an interconnected data environment that allows the development of innovative services, activating a more dynamic data economy. However, technical and organizational challenges, such as semantic and technical interoperability, inclusive participation, or the protection of security and privacy, remain significant. Initiatives such as the Data Spaces Support Centre or the Data Space Reference Centre (CRED) in Spain are driving its practical implementation.

4. Data governance: the new high-value asset in organizations

Data governance has ceased to be a purely technical issue and has become an institutional priority. In order to achieve this, public and private organisations are adapting their organisational structures and adopting new regulatory and technical frameworks. Proper governance must cover the entire life cycle of the data, from its creation to the final archive, and involves carrying out actions in several areas, such as cataloguing, interoperability, traceability and security. In addition, a series of human, technological and evaluation capacities will need to be developed in order to respond to these new needs. In general, both Spain and other European countries are moving towards more mature and better articulated data governance models, understanding data as a strategic infrastructure.

The role of the regulatory framework

The report concludes with an overview of the related regulatory framework, which acts as a lever for progress and a generator of trust. The European Union has succeeded in positioning itself as a global reference in digital regulation, with an approach grounded in rights and sustainability. Integration between the various existing regulations, such as the GDPR, the AI Act and the Data Governance Act (DGA), helps to create a more secure, transparent and innovative, albeit complex, environment for the use of data. For this reason, the regulatory simplification expected under the new Digital Omnibus is anticipated to bring greater coherence and clarity, making adoption and compliance easier.

The "Public Sector Data Re-User's Decalogue" (2025 edition) offers an updated guide to facilitate the access, reuse and enhancement of public sector information in the current context marked by the data economy, artificial intelligence and new European regulatory frameworks. It maintains its practical vocation, aimed at facilitating the effective reuse of public sector information by citizens, companies and administrations, while also incorporating the profound technological, regulatory and organisational changes that have taken place in the last decade.

Who is it for?

This Decalogue is aimed at a wide and diverse audience that participates, in one way or another, in the public data ecosystem. It is especially useful for public administration professionals responsible for the publication, management and governance of data; for companies, entrepreneurs and organizations that develop services, products or research based on information from the public sector; and for citizens interested in better understanding how to access, interpret and reuse these resources.

What does the guide include?

The main objective of this document is to offer a functional vision on how to leverage the value of public data in a secure, interoperable and responsible way. To do this, it clearly and concisely explains what open data is, how to interpret and apply reuse licenses, where to find datasets, and what factors influence their quality, interoperability, and persistence over time. It also incorporates an updated look at the role of metadata, standards and data governance as key elements to ensure reuse at scale and in increasingly complex contexts.

Likewise, the Decalogue presents us with the wide range of processing, analysis and visualization tools and introduces us to the need for continuous training and experimentation, in line with European priorities in digital skills and data science. In addition, it addresses the new challenges and opportunities arising from the use of data in artificial intelligence systems, placing special emphasis on its connection with the social and economic value of data and the need for ethical and responsible use through traceability, transparency and risk mitigation.

Overall, this guide stands as an up-to-date and useful reference for promoting a responsible and sustainable use of public sector data that generates social and economic value.

If you want to go deeper…

For those who want to move towards more specialized levels of analytics, data science, and artificial intelligence, this guide is complemented by the Data Scientist's Decalogue, which offers a roadmap for developing high-value technical and analytical skills and continuing to deepen the best practices needed in today's data ecosystem.

You can download the report and the executive summary below.

The adoption of the new DCAT-AP-ES profile aligns Spain with the application profile in Europe (DCAT-AP), facilitating automatic federation between data catalogs defined in RDF (Resource Description Framework).

In this RDF graph environment, where flexibility is the norm, the absence of traditional rigid schemas can lead to a silent degradation of data quality if the standard is not rigorously followed. To mitigate this risk, there is SHACL (Shapes Constraint Language), a W3C recommendation. This language makes it possible to define "shapes" that act as true guardians of quality and of compliance with interoperability requirements.

The stages of the SHACL validation process are as follows:

- An RDF data graph is available

- A subset from the previous graph is selected

- The SHACL constraints that apply to the previous subgraph are checked

- A validation report is obtained, listing the elements that conform, those with errors and those with recommendations.

The following figure shows these stages:

Figure 1: Main stages of the SHACL validation process
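The four stages above can be sketched in plain Python. This is a toy illustration and not a SHACL engine: the graph is a set of tuples, the "shape" is an ordinary dictionary, and the constraints in `dataset_shape` are hypothetical examples rather than the actual DCAT-AP-ES shapes. Real validation would use the official shape files with a SHACL processor.

```python
from typing import Dict, List, Set, Tuple

Triple = Tuple[str, str, str]
RDF_TYPE = "rdf:type"

# Stage 1: an RDF data graph is available (here, a set of subject-predicate-object tuples).
data_graph: Set[Triple] = {
    ("ex:dataset1", RDF_TYPE, "dcat:Dataset"),
    ("ex:dataset1", "dct:title", "Air quality 2024"),
    ("ex:dataset1", "dct:description", "Hourly measurements"),
    ("ex:dataset2", RDF_TYPE, "dcat:Dataset"),
    ("ex:dataset2", "dct:title", "Bus stops"),
    ("ex:catalog", RDF_TYPE, "dcat:Catalog"),
}

# Hypothetical shape: which nodes it targets and the minimum count per property.
dataset_shape: Dict = {
    "target_class": "dcat:Dataset",
    "properties": [
        {"path": "dct:title", "min_count": 1, "severity": "Violation"},
        {"path": "dct:description", "min_count": 1, "severity": "Violation"},
        {"path": "dcat:keyword", "min_count": 1, "severity": "Warning"},
    ],
}

def focus_nodes(graph: Set[Triple], target_class: str) -> List[str]:
    """Stage 2: select the subset of nodes the shape applies to."""
    return sorted(s for s, p, o in graph if p == RDF_TYPE and o == target_class)

def validate(graph: Set[Triple], shape: Dict) -> Dict:
    """Stages 3 and 4: check each constraint and collect the results in a report."""
    results = []
    for node in focus_nodes(graph, shape["target_class"]):
        for constraint in shape["properties"]:
            count = sum(1 for s, p, _ in graph if s == node and p == constraint["path"])
            if count < constraint["min_count"]:
                results.append({
                    "focus_node": node,
                    "path": constraint["path"],
                    "severity": constraint["severity"],
                })
    # As in SHACL, the graph conforms only when no results at all are produced.
    return {"conforms": not results, "results": results}

report = validate(data_graph, dataset_shape)
violations = [r for r in report["results"] if r["severity"] == "Violation"]
```

Here `ex:dataset2` produces an error for its missing description, both datasets produce a recommendation-level warning for missing keywords, and `ex:catalog` is never checked because the shape does not target it.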

Objectives and target audience

This technical guide aims to help publishers and reusers incorporate SHACL validation as a continuous quality improvement practice, through a didactic and accessible approach, inspired by clear resources and open validation tools from the data ecosystem.

In addition, its relationship with DCAT-AP-ES is deepened in a special way, detailing a practical and exhaustive case of the complete workflow of validation and governance of a catalog according to this profile.

Structure and contents

The document follows a progressive approach, starting from theoretical foundations to technical implementation and automatic integration, structured in the following key blocks:

- Fundamentals of semantic validation: RDF and the challenge of the "open world", and SHACL as a mechanism to perform validations, defining key concepts such as Shape or Validation Report.

- DCAT-AP-ES and the adoption of SHACL for validation: the SHACL shapes defined in DCAT-AP-ES and their application in the federation process of the National Catalogue are explained.

- Case study: RDF graph validation: a step-by-step tutorial on how to validate a catalog with the DCAT-AP-ES SHACL shapes, troubleshooting common issues, and available tools.

- Conclusions: Reflections on the advantages of integrating SHACL validation to improve data catalog governance.

SHACL validation represents a paradigm shift in metadata quality management in data catalogs. This guide walks through the entire process from theoretical foundations to practical application, demonstrating that the adoption of SHACL is not simply a technical requirement, but an opportunity to strengthen and improve data governance.

Did you know that Spain created the first state agency specifically dedicated to the supervision of artificial intelligence (AI) in 2023? Even anticipating the European Regulation in this area, the Spanish Agency for the Supervision of Artificial Intelligence (AESIA) was born with the aim of guaranteeing the ethical and safe use of AI, promoting responsible technological development.

Among its main functions is to ensure that both public and private entities comply with current regulations. To this end, it promotes good practices and advises on compliance with the European regulatory framework, which is why it has recently published a series of guides to ensure the consistent application of the European AI regulation.

In this post we will delve into what the AESIA is and we will learn relevant details of the content of the guides.

What is AESIA and why is it key to the data ecosystem?

The AESIA was created within the framework of Axis 3 of the Spanish AI Strategy. Its creation responds to the need to have an independent authority that not only supervises, but also guides the deployment of algorithmic systems in our society.

Unlike purely sanctioning bodies, the AESIA is designed as a "Think & Do" organisation, i.e. one that investigates and proposes solutions. Its practical usefulness falls into three areas:

- Legal certainty: Provides clear frameworks for businesses, especially SMEs, to know where to go when innovating.

- International benchmark: it acts as the Spanish interlocutor before the European Commission, ensuring that the voice of our technological ecosystem is heard in the development of European standards.

- Citizen trust: ensures that AI systems used in public services or critical areas respect fundamental rights, avoiding bias and promoting transparency.

At datos.gob.es, we have always maintained that the value of data lies in its quality and accessibility. The AESIA complements this vision by ensuring that, once data is transformed into AI models, its use is responsible. As such, these guides are a natural extension of our regular resources on data governance and openness.

Resources for the use of AI: guides and checklists

The AESIA has recently published materials to support the implementation and compliance with the European Artificial Intelligence regulations and their applicable obligations. Although they are not binding and do not replace or develop existing regulations, they provide practical recommendations aligned with regulatory requirements pending the adoption of harmonised implementing rules for all Member States.

They are the direct result of the Spanish AI Regulatory Sandbox pilot. This sandbox allowed developers and authorities to collaborate in a controlled space to understand how to apply European regulations in real-world use cases.

It is essential to note that these documents are published without prejudice to the technical guides that the European Commission is preparing. Indeed, Spain is serving as a "laboratory" for Europe: the lessons learned here will provide a solid basis for the Commission's working group, ensuring consistent application of the regulation in all Member States.

The guides are designed to be a complete roadmap, from the conception of the system to its monitoring once it is on the market.

Figure 1. AESIA guidelines for regulatory compliance. Source: Spanish Agency for the Supervision of Artificial Intelligence

- 01. Introduction to the AI Regulation: provides an overview of obligations, implementation deadlines and roles (providers, deployers, etc.). It is the essential starting point for any organization that develops or deploys AI systems.

- 02. Practice and examples: grounds legal concepts in everyday use cases (e.g., is my personnel selection system a high-risk AI?). It includes decision trees and a glossary of key terms from Article 3 of the Regulation, helping to determine whether a specific system is regulated, what level of risk it has, and what obligations are applicable.

- 03. Conformity assessment: explains the technical steps necessary to obtain the "seal" that allows a high-risk AI system to be marketed, detailing the two possible procedures under Annexes VI and VII of the Regulation: assessment based on internal control, or assessment with the involvement of a notified body.

- 04. Quality management system: defines how organizations must structure their internal processes to maintain constant standards. It covers the regulatory compliance strategy, design techniques and procedures, examination and validation systems, among others.

- 05. Risk management: it is a manual on how to identify, evaluate and mitigate possible negative impacts of the system throughout its life cycle.

- 06. Human oversight: details the mechanisms that keep AI decisions always monitorable by people, avoiding the technological "black box". It establishes principles such as understanding capabilities and limitations, interpreting results, and having the authority not to use the system or to override its decisions.

- 07. Data and data governance: addresses the practices needed to train, validate, and test AI models ensuring that datasets are relevant, representative, accurate, and complete. It covers data management processes (design, collection, analysis, labeling, storage, etc.), bias detection and mitigation, compliance with the General Data Protection Regulation, data lineage, and design hypothesis documentation, being of particular interest to the open data community and data scientists.

- 08. Transparency: establishes how to inform the user that they are interacting with an AI and how to explain the reasoning behind an algorithmic result.

- 09. Accuracy: defines appropriate metrics based on the type of system to ensure that the AI model meets its goal.

- 10. Robustness: Provides technical guidance on how to ensure AI systems operate reliably and consistently under varying conditions.

- 11. Cybersecurity: instructs on protection against threats specific to the field of AI.

- 12. Logs: defines the measures to comply with the obligations of automatic registration of events.

- 13. Post-market surveillance: documents the processes for executing the monitoring plan, documentation and analysis of data on the performance of the system throughout its useful life.

- 14. Incident management: describes the procedure for reporting serious incidents to the competent authorities.

- 15. Technical documentation: establishes the complete structure that the technical documentation must include (development process, training/validation/test data, applied risk management, performance and metrics, human supervision, etc.).

- 16. Requirements Guides Checklist Manual: explains how to use the 13 self-diagnosis checklists that allow compliance assessment, identifying gaps, designing adaptation plans and prioritizing improvement actions.

All guides are available here and have a modular structure that accommodates different levels of knowledge and business needs.

The self-diagnostic tool and its advantages

In parallel, the AESIA publishes material that facilitates the translation of abstract requirements into concrete and verifiable questions, providing a practical tool for the continuous assessment of the degree of compliance.

These are checklists that allow an entity to assess its level of compliance autonomously.

The use of these checklists provides multiple benefits to organizations. First, they facilitate the early identification of compliance gaps, allowing organizations to take corrective action before the system is placed on the market or put into service. They also promote a systematic and structured approach to regulatory compliance. By following the structure of the Regulation, they ensure that no essential requirement is left unassessed.

On the other hand, they facilitate communication between technical, legal and management teams, providing a common language and a shared reference to discuss regulatory compliance. And finally, checklists serve as a documentary basis for demonstrating due diligence to supervisory authorities.

We must understand that these documents are not static. They are subject to an ongoing process of evaluation and review. In this regard, the AESIA continues to develop its operational capacity and expand its compliance support tools.

From the open data platform of the Government of Spain, we invite you to explore these resources. AI development must go hand in hand with well-governed data and ethical oversight.

Data possesses a fluid and complex nature: it changes, grows, and evolves constantly, displaying a volatility that profoundly differentiates it from source code. To respond to the challenge of reliably managing this evolution, we have developed the new 'Technical Guide: Data Version Control'.

This guide addresses an emerging discipline that adapts software engineering principles to the data ecosystem: Data Version Control (DVC). The document not only explores the theoretical foundations but also offers a practical approach to solving critical data management problems, such as the reproducibility of machine learning models, traceability in regulatory audits, and efficient collaboration in distributed teams.

Why is a guide on data versioning necessary?

Historically, data versioning has been done manually (files with suffixes like "_final_v2.csv"), an error-prone and unsustainable approach in professional environments. While tools like Git have revolutionized software development, they are not designed to efficiently handle large files or binaries, which are intrinsic characteristics of datasets.

This guide was created to bridge that technological and methodological gap, explaining the fundamental differences between code versioning and data versioning. The document details how specialized tools like DVC (Data Version Control) allow you to manage the data lifecycle with the same rigor as code, ensuring that you can always answer the question: "What exact data was used to obtain this result?"
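One way to answer "What exact data was used to obtain this result?" is to store a checksum of the dataset next to every result. The sketch below shows the idea in plain Python under stated assumptions: SHA-256 is chosen only for illustration, the `registry` dictionary, the run identifiers and the metric values are hypothetical, and none of this reproduces how DVC itself stores its metadata.

```python
import hashlib
from typing import Dict

def dataset_checksum(content: bytes) -> str:
    """Fingerprint of the dataset bytes: any change in content changes the digest."""
    return hashlib.sha256(content).hexdigest()

def record_run(registry: Dict[str, dict], run_id: str, content: bytes, metric: float) -> None:
    """Store the result together with the checksum of the exact data that produced it."""
    registry[run_id] = {"data_sha256": dataset_checksum(content), "metric": metric}

def same_data(registry: Dict[str, dict], run_a: str, run_b: str) -> bool:
    """Two runs used identical data only if their recorded checksums match."""
    return registry[run_a]["data_sha256"] == registry[run_b]["data_sha256"]

# Two versions of a tiny CSV; the second one corrects a single value.
data_v1 = b"id,value\n1,10\n2,20\n"
data_v2 = b"id,value\n1,10\n2,21\n"

registry: Dict[str, dict] = {}
record_run(registry, "run-001", data_v1, metric=0.91)  # metric values are made up
record_run(registry, "run-002", data_v2, metric=0.93)
record_run(registry, "run-003", data_v1, metric=0.91)
```

With this record in place, `same_data(registry, "run-001", "run-003")` confirms both results came from identical data, while a one-character correction in `data_v2` is enough to give `run-002` a different fingerprint.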

Structure and contents

The document follows a progressive approach, starting from basic concepts and progressing to technical implementation, and is structured in the following key blocks:

- Version Control Fundamentals: Analysis of the current problem (the "phantom model", impossible audits) and definition of key concepts such as Snapshots, Data Lineage and Checksums.

- Strategies and Methodologies: Adaptation of semantic versioning (SemVer) to datasets, storage strategies (incremental vs. full) and metadata management to ensure traceability.

- Tools in practice: A detailed analysis of tools such as DVC, Git LFS and cloud-native solutions (AWS, Google Cloud, Azure), including a comparison to choose the most suitable one according to the size of the team and the data.

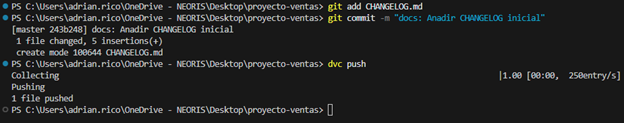

- Practical case study: A step-by-step tutorial on how to set up a local environment with DVC and Git, simulating a real data lifecycle: from generation and initial versioning, to updating, remote synchronization, and rollback.

- Governance and best practices: Recommendations on roles, retention policies and compliance to ensure successful implementation in the organization.

Figure 1: Practical example of using Git and DVC commands included in the guide.
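The adaptation of semantic versioning to datasets mentioned in the contents above can be sketched as a small rule set. The mapping used here (removed columns bump the major version, added columns bump the minor version, corrections to existing records bump the patch version) is one possible convention assumed for illustration, and the function names are hypothetical; the guide may define the rules differently.

```python
from typing import Set

def classify_change(old_columns: Set[str], new_columns: Set[str]) -> str:
    """Classify a dataset update by comparing the column sets of two versions."""
    if old_columns - new_columns:
        return "breaking"    # columns removed or renamed: consumers may break
    if new_columns - old_columns:
        return "additive"    # new columns only: existing consumers keep working
    return "correction"      # same schema: values fixed or records updated

def bump(version: str, change: str) -> str:
    """Apply MAJOR.MINOR.PATCH rules to a dataset version string."""
    major, minor, patch = (int(part) for part in version.split("."))
    if change == "breaking":
        return f"{major + 1}.0.0"
    if change == "additive":
        return f"{major}.{minor + 1}.0"
    if change == "correction":
        return f"{major}.{minor}.{patch + 1}"
    raise ValueError(f"unknown change type: {change}")

# Hypothetical example: a 'district' column is added to version 1.4.2.
old_schema = {"id", "station", "value"}
new_schema = {"id", "station", "value", "district"}
next_version = bump("1.4.2", classify_change(old_schema, new_schema))
```

A version string built this way tells reusers at a glance whether an update is safe to adopt automatically or requires changes on their side.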

Who is it aimed at?

This guide is designed for a broad technical profile within the public and private sectors: data scientists, data engineers, analysts and data catalog managers.

It is especially useful for professionals looking to streamline their workflows, ensure the scientific reproducibility of their research, or guarantee regulatory compliance in regulated sectors. While basic knowledge of Git and the command line is recommended, the guide includes practical examples and detailed explanations to facilitate learning.

The forthcoming version of the Technical Standard for Interoperability of Public Sector Information Resources (NTI-RISP) incorporates DCAT-AP-ES as a reference model for the description of data sets and services. This is a key step towards greater interoperability, quality and alignment with European data standards.

This guide aims to help you migrate to this new model. It is aimed at technical managers and managers of public data catalogs who, without advanced experience in semantics or metadata models, need to update their RDF catalog to ensure its compliance with DCAT-AP-ES. In addition, the guidelines in the document are also applicable for migration from other RDF-based metadata models, such as local profiles, DCAT, DCAT-AP or sectoral adaptations, as the fundamental principles and verifications are common.

Why migrate to DCAT-AP-ES?

Since 2013, the Technical Standard for the Interoperability of Public Sector Information Resources has been the regulatory framework in Spain for the management and openness of public data. In line with the European and Spanish objectives of promoting the data economy, the standard has been updated in order to promote the large-scale exchange of information in distributed and federated environments.

This update, which at the time of publication of the guide is still in the administrative process, incorporates a new metadata model aligned with the most recent European standards: DCAT-AP-ES. These standards facilitate the homogeneous description of the reusable data sets and information resources made available to the public. DCAT-AP-ES adopts the guidelines of the European metadata exchange scheme DCAT-AP (Data Catalog Vocabulary – Application Profile), thus promoting interoperability between national and European catalogues.

The advantages of adopting DCAT-AP-ES can be summarised as follows:

- Semantic and technical interoperability: ensures that different catalogs can understand each other automatically.

- Regulatory alignment: it responds to the new requirements provided for in the NTI-RISP and aligns the catalogue with Directive (EU) 2019/1024 on open data and the re-use of public sector information and with Implementing Regulation (EU) 2023/138, which establishes a list of specific high-value datasets (HVD), facilitating the publication of HVDs and associated data services.

- Improved ability to find resources: Makes it easier to find, locate, and reuse datasets using standardized, comprehensive metadata.

- Reduction of incidents in the federation: minimizes errors and conflicts by integrating catalogs from different Administrations, guaranteeing consistency and quality in interoperability processes.

What has changed in DCAT-AP-ES?

DCAT-AP-ES expands and orders the previous model to make it more interoperable, more legally accurate and more useful for the maintenance and technical reuse of data catalogues.

The main changes are:

- In the catalog: It is now possible to link catalogs to each other, record who created them, add a supplementary statement of rights to the license, or describe each entry using records.

- In datasets: New properties are added to comply with regulations on high-value sets, support communication, document provenance and relationships between resources, manage versions, and describe spatial/temporal resolution or website. Likewise, the responsibility of the license is redefined, moving its declaration to the most appropriate level.

- For distributions: Expanded options to indicate planned availability, legislation, usage policy, integrity, packaged formats, direct download URL, own license, and lifecycle status.

A practical and gradual approach

Many catalogs already meet the requirements set out in the 2013 version of NTI-RISP. In these cases, the migration to DCAT-AP-ES requires a reduced adjustment, although the guide also contemplates more complex scenarios, following a progressive and adaptable approach.

The document distinguishes between the minimum compliance required and some extensions that improve quality and interoperability.

It is recommended to follow an iterative strategy: starting from the minimum core to ensure operational continuity and, subsequently, planning the phased incorporation of additional elements, such as data services, contact, applicable legislation, categorization of HVDs and contextual metadata. This approach reduces risks, distributes the effort of adaptation, and favors an orderly transition.
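The iterative strategy described above can be sketched as a simple check that separates what blocks the migration from what can be planned for later phases. This is a minimal illustration, not part of the guide: the two property lists are placeholders chosen for the example and do not reproduce the mandatory or recommended properties of DCAT-AP-ES, and `migration_report` and the sample record are hypothetical.

```python
# Illustrative only: these lists are placeholders for the example,
# not the official mandatory/recommended properties of DCAT-AP-ES.
MINIMUM_CORE = ["dct:title", "dct:description", "dct:publisher"]
LATER_PHASES = ["dcat:contactPoint", "dcat:theme", "dcatap:applicableLegislation"]


def migration_report(dataset: dict) -> dict:
    """Split missing properties into 'blocking' (core) and 'planned' (later phases)."""
    def missing(properties):
        return [p for p in properties if not dataset.get(p)]

    return {"blocking": missing(MINIMUM_CORE), "planned": missing(LATER_PHASES)}


# A hypothetical dataset record, flattened to prefixed property names.
example = {
    "dct:title": "Air quality measurements",
    "dct:description": "Hourly readings from monitoring stations.",
    "dcat:theme": "http://example.org/theme/environment",
}

report = migration_report(example)
print(report)
```

In a real migration the lists would be taken from the application profile itself, and the check would run over the RDF catalog rather than over a flattened dictionary.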

Once the first adjustments have been made, the catalogue can be federated with both the National Catalogue, hosted in datos.gob.es, and the Official European Data Catalogue, progressively increasing the quality and interoperability of the metadata.

The guide is a technical support material that facilitates a basic transition, in accordance with the minimum interoperability requirements. In addition, it complements other reference resources, such as the DCAT-AP-ES Application Profile Model and Implementation Technical Guide, the implementation examples (Migration from NTI-RISP to DCAT-AP-ES and Migration from NTI-RISP to DCAT-AP-ES HVD), and the complementary conventions to the DCAT-AP-ES model that define additional rules to address practical needs.

Data science has become a pillar of evidence-based decision-making in the public and private sectors. In this context, there is a need for a practical and universal guide that transcends technological fads and provides solid and applicable principles. This guide offers a decalogue of good practices that accompanies the data scientist throughout the entire life cycle of a project, from the conceptualization of the problem to the ethical evaluation of the impact.

- Understand the problem before looking at the data. The initial key is to clearly define the context, objectives, constraints, and indicators of success. A solid framing prevents later errors.

- Know the data in depth. Beyond the variables, it involves analyzing their origin, traceability and possible biases. Data auditing is essential to ensure representativeness and reliability.

- Ensure quality. Without clean data there is no science. Exploratory data analysis (EDA), imputation, normalization and the monitoring of quality metrics make it possible to build solid and reproducible foundations.

- Document and version. Reproducibility is a scientific condition. Notebooks, pipelines, version control, and MLOps practices ensure traceability and replicability of processes and models.

- Choose the right model. Sophistication does not always win: the decision must balance performance, interpretability, costs and operational constraints.

- Measure meaningfully. Metrics should align with goals. Cross-validation, data drift control and rigorous separation of training, validation and test data are essential to ensure generalization.

- Visualize to communicate. Visualization is not an ornament, but a language to understand and persuade. Data-driven storytelling and clear design are critical tools for connecting with diverse audiences.

- Work as a team. Data science is collaborative: it requires data engineers, domain experts, and business leaders. The data scientist must act as a facilitator and translator between the technical and the strategic.

- Stay up-to-date (and critical). The ecosystem is constantly evolving. It is necessary to combine continuous learning with selective criteria, prioritizing solid foundations over passing fads.

- Be ethical. Models have a real impact. It is essential to assess bias, protect privacy, ensure explainability and anticipate misuse. Ethics is a compass and a condition of legitimacy.
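As an illustration of the "Measure meaningfully" principle, the following sketch shows one way to keep training, validation and test data strictly separate and to run cross-validation on the training portion only. It is a minimal example using only the Python standard library; the function names `split_indices` and `k_folds` and the 60/20/20 proportions are assumptions made for the example, not something prescribed by the report.

```python
import random


def split_indices(n, val_frac=0.2, test_frac=0.2, seed=0):
    """Shuffle row indices once and cut them into disjoint train/validation/test sets."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)
    n_test = int(n * test_frac)
    n_val = int(n * val_frac)
    test = indices[:n_test]
    val = indices[n_test:n_test + n_val]
    train = indices[n_test + n_val:]
    return train, val, test


def k_folds(indices, k=5):
    """Yield (train, held_out) pairs for k-fold cross-validation over `indices`."""
    folds = [indices[i::k] for i in range(k)]
    for i, held_out in enumerate(folds):
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, held_out


train, val, test = split_indices(100)
print(len(train), len(val), len(test))

# Cross-validate on the training indices only; the test set stays untouched
# until the final evaluation.
for fold_train, fold_held_out in k_folds(train, k=5):
    print(len(fold_train), len(fold_held_out))
```

Fixing the seed makes the split reproducible, which ties this practice to the "Document and version" principle.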

Finally, the report includes a bonus-track on Python and R, highlighting that both languages are complementary allies: Python dominates in production and deployment, while R offers statistical rigor and advanced visualization. Knowing both multiplies the versatility of the data scientist.

The Data Scientist's Decalogue is a practical, timeless and cross-cutting guide that helps professionals and organizations turn data into informed, reliable and responsible decisions. Its objective is to strengthen technical quality, collaboration and ethics in a discipline in full expansion and with great social impact.

To explore the content of the report in greater depth, we have recorded a podcast and a video interview in which the author explains the key points of the Decalogue. In addition, an infographic and an executive summary have been produced.

Listen to the podcast with the author (only available in Spanish)

Watch the video-interview with the author

Download the infographic summary

Content prepared by Alejandro Alija, expert in Digital Transformation and Innovation. The contents and points of view reflected in this publication are the sole responsibility of the author.

Open data is a fundamental fuel for contemporary digital innovation, creating information ecosystems that democratise access to knowledge and foster the development of advanced technological solutions.

However, the mere availability of data is not enough. Building robust and sustainable ecosystems requires clear regulatory frameworks, sound ethical principles and management methodologies that ensure both innovation and the protection of fundamental rights. Therefore, the specialised documentation that guides these processes becomes a strategic resource for governments, organisations and companies seeking to participate responsibly in the digital economy.

In this post, we compile recent reports, produced by leading organisations in both the public and private sectors, which offer these key orientations. These documents not only analyse the current challenges of open data ecosystems, but also provide practical tools and concrete frameworks for their effective implementation.

State and evolution of the open data market

Understanding the current state of the open data ecosystem, and how it has changed at European and national level, is important for making informed decisions and adapting to the needs of the industry. In this regard, the European Commission regularly publishes a Data Markets Report. The latest version is dated December 2024, although use cases exemplifying the potential of data in Europe are published more frequently (the latest in February 2025).

On the other hand, from a European regulatory perspective, the latest annual report on the implementation of the Digital Markets Act (DMA) takes a comprehensive view of the measures adopted to ensure fairness and competitiveness in the digital sector. This document is interesting to understand how the regulatory framework that directly affects open data ecosystems is taking shape.

At the national level, the ASEDIE sectoral report on the "Data Economy in its infomediary scope" 2025 provides quantitative evidence of the economic value generated by open data ecosystems in Spain.

The importance of open data in AI

It is clear that the intersection between open data and artificial intelligence is a reality that poses complex ethical and regulatory challenges that require collaborative and multi-sectoral responses. In this context, developing frameworks to guide the responsible use of AI becomes a strategic priority, especially when these technologies draw on public and private data ecosystems to generate social and economic value. Here are some reports that address this objective:

- Generative AI and Open Data: Guidelines and Best Practices: the U.S. Department of Commerce has published a guide with principles and best practices on how to apply generative artificial intelligence ethically and effectively in the context of open data. The document provides guidelines for optimising the quality and structure of open data in order to make it useful for these systems, including transparency and governance.

- Good Practice Guide for the Use of Ethical Artificial Intelligence: this guide demonstrates a comprehensive approach that combines strong ethical principles with clear and enforceable regulatory precepts. In addition to the theoretical framework, the guide serves as a practical tool for implementing AI systems responsibly, considering both the potential benefits and the associated risks. Collaboration between public and private actors ensures that recommendations are both technically feasible and socially responsible.

- Enhancing Access to and Sharing of Data in the Age of AI: this analysis by the Organisation for Economic Co-operation and Development (OECD) addresses one of the main obstacles to the development of artificial intelligence: limited access to quality data and effective models. Through examples, it identifies specific strategies that governments can implement to significantly improve access to, and sharing of, data and certain AI models.

- A Blueprint to Unlock New Data Commons for AI: Open Data Policy Lab has produced a practical guide that focuses on the creation and management of data commons specifically designed to enable public-interest uses of artificial intelligence. The guide offers concrete methodologies on how to manage data in a way that facilitates the creation of these data commons, including aspects of governance, technical sustainability and alignment with public interest objectives.

- Practical guide to data-driven collaborations: the Data for Children Collaborative initiative has published a step-by-step guide to developing effective data collaborations, with a focus on social impact. It includes real-world examples, governance models and practical tools to foster sustainable partnerships.

In short, these reports define the path towards more mature, ethical and collaborative data systems. From growth figures for the Spanish infomediary sector to European regulatory frameworks to practical guidelines for responsible AI implementation, all these documents share a common vision: the future of open data depends on our ability to build bridges between the public and private sectors, between technological innovation and social responsibility.

The Spanish Data Protection Agency has recently published the Spanish translation of the Guide on Synthetic Data Generation, originally produced by the Data Protection Authority of Singapore. This document provides technical and practical guidance for managers and data protection officers on how to implement this technology, which makes it possible to simulate real data while maintaining their statistical characteristics without compromising personal information.

The guide highlights how synthetic data can drive the data economy, accelerate innovation and mitigate risks in security breaches. To this end, it presents case studies, recommendations and best practices aimed at reducing the risks of re-identification. In this post, we analyse the key aspects of the Guide highlighting main use cases and examples of practical application.

What are synthetic data? Concept and benefits

Synthetic data is artificial data generated using mathematical models specifically designed for artificial intelligence (AI) or machine learning (ML) systems. This data is created by training a model on a source dataset to imitate its characteristics and structure, but without exactly replicating the original records.

High-quality synthetic data retain the statistical properties and patterns of the original data. They therefore allow for analyses that produce results similar to those that would be obtained with real data. However, being artificial, they significantly reduce the risks associated with the exposure of sensitive or personal information.

For more information on this topic, you can read the monographic report Synthetic data: What are they and what are they used for?, with detailed information on the theoretical foundations, methodologies and practical applications of this technology.

The implementation of synthetic data offers multiple advantages for organisations, for example:

- Privacy protection: allow data analysis while maintaining the confidentiality of personal or commercially sensitive information.

- Regulatory compliance: make it easier to follow data protection regulations while maximising the value of information assets.

- Risk reduction: minimise the chances of data breaches and their consequences.

- Driving innovation: accelerate the development of data-driven solutions without compromising privacy.

- Enhanced collaboration: Enable valuable information to be shared securely across organisations and departments.

Steps to generate synthetic data

To properly implement this technology, the Guide on Synthetic Data Generation recommends following a structured five-step approach:

- Know the data: clearly understand the purpose of the synthetic data and the characteristics of the source data to be preserved, setting precise targets for the threshold of acceptable risk and expected utility.

- Prepare the data: identify key insights to be retained, select relevant attributes, remove or pseudonymise direct identifiers, and standardise formats and structures in a well-documented data dictionary.

- Generate synthetic data: select the most appropriate methods according to the use case, assess quality through completeness, fidelity and usability checks, and iteratively adjust the process to achieve the desired balance.

- Assess re-identification risks: apply attack-based techniques to determine the possibility of inferring information about individuals or their membership of the original set, ensuring that risk levels are acceptable.

- Manage residual risks: implement technical, governance and contractual controls to mitigate identified risks, properly documenting the entire process.
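To make the middle steps more concrete, here is a minimal sketch of preparing, generating and checking synthetic data with the Python standard library. It is not the method described in the Guide: the source records are invented, the model is a deliberately naive one (an independent Gaussian per column, which ignores correlations between attributes), and the exact-match count is only a crude stand-in for the attack-based risk assessments the Guide refers to.

```python
import random
import statistics

# Hypothetical source records; "name" stands in for a direct identifier.
SOURCE = [
    {"name": "Ana", "age": 34, "income": 28000},
    {"name": "Luis", "age": 45, "income": 39000},
    {"name": "Marta", "age": 29, "income": 24000},
    {"name": "Jorge", "age": 52, "income": 47000},
    {"name": "Elena", "age": 41, "income": 35000},
    {"name": "Pablo", "age": 38, "income": 31000},
]


def prepare(records, identifiers=("name",)):
    """Prepare the data: remove direct identifiers before modelling."""
    return [{k: v for k, v in r.items() if k not in identifiers} for r in records]


def fit(records):
    """Estimate mean and standard deviation for each numeric column."""
    model = {}
    for column in records[0]:
        values = [r[column] for r in records]
        model[column] = (statistics.mean(values), statistics.stdev(values))
    return model


def generate(model, n, seed=0):
    """Generate: sample each column independently from its fitted Gaussian."""
    rng = random.Random(seed)
    return [
        {col: round(rng.gauss(mu, sigma)) for col, (mu, sigma) in model.items()}
        for _ in range(n)
    ]


def fidelity(model, synthetic):
    """Quality check: gap between source and synthetic means, per column."""
    return {
        col: abs(statistics.mean(r[col] for r in synthetic) - mu)
        for col, (mu, _) in model.items()
    }


def exact_matches(prepared, synthetic):
    """Crude risk proxy: synthetic rows that replicate a source row exactly."""
    originals = {tuple(sorted(r.items())) for r in prepared}
    return sum(tuple(sorted(r.items())) in originals for r in synthetic)


prepared = prepare(SOURCE)
model = fit(prepared)
synthetic = generate(model, n=1000)
print("mean gaps:", fidelity(model, synthetic))
print("exact replicas of source rows:", exact_matches(prepared, synthetic))
```

A production pipeline would typically use a model that preserves relationships between attributes and would iterate between the fidelity and risk checks until both fall within the targets set in the first step.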

Practical applications and success stories

To realise all these benefits, synthetic data can be applied in a variety of scenarios that respond to specific organisational needs. The Guide mentions, for example:

1. Generation of datasets for training AI/ML models: synthetic data solves the problem of the scarcity of labelled (i.e. usable) data for training AI models. Where real data are limited, synthetic data can be a cost-effective alternative. In addition, they make it possible to simulate extraordinary events or to increase the representation of minority groups in training sets, an interesting application for improving the performance of AI models across all social groups.

2. Data analysis and collaboration: this type of data facilitates the exchange of information for analysis, especially in sectors such as health, where the original data is particularly sensitive. In this sector as in others, they provide stakeholders with a representative sample of actual data without exposing confidential information, allowing them to assess the quality and potential of the data before formal agreements are made.

3. Software testing: synthetic data is very useful for system development and software testing because it allows the use of realistic, but not real, data in development environments, thus avoiding possible personal data breaches if the development environment is compromised.

The practical application of synthetic data is already showing positive results in various sectors:

I. Financial sector: fraud detection. J.P. Morgan has successfully used synthetic data to train fraud detection models, creating datasets with a higher percentage of fraudulent cases that significantly improved the models' ability to identify anomalous behaviour.

II. Technology sector: research on AI bias. Mastercard collaborated with researchers to develop methods to test for bias in AI using synthetic data that maintained the true relationships of the original data, but were private enough to be shared with outside researchers, enabling advances that would not have been possible without this technology.

III. Health sector: safeguarding patient data. Johnson & Johnson implemented AI-generated synthetic data as an alternative to traditional anonymisation techniques to process healthcare data, achieving a significant improvement in the quality of analysis by effectively representing the target population while protecting patients' privacy.

The balance between utility and protection

It is important to note that synthetic data are not inherently risk-free. The similarity to the original data could, in certain circumstances, allow information about individuals or sensitive data to be leaked. It is therefore crucial to strike a balance between data utility and data protection.

This balance can be achieved by implementing good practices during the process of generating synthetic data, incorporating protective measures such as:

- Adequate data preparation: removal of outliers, pseudonymisation of direct identifiers and generalisation of granular data.

- Re-identification risk assessment: analysis of the possibility that synthetic data can be linked to real individuals.

- Implementation of technical controls: adding noise to data, reducing granularity or applying differential privacy techniques.
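Two of the technical controls listed above, reducing granularity and adding calibrated noise, can be sketched in a few lines. This is an illustrative example rather than the Guide's own procedure: `generalise_age` and `dp_count` are hypothetical helpers, the ten-year band width and the privacy parameter `epsilon` are assumptions, and the noise follows the standard Laplace mechanism for a counting query, whose sensitivity is 1.

```python
import random


def generalise_age(age, width=10):
    """Reduce granularity: replace an exact age with a band such as '30-39'."""
    low = (age // width) * width
    return f"{low}-{low + width - 1}"


def laplace_noise(scale, rng):
    """Draw Laplace(0, scale) noise as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def dp_count(records, predicate, epsilon, rng):
    """Count matching records and add Laplace noise calibrated to sensitivity 1."""
    true_count = sum(1 for r in records if predicate(r))
    scale = 1.0 / epsilon  # one individual changes a count by at most 1
    return true_count + laplace_noise(scale, rng)


rng = random.Random(42)
people = [{"age": age} for age in range(20, 60)]  # hypothetical records

print(generalise_age(34))
print(dp_count(people, lambda r: r["age"] >= 40, epsilon=1.0, rng=rng))
```

A smaller `epsilon` adds more noise and therefore more protection at the cost of accuracy, which is the utility and protection trade-off discussed in this section.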

Synthetic data represent an exceptional opportunity to drive data-driven innovation while respecting privacy and complying with data protection regulations. Their ability to generate statistically representative but artificial information makes them a versatile tool for multiple applications, from AI model training to inter-organisational collaboration and software development.

By properly implementing the best practices and controls described in the Guide on Synthetic Data Generation translated by the AEPD, organisations can reap the benefits of synthetic data while minimising the associated risks, positioning themselves at the forefront of responsible digital transformation. The adoption of privacy-enhancing technologies such as synthetic data is not only a defensive measure, but a proactive step towards an organisational culture that values both innovation and data protection, which are critical to success in the digital economy of the future.