Madriwa is an interactive web application designed to help people find the most suitable neighbourhood to live in Madrid by analysing urban data, nearby services and personal preferences.

The platform collects and processes information from more than 100 data sources, many of them published as open data by the Madrid City Council, and refreshes this information regularly through automated data analysis processes. With this data, it generates personalized profiles and recommendations for different areas of the city.

Through the application, users can enter their interests or profile (for example, student, family or couple) and the tool analyzes which neighborhoods best fit those preferences. Users can also mark important places such as work, university or the gym, so that the tool can calculate distances and travel times.
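As a rough illustration of the distance and travel-time idea, the sketch below computes the straight-line (great-circle) distance between two points and converts it into a travel time at a constant speed. This is an assumption for illustration only: the source does not say how Madriwa calculates distances, and a real application would more likely use routing over the street or transit network. The function names, coordinates and speed are hypothetical.

```python
import math


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between two WGS84 points."""
    earth_radius_km = 6371.0  # mean Earth radius
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlambda = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    return 2 * earth_radius_km * math.asin(math.sqrt(a))


def travel_minutes(distance_km, speed_kmh):
    """Rough travel time in minutes, assuming a constant average speed."""
    return distance_km / speed_kmh * 60


# Approximate, illustrative coordinates for a home and a workplace in Madrid.
home = (40.4169, -3.7035)
work = (40.4530, -3.6883)
distance = haversine_km(*home, *work)
print(f"{distance:.2f} km, about {travel_minutes(distance, 15):.0f} min by bike at 15 km/h")
```

Straight-line distance underestimates real travel, so a figure like this is only a lower bound for comparing neighbourhoods.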

Madriwa's key features include:

- Classification of neighborhoods in Madrid according to user preferences.

- Display on an interactive map with information on nearby services and facilities.

- Analysis of travel times and isochrones from different locations.

- Consultation of urban indicators such as average income, safety, population density or presence of facilities (schools, hospitals, public transport, etc.).

The app uses data analytics, geographic information systems and map visualization to turn large volumes of urban information into understandable recommendations for those looking for housing in the city.

The application is a tourist mobility analytics platform that integrates overnight stay data from the INE's experimental statistics with its own spatial estimation algorithms, capable of identifying the attraction of tourists at the 100-metre grid level. This approach allows working with a high granularity, facilitating a precise understanding of how tourist flows are distributed and concentrated in the territory.

On this basis, the solution incorporates advanced models for processing mobility matrices, aimed at detecting patterns, recurrences and relevant dynamics in the movements of tourists. From this data, an automated insight-generation system identifies high-value events, that is, significant findings that emerge from large volumes of complex, redundant or apparently obvious information, and turns them into useful and actionable knowledge.

All this analytical capacity is presented through a conversational interface based on generative artificial intelligence (GenAI/LLM), which allows non-technical users to interact with the system using natural language. In this way, the exploration, understanding and interpretation of data is facilitated, reducing technical complexity and favoring informed decision-making in areas such as tourism planning, destination management and the optimization of strategies within the sector.

Before performing a data visualization, it is important to understand two issues. On the one hand, what exactly you have in your hands, that is, the type of data, its format and other relevant characteristics; and, on the other hand, what is to be visualized, the objective of the graphic representation that is going to be made.

In the specific case of geographical data, enormous narrative possibilities open up because visualizations allow territorial distributions to be shown, spatial patterns to be identified, regions to be compared, or the evolution of a phenomenon to be traced in time and space. To take advantage of these possibilities, it is important to keep in mind that the file can:

- Contain coordinates in different reference systems.

- Represent phenomena that require very specific types of maps.

Taking a few minutes to understand those features before choosing a tool is actually the shortest path to a useful and rigorous result. In this post we review, step by step, how to work with geographic data and what tools exist to represent it graphically.

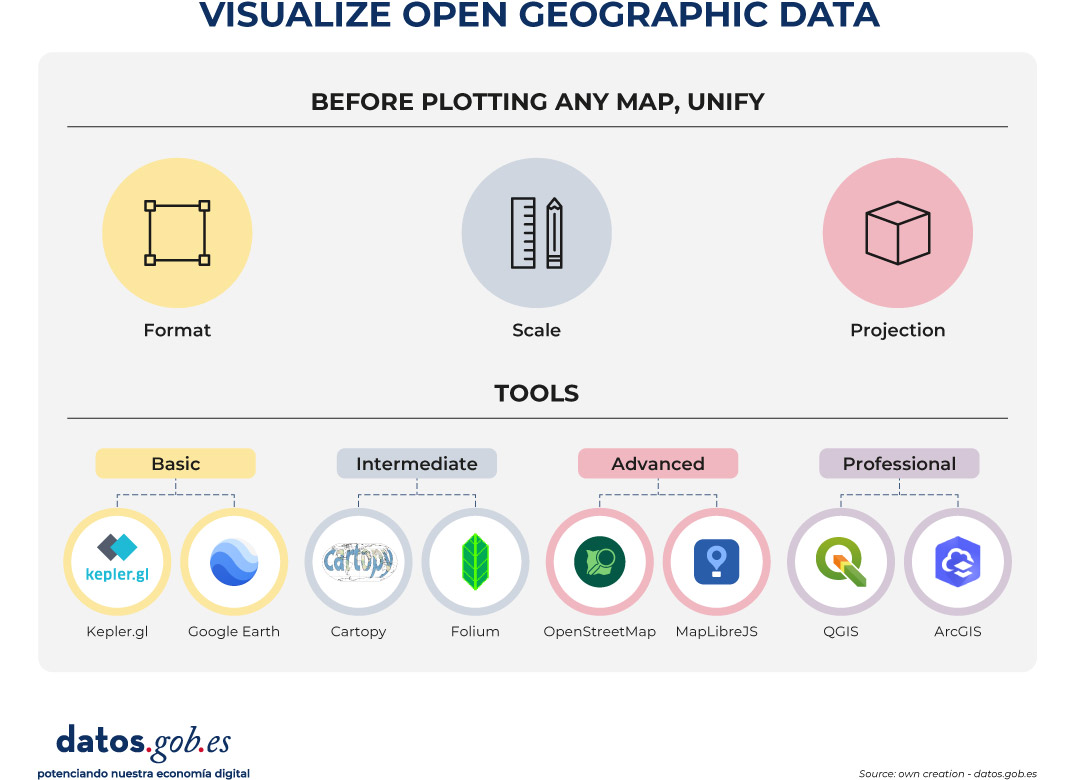

Before drawing any map: format, scale and projection

The first pitfall when working with geospatial data is often format. Georeferenced data comes in a wide variety of presentations: from a simple CSV with latitude and longitude columns, to more specialized formats such as GeoJSON (ideal for exchanging geometries in web environments), Shapefile (SHP, the historical standard for geographic information systems), or scientific formats such as NetCDF and GRIB (designed for climate and meteorological data in grids). Knowing what format the data is in and which one is most suitable for each tool saves a lot of time and avoids import errors.
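To make the difference between formats concrete, here is a minimal sketch that converts a CSV with latitude and longitude columns into a GeoJSON FeatureCollection using only the Python standard library. The column names, the `csv_to_geojson` helper and the sample row are assumptions for illustration; real datasets may use other column names or separators.

```python
import csv
import io
import json


def csv_to_geojson(csv_text, lat_col="latitude", lon_col="longitude"):
    """Convert CSV rows with latitude/longitude columns into a GeoJSON FeatureCollection."""
    features = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        lat = float(row.pop(lat_col))
        lon = float(row.pop(lon_col))
        features.append({
            "type": "Feature",
            # GeoJSON stores positions as [longitude, latitude], in that order.
            "geometry": {"type": "Point", "coordinates": [lon, lat]},
            # Every remaining column becomes a property of the feature.
            "properties": row,
        })
    return {"type": "FeatureCollection", "features": features}


# Illustrative sample row with approximate coordinates.
sample = "name,latitude,longitude\nPuerta del Sol,40.4169,-3.7035\n"
collection = csv_to_geojson(sample)
print(json.dumps(collection, indent=2))
```

Note the coordinate order: a CSV usually lists latitude first, while GeoJSON expects longitude first, which is a frequent source of points landing in the wrong place.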

The second critical aspect is the coordinate reference system (CRS). Not all coordinates speak the same language. WGS84 is the geographic system (latitude and longitude in degrees) used by GPS and most web map services; UTM, on the other hand, is a projected system that works in meters and is better suited to distance or area calculations. Mixing data in different systems without reprojecting them (i.e., without converting coordinates from one reference system to another) produces displacements and geometries that do not fit.
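As a small illustration of what reprojection means, the sketch below converts WGS84 longitude and latitude (EPSG:4326) into Web Mercator metres (EPSG:3857) with the standard spherical formula. Web Mercator is used here instead of UTM only because its formula is short enough to write out; this is an illustrative choice, and in practice a library such as pyproj or GeoPandas would normally handle the conversion. The sample coordinates are approximate.

```python
import math

EARTH_RADIUS_M = 6378137.0  # WGS84 semi-major axis, used as the sphere radius by Web Mercator


def wgs84_to_web_mercator(lon_deg, lat_deg):
    """Reproject lon/lat in degrees (EPSG:4326) to Web Mercator metres (EPSG:3857)."""
    x = EARTH_RADIUS_M * math.radians(lon_deg)
    y = EARTH_RADIUS_M * math.log(math.tan(math.pi / 4 + math.radians(lat_deg) / 2))
    return x, y


# The same point expressed in two reference systems: degrees versus metres.
x, y = wgs84_to_web_mercator(-3.7035, 40.4169)
print(f"x = {x:.1f} m, y = {y:.1f} m")
```

The two pairs of numbers describe the same place, but plotting one layer in degrees on top of another in metres without converting would put them hundreds of kilometres apart.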

The third element to consider before choosing a tool is the type of representation that best communicates the data. It is not the same to show points of interest as it is to trace trajectories, make a choropleth map (with areas colored according to a statistical value), or build digital elevation models or 3D visualizations. Each type of data and each analytical question has its most appropriate cartographic representation.
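For the choropleth case, the colouring step comes down to sorting each area's value into a class and giving each class a colour. The following sketch uses equal-interval classes, which is only one of several common classification schemes (quantiles and natural breaks are others). The area names, values and palette are hypothetical.

```python
def equal_interval_classes(values, n_classes):
    """Assign each value a class index from 0 to n_classes - 1 using equal-width bins."""
    low, high = min(values), max(values)
    width = (high - low) / n_classes
    classes = []
    for v in values:
        if width == 0:
            # All values are identical, so everything falls in the first class.
            classes.append(0)
        else:
            # The maximum value would fall into bin n_classes, so clamp it to the last bin.
            classes.append(min(int((v - low) / width), n_classes - 1))
    return classes


# Hypothetical values per area (for example, average income in thousands of euros).
income_by_area = {"A": 18.0, "B": 24.5, "C": 31.0, "D": 42.0}
palette = ["#fee5d9", "#fb6a4a", "#a50f15"]  # light to dark, one colour per class

indices = equal_interval_classes(list(income_by_area.values()), len(palette))
colours = {area: palette[i] for area, i in zip(income_by_area, indices)}
print(colours)
```

The choice of classification changes how the map reads, so it is worth trying more than one scheme before publishing.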

With these three factors clear (format, projection and type of map), it is time to choose the tool.

Basic Tools: Exploration Without Installation

For those who are new to geographic data visualization, or for those who need to explore a dataset quickly without going into complex configurations, there are accessible options that work directly from the browser or with minimal installation. They are ideal for a first contact with data and for communicating results to non-technical audiences.

Kepler.gl is probably the best option for those who want to get quality interactive maps without writing a single line of code. It is a free and open-source web tool that allows you to drag and drop files in formats such as CSV, GeoJSON or Shapefile and get visualizations immediately.

- What it's used for: Visual exploration of large volumes of mobility data, spatial distribution, and geographic patterns.

- Supported formats: CSV, GeoJSON, Shapefile, and JSON.

- Strength: it offers multiple layer types (points, arcs, hexbinning, contours) with an intuitive visual interface and polished results, without the need to install anything.

Google Earth is another accessible option for initial exploration. It's free but not open-source, and uploaded data can be processed by Google. Its web version allows you to import KML/KMZ files and is useful for contextualizing information on satellite imagery.

- What it's used for: Contextualization of data on satellite imagery and visual geographic exploration.

- Supported formats: KML and KMZ.

- Strength: the quality and updating of its satellite imagery database makes it a reference tool for placing data in its real territorial context. For rigorous analysis or institutional publication, it is advisable to evaluate more open alternatives.

Intermediate level: Python libraries for analysis and publishing

When the initial exploration gives way to analysis and the need to reproduce, automate, or integrate maps into broader workflows, there are Python libraries that can be a good option. Their use requires basic programming knowledge, but in return they allow much greater control over every aspect of the visualization and facilitate integration with other data analysis tools.

Cartopy is a library that integrates with Matplotlib and is oriented towards the representation of scientific and climate data. Its great strength is the management of cartographic projections, with support for dozens of reference systems.

- What it is used for: Generation of publication maps with scientific data, especially climate and atmospheric data in grid format.

- Supported formats: NetCDF, GRIB, and any source compatible with Matplotlib.

- Strength: fine control over projections and cartographic elements, ideal when the deformation introduced by the projection has a direct impact on the interpretation of the data.

Folium occupies a different niche: it generates interactive web maps based on Leaflet.js directly from Python code, without the need for JavaScript knowledge. It's especially convenient for producing visualizations that are integrated into Jupyter notebooks or web pages.

- What it's used for: Creating interactive maps for web publishing or notebook presentation, with markers, layers, and pop-ups.

- Supported formats: GeoJSON, CSV, and data sources from pandas and GeoPandas.

- Strength: It combines the convenience of Python with the interactivity of Leaflet.js, allowing you to generate complete web visualizations with very few lines of code. Its main limitation is performance with very large datasets.

Advanced level: web maps with full control

If the goal is to build cartographic applications integrated into your own web environment, able to handle large volumes of data and offer a fluid user experience, it is necessary to go a step further. Tools at this level require web development skills, but offer virtually unlimited control over the behavior and appearance of the map.

OpenStreetMap (OSM) is not exactly a visualization tool, but the world's largest collaborative geographic database, with an open license (ODbL). Its ecosystem includes tools like Overpass Turbo for querying and extracting data, and its cartographic tiles are the foundation on which many web maps are built.

- What it's used for: Obtaining open geographic data and using it as a basemap in web projects.

- Supported formats: OSM XML, PBF and GeoJSON via export.

- Strength: It is the most comprehensive and up-to-date source of open geographic data in the world. For projects committed to open data, using OSM as a foundation is the option most consistent with those principles.

MapLibre GL JS is an open-source JavaScript library that allows you to build high-performance interactive web maps using vector tiles.

- What it's used for: Web mapping app development with full style customization, dynamic data layers, and interactive filters.

- Supported formats: Vector tiles (MVT), GeoJSON, and raster tile sources.

- Strength: performance far superior to libraries based on SVG or classic canvas, with the ability to handle large geometries smoothly and almost unlimited visual customization.

Professional level: geographic information systems

When spatial analysis goes beyond visualization and requires complex operations on data such as reprojections, network analysis, interpolations, geometry editing, or precision map production, a desktop geographic information system (GIS) is the right tool. This type of software is specifically designed for rigorous work with geospatial data and offers capabilities that no web solution can match.

QGIS is the go-to desktop GIS in the open source world. Free, cross-platform and with a very active community, it covers practically any need for analysis and cartographic production.

- What it's used for: Complex spatial analysis, layer editing, reprojections, generating quality maps for print or digital publishing, and automating geospatial workflows.

- Supported formats: Shapefile, GeoJSON, GeoTIFF, PostGIS, WMS, WFS, and dozens more.

- Strength: The combination of analytical power, flexibility, and zero licensing cost makes it the go-to choice for agencies that regularly work with geospatial data. The learning curve is real, but the investment pays for itself quickly.

ArcGIS, developed by Esri, is the most widely used commercial GIS platform in professional and institutional settings. It offers advanced map analysis, editing, and publishing capabilities, and its cloud ecosystem makes it easy to collaborate and manage geographic data portals.

- What it is used for: advanced spatial analysis, management of geospatial data infrastructures and publication of institutional cartographic portals.

- Supported formats: All industry standards, with native integration with Esri services.

- Strength: very mature ecosystem with professional technical support and wide implementation in the public sector. Its licensing model comes at a high cost that puts it out of reach for many teams. It is mentioned here because of its relevance in the sector, with QGIS being the open alternative that covers most needs without license cost.

Figure 1. Displays open spatial data. Source: own creation – datos.gob.es

None of these tools is better than the others in absolute terms: each one responds well to a type of task, a user profile and a context of use. However, in this post we select some of the most used according to the level of technical knowledge of each professional profile:

- For fast exploration and data communication: Kepler.gl

- For accessible geographic visualization and 3D exploration of the territory: Google Earth

- For scientific analysis reproducible in Python: Cartopy and Folium

- For web development with advanced mapping: MapLibre GL JS

- For open base mapping and projects that require free and editable data: OpenStreetMap

- And for spatial analysis and mapping production: QGIS

In all cases, the starting point is always the same: know the data, understand its structure, and make sure that the map to be built is the one that best communicates what that data has to say. The tool, in the end, is only the last step in a process that begins much earlier.

The Housing Viewer of the Institute of Statistics and Cartography of Andalusia (IECA) is an interactive web application that allows you to explore and analyse the spatial distribution of housing in Andalusia using detailed maps and geographical data.

The tool is part of the statistical project "Characterization and distribution of built space in Andalusia", whose objective is to offer exhaustive information on the housing stock of the autonomous community. The data come mainly from the Real Estate Cadastre and are organised into a statistical grid of cells of 250 × 250 metres, which allows the location and characteristics of the dwellings to be studied with great territorial precision.
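To illustrate how a statistical grid works, the sketch below assigns a point in projected coordinates (metres) to the 250 m cell that contains it. It assumes a grid aligned to the origin of the projected coordinate system, which is a simplification; the source does not describe the actual origin or projection of the IECA grid, and the sample coordinates are hypothetical.

```python
import math

CELL_SIZE_M = 250  # cell side in metres, matching the 250 x 250 m grid described above


def grid_cell(x_m, y_m, cell_size=CELL_SIZE_M):
    """Return the (column, row) index and lower-left corner of the cell containing a point."""
    col = math.floor(x_m / cell_size)
    row = math.floor(y_m / cell_size)
    return (col, row), (col * cell_size, row * cell_size)


# Hypothetical projected coordinates in metres (not taken from the viewer).
index, corner = grid_cell(235_430.0, 4_141_980.0)
print(index, corner)
```

Aggregating dwellings by cell index in this way is what allows statistics to be mapped at a finer level than municipalities or census sections.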

Through the viewer, the user can:

- Visualize the geographical distribution of homes in the Andalusian territory.

- Consult statistical information associated with each area of the map.

- Search and navigate the map using zoom and cell selection tools.

- Access alphanumeric data and cartographic layers that show different characteristics of the residential stock.

The application is designed for citizens, researchers, technicians and public administrations, as it facilitates the territorial analysis of housing and supports urban planning, the study of the real estate market or research on the development of the territory. In addition, the data can be downloaded and used in other geographic information systems.

Transporta’m is a tool for real-time tracking of public transportation. With a simple and intuitive interface, you can:

- Check the schedule and current location of trains, subways, trams, and, in the future, buses.

- View service disruptions for the trains you’re tracking.

- Plan alternative routes quickly and efficiently.

AL TEU COSTAT is an application that aims to improve the quality of life of people with disabilities and their environment through the strategic use of technology and data generated by the community itself. It is a platform that centralises information, resources, activities and support channels in a single accessible, intuitive and secure space.

This solution allows you to consult activities and news, access a specialized directory and, for those who register, publish needs, offer support and share proposals for improvement. This interaction generates real-time data on demands, concerns and opportunities for improvement in the field of disability.

The project, which arose from a citizen proposal in participatory budgets, is also an example of collaborative public innovation. The alliance with IThinkUPC and the Universitat Politècnica de Catalunya guarantees a development based on technical rigour, accessibility, digital security and long-term vision, avoiding commercial dependencies and reinforcing the public technology model at the service of the common good.

In short, AL TEU COSTAT is not only an informative app, but a community digital infrastructure that transforms citizen data into public action, strengthening inclusion, participation and quality of life in Rubí.

Just a few months after the success of its first award, the Madrid City Council has opened the call for the second edition of the Open Data Reuse Awards. It is an initiative that seeks to recognize and promote innovative projects that use the datasets published on the datos.madrid.es portal. With a total endowment of 15,000 euros, these awards consolidate the municipal commitment to data culture, transparency and the creation of social and economic value from public information.

In this article we tell you some of the keys you must take into account to participate.

Two award categories to consider

The call establishes two categories, each with several prizes:

1) Web services, applications and visualizations: rewards projects that generate services, visualizations or web or mobile applications.

- First prize: €4,000

- Second prize: €3,000

- Third prize: €1,500

- Student prize: €1,500

2) Studies, research and ideas: focuses on research projects, analysis or description of ideas to create services, studies, visualizations, web or mobile applications. This category is also open to university end-of-degree and end-of-master's projects (TFG-TFM).

- First prize: €2,500

- Second prize: €1,500

- Third prize: €1,000

In both categories, at least one dataset from the municipal portal must be used; it can be combined with public or private sources from any territorial area. Projects can be recent or have been completed in the two years prior to the closing of the call.

Awards may be declared void if the minimum quality is not reached. In this case, the remaining amounts will be redistributed proportionally among the rest of the winners.

Requirements to participate

The call is open to natural and legal persons who are the authors of the projects or initiatives. The aim is for any person or entity with an interest in the reuse of data to be able to submit a proposal, regardless of technical level. Professionals, companies, researchers, journalists and developers can therefore take part, as can amateurs and enthusiasts interested in data analysis and visualization.

For the student prize, only individuals enrolled in official courses in 2023/24, 2024/25 or 2025/26 may participate.

On the other hand, the following are excluded from all categories:

- Projects already awarded, subsidized or contracted by the Madrid City Council.

- Projects that do not use any datasets from the municipal portal.

Process Phases

The municipal portal details the phases of the call, which include:

- Publication of the call. On March 3, the regulatory bases were published in the Official Gazette of the Madrid City Council.

- Submission of nominations. Applications can be submitted from March 4 to May 4 (both included), either online or in person, as explained below.

- Analysis and correction. The documentation submitted will be reviewed until June 3. If necessary, applicants will be contacted to correct errors.

- Assessment and deliberation. A jury will evaluate all the admitted projects according to the criteria established in the rules of the call. It will take into account their usefulness, economic value, social value and contribution to transparency; their degree of innovation and creativity; the variety of datasets used from the Madrid Open Data Portal; and their technical quality. This phase will run until September 15.

- Resolution. In September and October, the award proposal will be drawn up and the resolution officially published.

- Awards ceremony. The awards will be presented at a public event, expected to take place in November.

The official website will update dates and documentation as the process progresses.

How applications are submitted

As mentioned above, applications can be submitted electronically or in person:

- Online, through the electronic headquarters of the Madrid City Council. Identification and electronic signature are required for this.

- In person, at the registration assistance offices of the Madrid City Council, as well as at the registries of other public administrations.

Individuals may submit the application either way, while legal persons may only submit it electronically.

In both cases, nominations must include:

- Official application form, to be downloaded from the Madrid City Council's electronic headquarters.

- Project report, based on a model to be downloaded from the aforementioned electronic office. This document will include the title, authorship and a detailed description, as well as the list of datasets used, the objectives, the target audience, the expected impact, the degree of innovation and the technology used.

- Responsible declaration.

- Collaboration agreement, when applying as a group.

Get inspired by the winning projects of the first edition

The second edition of the Open Data Reuse Awards comes on the heels of the success of the previous edition. In 2025, the Madrid City Council held the first edition of these awards, which brought together 65 nominations of great quality and diversity. Among them, proposals promoted by university students, startups, multidisciplinary teams and citizens committed to the intelligent use of public data stood out.

The award-winning projects demonstrated that open data can become real tools to improve urban life, boost transparency and generate useful knowledge for the city. In this article we summarize what these projects consisted of.

In summary, the II Open Data Reuse Awards 2026 are an opportunity to demonstrate how public data can be turned into real innovation. An invitation to develop projects that promote a smarter, more transparent and participatory Madrid.

embalses.es is a free web platform that gathers, integrates and visualises in real time the hydrological data of the 449 reservoirs in Spain. It is aimed at farmers, fishermen, professionals in the hydrological sector, environmental researchers, data journalists and anyone interested in the state of water resources in Spain.

The project was born with the aim of facilitating citizen access to hydrological information, currently dispersed in multiple institutional portals, integrating it into a single modern, fast and accessible interface. The information is automatically updated every 15 minutes from official sources, such as the Ministry for the Ecological Transition and the Demographic Challenge (MITECO) and the 13 SAIH systems of the hydrographic confederations.

Main functionalities

- Interactive choropleth map by basins and provinces with real-time fill levels.

- Interactive historical graphs with zoom, selection of up to 8 years and comparison with the 10-year average.

- Reservoir rankings: fullest, emptiest, and largest weekly rises and falls.

- Comparator of up to 10 reservoirs with simultaneous visualization of their data.

- Favorites system for quick access to selected reservoirs.

- Detailed technical data sheets with information on location, capacity and construction characteristics.

- Navigation through river basins, provinces, autonomous communities and rivers.

- Recent readings with hourly volume, level, and flow data.

- Download graphics in image format and generate infographics.

- Options to share data via WhatsApp, X (Twitter) or direct link.

- Global search engine for reservoirs, basins and provinces.

- Integration of graphics into other websites using iframe.

- Progressive web application (PWA) installable on mobile devices with offline access.

Data available by reservoir

- Reservoir volume (hm³)

- Water level (m)

- Fill Percentage (%)

- Inlet and outlet flow (m³/s)

- Accumulated precipitation (mm)

All data is displayed without alterations, respecting its original format.

Gasofinder is a modern and efficient web application designed to help users find the cheapest gas stations closest to their location in real time. Using official data and an interactive map, the application helps save money on every refuel. Specifically, it offers:

- Interactive Map:

    - Clear Visualization: uses OpenStreetMap maps with a clean design (Carto Voyager).

    - Smart Markers: 🟢 green for low prices, 🟡 yellow for average prices, 🔴 red for high prices.

    - Special Icons: quickly identifies the cheapest (⭐) and the most expensive (⚠️) gas station within the visible area.

- Savings and Real-Time Pricing: retrieves updated prices directly from the Ministry for the Ecological Transition.

    - Tank Fill Calculation: enter your tank capacity (default 55L) to see how much it will cost to fill it.

    - Savings Estimation: shows how much you save compared to the most expensive option in the area.

    - Price Thermometer: a visual bar that indicates whether a station’s price is good, average, or bad compared to local minimums and maximums.

- Customization and Filters:

    - Fuel Type: filter by Diesel A, Gasoline 95 E5, Gasoline 98 E5, or Premium Diesel.

    - Tank Size: adjustable for personalized calculations.

    - Charging Points Link: direct access to the official electric vehicle charging points map.

- Navigation and Location:

    - Geolocation: automatically detects your location (first approximately, then precisely).

    - Routes: automatically calculates the distance and travel time to the selected gas station.

    - GPS Integration: opens the location directly in Google Maps, Waze, or Apple Maps with a single click.

    - Lock Mode: allows you to "pin" a selected gas station so it does not change automatically while moving the map.

Icon Clarification

- Location (📍): shows your current position.

- Star (⭐): the most affordable visible option.

- Alert (⚠️): the most expensive visible option.
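The tank fill, savings and price thermometer calculations described for Gasofinder can be sketched as simple arithmetic. The functions and prices below are hypothetical and only illustrate the idea; the source does not show how the application actually implements them.

```python
def fill_cost(price_per_litre, tank_litres=55.0):
    """Cost of filling the tank; 55 L mirrors the default capacity mentioned above."""
    return price_per_litre * tank_litres


def savings_vs_most_expensive(price, max_price, tank_litres=55.0):
    """Money saved on a full tank compared with the most expensive visible station."""
    return (max_price - price) * tank_litres


def thermometer_position(price, min_price, max_price):
    """Position of a price between the local minimum (0.0) and maximum (1.0)."""
    if max_price == min_price:
        # Every visible station has the same price, so there is no range to place it in.
        return 0.0
    return (price - min_price) / (max_price - min_price)


# Hypothetical prices in euros per litre for the stations in view.
visible_prices = [1.40, 1.50, 1.60]
chosen = 1.50
low, high = min(visible_prices), max(visible_prices)

print(round(fill_cost(chosen), 2))
print(round(savings_vs_most_expensive(chosen, high), 2))
print(round(thermometer_position(chosen, low, high), 2))
```

Because the minimum and maximum come from the stations currently in view, the same price can look cheap or expensive depending on the area shown on the map.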

VirusMap is a free platform that shows the evolution of viruses and public health outbreaks in Catalonia based on official data from the Catalan Public Health Agency. It offers a clear and up-to-date view of the health situation through interactive maps, temporal trends, and predictive analysis, all focused exclusively on Catalonia.

What you can do with VirusMap

- Check the distribution of viruses by municipality in near real time.

- Follow the evolution of influenza, acute respiratory infections, and other respiratory infections.

- Analyze trends by time periods and age groups.

- Compare historical data and detect increases or decreases in cases.

- Access indicative predictions based on AI models.

Transparent and privacy-friendly

Exclusive use of official and open data. No personal data is collected. Information is processed and stored locally. VirusMap is an open and accessible tool to better understand the evolution of viruses in Catalonia and support informed decisions, both personally and collectively.