We live in an era where science is increasingly reliant on data. From urban planning to the climate transition, data governance has become a structural pillar of evidence-based decision-making. However, there is one domain where the traditional principles of data management, validation and control are pushed to their limits: the universe.

Space data, produced by scientific satellites, telescopes, interplanetary probes and exploration missions, do not describe accessible or repeatable realities. They refer to phenomena that often occurred millions of years ago, at distances impossible to travel and under conditions that can never be replicated in a laboratory. There is no "in situ" measurement that directly confirms these phenomena.

In this context, data governance ceases to be an organizational issue and becomes a structural element of scientific trust. Quality, traceability and reproducibility cannot rest on direct physical references; they must rest instead on methodological transparency, comprehensive documentation and the robustness of instrumental and theoretical frameworks.

Governing data about the universe therefore means facing unique challenges: managing structural uncertainty, documenting extreme scales, and ensuring trust in information we can never touch.

Below, we explore the main challenges that data governance faces when the object of study lies beyond Earth.

I. Specific challenges of data from the universe

1. Beyond Earth: new sources, new rules

When we talk about space data, we mean much more than satellite images of the Earth's surface. The term covers a complex ecosystem that includes space and ground-based telescopes, interplanetary probes, planetary exploration missions, and observatories designed to detect radiation, particles, or extreme physical phenomena.

These systems generate data whose challenges differ clearly from those of other scientific domains:

| Challenge | Impact on data governance |
|---|---|
| No physical access | There is no direct validation; trust rests on the integrity of the acquisition and transmission channel. |
| Instrumental dependence | The data is a direct "child" of the sensor's design. If the sensor fails or drifts out of calibration, the recorded reality is distorted. |
| Uniqueness | Many astronomical events are unique. There is no "second chance" to capture them. |
| Extreme cost | Each byte is highly valuable because of the investment required to put the sensor into orbit. |

Figure 1. Challenges in data governance across the universe. Source: own elaboration - datos.gob.es.

Unlike Earth observation data, which in many cases can be checked against field campaigns or redundant sensors, data from the universe depend fundamentally on the mission architecture, instrument calibration, and physical models used to interpret the captured signal.

In many cases, what is recorded is not the phenomenon itself, but an indirect signal: spectral variations, electromagnetic emissions, gravitational disturbances or particles detected after traveling millions of kilometers. The data is, in essence, an instrumental translation of an inaccessible phenomenon.

For all these reasons, space data cannot be understood without the technical context that generates it.

2. Structural uncertainty and extreme scales

Uncertainty refers to the margin of error or indeterminacy associated with a scientific measurement, interpretation or result, arising from the limits of the instruments, the observing conditions and the models used to analyze the data. Whereas in other fields uncertainty is something to be reduced through direct, repeatable and verifiable measurements, in the observation of the universe it is part of the knowledge process itself. It is not simply a matter of "not knowing enough", but of facing physical and methodological limits that cannot be completely eliminated.

Therefore, in the observation of the universe, uncertainty is structural. It is not an occasional anomaly, but a condition inherent to the object of study.
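The three sources of uncertainty named above (instruments, observing conditions and models) can be expressed as a single margin of error. Assuming, purely for illustration, that they are independent and approximately Gaussian, the standard rule is to add them in quadrature; the numbers below are hypothetical, in arbitrary units:

$$
\sigma_{\text{total}} = \sqrt{\sigma_{\text{inst}}^{2} + \sigma_{\text{obs}}^{2} + \sigma_{\text{model}}^{2}}, \qquad \sqrt{3^{2} + 4^{2} + 12^{2}} = \sqrt{169} = 13
$$

Halving the instrumental term in this example (from 3 to 1.5) only lowers the total from 13 to about 12.7, because the model term dominates: better hardware alone cannot remove the share of uncertainty that comes from the interpretive framework.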

There are several critical dimensions:

- Extreme spatial and temporal scales: cosmic distances prevent any direct validation. Timescales imply that the data often captures an "instant" of the remote past and not a verifiable present reality.

- Weak signals and unavoidable noise: the instruments capture extremely subtle emissions. The useful signal coexists with interference, technological limitations and background noise. Interpretation depends on advanced statistical treatments and complex physical models.

- Limited-observation phenomena: some astrophysical phenomena, such as certain supernovae, gamma-ray bursts or singular gravitational configurations, cannot be experimentally recreated and can only be observed when they occur. In these cases, the available record may be unique or severely limited, which increases the responsibility for documentation and preservation.

Not all phenomena are unrepeatable, but in many cases the opportunities for observation are scarce or depend on exceptional conditions.
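The point about weak signals and unavoidable noise can be made concrete with a minimal sketch of one of the simplest statistical treatments: averaging ("stacking") repeated exposures of the same faint source. It assumes the noise is independent and Gaussian from one exposure to the next, in which case the signal-to-noise ratio grows roughly with the square root of the number of exposures. All function names and numbers are hypothetical illustration values, not taken from any mission.

```python
import random
import statistics


def simulate_exposure(signal: float, noise_sigma: float, n_pixels: int,
                      rng: random.Random) -> list[float]:
    """One hypothetical exposure: a constant faint signal plus independent Gaussian noise per pixel."""
    return [signal + rng.gauss(0.0, noise_sigma) for _ in range(n_pixels)]


def stack(exposures: list[list[float]]) -> list[float]:
    """Mean-stack the exposures pixel by pixel."""
    return [statistics.fmean(pixel_values) for pixel_values in zip(*exposures)]


def snr(pixels: list[float], true_signal: float) -> float:
    """Signal-to-noise ratio: the known signal level over the pixel-to-pixel scatter."""
    return true_signal / statistics.stdev(pixels)


rng = random.Random(42)  # fixed seed so the illustration is repeatable
SIGNAL, SIGMA, PIXELS, N_EXPOSURES = 0.5, 5.0, 2000, 100  # signal 10x fainter than the noise

single = simulate_exposure(SIGNAL, SIGMA, PIXELS, rng)
stacked = stack([simulate_exposure(SIGNAL, SIGMA, PIXELS, rng) for _ in range(N_EXPOSURES)])

print(f"single exposure SNR: {snr(single, SIGNAL):.2f}")            # close to 0.5 / 5.0 = 0.1
print(f"stacked ({N_EXPOSURES}) SNR: {snr(stacked, SIGNAL):.2f}")   # roughly sqrt(100) = 10x higher
```

Stacking only helps when repeated exposures exist and their noise is uncorrelated; for the unique events described above, the result depends more heavily on the statistical model of the noise, which is one more reason that model has to be documented.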

II. Building trust when we can't touch the object observed

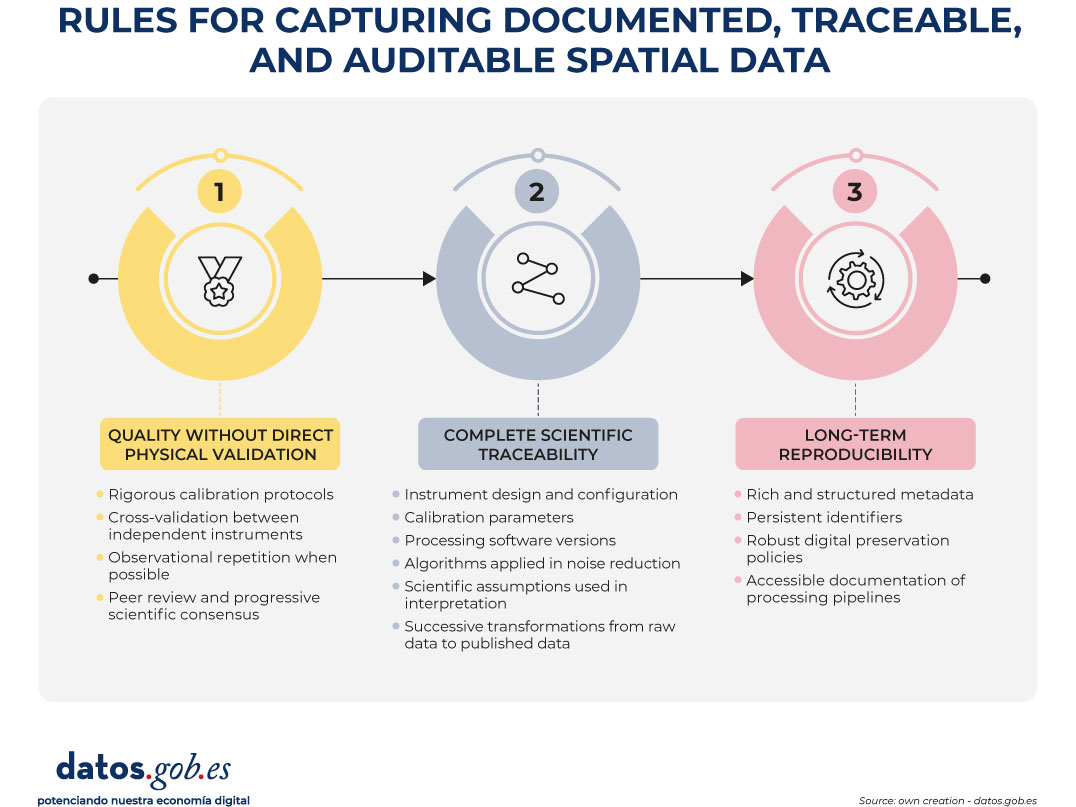

In the face of these challenges, data governance takes on a structural role. It is not limited to guaranteeing storage or availability, but defines the rules by which scientific processes are documented, traceable and auditable.

In this context, governing does not mean producing knowledge, but rather ensuring that its production is transparent, verifiable and reusable.

1. Quality without direct physical validation

When the observed phenomenon cannot be directly verified, the quality of the data is based on:

- Rigorous calibration protocols: instruments must undergo systematic calibration before, during and after operation. This involves adjusting their measurements against known baselines, characterizing their margins of error, documenting deviations and recording any modifications to their configuration. Calibration is not a one-off event, but an ongoing process that ensures the recorded signal reflects the observed phenomenon as accurately as possible within the physical limits of the system.

- Cross-validation between independent instruments: when different instruments, on the same mission or on different missions, observe a similar phenomenon, comparing their results reinforces the reliability of the data. Convergence between observations obtained with different technologies reduces the probability of instrumental bias or systematic error. This inter-instrument coherence acts as an indirect verification mechanism.

- Observational repetition when possible: although not all phenomena can be repeated, many observations can be made at different times or under different conditions. Repetition makes it possible to evaluate the stability of the signal, identify anomalies and separate natural variability from measurement error. Consistency over time strengthens the robustness of the result.

- Peer review and progressive scientific consensus: the data and their interpretations are subject to evaluation by the scientific community. This process involves methodological scrutiny, critical analysis of assumptions, and verification of consistency with existing knowledge. Consensus does not emerge immediately, but through the accumulation of evidence and scientific debate. Quality, in this sense, is also a collective construction.

Quality is not just a technical property; it is the result of a documented and auditable process.
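Cross-validation between instruments and observational repetition both come down to comparing independent measurements of the same quantity. A common textbook way to do that, assumed here rather than taken from the text, is to combine the measurements by inverse-variance weighting, check their mutual consistency with a reduced chi-square, and express a pairwise disagreement as a "tension" in units of sigma. The sketch assumes independent, Gaussian uncertainties; all values are hypothetical.

```python
import math


def combine(measurements: list[tuple[float, float]]) -> tuple[float, float, float]:
    """Combine independent (value, 1-sigma uncertainty) measurements.

    Returns the inverse-variance weighted mean, its 1-sigma uncertainty and the
    reduced chi-square of the measurements about that mean.
    """
    if len(measurements) < 2:
        raise ValueError("need at least two measurements to test consistency")
    weights = [1.0 / sigma ** 2 for _, sigma in measurements]
    mean = sum(w * value for w, (value, _) in zip(weights, measurements)) / sum(weights)
    mean_sigma = math.sqrt(1.0 / sum(weights))
    chi2 = sum(((value - mean) / sigma) ** 2 for value, sigma in measurements)
    return mean, mean_sigma, chi2 / (len(measurements) - 1)


def tension(a: tuple[float, float], b: tuple[float, float]) -> float:
    """Absolute difference of two independent measurements in units of their combined sigma."""
    (value_a, sigma_a), (value_b, sigma_b) = a, b
    return abs(value_a - value_b) / math.hypot(sigma_a, sigma_b)


# Two hypothetical instruments observing the same quantity.
print(f"instrument tension: {tension((10.2, 0.3), (9.8, 0.4)):.2f} sigma")

# Hypothetical repeated observations at four epochs: a steady source and a variable one.
steady = [(10.1, 0.2), (10.0, 0.2), (10.3, 0.2), (9.9, 0.2)]
variable = [(10.0, 0.1), (11.0, 0.1), (9.0, 0.1), (10.5, 0.1)]
for label, series in (("steady", steady), ("variable", variable)):
    mean, err, red_chi2 = combine(series)
    print(f"{label}: mean {mean:.3f} +/- {err:.3f}, reduced chi-square {red_chi2:.1f}")
```

A reduced chi-square near 1 means the scatter between instruments or epochs is what the stated errors predict; a value far above 1, as in the second series, points either to genuine variability of the source or to an unaccounted systematic error, and the numbers alone cannot say which. Resolving that ambiguity depends on the documented calibration and processing history.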

2. Complete scientific traceability

In the space context, data is inseparable from the technical and scientific process that generates it. It cannot be understood as an isolated result, but as the culmination of a chain of instrumental, methodological and analytical decisions.

Therefore, traceability must explicitly document:

- Instrument design and configuration: information about the technical characteristics of the instrument that captured the signal, such as its architecture, sensing capabilities, resolution limits, and operational configurations, needs to be retained. These conditions determine what type of signal can be recorded and how accurately.

- Calibration parameters: the adjustments applied to ensure that the instrument operates within the intended margins must be recorded, as well as any modifications made over time. The calibration parameters directly influence the interpretation of the signal obtained.

- Processing software versions: the processing of raw data depends on specific software tools. Preserving the versions used makes it possible to understand how the results were generated and avoids ambiguity as the software evolves.

- Algorithms applied in noise reduction: since signals are often accompanied by interference or background noise, it is essential to document the methods used to filter, clean, or transform the information before analysis. These algorithms influence the final result.

- Scientific assumptions used in the interpretation: the reading of the data is not neutral; it is based on the theoretical frameworks and physical models accepted at the time of analysis. Recording these assumptions makes it possible to contextualize the conclusions and understand possible future revisions.

- Successive transformations from the raw data to the published data: from the original signal to the final scientific product, the data goes through different phases of processing, aggregation and analysis. It must be possible to reconstruct each transformation in order to understand how the communicated result was reached.
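The traceability checklist above can be made machine-readable. The sketch below uses a hypothetical schema, not any agency's format (real archives typically rely on established conventions such as FITS header keywords or the W3C PROV data model): each processing step records the software version, its parameters and SHA-256 hashes of its input and output, so anyone can check that the chain from raw to published data has no gaps. The instrument, tool names and parameters in the usage part are invented.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


def sha256(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


@dataclass
class Step:
    """One transformation between raw and published data (hypothetical schema)."""
    name: str
    software: str      # tool name and exact version that performed the step
    parameters: dict   # e.g. noise-reduction algorithm and its settings
    input_sha256: str
    output_sha256: str


@dataclass
class Provenance:
    instrument: dict        # design and configuration at acquisition time
    calibration: dict       # calibration parameters in force
    assumptions: list[str]  # physical models used to interpret the signal
    steps: list[Step] = field(default_factory=list)

    def record(self, name: str, software: str, parameters: dict,
               data_in: bytes, data_out: bytes) -> None:
        """Append a step, fingerprinting exactly what went in and what came out."""
        self.steps.append(Step(name, software, parameters, sha256(data_in), sha256(data_out)))

    def chain_is_unbroken(self) -> bool:
        """True if every step consumed exactly the output of the step before it."""
        return all(prev.output_sha256 == nxt.input_sha256
                   for prev, nxt in zip(self.steps, self.steps[1:]))

    def to_json(self) -> str:
        return json.dumps(asdict(self), indent=2, sort_keys=True)


# Usage with an invented instrument, invented tools and placeholder byte payloads.
raw, denoised, calibrated = b"raw frame", b"denoised frame", b"calibrated frame"
prov = Provenance(
    instrument={"detector": "hypothetical-ccd", "exposure_s": 300},
    calibration={"gain": 0.1, "offset": 0.0},
    assumptions=["hypothetical background model v2"],
)
prov.record("noise_reduction", "denoise-tool 1.4.2", {"method": "median", "window": 5}, raw, denoised)
prov.record("flux_calibration", "calib-tool 0.9.0", {"gain": 0.1}, denoised, calibrated)
print(prov.chain_is_unbroken())
print(prov.to_json())
```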

Without exhaustive traceability, reproducibility is weakened and future interpretability is compromised. When it is not possible to reconstruct the entire process that led to a result, its independent evaluation becomes limited and its scientific reuse loses its robustness.

3. Long-term reproducibility

Space missions can span decades, and their data can remain relevant long after the mission has ended. In addition, scientific interpretation evolves over time: new models, new tools, and new questions may require reanalyzing information generated years ago.

Therefore, data must remain interpretable even when the original equipment no longer exists, technological systems have changed, or the scientific context has evolved.

This requires:

- Rich and structured metadata: the contextual information that accompanies the data – about its origin, acquisition conditions, processing and limitations – must be organized in a clear and standardized way. Without sufficient metadata, the data loses meaning and becomes difficult to reinterpret in the future.

- Persistent identifiers: each dataset must remain locatable and citable in a stable manner over time. Persistent identifiers keep the reference valid even if storage systems or technology infrastructures change.

- Robust digital preservation policies: long-term preservation requires strategies that take into account format obsolescence, technological migration and archive integrity. It is not enough to store the data; it must remain accessible and readable over time.

- Accessible documentation of processing pipelines: the process that transforms raw data into scientific product must be described in a comprehensible way. This allows future researchers to reconstruct the analysis, verify the results, or apply new methods on the same original data.
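Several of these requirements can be illustrated together. This minimal sketch assumes a hypothetical four-field metadata schema and a placeholder identifier string (a real archive would mint, for example, a DOI). It shows two properties that matter for preservation: the identifier survives a storage migration unchanged, and a SHA-256 fixity value recorded at ingest detects silent corruption during later audits. `ingest`, `migrate` and `fixity_ok` are hypothetical names.

```python
import hashlib
from dataclasses import dataclass

# Hypothetical minimal metadata schema: origin, acquisition, processing, limitations.
REQUIRED_FIELDS = ("origin", "acquisition", "processing", "limitations")


@dataclass(frozen=True)
class ArchiveEntry:
    identifier: str  # persistent identifier: never changes
    location: str    # current storage location: may change over time
    metadata: dict
    sha256: str      # fixity value recorded at ingest


def ingest(identifier: str, location: str, metadata: dict, payload: bytes) -> ArchiveEntry:
    """Refuse datasets whose metadata is incomplete; record a checksum for later audits."""
    missing = [name for name in REQUIRED_FIELDS if not metadata.get(name)]
    if missing:
        raise ValueError(f"incomplete metadata, missing: {missing}")
    return ArchiveEntry(identifier, location, dict(metadata), hashlib.sha256(payload).hexdigest())


def migrate(entry: ArchiveEntry, new_location: str) -> ArchiveEntry:
    """Move to new storage: the location changes; identifier, metadata and checksum do not."""
    return ArchiveEntry(entry.identifier, new_location, entry.metadata, entry.sha256)


def fixity_ok(entry: ArchiveEntry, payload: bytes) -> bool:
    """Recompute the checksum (e.g. in a periodic audit) and compare it with the ingest value."""
    return hashlib.sha256(payload).hexdigest() == entry.sha256


data = b"calibrated spectrum (hypothetical payload)"
entry = ingest(
    "hypothetical-pid:0001",
    "/archive/disk-a/spectrum-0001",
    {"origin": "hypothetical probe, spectrometer B",
     "acquisition": "2-hour integration",
     "processing": "pipeline v3.2",
     "limitations": "stray-light contamination below 400 nm"},
    data,
)
moved = migrate(entry, "/archive/disk-b/spectrum-0001")
print(moved.identifier == entry.identifier, fixity_ok(moved, data), fixity_ok(moved, data + b"\x00"))
```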

Reproducibility, in this context, does not mean physically repeating the observed phenomenon, but being able to reconstruct the analytical process that led to a given result. Governance does not just manage the present; it ensures the future reuse of knowledge and preserves the ability to reinterpret information in the light of new scientific advances.
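Reproducibility in this sense, reconstructing the analytical process rather than the phenomenon, can be sketched as a recorded "recipe" replayed against the archived raw data. Everything here is hypothetical: two toy deterministic processing steps, step identifiers that embed a version number, and a manifest that stores fingerprints of the raw input and the final result. If replaying the manifest yields the same fingerprint, the published result has been reconstructed; a changed step or parameter shows up as a mismatch.

```python
import hashlib
import json
from typing import Callable


# Two toy, deterministic processing steps (hypothetical).
def subtract_background(data: list[float], level: float) -> list[float]:
    return [x - level for x in data]


def scale(data: list[float], gain: float) -> list[float]:
    return [x * gain for x in data]


# Registry of step implementations; each identifier embeds a version.
STEPS: dict[str, Callable[..., list[float]]] = {
    "subtract_background@1.0": subtract_background,
    "scale@1.0": scale,
}


def fingerprint(data: list[float]) -> str:
    return hashlib.sha256(json.dumps(data).encode()).hexdigest()


def run(raw: list[float], recipe: list) -> dict:
    """Apply each (step_id, params) pair in order and return a manifest of what was done."""
    data = raw
    for step_id, params in recipe:
        data = STEPS[step_id](data, **params)
    return {"raw": fingerprint(raw), "recipe": recipe, "result": fingerprint(data)}


def reproduce(raw: list[float], manifest: dict) -> bool:
    """Replay an archived manifest on archived raw data and compare fingerprints."""
    if fingerprint(raw) != manifest["raw"]:
        return False  # not the raw data that was originally processed
    return run(raw, manifest["recipe"])["result"] == manifest["result"]


raw = [120.0, 131.5, 118.2, 140.9]
recipe = [("subtract_background@1.0", {"level": 100.0}), ("scale@1.0", {"gain": 0.1})]
manifest = json.loads(json.dumps(run(raw, recipe)))  # as if read back from the archive years later
print(reproduce(raw, manifest))
```

Embedding the version in each step identifier is a deliberate choice in this sketch: if a step's implementation changes, it should receive a new identifier, so an old manifest either replays exactly or fails visibly instead of silently producing different numbers.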

Figure 2. Rules for capturing documented, traceable and auditable space data. Source: own elaboration - datos.gob.es.

Conclusion: Governing What We Can't Touch

The data of the universe forces us to rethink how we understand and manage information. We are working with realities that we cannot visit, touch or verify directly. We observe phenomena that occur at immense distances and in times that exceed the human scale, through highly specialized instruments that translate complex signals into interpretable data.

In this context, uncertainty is not a mistake or a weakness, but a natural feature of the study of the cosmos. The interpretation of data depends on scientific models that evolve over time, and quality is not based on direct verification, but on rigorous processes, well documented and reviewed by the scientific community. Trust, therefore, does not arise from direct experience, but from the transparency, traceability and clarity with which the methods used are explained.

Governing space data does not only mean storing it or making it available to the public. It means keeping all the information needed to understand how the data was obtained, how it was processed and under what assumptions it was interpreted. Only then can it be evaluated, reinterpreted and reused in the future.

Beyond Earth, data governance is not a technical detail or an administrative task. It is the foundation that sustains the credibility of human knowledge about the universe, and the basis that allows new generations to keep exploring what we cannot yet reach physically.

Content prepared by Mayte Toscano, Senior Consultant in technologies related to the data economy. The contents and viewpoints expressed in this publication are the sole responsibility of the author.