The Spanish Data Protection Agency (AEPD), through its own Innovation and Technology section, carries out an essential didactic task by providing a documentary corpus that translates the legal obligations of the General Data Protection Regulation (GDPR) into specific technological realities. Its value lies in its ability to offer legal certainty and technical guidelines in areas where regulations are still finding their practical fit, such as artificial intelligence or biometrics.

These are reference guides, articles and other teaching materials aimed especially at SMEs and entrepreneurs. In this post we present some of the most recent, ordered by sector and subject.

The new trends in artificial intelligence and its secure deployment

The evolution of artificial intelligence towards increasingly autonomous systems poses new challenges in terms of data protection. For this reason, the Spanish Data Protection Agency has developed various guides and documents aimed at facilitating a secure and responsible deployment of this technology. AI is one of the areas in which the AEPD publishes most actively, because of its cross-cutting impact. The Agency's resources range from internal management to state-of-the-art technologies.

- Guide to agentic artificial intelligence from the perspective of data protection: so-called agentic AI is AI capable of making decisions and acting with a certain degree of independence. Unlike purely reactive models, an agentic AI system can carry out multiple tasks autonomously and make intermediate decisions during complex processes. This guide discusses the risks of loss of human control and sets out criteria to ensure that decision traceability is not lost in automation.

- General policy for the use of generative AI in AEPD administrative processes: generative artificial intelligence (GenAI, or IAG by its Spanish acronym) is a type of AI capable of producing new content, such as text, images, audio or code, from learned patterns. This document establishes an internal policy for its responsible use in administrative processes.

- Implementation annex of the AEPD's general generative AI policy: this annex to the above document includes the permitted use cases, the type of systems recommended (external, internal or ad hoc), the level of risk associated with each application and the specific obligations of review, human control, security and data protection.

- Basic summary of obligations and recommendations for the management of generative AI: this is a synthesized outline on aspects of governance, design and development of use cases, processing of personal data and sensitive information, transparency and explainability, and responsible use of tools, among others.

- Federated Learning Report: federated learning is an AI approach that allows models to be trained collaboratively without centralizing data, which improves privacy and supports alignment with the GDPR. The report explains what it consists of, where personal data may be processed and what benefits and challenges it raises for data protection.
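To make the federated learning idea in the last item more concrete, here is a minimal sketch of federated averaging, written for this post and not taken from the AEPD report. It assumes three hypothetical clients that each hold private synthetic data for the same linear relationship (y = 2x + 1); only model parameters travel to the aggregator, never the raw data. All function names, the learning rate and the number of rounds are illustrative assumptions.

```python
import random


def local_update(model, data, lr=0.05, epochs=20):
    """Run a few gradient-descent steps on one client's private data."""
    w, b = model
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(((w * x + b) - y) * x for x, y in data) / n
        grad_b = sum(((w * x + b) - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)


def federated_average(updates, sizes):
    """Aggregate client models, weighting each one by its dataset size."""
    total = sum(sizes)
    w = sum(u[0] * s for u, s in zip(updates, sizes)) / total
    b = sum(u[1] * s for u, s in zip(updates, sizes)) / total
    return (w, b)


def make_client_data(rng, n, lo, hi):
    """Synthetic private data following y = 2x + 1 plus small noise (assumption)."""
    data = []
    for _ in range(n):
        x = rng.uniform(lo, hi)
        data.append((x, 2.0 * x + 1.0 + rng.gauss(0, 0.01)))
    return data


rng = random.Random(0)
# Each client sees a different slice of the input range and keeps it locally.
clients = [
    make_client_data(rng, 30, 0.0, 1.0),
    make_client_data(rng, 50, 1.0, 2.0),
    make_client_data(rng, 20, -1.0, 0.0),
]

global_model = (0.0, 0.0)
for _ in range(100):
    # Only the updated parameters leave each client, not the data points.
    updates = [local_update(global_model, data) for data in clients]
    global_model = federated_average(updates, [len(d) for d in clients])

print("global model (w, b):", global_model)
```

Note that the parameters exchanged in each round can still leak information about the underlying data, which is one reason the question of where personal data is processed remains relevant even in this setup.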

To complement this information, users can also visit the AEPD's blog, which serves as a trend observatory where the visible and invisible risks of consumer technologies are analyzed. Some of the topics covered are:

- Image and voice processing: Analyses have been published on AI voice transcription and the use of services that convert photos to other formats (such as animations). These articles warn about the processing of biometric data and the ownership of data in the cloud.

- Algorithmic literacy: resources such as "Addressing AI Misconceptions" seek to raise the level of critical judgment of users and managers in the face of the opacity of algorithms.

- Balance of rights: the analysis of the protection of minors in the digital environment and the design of public contracts that integrate privacy by design stands out.

European Digital Identity Wallet

The evolution towards an interconnected Europe requires robust identity standards and security measures accessible to all levels of business.

Building a secure, interoperable and trustworthy digital identity is one of the pillars of digital transformation in Europe. The future European Digital Identity Wallet is a project that aims to allow citizens to identify themselves electronically and share personal attributes in a controlled way across multiple services, both public and private.

To analyse its implications from the point of view of privacy, the Spanish Data Protection Agency has published a series of four monographic articles throughout 2025. In them, the Agency breaks down the relationship between the new digital identity wallet and the GDPR.

These contents address key issues such as:

- Data minimisation and the principle of proportionality in information exchange: explains how the eIDAS2 Regulation drives the European Digital Identity Wallet. This regulation establishes a framework for secure, interoperable and user-centric electronic identification, aligned with the GDPR to ensure the control and protection of personal data across the EU.

- The risks associated with interoperability between systems: delves into how to prevent the use of the European Digital Identity Wallet from tracking citizens when they present credentials in different public or private services, highlighting the need for advanced cryptographic solutions.

- The need to ensure user control over their credentials: examines identification threats in digital identity wallets under eIDAS2, highlighting that, without strong safeguards such as pseudonymization and unlinkability, even selective disclosure of data can allow for the improper identification and profiling of users.

- The security measures needed to prevent misuse or data breaches: examines the threats of inaccuracy in digital identity wallets under eIDAS2, highlighting how outdated data or linkable cryptographic mechanisms can lead to erroneous decisions and compromise privacy. To address this, it stresses the need for solutions that guarantee both reliability and plausible deniability (that is, that there is no technical evidence to prove that a person has carried out a specific action with their wallet or digital credential).
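The tension between selective disclosure and linkability described in the items above can be illustrated with a small sketch. It is not the wallet's actual protocol: it uses a salted-hash scheme written for this post, with an HMAC standing in for the issuer's digital signature (a real credential would use an asymmetric signature, so that verifiers never hold the issuer's key). All names and claim values are hypothetical.

```python
import hashlib
import hmac
import json
import secrets

# Hypothetical key; stands in for the issuer's signing key (assumption).
ISSUER_KEY = secrets.token_bytes(32)


def digest(salt, name, value):
    """Salted hash of a single claim, so undisclosed claims cannot be guessed."""
    return hashlib.sha256(json.dumps([salt, name, value]).encode()).hexdigest()


def authenticate(digests):
    """HMAC over the digest list; a placeholder for a real signature."""
    return hmac.new(ISSUER_KEY, json.dumps(digests).encode(), hashlib.sha256).hexdigest()


def issue(claims):
    """Issuer: salt and hash every claim, then authenticate the digest list."""
    disclosures = {name: (secrets.token_hex(16), value) for name, value in claims.items()}
    digests = sorted(digest(salt, name, value) for name, (salt, value) in disclosures.items())
    return {"digests": digests, "tag": authenticate(digests), "disclosures": disclosures}


def present(credential, reveal):
    """Holder: reveal only the selected claims (salt + value)."""
    return {
        "digests": credential["digests"],
        "tag": credential["tag"],
        "revealed": {name: credential["disclosures"][name] for name in reveal},
    }


def verify(presentation):
    """Verifier: check the issuer tag and that each revealed claim matches a digest."""
    if not hmac.compare_digest(authenticate(presentation["digests"]), presentation["tag"]):
        raise ValueError("credential was not authenticated by the issuer")
    verified = {}
    for name, (salt, value) in presentation["revealed"].items():
        if digest(salt, name, value) not in presentation["digests"]:
            raise ValueError(f"claim {name!r} does not match the credential")
        verified[name] = value
    return verified


credential = issue({"name": "Ana", "birth_year": 1990, "over_18": True})
to_service_a = present(credential, ["over_18"])
to_service_b = present(credential, ["over_18"])

print(verify(to_service_a))  # only the disclosed claim is visible to the verifier
# Both services receive the same tag, so they could correlate the two visits.
linkable = to_service_a["tag"] == to_service_b["tag"]
print("presentations linkable:", linkable)
```

In this sketch the user discloses only one attribute, yet the identical issuer tag acts as a stable identifier across services. That is the kind of linkage that more advanced cryptographic mechanisms aim to prevent.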

This series provides a progressive overview that helps to understand both the potential of European digital identity and the challenges posed by its implementation from a data protection perspective.

Encryption to protect personal data in SMEs

For many small and medium-sized businesses, ensuring the security of personal data remains a challenge, especially due to a lack of technical resources or specialized knowledge. In this context, encryption is presented as a fundamental tool to protect the confidentiality and integrity of information.

With the aim of bringing this concept closer to a non-expert audience, the Spanish Data Protection Agency has published the Encryption Guide for the self-employed and SMEs, accompanied by an explanatory infographic.

These resources explain in a clear and practical way:

- What is encryption and why is it important in data protection?

- What types of encryption exist and in which cases they are applied.

- How to implement encryption measures in common situations, such as sending emails or storing information.

- Which tools can be used without the need for advanced knowledge.

Scientific research and the European legal framework

For profiles that require a more in-depth and academic analysis, the Agency has promoted the publication of scientific articles in various international media, which connect technology with ethics and law. Some examples are:

- Addictive patterns: analysis of how interface design affects human behavior.

- Neurotechnology: study on the risks of brain-computer interfaces.

- Algorithmic governance: A comprehensive analysis that aligns the GDPR with the European Artificial Intelligence Regulation (AI Act), the Digital Services Act (DSA), and the Cyber Resilience Act.

The didactic value of these materials lies in their ability to offer a 360-degree view of data protection. From cutting-edge academic research to encryption infographics for a small business, the AEPD provides the building blocks for innovation that doesn't sacrifice privacy.

Together, these materials shared by the Spanish Data Protection Agency help to incorporate effective security measures and comply with the requirements of the General Data Protection Regulation in a proportionate and accessible way. All of them, and some others, are compiled and ordered by theme on its website, available here.

In recent years, artificial intelligence (AI) has gone from being a futuristic promise to becoming an everyday tool: today we live with language models, generative systems and algorithms capable of learning more and more tasks. But as their popularity grows, so does an essential question: how do we ensure that these technologies are truly reliable and trustworthy? Today we are going to explore that challenge with two invited experts in the field:

- David Escudero, director of the Artificial Intelligence Center of the University of Valladolid.

- José Luis Marín, senior consultant in strategy, innovation and digitalisation.

Listen to the full podcast (available in Spanish)

Summary / Transcript of the interview

1. Why is it necessary to know how artificial intelligences work and evaluate this behavior?

José Luis Marín: It is necessary for a very simple reason: when a system influences important decisions, it is not enough that it seems to work well in an eye-catching demo; we have to know when it gets it right, when it can fail and why. We are already in a phase in which AI is beginning to be applied to matters as delicate as medical diagnoses, the granting of public aid or citizen services in many scenarios. For example, if we ask ourselves whether we would trust a system that operates like a black box and decides whether we receive a grant, whether we are selected for an interview or whether we pass an exam, without being able to explain to us how that decision was made, the answer would surely be no. And not because the technology is better or worse, but simply because we need to understand what is behind the decisions that affect us.

David Escudero: Indeed, it is not so much a matter of understanding how algorithms work internally, how the logic or mathematics behind all these systems works, but of making users see that this type of system has degrees of reliability that have their limits, just like people. People can also make mistakes, they can fail at a certain time, but you have to give guarantees so that users can use these systems with a certain level of security. Providing metrics on the performance of these algorithms, and showing to what degree they are reliable, is critical.

2. A concept that arises when we talk about these issues is that of explainable artificial intelligence. How would you define this idea and why is it so relevant now?

David Escudero: Explainable AI is a technical term that arises from the need for a system not only to offer decisions, not only to say whether a certain file has to be classified one way or another, but also to give the reasons that lead it to that decision. It is about opening the black box. We talk about a black box because the user does not see how the algorithm works. The user does not need to, but explainability at least gives some clues as to why the algorithm has made one decision or another, which is extremely important. Imagine an algorithm that classifies files to refer them to one administration or another. If end users feel harmed, they need to know why, and they will ask for it; they can ask for it and they can demand it. And if from a technological point of view we are not able to provide that answer, artificial intelligence has a problem. In this sense, there are techniques that go beyond providing solutions and also state the reasons that lead an algorithm to make certain decisions.

José Luis Marín: I can't explain it much better than David has. What we are really looking for with explainable artificial intelligence is to understand the reason for the answers or decisions made by artificial intelligence algorithms. To simplify it a lot, I think we are really talking about applying the same standards as when those decisions are made by people, whom we also hold responsible for their decisions. We need to be able to explain why a decision has been made or what rules have been followed, so that we can trust those decisions.

3. How is this need for explainability and rigorous evaluation being addressed? Which methodologies or frameworks are gaining the most weight? And what is the role of open data in them?

José Luis Marín: This question has many dimensions. I would say that several layers are converging here. On the one hand, there are specific explainability techniques such as LIME (Local Interpretable Model-agnostic Explanations), SHAP (SHapley Additive exPlanations) and many others. I usually follow, for example, the catalogue of tools and metrics for trustworthy AI maintained by the OECD's AI Policy Observatory, because progress in the field is recorded quite well there. On the other hand, we have broader evaluation frameworks, which look not only at purely technical issues but also at biases, robustness, stability over time and regulatory compliance. There are different frameworks, such as the risk management framework of NIST (National Institute of Standards and Technology), the Government of Canada's algorithmic impact assessment or our own European AI Regulation. We are in a phase in which many public and private initiatives are emerging that will give us better and better tools.

David Escudero: For research, it is still a fairly open field. There are methodologies, indeed, but new models based on neural networks have opened up a huge challenge. The artificial intelligence developed in the years prior to the generative AI boom was, to a large extent, based on expert systems that accumulated many knowledge rules about the domain. In this type of technology, explainability came for free: since decisions were made by triggering a series of rules, tracing back the order in which the rules had been applied gave you an explanation. But now, with neural systems, especially large models with billions and billions of parameters, these approaches have become unfeasible, and other methodologies are applied. They are mainly based on knowing, when you train a machine learning model, which properties or attributes in the training lead it to make one decision or another; in other words, what weight each of the properties it uses carries.

For example, if you're using a machine learning system to decide whether to advertise a certain car to a group of potential customers, the system is trained on past experience. In the end, you are left with a neural model that is very difficult to look inside, but you can examine it by analyzing the weight of each of the input variables used to make that decision. For example, the person's income will be one of the most important attributes, but other variables may raise very important considerations, such as biases. Imagine that one of the most important variables is the person's gender. That leads to a series of delicate considerations. In other types of algorithms, for example those based on images, an explainable AI algorithm can tell you which part of the image was most relevant. Suppose, and I am talking about a hypothetical future scenario that would also be an extreme case, that you are using an algorithm to decide, based on the image of a person's face, whether that person is trustworthy or not. You could then look at which traits the artificial intelligence is paying most attention to, for example the eyes or the expression. This is what explainable AI provides today: knowing which variables, or which input data, carry the most weight when the algorithm makes decisions.

This brings me to another part of your question, about the importance of data. The quality of the training data is absolutely important. These explainable algorithms can even lead you to conclude that you need better-quality data: a surprising result may indicate that some training or input data is driving the outputs when it should not. Then you have to check your own input data. Having quality reference data, like the data you can find on datos.gob.es, is absolutely essential to be able to check the information that this type of system gives you.

José Luis Marín: I think open data is key in two dimensions. First, because it allows evaluations to be checked and replicated with greater independence. For example, when validation datasets are public, the system can be assessed not only by whoever builds it, but also by third parties (universities, administrations or civil society itself). That openness of evaluation data is very important for AI to be verifiable and much less opaque. Second, I believe that open data for training and evaluation also provides diversity and context. In any minority context we can think of, large systems, especially commercial ones, have surely not paid the same attention to these aspects. They have surely not been tested at the same level in minority contexts as in majority contexts, and hence many biases or poor performances appear. So open datasets can go a long way toward filling those gaps and correcting those problems.

I think that open data in explainable artificial intelligence fits very well, because deep down they share a very similar objective, related to transparency.
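As an illustration of the attribution idea discussed in this answer (knowing how much each input variable weighs in a decision), the following sketch computes exact Shapley values for a tiny scoring model. It is not the SHAP library and not a method described by the interviewees. The model, its three features and the baseline values are hypothetical, and the brute-force enumeration only works for a handful of features.

```python
from itertools import permutations


def score(income, age, prior_purchases):
    """Hypothetical model: propensity score for showing a car advertisement."""
    return 0.5 * income + 0.1 * age + 2.0 * prior_purchases


def shapley_values(model, instance, baseline):
    """Average each feature's marginal contribution over all feature orderings.

    Features not yet "switched on" keep their baseline value.
    """
    names = list(instance)
    contributions = {name: 0.0 for name in names}
    orderings = list(permutations(names))
    for ordering in orderings:
        current = dict(baseline)
        previous_output = model(**current)
        for name in ordering:
            current[name] = instance[name]
            output = model(**current)
            contributions[name] += output - previous_output
            previous_output = output
    return {name: total / len(orderings) for name, total in contributions.items()}


# Hypothetical customer and reference ("average") customer.
instance = {"income": 40.0, "age": 30.0, "prior_purchases": 2.0}
baseline = {"income": 30.0, "age": 40.0, "prior_purchases": 0.0}

attributions = shapley_values(score, instance, baseline)
for name, value in attributions.items():
    print(f"{name}: {value:+.2f}")
```

The attributions add up to the difference between the score for this customer and the score for the baseline, which makes them easy to read as "how much each variable pushed the decision". A large attribution for a sensitive attribute would be the kind of warning sign about bias mentioned in the interview.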

4. Another challenge we face is the rapid evolution of the artificial intelligence ecosystem. We started out talking about the popularity of chatbots and LLMs, but we are already moving towards agentic AI, systems capable of acting more autonomously. What do these systems consist of and what specific challenges do they pose from an ethical point of view?

David Escudero: Agentic AI seems to be the big topic of 2026. It is not such a new term, but if last year we were talking about AI agents, now we are talking about agentic AI as a new technology that coordinates different agents to solve more complex tasks. To simplify, if an agent helps you carry out a specific activity, for example booking a plane ticket, what agentic AI would do is plan the trip, compare different offers, book the flight, plan the outward journey, the stay and the return and, finally, evaluate the entire activity. What a system based on agentic AI does is coordinate different agents. There is also a nuance. When we talk about the word "agentic" (agéntica, which does not have a very direct translation in Spanish), we think of a system that takes the initiative. In the end, it is no longer just you, as a user, asking artificial intelligence for things; the AI is now capable of working out how to solve them. It will ask you for information when it needs it and will try to adapt to give you a final solution, but more or less autonomously, making decisions in intermediate processes.

Here precision and explainability are fundamental, because a very important challenge opens up again. If at any given moment one of the agents used by the agentic AI fails, errors can accumulate and the result ends up like the game of broken telephone. Information is passed from one system to another, from one agent to another, and if that information is not as accurate as it should be, the final solution can be catastrophic. New elements are thus introduced that make the problem even more exciting from a technological point of view. But we also have to understand that this is absolutely necessary, because in the end we have to move from systems that provide a very specific solution for a very particular case to systems that combine the output of different systems in order to be a little more ambitious in the response given to users.

José Luis Marín: Indeed. The moment we go from systems to which, in principle, we give the "ability to think" about the actions that should be taken and to tell us about them, to systems that in effect have hands to interact with the digital world (and we are beginning to see systems that even interact with the physical world) and can execute those actions rather than just recommending them, very interesting opportunities open up. But the complexity of evaluation also multiplies. The problem is no longer just whether the answer is right or wrong; it becomes who controls what the system does, what margin of decision it has, who supervises it and, above all, who is accountable if something goes wrong, because we are not only talking about recommendations but about actions that may not be easy to undo. This leads to new, or at least more intense, risks: traceability may be lost, actions may be executed that were not foreseen or that should not have occurred at a certain time, information may be misused, and many others. I believe that agentic AI requires even more governance and a much more careful design aligned with people's rights.
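The accumulation of errors across chained agents mentioned in this answer can be made tangible with a short calculation. This is a simplified sketch rather than a model used by the interviewees: it assumes that each agent succeeds independently with a fixed probability and that a single failure spoils the final result. The reliability figures are hypothetical.

```python
import math


def chain_success_probability(step_reliabilities):
    """Probability that every step in the chain succeeds (independence assumed)."""
    return math.prod(step_reliabilities)


# Six hypothetical agents (plan, compare, book, ...), each 95% reliable on its own.
steps = [0.95] * 6
end_to_end = chain_success_probability(steps)

print(f"per-step reliability: {steps[0]:.0%}")
print(f"end-to-end reliability over {len(steps)} steps: {end_to_end:.1%}")
```

Under these assumptions, a pipeline of six steps that each look highly reliable succeeds end to end only about 73.5% of the time, which is why evaluating individual agents in isolation is not enough.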

5. Let's talk about real applications. Where do you see the most potential and need for evaluation and explainability in the public sector?

José Luis Marín: I would say that the need for evaluation and explainability is greater where AI can influence decisions that affect people. The greater the impact on rights or opportunities, or even on trust in institutions, the greater this demand must be. Think, for example, of areas such as health, social services, employment or education. In all of them, logically, the need for evaluation in the public sector is unavoidable.

In all these cases, AI can be very useful in supporting decisions and achieving efficiencies in multiple scenarios. But we need to know very well how it behaves and what criteria are being used. This doesn't just affect the most complex systems. I think we also have to look at systems that at first glance may seem less sensitive, such as the virtual assistants that we are already starting to see in many administrations, or automatic translation systems. There the AI makes no final decision, but a bad recommendation or a wrong answer can also have consequences for people. In other words, I think it does not depend so much on technological complexity as on the context of use. In the public sector, even a seemingly simple system can have a lot of impact.

David Escudero: I'll throw down the gauntlet for another podcast on a concept that is also very fashionable: human in the loop and human on the loop. In the public sector we have a body of public officials who know their work very well and who can help. Human in the loop would be the role that civil servants can play in generating data that can be useful for training systems, checking that the data used to train systems is reliable, and so on. Human on the loop would be the supervision of the decisions that artificial intelligence can make. The person who can review a decision made by an automatic system, and who can know whether it is good or bad, is a public official.

In this sense, and also related to agentic AI, we have a project with the Spanish Foundation for Science and Technology to advise the Provincial Council of Valladolid on artificial intelligence tasks in the administration. And we see that many of the tasks that the civil servants themselves ask us about do not have so much to do with AI as with the interoperability of the automated services they already offer. An administration may have one service run by an automatic system next to another service that gives them a form with results, but then they have to type in by hand the data exchanged between the two services. There we would also be talking about possibilities for agentic AI to make those services communicate with each other. The challenge is to involve civil servants throughout this process, in their role as guarantors that public functions are carried out rigorously.

José Luis Marín: The concept of human in the loop is key in many of the projects we work on. In the end, it is the combination not only of technology, but also of people who really know the processes and can supervise them and complement the actions that agentic AI can perform. Even in a simple citizen-service system, such supervision is already necessary in many cases, because a bad recommendation can have many consequences; this is not limited to the actions of a complex system.

6. In closing, I'd like each of you to share a key idea about what we need to move towards a more trustworthy, assessable, and explainable AI.

David Escudero: I would point out, taking advantage of the fact that we are on the datos.gob.es podcast, the importance of data governance: to make sure that institutions, both public and private, are very concerned about the quality of the data, about having well-shared data that is representative, well documented and, of course, accessible. Data from public institutions is essential for citizens to have these guarantees and for companies and institutions to prepare algorithms that can use this information to improve services or provide guarantees to citizens. Data governance is critical.

José Luis Marín: If I had to summarise everything in a single idea, I would say that we are still a long way from assessment being a common practice. In AI systems we will have to make it mandatory within the development and deployment processes. Evaluating is not trying once and taking the result for granted; it is necessary to continuously check how and where systems can fail, what risks they introduce and whether they are still appropriate when the context for which a certain system was designed has changed. I think we are still far from this.

Indeed, open data is key to contributing to this process. An AI system is going to be more reliable the more we can observe it and improve it with shared criteria, not only with those of the organization that designs it. That is why open data provides transparency, can help us facilitate verification and builds a more solid basis so that services are really aligned with the general interest.

David Escudero Mancebo: In that sense, I would also like to thank spaces like this that undoubtedly serve to promote that culture of data, quality and evaluation that is so necessary in our society. I think a lot of progress has been made, but that, without a doubt, there is still a long way to go and opening spaces for dissemination is very important.

At the epicentre of global innovation that defines Mobile World Congress (MWC), a space has emerged where human talent takes centre stage: the Talent Arena.

The 2026 edition, promoted by Mobile World Capital Barcelona, brought together professionals, technology companies, training centres and emerging talent between 2 and 4 March with a common goal: to learn, connect and explore new opportunities in the digital field. At this event, Red.es actively participated with several sessions focused on one of the great current challenges: how to promote digital transformation through talent, training and innovation. Among them was the workshop "Open Data in Spain. From theory to practice with datos.gob.es", a session that focused on the strategic role of open data and its connection with emerging technologies such as artificial intelligence.

In this post we review the contents of the presentation that combined:

- A didactic look at the evolution, current state and future of open data in Spain

- A hands-on workshop on creating a conversational agent with MCP

What is open data? Evolution and milestones

The session began by establishing a fundamental pillar: the importance of open data in today's ecosystem. Beyond their technical definition – data that can be freely used, reused and shared by anyone, for any purpose – the talk underscored that their true power lies in the transformative impact they generate.

As addressed in the workshop, this data comes from multiple sources (public administrations, universities, companies and even citizens) and its openness allows:

- Promote institutional transparency, by facilitating access to public information.

- Encourage innovation, by enabling developers and businesses to create new services.

- Generate economic and social value, from the reuse of information in multiple sectors, such as health, education or the environment.

One of the key aspects of the workshop was to contextualize the historical evolution of open data. Although the first antecedents date back to the 1950s and 1960s, the modern concept of "open data" began to take shape in the 1990s. Subsequently, milestones such as the Memorandum on Transparency and Open Government (2007-2009) or the creation of the Open Government Partnership in 2011 marked a turning point at the international level.

In Spain, this development has been supported by a solid regulatory framework, such as Law 37/2007, which establishes key principles:

- Default opening of public data, especially high-value data.

- Creation of interoperable catalogs.

- Promotion of the reuse of information.

- Establishment of units responsible for data management.

The role of datos.gob.es: the national open data portal

At the heart of this ecosystem is datos.gob.es, the national open data portal, which acts as a unified access point to the public information available in Spain.

During the workshop, it was explained how this platform has evolved over time: from a few hundred datasets to hosting more than 100,000 today. It has also been incorporating new functionalities and adapting to international standards such as DCAT-AP and its national adaptation DCAT-AP-ES. These standards allow metadata to be structured in an interoperable way, facilitating integration between different catalogs.

Check here the Practical Guide to Implementing DCAT-AP-ES step by step
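To give an idea of what interoperable metadata looks like, the following is a minimal sketch of a dataset description using DCAT and Dublin Core terms, serialized as JSON-LD from Python. It is only an illustration: the URIs under example.org are hypothetical, the record is not a complete DCAT-AP-ES description, and the small set of properties checked at the end is an assumption made for this example. The guide linked above remains the reference for what the profile actually requires.

```python
import json

# Hypothetical dataset record using DCAT (dcat:) and Dublin Core (dct:) terms.
dataset = {
    "@context": {
        "dcat": "http://www.w3.org/ns/dcat#",
        "dct": "http://purl.org/dc/terms/",
    },
    "@id": "https://example.org/catalog/dataset/air-quality",
    "@type": "dcat:Dataset",
    "dct:title": [
        {"@value": "Calidad del aire", "@language": "es"},
        {"@value": "Air quality", "@language": "en"},
    ],
    "dct:description": [
        {"@value": "Hourly air quality measurements.", "@language": "en"},
    ],
    "dct:publisher": {"@id": "https://example.org/organization/city-council"},
    "dcat:theme": [{"@id": "https://example.org/themes/environment"}],
    "dcat:distribution": [
        {
            "@type": "dcat:Distribution",
            "dcat:accessURL": {"@id": "https://example.org/data/air-quality.csv"},
            "dct:format": "CSV",
        }
    ],
}

# Properties checked here are an illustrative subset (assumption), not the full profile.
REQUIRED_DATASET_PROPERTIES = ("dct:title", "dct:description")


def missing_properties(record):
    """List the illustrative required properties that are absent or empty."""
    missing = [p for p in REQUIRED_DATASET_PROPERTIES if not record.get(p)]
    for index, distribution in enumerate(record.get("dcat:distribution", [])):
        if not distribution.get("dcat:accessURL"):
            missing.append(f"dcat:distribution[{index}].dcat:accessURL")
    return missing


print(json.dumps(dataset, indent=2, ensure_ascii=False))
print("missing properties:", missing_properties(dataset))
```

Because every catalog describes its datasets with the same vocabulary, a harvester can read records like this one from different publishers without custom code for each source.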

In addition, the data federation process in datos.gob.es was detailed, which ensures that data from different sources can be integrated in a consistent and accessible way.

Despite the progress, the presentation also addressed the remaining challenges:

- Data quality and updating.

- Standardization and interoperability.

- Security and access control, especially in AI-connected environments.

- Training of users, both technical and non-technical.

Figure 1. Photo taken during the Talent Arena presentation at the Mobile World Congress. The photo shows the slide from the presentation explaining the concept of open data. Source: own elaboration - datos.gob.es.

From data to intelligence: the leap to AI

One of the most innovative elements of the workshop was its practical approach, focused on the application of artificial intelligence to open data. This is where the Model Context Protocol (MCP) came into play: an open standard for connecting large language models (LLMs) with external data sources in real time.

The starting problem the workshop addressed is that AI models, on their own, do not have up-to-date access to information or external systems, which limits their usefulness in real contexts. One solution is to develop an MCP server that acts as a "bridge" between the model and data sources, enabling:

- Access up-to-date information.

- Execute actions on external systems.

- Integrate multiple data sources securely.

In simple words, it is about connecting the "brain" (the AI model) with the "tools" (databases, APIs, internal systems).

The exercise, which took place live in the Talent Arena, began with a simple example: creating a database of film preferences and developing an MCP that would allow it to be consulted using natural language.

From there, key concepts were introduced:

- Intent identification by the model.

- Function calling.

- Generation of natural language responses from structured data.

This approach allows us to abstract the technical complexity and bring the use of data closer to non-specialized profiles.

The next step was to apply this same approach to the datos.gob.es catalog, which is possible through its API. The API allows you to search for datasets by title and filter by topic, to obtain detailed information about a dataset, and to access catalog statistics.

The MCP developed in the workshop acted as an intermediary between the AI model and this API, allowing complex queries to be made using natural language.

This exercise combined a local database (SQLite) and the consumption of external data through an API, all integrated through an MCP server that allowed these functionalities to be exposed as accessible tools. The goal was to understand how to structure data, query it, and make it available to other AI systems or models in an organized way.
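The pattern described above (a local SQLite database whose queries are exposed as tools that a model can call) can be sketched with the standard library alone. This is a hypothetical illustration, not the workshop's notebook and not the MCP SDK: the `preferences` table, the `films_by_genre` tool and the `dispatch` helper are assumed names, and the model's tool call is written by hand.

```python
import json
import sqlite3

# In-memory database standing in for a film-preferences store.
# Table and column names are hypothetical.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE preferences (person TEXT, film TEXT, genre TEXT)")
conn.executemany(
    "INSERT INTO preferences VALUES (?, ?, ?)",
    [
        ("Ana", "Alien", "sci-fi"),
        ("Luis", "Amelie", "comedy"),
        ("Ana", "Arrival", "sci-fi"),
    ],
)


def films_by_genre(genre: str) -> list[str]:
    """Tool: return film titles for a genre, using a parameterized query."""
    rows = conn.execute(
        "SELECT film FROM preferences WHERE genre = ? ORDER BY film", (genre,)
    )
    return [film for (film,) in rows]


# Registry of the tools a model is allowed to call, keyed by tool name.
TOOLS = {"films_by_genre": films_by_genre}


def dispatch(tool_call_json: str) -> str:
    """Run a tool call shaped like {"name": ..., "arguments": {...}}."""
    call = json.loads(tool_call_json)
    tool = TOOLS.get(call["name"])
    if tool is None:
        return json.dumps({"error": f"unknown tool: {call['name']}"})
    return json.dumps({"result": tool(**call["arguments"])})


# A model that has identified the user's intent would emit a call like this one.
print(dispatch('{"name": "films_by_genre", "arguments": {"genre": "sci-fi"}}'))
```

The structured result would then be handed back to the model, which turns it into a natural language answer. A real MCP server adds the protocol layer (tool discovery, transport, schemas) on top of this same idea.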

The full code is available as an attachment to this post in Python Notebook format.

This exercise is a sign of the enormous opportunities before us. The combination of open data and artificial intelligence can:

- Democratize access to information.

- Accelerate innovation.

- Improve decision-making in the public and private sectors.

In summary, the workshop "Open Data in Spain. From theory to practice with datos.gob.es" highlighted a fundamental idea: data, by itself, does not generate value. It is its use, interpretation and combination with other technologies that allows it to be transformed into knowledge and real solutions.

The evolution of open data in Spain shows that much progress has been made in recent years. However, the real potential is yet to be exploited, especially in its integration with technologies such as artificial intelligence. Events like Talent Arena 2026 serve precisely that purpose: connecting ideas, sharing knowledge, and exploring new ways of doing things.

There's an idea that is repeated in almost any data initiative: "if we connect different sources, we'll get more value". And it is usually true. The nuance is that value appears when we can combine data without friction, without misunderstandings and without surprises. The Public Sector Data Reuser's Decalogue sums it up nicely: interoperability is especially critical precisely when we try to mix data from a variety of sources, which is where open data tends to add the most value.

In practice, interoperability is not just "that there is an API" or "that the file is downloadable". It is a broader concept, with several layers: if we only take care of one, the others end up breaking the reuse. We connect... but we don't understand what each field means. We understand... but there is no stability or versioning. There is stability... but there is no common process for resolving incidents. And, even with all of the above, clear rules of use may be lacking. For this reason, it is also a mistake to think that interoperability is a purely IT problem that can be fixed by "buying the right software": technology is only the tip of the iceberg. If we want data to truly flow between public administrations, businesses and research centres, we need a holistic vision.

And here is the good news: it can be tackled incrementally, step by step. To do it well, the first thing is to clarify what type of interoperability we are looking for in each case, because not all barriers are technical or solved in the same way.

In this post we are going to break down the different types of interoperability, to identify what each one brings and what fails when we leave it out.

The different types of interoperability

Following the European Interoperability Framework (EIF), it is convenient to think of interoperability as a building with four main layers: technical, semantic, organisational and legal. If one fails, the whole suffers.

Below, we bring the four layers together with a data-centric approach, including examples applied to different sectors.

1. Technical interoperability: systems can exchange data

It is the "visible" layer: infrastructures, protocols and mechanisms to reliably send/receive data.

But what does it mean in practice?

- Machine-readable formats: such as CSV, JSON, XML, RDF, avoiding formats that are only human-readable (such as PDF).

- Stable APIs and endpoints: with documentation, authentication when applicable, and versioning.

- Non-functional requirements: availability, performance, security and technical traceability.

What are the typical errors or failures that generate problems?

In the specific case of technical interoperability, these issues mainly arise from "silent" changes (for example, columns or structure being altered and breaking integrations), non-persistent URLs, APIs without versioning, or missing documentation.

Example: let's ground it in a specific case in the mobility domain

Let's imagine that a city council publishes parking occupancy in real time. If the API changes the name of a field or the endpoint without warning, navigation apps stop showing available spaces, even if "the data exists". The problem is technical: there is a lack of stability, versioning, and an interface contract.
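To make the idea of an interface contract concrete, here is a minimal sketch of a check a reuser could run against each record before using it. The field names (`parking_id`, `free_spaces`, `updated_at`) and the contract itself are assumptions made for illustration; they do not come from any real municipal API.

```python
# Hypothetical contract for one parking-occupancy record: field name -> type.
EXPECTED_FIELDS = {"parking_id": str, "free_spaces": int, "updated_at": str}


def contract_violations(record: dict) -> list[str]:
    """Return human-readable differences between a record and the contract."""
    problems = []
    for name, expected_type in EXPECTED_FIELDS.items():
        if name not in record:
            problems.append(f"missing field: {name}")
        elif not isinstance(record[name], expected_type):
            problems.append(
                f"wrong type for {name}: {type(record[name]).__name__}"
            )
    # Fields the contract does not know about often signal a silent rename.
    for name in record:
        if name not in EXPECTED_FIELDS:
            problems.append(f"unexpected field: {name}")
    return problems


ok = {"parking_id": "P-01", "free_spaces": 42, "updated_at": "2026-03-02T10:00:00Z"}
renamed = {"parking_id": "P-01", "available": 42, "updated_at": "2026-03-02T10:00:00Z"}

print(contract_violations(ok))       # no differences
print(contract_violations(renamed))  # the rename shows up as two findings
```

A check like this does not prevent the breaking change, but it turns a silent failure into an explicit one that can be reported to the publisher.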

2. Semantic interoperability: they also understand each other

If technical interoperability is "the pipes", semantics is the language. We can have perfectly connected systems and still get disastrous results if each part interprets the data differently.

But what does it mean in practice?

- Glossaries of clear terms: definition of each field, unit, format, range, business rules, granularity, and examples.

- Controlled vocabularies, taxonomies, and ontologies for unambiguous classification and encoding of values.

- Unique identifiers and standardised references through reference data with official codes, common catalogues, etc.

What are the typical errors or failures that generate problems?

These issues usually arise from ambiguity (for example, if a field only says "date", we don't know whether it refers to the registration date, publication date, or effective date), unknown or different units (kWh vs MWh, euros vs thousands of euros), incompatible codes (M/F vs 1/2 vs male/female) or even unexplained changes of meaning in historical series.

Example: let's ground it in a specific case in the energy sector

An administration publishes data on electricity consumption by building. A reuser combines this data with another regional dataset, but one is in kWh and the other in MWh, or one measures "final" consumption and the other "gross". The join "fits" technically, but the conclusions go wrong because semantics are missing: shared definitions and units.
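The unit mismatch in this example can be made visible with a small sketch. It assumes each dataset declares its unit alongside the value (which is exactly what the semantic layer should guarantee); the building identifier and the figures are invented for illustration.

```python
# Conversion factors to a common unit (kWh). 1 MWh = 1000 kWh.
TO_KWH = {"kWh": 1.0, "MWh": 1000.0}


def to_kwh(value: float, unit: str) -> float:
    """Convert an energy value to kWh, refusing units we do not know."""
    if unit not in TO_KWH:
        raise ValueError(f"unknown unit: {unit!r}")
    return value * TO_KWH[unit]


# Two hypothetical sources describing the same building in different units.
municipal = {"B-001": (12500.0, "kWh")}
regional = {"B-001": (12.5, "MWh")}

for building_id in municipal.keys() & regional.keys():
    raw_a, raw_b = municipal[building_id][0], regional[building_id][0]
    norm_a = to_kwh(*municipal[building_id])
    norm_b = to_kwh(*regional[building_id])
    print(building_id, "raw values equal:", raw_a == raw_b)
    print(building_id, "normalized values equal:", norm_a == norm_b)
```

Compared as raw numbers, the two sources appear to disagree by a factor of a thousand; once normalized, they match. Failing loudly on an undeclared unit is a deliberate choice here: guessing is how the wrong conclusions described above get produced.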

3. Organisational interoperability: processes must maintain consistency

Here we talk less about systems and more about people, responsibilities and processes. Data doesn't stand on its own: it's published, updated, corrected, and explained because there's an organization behind it that makes it possible.

But what does it mean in practice?

-

Clear roles and responsibilities: who defines, who validates, who publishes, who maintains and who responds to incidents.

-

Change management: what is a major/minor change, how it is versioned, how it is communicated, and whether the history is preserved.

-

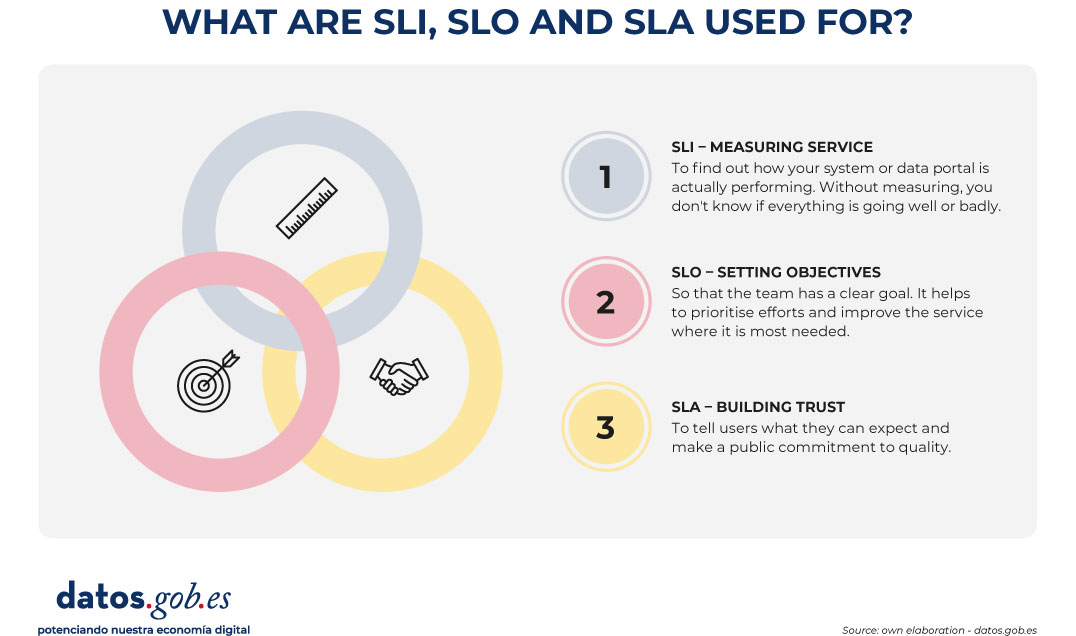

Incident management: single channel, response times, prioritization, traceability and closure.

-

Operational commitments (such as service level agreements or SLAs): update frequency, maintenance windows, quality criteria and periodic reviews.

Here, for example, the UNE specifications on data governance and management can help: they set out the keys to establishing organisational models, roles, management processes and continuous improvement. They fit precisely into this layer, because they help to ensure that publishing and sharing data does not depend on the "heroic effort" of a team, but on a stable way of working in which the team matures.

What are the typical errors or failures that generate problems?

The classics: "each unit publishes in its own way", there is no clear owner, there is no process for correcting errors, data is updated without warning, the history is not preserved, or reuser feedback is lost in a generic mailbox without tracking.

Example: let's ground it in a specific case in the environmental domain

A confederation publishes water quality data and several units provide measurements. Without a common validation process, a coordinated schedule, and an incident channel, the dataset begins to have inconsistent values, gaps, and late corrections. The problem is not the API or the format: it is organizational, because maintenance is not governed.

4. Legal interoperability: the exchange must be viable and compliant

This is the layer that makes the exchange secure and scalable. You can have perfect data at a technical, semantic and organizational level... and still be unable to reuse it if there is no legal clarity.

But what does it mean in practice?

- Clear license and terms of use: attribution, redistribution, commercial use, obligations, etc.

- Compatibility between licenses when mixing sources: avoiding unfeasible combinations.

- Regulatory compliance: data protection (such as the General Data Protection Regulation, GDPR), intellectual property, trade secrets or sector-specific restrictions.

- Explicit rules on what can and cannot be done, and under what requirements.

What are the typical errors or failures that generate problems?

The classic "jungle": absent or ambiguous licenses, contradictory conditions between datasets, doubts about whether there is personal data or risk of re-identification, or restrictions that are discovered when the project is already advanced.

Example: let's ground it in a specific case in culture and heritage

A public archive publishes images and metadata from a collection. Technically everything is fine, and the metadata is rich, but the license is confusing or incompatible with other data that you want to combine (for example, a private repository with restrictions). Result: a company or a university decides not to reuse the data due to legal uncertainty. The blocker is not technical: it is legal.

In short, interoperability works as a "pack" of four layers: connect (technical), understand the same thing (semantic), maintain it in a sustained way (organizational) and be able to reuse without risk (legal).

For a quick overview with real-world examples, the following infographic summarizes how each layer is implemented across different sectors (standards, models, practices, and regulatory frameworks) and which components are typically used as references in each case.

Figure 1. Infographic: “Interoperability: the key to working with data from diverse sources”. An accessible version is available here. Source: own elaboration - datos.gob.es.

The infographic above conveys a clear idea: interoperability does not depend on a single decision, but on combining standards, agreements and rules that change according to the sector. From here, it makes sense to go down one level and see what references and tools are used in Spain and in Europe so that these four layers (technical, semantic, organisational and legal) do not remain in theory.

A practical reference in Spain: NTI-RISP (and why it makes sense to cite it)

In the Spanish context, the NTI‑RISP is a very useful guide because it clearly lays out what needs to be taken care of when publishing information so that others can reuse it: identification, description (metadata), formats, and terms of use, among other aspects.

Metadata as glue: DCAT-AP and DCAT-AP-ES

In open data, the place where interoperability is most noticeable in everyday practice is in catalogs: if datasets are not described consistently, they become harder to find, understand, and federate.

- DCAT-AP provides a common metadata model for data catalogues in Europe, based on widely reused vocabularies.

- In Spain, DCAT-AP-ES is promoted precisely to reinforce the interoperability of catalogues with a common profile that facilitates exchange and federation between portals.
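As a rough illustration of what "describing a dataset consistently" looks like, the sketch below builds a small dataset description as JSON-LD using terms from the DCAT and Dublin Core vocabularies, and checks a handful of properties. The URLs under `example.org` are placeholders, and the short list of properties treated as required here is a simplifying assumption: the authoritative mandatory and recommended properties are those defined in the DCAT-AP and DCAT-AP-ES specifications.

```python
import json

# Hypothetical dataset description using DCAT and Dublin Core terms.
dataset = {
    "@context": {
        "dcat": "http://www.w3.org/ns/dcat#",
        "dct": "http://purl.org/dc/terms/",
    },
    "@id": "https://example.org/dataset/parking-occupancy",
    "@type": "dcat:Dataset",
    "dct:title": {"@value": "Parking occupancy", "@language": "en"},
    "dct:description": {
        "@value": "Free spaces per public car park, updated in real time.",
        "@language": "en",
    },
    "dcat:keyword": ["parking", "mobility"],
    "dcat:distribution": [
        {
            "@type": "dcat:Distribution",
            "dcat:accessURL": {"@id": "https://example.org/api/v1/parking"},
            "dct:license": {"@id": "https://creativecommons.org/licenses/by/4.0/"},
        }
    ],
}

# Properties this sketch treats as required (an assumption, see lead-in).
REQUIRED_DATASET = ("dct:title", "dct:description")
REQUIRED_DISTRIBUTION = ("dcat:accessURL",)


def missing_properties(ds: dict) -> list[str]:
    """List required properties that are absent or empty in the description."""
    missing = [p for p in REQUIRED_DATASET if not ds.get(p)]
    for i, dist in enumerate(ds.get("dcat:distribution", [])):
        missing += [
            f"dcat:distribution[{i}].{p}"
            for p in REQUIRED_DISTRIBUTION
            if not dist.get(p)
        ]
    return missing


print(json.dumps(dataset, indent=2)[:200], "...")
print("missing:", missing_properties(dataset))
```

Because every catalog that follows the same profile uses the same property names, a harvester can run checks like this one across portals without custom code per source.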

How to approach interoperability without overreaching

Rather than "fixing it all at once," it often works better to treat interoperability as continuous improvement because it breaks down with changes in technology, organization, or regulation. A simple and realistic approach:

- Start with the "why": do you want to integrate into a service, combine for analysis, build comparable indicators, enrich entities...? The objective determines the level of rigor required.

- Ensure a minimum level of stability: machine-readable access and formats, persistent identifiers, minimal documentation, and some versioning (even if it is basic). This prevents "useful today" datasets that break tomorrow.

- Apply semantics where it hurts (Pareto principle, or 80/20: roughly 80% of the results come from 20% of the causes): define very precisely the critical fields (those used to join or filter), units, code tables and the exact meaning of dates and states. You don't need to "model it all" to reduce most errors.

- Establish minimum operating agreements: who maintains, when it is updated, how incidents are reported, how changes are announced, and whether the history is preserved. This is where a data governance approach (and guidelines like NTI-RISP) makes the difference between "published dataset" and "sustainable dataset".

- Pilot with a real crossover: a small pilot quickly detects whether the problem was technical, semantic, organizational or legal, and gives you a specific list of frictions to eliminate.

In conclusion, interoperability is not simply "having an API": it is the result of aligning four layers – technical, semantic, organizational and legal – to be able to combine data without friction, without misunderstandings and with security. Each layer solves a different problem: the technical one avoids integration breaks, the semantic one avoids misinterpretations, the organizational one makes publication and maintenance sustainable over time, and the legal one eliminates the uncertainty about what can be done with the data.

In this context, sectoral frameworks and standards act as practical shortcuts to accelerate agreements and reduce ambiguity, and that is why it is useful to see examples by sector. In addition, interoperable metadata and catalogs are a real multiplier: when a dataset is well described, it is found more quickly, better understood, and can be federated at lower cost. Finally, an incremental and measurable approach is usually most effective: start with the "why", ensure technical stability, reinforce critical semantics (80/20), formalize minimum operational agreements and validate with a real crossover, instead of trying to "solve interoperability" as a single closed project.

Content created by Dr. Fernando Gualo, Professor at UCLM and Government and Data Quality Consultant. The content and views expressed in this publication are the sole responsibility of the author.

"I'm going to upload a CSV file for you. I want you to analyze it and summarize the most relevant conclusions you can draw from the data". A few years ago, data analysis was the territory of those who knew how to write code and use complex technical environments, and such a request would have required programming or advanced Excel skills. Today, being able to analyse data files in a short time with AI tools gives us great professional autonomy. Asking questions, contrasting preliminary ideas and exploring information first-hand changes our relationship with knowledge, especially because we stop depending on intermediaries to obtain answers. Gaining the ability to analyze data with AI independently speeds up processes, but it can also cause us to become overconfident in conclusions.

Based on the example of a raw data file, we are going to review possibilities, precautions and basic guidelines to explore the information without assuming conclusions too quickly.

The file:

To show an example of data analysis with AI we will use a file from the National Institute of Statistics (INE) that collects information on tourist flows in Europe, specifically on occupancy in rural tourism accommodation. The data file contains information from January 2001 to December 2025. It contains disaggregations by sex, age and autonomous community or city, which allows comparative analyses to be carried out over time. At the time of writing, the last update to this dataset was on January 28, 2026.

Figure 1. Dataset information. Source: National Institute of Statistics (INE).

1. Initial exploration

For this first exploration we are going to use a free version of Claude, the AI chat assistant developed by Anthropic. Its models rank among the most advanced in reasoning and analysis benchmarks, which makes it well suited to this exercise, and it is widely used by the community for tasks that involve code.

Let's think that we are facing the data file for the first time. We know in broad strokes what it contains, but we do not know the structure of the information. Our first prompt, therefore, should focus on describing it:

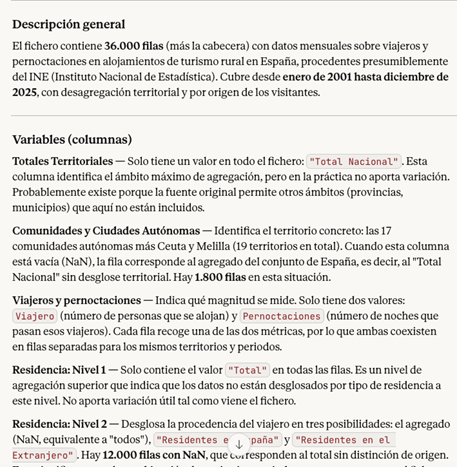

PROMPT: I want to work with a data file on occupancy in rural tourism accommodation. Explain to me what structure the file has: what variables it contains, what each one measures and what possible relationships exist between them. Also point out possible missing values or elements that require clarification.

Figure 2. Initial exploration of the data file with Claude. Source: Claude.

Once Claude has given us the general idea and explanation of the variables, it is good practice to open the file and do a quick check. The objective is to verify that, at a minimum, the number of rows, the number of columns, the names of the variables, the time period and the type of data match what the model has told us.

If we detect any errors at this point, the LLM may not be reading the data correctly. If the error persists after trying in another conversation, it is a sign that something in the file makes it difficult to read automatically. In this case, it is best not to continue with the analysis: the conclusions may look convincing, but they will be based on misread data.
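This quick check does not have to be done by eye. The sketch below profiles a CSV with Python's standard library so the figures can be compared with what the model reported. The sample content, the semicolon delimiter and the column names (`community`, `period`, `total`) are assumptions for illustration and do not reproduce the actual INE file.

```python
import csv
import io

# Tiny stand-in for the real file; values are invented.
SAMPLE = """community;period;total
Asturias;2025M11;1200
Asturias;2025M12;1350
Ceuta;2025M12;
"""


def profile(text: str, delimiter: str = ";") -> dict:
    """Summarize row count, column names and the period range of a CSV."""
    reader = csv.DictReader(io.StringIO(text), delimiter=delimiter)
    rows = list(reader)
    periods = sorted(row["period"] for row in rows)
    return {
        "rows": len(rows),
        "columns": reader.fieldnames,
        "first_period": periods[0],
        "last_period": periods[-1],
    }


print(profile(SAMPLE))
```

With the real file, the same function would be fed the text read from disk. If the row count or the period range differs from the model's description, that is the signal to stop and investigate.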

2. Anomaly management

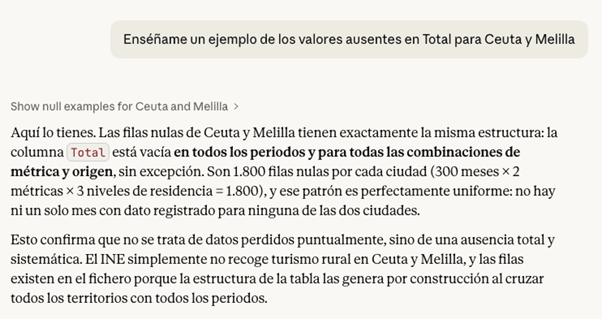

Second, if we have discovered anomalies, it is good practice to document them and decide how to handle them before proceeding with the analysis. We can ask the model to suggest what to do, but the final decisions will be ours. For example:

- Missing values: if there are empty cells, we need to decide whether to fill them with an "average" value from the column or simply delete those rows.

- Duplicates: we have to eliminate repeated rows or rows that do not provide new information.

- Formatting errors or inconsistencies: we must correct these so that the variables are coherent and comparable. For example, dates represented in different formats.

- Outliers: if a number appears that does not make sense or is wildly different from the rest, we have to decide whether to correct it, exclude it or keep it as is.
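Two of the anomalies listed above, missing values and duplicate rows, are easy to locate programmatically before deciding what to do with them. The following is a minimal sketch using only the standard library; the sample data and column names are invented, and it deliberately only reports anomalies rather than fixing them, since that decision stays with the analyst.

```python
import csv
import io
from collections import Counter

# Invented sample with one duplicated row and one empty value.
SAMPLE = """community;period;total
Asturias;2025M11;1200
Asturias;2025M11;1200
Ceuta;2025M11;
Navarra;2025M11;980
"""


def find_anomalies(text: str, value_column: str = "total", delimiter: str = ";") -> dict:
    """Report indexes of rows with a missing value and rows that repeat."""
    rows = list(csv.DictReader(io.StringIO(text), delimiter=delimiter))
    missing = [
        i for i, row in enumerate(rows)
        if not (row[value_column] or "").strip()
    ]
    # Rows are dicts of strings, so their items can be used as hashable keys.
    counts = Counter(tuple(row.items()) for row in rows)
    duplicates = [dict(key) for key, n in counts.items() if n > 1]
    return {"missing_rows": missing, "duplicate_rows": duplicates}


print(find_anomalies(SAMPLE))
```

Having the list of affected rows written down is also a simple way to document the anomalies, whatever handling is chosen afterwards.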

Figure 3. Example of missing values analysis with Claude. Source: Claude.

In the case of our file, for example, we have detected that in Ceuta and Melilla the missing values in the Total variable are structural: there is no rural tourism registered in these cities, so we could exclude them from the analysis.

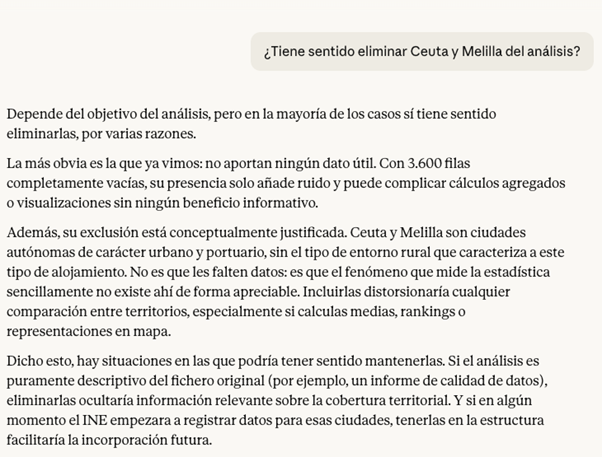

Before making the decision, a good practice at this point is to ask the LLM for the pros and cons of modifying the data. The answer can give us some clue as to which is the best option, or indicate some inconvenience that we had not taken into account.

Figure 4. Claude's analysis of whether or not to remove the values. Source: Claude.

If we decide to go ahead and exclude the cities of Ceuta and Melilla from the analysis, Claude can help us make this modification directly on the file. The prompt would be as follows:

PROMPT: Remove all rows corresponding to Ceuta and Melilla from the file, so that the rest of the data remains intact. Also explain the steps you're following so I can review them.

Figure 5. Step by step in the modification of data in Claude. Source: Claude.

At this point, Claude offers the modified file for download, so it is good practice to validate manually that the operation was done correctly. For example, compare the number of rows in both files, or check a few random rows against the original file to make sure that the data has not been corrupted.
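The validation described above can also be automated: the modified file should contain exactly the original rows minus the excluded communities, in the same order and with unchanged values. This sketch assumes hypothetical column names and invented sample content; with real files, the two texts would be read from the original and the downloaded file.

```python
import csv
import io

EXCLUDED = {"Ceuta", "Melilla"}

# Invented stand-ins for the original file and the one returned by the model.
ORIGINAL = """community;period;total
Asturias;2025M12;1350
Ceuta;2025M12;
Navarra;2025M12;980
Melilla;2025M12;
"""
MODIFIED = """community;period;total
Asturias;2025M12;1350
Navarra;2025M12;980
"""


def read_rows(text: str, delimiter: str = ";") -> list[dict]:
    """Parse CSV text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(text), delimiter=delimiter))


def removal_is_clean(original: list[dict], modified: list[dict], excluded: set) -> bool:
    """True if `modified` equals `original` with only the excluded rows dropped."""
    expected = [row for row in original if row["community"] not in excluded]
    return modified == expected


original_rows = read_rows(ORIGINAL)
modified_rows = read_rows(MODIFIED)
print("rows before:", len(original_rows), "rows after:", len(modified_rows))
print("removal is clean:", removal_is_clean(original_rows, modified_rows, EXCLUDED))
```

Unlike a spot check of a few random rows, this comparison covers every remaining row, so an accidental change to an unrelated value would also be caught.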

3. First questions and visualizations

If the result so far is satisfactory, we can already start exploring the data to ask ourselves initial questions and look for interesting patterns. The ideal when starting the exploration is to ask big, clear and easy to answer questions with the data, because they give us a first vision.

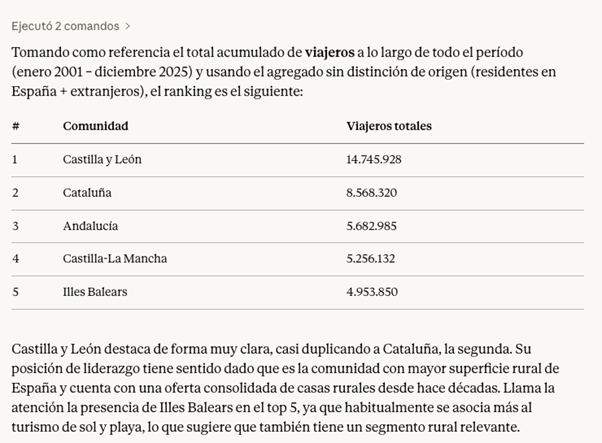

PROMPT: Work with the file without Ceuta and Melilla from now on. Which have been the five communities with the most rural tourism in the total period?

Figure 6. Claude's response to the five communities with the most rural tourism in the period. Source: Claude.
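An answer like this one is straightforward to cross-check, because it boils down to summing a value per community and sorting. The sketch below shows that aggregation with placeholder community labels and invented figures, so it says nothing about the real ranking; `top_communities` is a hypothetical helper name.

```python
from collections import defaultdict


def top_communities(records, n=5):
    """Sum values per community and return the n largest totals, descending."""
    totals = defaultdict(int)
    for community, value in records:
        totals[community] += value
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)[:n]


# Placeholder data: (community, value for one period). Figures are invented.
records = [("A", 10), ("B", 30), ("A", 25), ("C", 5)]

print(top_communities(records, n=2))
```

Running the same aggregation on the real file and comparing it with the model's answer is a cheap way to confirm that the totals were computed over the whole period and not over a subset.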

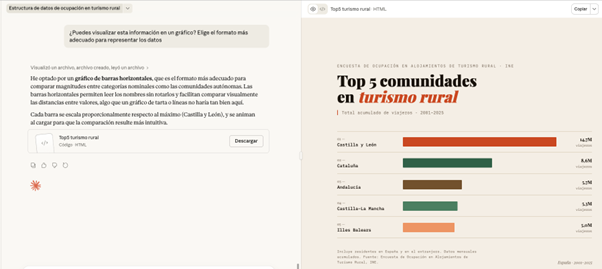

Finally, we can ask Claude to help us visualize the data. Instead of specifying a particular chart type, we give it the freedom to choose the format that best displays the information.

PROMPT: Can you visualize this information on a graph? Choose the most appropriate format to represent the data.

Figure 7. Graph prepared by Claude to represent the information. Source: Claude.

Here, the screen splits: on the left, we can continue with the conversation or download the file, while on the right we can view the graph directly. Claude has generated a very visual and ready-to-use horizontal bar chart. The colors differentiate the communities, and the date range and type of data are correctly indicated.

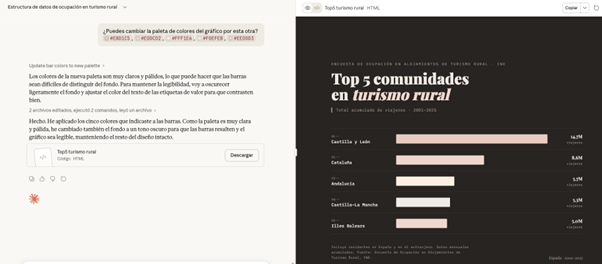

What happens if we ask it to change the color palette of the chart to an inappropriate one? In this case, for example, we are going to ask it for a series of pastel shades that are barely distinguishable from one another.

PROMPT: Can you change the color palette of the chart to this? #E8D1C5, #EDDCD2, #FFF1E6, #F0EFEB, #EEDDD3

Figure 8. Adjustments made to the graph by Claude to represent the information. Source: Claude.

Faced with the challenge, Claude intelligently adjusts the graphic itself: it darkens the background and changes the text on the labels to maintain readability and contrast.
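Why this palette is a problem can be quantified. The sketch below computes the contrast ratio between each pair of requested colors using the relative luminance and contrast ratio formulas from the WCAG 2.x guidelines. It is only a way to measure the issue, not a description of how Claude made its adjustment; the 3:1 figure mentioned in the comments is the WCAG minimum for non-text graphical elements.

```python
from itertools import combinations

PALETTE = ["#E8D1C5", "#EDDCD2", "#FFF1E6", "#F0EFEB", "#EEDDD3"]


def _linear(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x formula)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a #RRGGBB color, from 0 (black) to 1 (white)."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linear(r) + 0.7152 * _linear(g) + 0.0722 * _linear(b)


def contrast_ratio(color_a: str, color_b: str) -> float:
    """WCAG contrast ratio between two colors, from 1:1 up to 21:1."""
    la, lb = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)


# Best case within the palette: the most distinguishable pair of shades.
highest = max(contrast_ratio(a, b) for a, b in combinations(PALETTE, 2))
print(f"highest contrast between any two shades: {highest:.2f}:1")
print("meets the 3:1 minimum for graphical elements:", highest >= 3.0)
```

Even the most distinguishable pair in the palette stays far below 3:1, which is consistent with the need to compensate through the background and the labels.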

All of the above exercise has been done with Claude Sonnet 4.6, which is not Anthropic's most capable model. Higher-tier versions, such as Claude Opus 4.6, offer greater reasoning capacity, deeper understanding and finer results. In addition, there are many other AI-based tools for working with data and visualizations, such as Julius or Quadratic. Although they offer almost endless possibilities, when we work with data it is still essential to maintain our own methodology and criteria.

Contextualizing the data we are analyzing in real life and connecting it with other knowledge is not a task that can be delegated: we need to have a minimum prior idea of what we want to achieve with the analysis in order to convey it to the system. This will allow us to ask better questions, interpret the results properly and therefore prompt more effectively.

Content created by Carmen Torrijos, expert in AI applied to language and communication. The content and views expressed in this publication are the sole responsibility of the author.

We live in an era where science is increasingly reliant on data. From urban planning to the climate transition, data governance has become a structural pillar of evidence-based decision-making. However, there is one area where the traditional principles of data management, validation and control are subjected to extreme tensions: the universe.

Space data, produced by scientific satellites, telescopes, interplanetary probes and exploration missions, do not describe accessible or repeatable realities. They observe phenomena that occurred millions of years ago, at distances impossible to travel and under conditions that can never be replicated in the laboratory. There is no "in situ" measurement that directly confirms these phenomena.

In this context, data governance ceases to be an organizational issue and becomes a structural element of scientific trust. Quality, traceability and reproducibility cannot be supported by direct physical references, but by methodological transparency, comprehensive documentation and the robustness of instrumental and theoretical frameworks.

Governing data in the universe therefore involves facing unique challenges: managing structural uncertainty, documenting extreme scales, and ensuring trust in information we can never touch.

Below, we explore the main challenges posed by data governance when the object of study is beyond Earth.

I. Specific challenges of data from the universe

1. Beyond Earth: new sources, new rules

When we talk about space data, we mean much more than satellite images of the Earth's surface. We delve into a complex ecosystem that includes space and ground-based telescopes, interplanetary probes, planetary exploration missions, and observatories designed to detect radiation, particles, or extreme physical phenomena.

These systems generate data with clearly different challenges compared to other scientific domains:

| Challenge | Impact on data governance |
|---|---|
| Non-existent physical access | There is no direct validation; trust lies in the integrity of the channel. |
| Instrumental dependence | The data is a direct "child" of the sensor's design. If the sensor fails or is out of calibration, reality is distorted. |
| Uniqueness | Many astronomical events are unique. There is no "second chance" to capture them. |
| Extreme cost | The value of each byte is very high due to the investment required to put the sensor into orbit. |

Figure 1. Challenges in data governance across the universe. Source: own elaboration - datos.gob.es.

Unlike Earth observation data, which in many cases can be checked against field campaigns or redundant sensors, data from the universe depend fundamentally on the mission architecture, instrument calibration, and physical models used to interpret the captured signal.

In many cases, what is recorded is not the phenomenon itself, but an indirect signal: spectral variations, electromagnetic emissions, gravitational alterations or particles detected after traveling millions of kilometers. The data is, in essence, an instrumental translation of an inaccessible phenomenon.

For all these reasons, space data cannot be understood without the technical context that generates it.

2. Structural uncertainty and extreme scales

Uncertainty refers to the margin of error or indeterminacy associated with a scientific measurement, interpretation, or result due to the limits of the instruments, observing conditions, and models used to analyze the data. While in other areas uncertainty is a factor that one tries to reduce through direct, repeatable and verifiable measurements, in the observation of the universe uncertainty is part of the knowledge process itself. It is not simply a matter of "not knowing enough", but of facing physical and methodological limits that cannot be completely eliminated.

Therefore, in the observation of the universe, uncertainty is structural. It is not a specific anomaly, but a condition inherent to the object of study.

There are several critical dimensions:

- Extreme spatial and temporal scales: cosmic distances prevent any direct validation. Timescales imply that the data often captures an "instant" of the remote past and not a verifiable present reality.

- Weak signals and unavoidable noise: the instruments capture extremely subtle emissions. The useful signal coexists with interference, technological limitations and background noise. Interpretation depends on advanced statistical treatments and complex physical models.

- Limited-observation phenomena: Some astrophysical phenomena—such as certain supernovae, gamma-ray bursts, or singular gravitational configurations—cannot be experimentally recreated and can only be observed when they occur. In these cases, the available record may be unique or profoundly limited, increasing the responsibility for documentation and preservation.

Not all phenomena are unrepeatable, but in many cases the opportunities for observation are scarce or depend on exceptional conditions.

II. Building trust when we can't touch the object observed

In the face of these challenges, data governance takes on a structural role. It is not limited to guaranteeing storage or availability, but defines the rules by which scientific processes are documented, traceable and auditable.

In this context, governing does not mean producing knowledge, but rather ensuring that its production is transparent, verifiable and reusable.

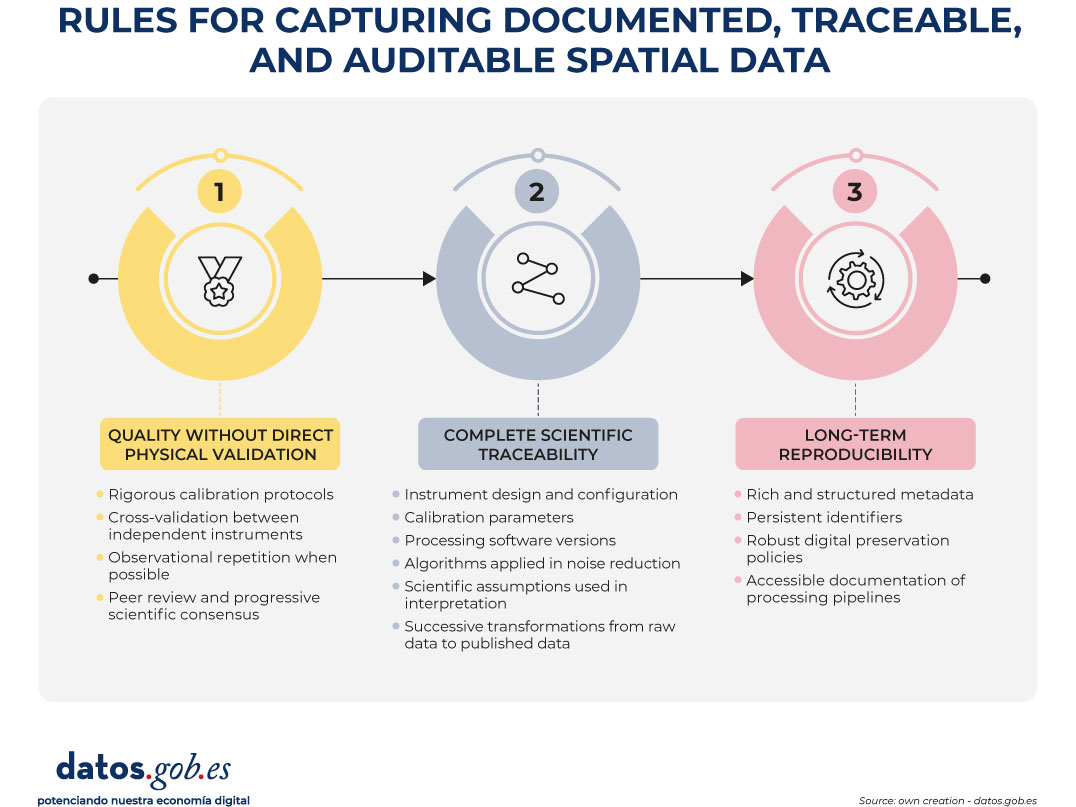

1. Quality without direct physical validation

When the observed phenomenon cannot be directly verified, the quality of the data is based on:

- Rigorous calibration protocols: instruments must undergo systematic calibration processes before, during, and after operation. This involves adjusting their measurements against known baselines, characterizing their margins of error, documenting deviations, and recording any modifications to their configuration. Calibration is not a one-off event, but an ongoing process that ensures that the recorded signal reflects, as accurately as possible, the observed phenomenon within the physical boundaries of the system.

- Cross-validation between independent instruments: when different instruments – either on the same mission or on different missions – observe a similar phenomenon, the comparison of results allows the reliability of the data to be reinforced. The convergence between observations obtained with different technologies reduces the probability of instrumental bias or systematic errors. This inter-instrumental coherence acts as an indirect verification mechanism.

- Observational repetition when possible: although not all phenomena can be repeated, many observations can be made at different times or under different conditions. Repetition makes it possible to evaluate the stability of the signal, identify anomalies and distinguish natural variability from measurement error. Consistency over time strengthens the robustness of the result.

- Peer review and progressive scientific consensus: the data and their interpretations are subject to evaluation by the scientific community. This process involves methodological scrutiny, critical analysis of assumptions, and verification of consistency with existing knowledge. Consensus does not emerge immediately, but through the accumulation of evidence and scientific debate. Quality, in this sense, is also a collective construction.

Quality is not just a technical property; it is the result of a documented and auditable process.
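As a small illustration of how agreement between independent instruments strengthens a result, the sketch below combines measurements of the same quantity with an inverse-variance weighted mean, a standard statistical technique. It assumes the measurements are independent and their uncertainties are Gaussian standard deviations; the numbers are invented, and real missions use far more elaborate pipelines.

```python
import math


def combine(measurements):
    """Inverse-variance weighted mean of (value, sigma) pairs.

    Returns the combined value and its standard uncertainty, assuming
    independent measurements with Gaussian errors.
    """
    weights = [1.0 / sigma ** 2 for _, sigma in measurements]
    total_weight = sum(weights)
    mean = sum(w * value for w, (value, _) in zip(weights, measurements)) / total_weight
    sigma = math.sqrt(1.0 / total_weight)
    return mean, sigma


# Two hypothetical instruments observing the same quantity (arbitrary units).
instrument_a = (10.0, 1.0)
instrument_b = (12.0, 1.0)

value, uncertainty = combine([instrument_a, instrument_b])
print(f"combined: {value:.2f} +/- {uncertainty:.2f}")
```

The combined uncertainty is smaller than that of either instrument alone, which is the quantitative counterpart of the idea that convergence between independent observations reinforces reliability. It only holds if each instrument's uncertainty has been characterized and documented, which brings us back to calibration.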

2. Complete scientific traceability

In the spatial context, data is inseparable from the technical and scientific process that generates it. It cannot be understood as an isolated result, but as the culmination of a chain of instrumental, methodological and analytical decisions.

Therefore, traceability must cover, explicitly and in documented form:

- Instrument design and configuration: information about the technical characteristics of the instrument that captured the signal, such as its architecture, sensing capabilities, resolution limits, and operational configurations, needs to be retained. These conditions determine what type of signal can be recorded and how accurately.

- Calibration parameters: The adjustments applied to ensure that the instrument operates within the intended margins must be recorded, as well as the modifications made over time. The calibration parameters directly influence the interpretation of the obtained signal.

- Processing software versions: the processing of raw data depends on specific IT tools. Preserving the versions used allows you to understand how the results were generated and avoid ambiguities if the software evolves.

- Algorithms applied in noise reduction: since signals are often accompanied by interference or background noise, it is essential to document the methods used to filter, clean, or transform the information before analysis. These algorithms influence the final result.

- Scientific assumptions used in the interpretation: the reading of the data is not neutral: it is based on theoretical frameworks and physical models accepted at the time of analysis. Recording these assumptions allows you to contextualize the conclusions and understand possible future revisions.

- Successive transformations from the raw data to the published data: from the original signal to the final scientific product, the data goes through different phases of processing, aggregation and analysis. It must be possible to reconstruct each transformation in order to understand how the communicated result was reached.

Without exhaustive traceability, reproducibility is weakened and future interpretability is compromised. When it is not possible to reconstruct the entire process that led to a result, its independent evaluation becomes limited and its scientific reuse loses its robustness.
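The elements listed above can be gathered into a single machine-readable record that travels with the dataset. The sketch below is one possible shape for such a record, using Python dataclasses serialized to JSON. The class names, field names and example values (such as `example-spectrometer` or `example-pipeline`) are hypothetical and do not follow any specific standard.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ProcessingStep:
    """One transformation between the raw signal and the published product."""
    name: str
    software: str
    version: str
    parameters: dict = field(default_factory=dict)


@dataclass
class ProvenanceRecord:
    """Hypothetical traceability record; field names are illustrative."""
    instrument: str
    instrument_config: dict
    calibration: dict
    assumptions: list
    steps: list = field(default_factory=list)

    def add_step(self, step: ProcessingStep) -> None:
        # Steps are appended in the order they were applied to the data.
        self.steps.append(step)

    def to_json(self) -> str:
        # asdict() also converts the nested ProcessingStep objects.
        return json.dumps(asdict(self), indent=2, sort_keys=True)


record = ProvenanceRecord(
    instrument="example-spectrometer",
    instrument_config={"mode": "survey", "resolution": "low"},
    calibration={"reference": "onboard-lamp", "offset": 0.5},
    assumptions=["placeholder: physical model assumed at analysis time"],
)
record.add_step(ProcessingStep("noise_reduction", "example-pipeline", "1.4.2",
                               {"method": "median_filter", "window": 5}))
record.add_step(ProcessingStep("aggregation", "example-pipeline", "1.4.2"))

print(record.to_json())
```

Keeping the steps as an ordered list means the chain from raw signal to published product can be read back in sequence, including the software version and parameters used at each stage.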

3. Long-term reproducibility

Space missions can span decades, and their data can remain relevant long after the mission has ended. In addition, scientific interpretation evolves over time: new models, new tools, and new questions may require reanalyzing information generated years ago.

Therefore, data must remain interpretable even when the original equipment no longer exists, technological systems have changed, or the scientific context has evolved.

This requires:

- Rich and structured metadata: the contextual information that accompanies the data – about its origin, acquisition conditions, processing and limitations – must be organized in a clear and standardized way. Without sufficient metadata, the data loses meaning and becomes difficult to reinterpret in the future.

- Persistent identifiers: it must be possible to locate and cite each dataset in a stable manner over time. Persistent identifiers allow the reference to be maintained even if storage systems or technology infrastructures change.

- Robust digital preservation policies: long-term preservation requires strategies that take into account format obsolescence, technological migration, and archive integrity. It is not enough to store the data; it is necessary to ensure that it remains accessible and readable over time.

- Accessible documentation of processing pipelines: the process that transforms raw data into scientific product must be described in a comprehensible way. This allows future researchers to reconstruct the analysis, verify the results, or apply new methods on the same original data.

Reproducibility, in this context, does not mean physically repeating the observed phenomenon, but being able to reconstruct the analytical process that led to a given result. Governance doesn't just manage the present; it ensures the future reuse of knowledge and preserves the ability to reinterpret information in the light of new scientific advances.
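A small Python sketch can show how several of these requirements fit together: structured metadata, a persistent identifier, a pointer to the pipeline documentation, and a checksum that lets an archive verify integrity after a migration. The field names, the identifier `doi:10.0000/example-dataset` and the path `docs/pipeline.md` are placeholders invented for this example, not real references.

```python
import hashlib
import json


def sha256_hex(payload: bytes) -> str:
    """Checksum used to detect any later change in the archived bytes."""
    return hashlib.sha256(payload).hexdigest()


def build_metadata(payload: bytes, identifier: str, title: str,
                   file_format: str, pipeline_doc: str) -> dict:
    """Assemble a minimal, structured description of one archived file."""
    return {
        "identifier": identifier,
        "title": title,
        "format": file_format,
        "size_bytes": len(payload),
        "checksum": {"algorithm": "sha256", "value": sha256_hex(payload)},
        "pipeline_documentation": pipeline_doc,
    }


def verify_fixity(payload: bytes, metadata: dict) -> bool:
    """True if the bytes still match the checksum stored in the metadata."""
    return sha256_hex(payload) == metadata["checksum"]["value"]


raw = b"time,flux\n0,1.02\n1,0.98\n"  # invented sample content
meta = build_metadata(
    raw,
    identifier="doi:10.0000/example-dataset",  # placeholder, not a real DOI
    title="Example light curve",
    file_format="text/csv",
    pipeline_doc="docs/pipeline.md",  # hypothetical path
)

print(json.dumps(meta, indent=2))
print(verify_fixity(raw, meta), verify_fixity(raw + b"2,1.00\n", meta))
```

Running the fixity check after every storage migration is a simple way to confirm that the preserved file is still the one the metadata describes.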

Figure 2. Rules for capturing documented, traceable, and auditable spatial data. Source: own elaboration - datos.gob.es.

Conclusion: Governing What We Can't Touch

The data of the universe forces us to rethink how we understand and manage information. We are working with realities that we cannot visit, touch or verify directly. We observe phenomena that occur at immense distances and in times that exceed the human scale, through highly specialized instruments that translate complex signals into interpretable data.

In this context, uncertainty is not an error or a weakness, but a natural feature of the study of the cosmos. The interpretation of data depends on scientific models that evolve over time, and quality is based not on direct verification, but on rigorous, well-documented processes reviewed by the scientific community. Trust, therefore, does not arise from direct experience, but from the transparency, traceability and clarity with which the methods used are explained.

Governing space data does not only mean storing it or making it available to the public. It means keeping all the information that allows us to understand how it was obtained, how it was processed and under what assumptions it was interpreted. Only then can it be evaluated, reinterpreted and reused in the future.

Beyond Earth, data governance is not a technical detail or an administrative task. It is the foundation that sustains the credibility of human knowledge about the universe and the basis that allows new generations to continue exploring what we cannot yet reach physically.

Content prepared by Mayte Toscano, Senior Consultant in technologies related to the data economy. The contents and viewpoints expressed in this publication are the sole responsibility of the author.

Data visualization is not a recent discipline. For centuries, people have used graphs, maps, and diagrams to represent complex information. Classic examples such as the statistical maps of the nineteenth century or the graphs used in the press show that the need to "see" the data in order to understand it has always existed.

For a long time, creating visualizations required specialized knowledge and access to professional tools, which limited their production to very specific profiles. However, the digital and technological revolution has profoundly transformed this landscape. Today, anyone with access to a computer and data can create visualizations. Tools have been democratized, many of them are free or open source, and visualization work has extended beyond design to integrate into areas such as statistics, data science, academic research, public administration, or education.

Today, data visualization is a transversal competence that allows citizens to explore public information, institutions to better communicate their policies, and reusers to generate new services and knowledge from open data. In this post we present some of the most accessible and used options in data visualization.

A broad and diverse ecosystem of tools

The ecosystem of data visualization tools is broad and diverse, both in functionalities and levels of complexity. There are options designed for a first exploration of the data, others aimed at in-depth analysis and some designed to create interactive visualizations or complex digital narratives.

This variety allows you to tailor the visualization to different contexts and goals, from getting a first understanding of a dataset to publishing interactive charts, dashboards, or maps on the web.

The Data Visualization Society's annual survey reflects this diversity and shows how the use of certain tools evolves over time, consolidating some widely known options and giving way to new solutions that respond to emerging needs. These are some of the tools mentioned in the survey, ordered according to usage profiles.

The following criteria have been taken into account for the preparation of this list:

- Degree of use and maturity of the tool.

- Free access, or availability of free or open versions.

- Useful for projects related to public data.

- Priority given to open tools or tools with free versions.

Simple tools to get started

These tools are characterized by visual interfaces, a low learning curve, and the ability to create basic charts quickly. They are especially useful for getting started exploring open datasets or for outreach activities.

- Excel: it is one of the most widespread and well-known tools. It makes it simple to create basic charts and carry out a first exploration of the data. While not specifically designed for advanced visualization, it is still a common gateway to working with data and its graphical representation.

- Google Sheets: works as a free and collaborative alternative to Excel. Its main advantage is the ability to work in a shared way and publish simple graphics online, which facilitates the dissemination of basic visualizations.

- Datawrapper: widely used in public communication and data journalism. It allows you to create clear graphs, maps, and interactive tables without the need for technical knowledge. It is particularly suitable for explaining data in a way that is understandable to a wide audience.

- RAWGraphs: free software tool aimed at visual exploration. It allows you to experiment with less common types of charts and discover new ways to represent data. It is especially useful in exploratory phases.

- Canva: although it is geared more towards communication than analysis, it can be useful for creating simple visual pieces that integrate basic graphics with design elements. It is suitable for visual communication of results, not so much for data analysis.

Data exploration and analysis tools

This group of tools is geared towards profiles that want to go beyond basic charts and perform more structured analysis. Many of them are open and widely consolidated in the field of data analysis.

- R: free programming language widely used in statistics and data analysis. It has a wide ecosystem of packages that allow you to work with public data in a reproducible and transparent way.

- ggplot2: visualization library for the R language. It is one of the most powerful tools for creating rigorous and well-structured graphs, both for analysis and for communicating results.

- Python (Matplotlib and Plotly): Python is one of the most widely used languages in data analysis. Matplotlib allows you to create customizable static charts, while Plotly makes it easy to create interactive visualizations. Together they offer a good balance between power and flexibility.

- Apache Superset: Open source platform for data analysis and dashboard creation. It has a more institutional and scalable approach, making it suitable for organizations that work with large volumes of public data.

This block is especially relevant for open data reusers and intermediate technical profiles who seek to combine analysis and visualization in a systematic way.

Tools for interactive and web visualization

These tools allow you to create advanced visualizations for publication in web environments. Although they require greater technical knowledge, they offer great flexibility and expressive possibilities.

- D3.js: it is one of the benchmarks in web visualization. It is based on open standards and allows full control over the visual representation of data. Its flexibility is very high, although so is its complexity.

In this practical exercise you can see how to use this library

- Vega and Vega-Lite: declarative languages for visualization that simplify the use of D3. They allow you to define graphics in a structured and reproducible way, offering a good balance between power and simplicity.

- Observable: interactive environment closely linked to D3 and Vega. It's especially useful for creating educational examples, prototypes, and exploratory visualizations that combine code, text, and graphics.

- Three.js and WebGL: technologies aimed at advanced and three-dimensional visualizations. Their use is more experimental and is usually linked to dissemination projects or visual research.

Although the technical barriers are higher, these tools make it possible to create rich interactive experiences that can be very effective in communicating complex public data.
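To give an idea of what a declarative definition looks like, the sketch below builds a small Vega-Lite bar chart specification as a Python dictionary and prints it as JSON, which could then be pasted into the Vega editor or embedded in a web page. The helper `bar_chart_spec` and the figures in `rows` are invented for illustration and are not real statistics.

```python
import json

# Invented sample values, used only to show the structure of a specification.
rows = [
    {"year": "2021", "datasets": 120},
    {"year": "2022", "datasets": 180},
    {"year": "2023", "datasets": 240},
]


def bar_chart_spec(values: list, x_field: str, y_field: str, title: str) -> dict:
    """Return a minimal Vega-Lite specification for a bar chart."""
    return {
        "$schema": "https://vega.github.io/schema/vega-lite/v5.json",
        "title": title,
        "data": {"values": values},
        "mark": "bar",
        "encoding": {
            # Categories on the horizontal axis, measured values on the vertical.
            "x": {"field": x_field, "type": "ordinal"},
            "y": {"field": y_field, "type": "quantitative"},
        },
    }


spec = bar_chart_spec(rows, "year", "datasets", "Example: datasets per year")
print(json.dumps(spec, indent=2))
```

The specification describes what to draw (a mark and its encodings) rather than how to draw it, which is what makes this kind of chart easy to store, review and reproduce.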

Geospatial data and mapping tools

Geographic visualization is especially relevant in the field of open data, since a large part of public information has a territorial dimension. In this field, free software has a prominent weight and is closely aligned with use in public administrations.

- QGIS: a benchmark in free software for geographic information systems (GIS). It is widely used in public administrations and allows spatial data to be analysed and visualised in great detail.

- ArcGIS: very widespread in the institutional field. Although it is not free software, its use is well established and is part of the regular ecosystem of many public organizations.

- Mapbox: platform aimed at creating interactive web maps. It is widely used in online visualization projects and allows geographic data to be integrated into web applications.

- Leaflet: A popular open-source library for creating interactive maps on the web. It is lightweight, flexible, and widely used in geographic open data reuse projects.

This toolkit facilitates the territorial representation of data and its reuse in local, regional or national contexts.
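Most of the tools above can read GeoJSON, so a common first step is to convert tabular records into that format. The following Python sketch builds a GeoJSON `FeatureCollection` from two points. The helper names and the `kind` property are assumptions made for this example, and the coordinates are approximate.

```python
import json


def point_feature(name: str, lon: float, lat: float, **properties) -> dict:
    """Build a GeoJSON Feature for one point.

    GeoJSON stores coordinates as [longitude, latitude], in that order.
    """
    return {
        "type": "Feature",
        "geometry": {"type": "Point", "coordinates": [lon, lat]},
        "properties": {"name": name, **properties},
    }


def feature_collection(features: list) -> dict:
    """Wrap a list of features in a GeoJSON FeatureCollection."""
    return {"type": "FeatureCollection", "features": list(features)}


# Approximate coordinates, for illustration only.
places = feature_collection([
    point_feature("Madrid", -3.7038, 40.4168, kind="city"),
    point_feature("Barcelona", 2.1734, 41.3851, kind="city"),
])

print(json.dumps(places, indent=2))
```

A file with this content can be opened directly in QGIS or passed to Leaflet's `L.geoJSON` layer on a web map.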

In conclusion, the choice of a visualization tool depends largely on the goal being pursued. Learning and experimenting is not the same as analyzing data in depth or communicating results to a wide audience. Therefore, it is useful to reflect beforehand on the type of data available, the audience at which the visualization is aimed and the message you want to convey.

Betting on accessible and open tools allows more people to explore, interpret and communicate public data. In this sense, visualising data is also a way of bringing information closer to citizens and encouraging its reuse.