The European High-Value Datasets (HVD) regulation, established by Implementing Regulation (EU) 2023/138, consolidates the role of APIs as an essential infrastructure for the reuse of public information, making their availability a legal obligation and not just a good technological practice.

Since 9 June 2024, public bodies in all Member States have been required to publish datasets classified as HVDs free of charge, in machine-readable formats and accessible via APIs. The six thematic categories covered are: geospatial, Earth observation and environment, meteorological, statistics, companies and company ownership, and mobility.

This framework is not merely declarative. Every two years, Member States must report their compliance status to the European Commission, including persistent links to the APIs that give access to these datasets. Spain's situation in terms of transparency, open data and systematic API provision can be checked in the indicators published in the Open Data Maturity Report.

In practice, this means that APIs are the bridge between the norm and reality. The regulation not only says what data must be opened, but also requires it to be done in such a way that it can be automatically integrated into applications, studies or digital services. Therefore, reviewing the public APIs available in Spain is a concrete way to understand how this framework is being applied on a day-to-day basis.

Inventory of public APIs in Spain

INE: JSON API (Tempus3)

The National Statistics Institute (INE) offers a REST API that exposes the entire Tempus3 dissemination database in JSON format. It includes official statistical series on demography, the economy, the labour market, industry, services, prices, living conditions and other socio-economic indicators.

Calls follow the pattern https://servicios.ine.es/wstempus/js/{language}/{function}/{input}. The tip=AM parameter returns metadata along with the data, and tv filters by specific variables. For example, to obtain population figures by province, query the corresponding operation (IOE 30243) and filter by the desired geographical variable.

No authentication or API key is required: any well-formed GET request returns the data directly.
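As a minimal sketch of the URL pattern described above, the helper below assembles Tempus3 calls from their parts. The function name ine_url is hypothetical, and the tv values in the second call are placeholder variable:value codes, not real INE identifiers. The helper only builds strings; it sends no request.

```python
from urllib.parse import urlencode

BASE = "https://servicios.ine.es/wstempus/js"


def ine_url(language: str, function: str, input_id: str, **params) -> str:
    """Build a Tempus3 URL of the form {BASE}/{language}/{function}/{input}.

    Keyword arguments become query parameters. A list value is repeated,
    which is how several tv filters can be passed in one call.
    """
    url = f"{BASE}/{language}/{function}/{input_id}"
    if params:
        # keep ':' unescaped so tv filters read as variable:value
        url += "?" + urlencode(params, doseq=True, safe=":")
    return url


# Table used in the INE example, with metadata (tip=AM)
print(ine_url("ES", "DATOS_TABLA", "t20/e245/p08/l0/01002.px", tip="AM"))

# Hypothetical variable:value codes, for illustration only
print(ine_url("ES", "DATOS_TABLA", "t20/e245/p08/l0/01002.px",
              tip="AM", tv=["1:2", "3:4"]))
```

Keeping URL construction in one place makes it easier to log, cache or reproduce exactly which query a pipeline ran.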

Example in Python: get the resident population series with metadata:

```python
import requests

# DATOS_TABLA returns every series in the table; tip=AM adds metadata
url = ("https://servicios.ine.es/wstempus/js/ES/"
       "DATOS_TABLA/t20/e245/p08/l0/01002.px?tip=AM")

response = requests.get(url, timeout=30)
response.raise_for_status()
data = response.json()

for series in data[:3]:  # first 3 series
    name = series["Nombre"]
    last = series["Data"][-1]  # last observation in the returned list
    print(f"{name}: {last['Valor']:,.0f} ({last['Anyo']})")
```

Output:

```
TOTAL AGES, TOTAL, Both sexes: 39,852,651 (1998)
TOTAL AGES, TOTAL, Males: 19,488,465 (1998)
TOTAL AGES, TOTAL, Females: 20,364,186 (1998)
```

AEMET: OpenData REST API

The State Meteorological Agency (AEMET) exposes its data through a REST API documented with Swagger UI (an open-source tool that generates interactive documentation). It provides observed meteorological data and official forecasts, including temperature, precipitation, wind, warnings and adverse phenomena.

Unlike the INE, AEMET requires a free API key, which is obtained by providing an email address on the opendata.aemet.es portal. An API key works as a kind of "password" or identifier: it lets the agency know who is using the service, control the volume of requests and ensure proper use of the infrastructure.

A relevant technical aspect is that AEMET implements a two-call model: the first request returns a JSON document with a temporary URL in the "datos" field, and a second request to that URL retrieves the actual dataset. The rate limit is 50 requests per minute.
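Because every dataset costs two calls, a limit of 50 requests per minute allows at most 25 complete downloads per minute. A minimal sketch of a client that respects that budget could look like the following. SlidingWindowLimiter and fetch_two_step are hypothetical names, and the JSON fetcher is passed in as a function so the logic can be tried without network access or an API key.

```python
import time
from collections import deque
from typing import Any, Callable


class SlidingWindowLimiter:
    """Allow at most `max_calls` calls in any window of `period` seconds."""

    def __init__(self, max_calls: int = 50, period: float = 60.0,
                 clock: Callable[[], float] = time.monotonic,
                 sleep: Callable[[float], None] = time.sleep):
        self.max_calls = max_calls
        self.period = period
        self.clock = clock
        self.sleep = sleep
        self.calls: deque = deque()

    def _expire(self, now: float) -> None:
        # forget calls that have left the window
        while self.calls and now - self.calls[0] >= self.period:
            self.calls.popleft()

    def wait(self) -> None:
        now = self.clock()
        self._expire(now)
        while len(self.calls) >= self.max_calls:
            # sleep until the oldest call in the window expires
            self.sleep(self.period - (now - self.calls[0]))
            now = self.clock()
            self._expire(now)
        self.calls.append(now)


def fetch_two_step(url: str, get_json: Callable[[str], Any],
                   limiter: SlidingWindowLimiter) -> Any:
    """First call returns a temporary URL in "datos"; second call fetches it."""
    limiter.wait()
    meta = get_json(url)
    limiter.wait()
    return get_json(meta["datos"])
```

With requests, the injected function could be something like `lambda u: requests.get(u, headers={"api_key": API_KEY}, timeout=30).json()`. Sharing one limiter across all calls keeps both steps of every download inside the same budget.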

Example in Python: daily weather data (double call):

```python
import requests

API_KEY = "your_api_key_here"
headers = {"api_key": API_KEY}

# 1st call: get the temporary URL of the data
url = ("https://opendata.aemet.es/opendata/api/"
       "valores/climatologicos/diarios/datos/"
       "fechaini/2025-01-01T00:00:00UTC/"
       "fechafin/2025-01-10T23:59:59UTC/"
       "todasestaciones")
resp1 = requests.get(url, headers=headers, timeout=30).json()

# 2nd call: download the actual dataset from that temporary URL
datos = requests.get(resp1["datos"], headers=headers, timeout=30).json()

# Values are printed as returned (strings with a decimal comma)
for estacion in datos[:3]:
    print(f"{estacion['nombre']}: "
          f"Tmax={estacion.get('tmax', 'N/A')}°C, "
          f"Prec={estacion.get('prec', 'N/A')}mm")
```

Output:

```
CITFAGRO_88_GAITERO: Tmax=8,8°C, Prec=0,0mm
ABANILLA: Tmax=14,8°C, Prec=0,0mm
LA RODA DE ANDALUCÍA: Tmax=15,7°C, Prec=0,2mm
```

CNIG / IDEE: OGC services and OGC API Features

The National Center for Geographic Information (CNIG) publishes official geospatial data (base mapping, digital terrain models, river networks, administrative boundaries and other topographic elements) through interoperable services. These services have evolved from WMS/WFS towards the OGC API standards (Features, Maps and Processes), implemented with open-source software such as pygeoapi.

The main advantage of OGC API Features over WFS is the response format: instead of GML (heavy and complex), the data is served in GeoJSON and HTML, native formats of the web ecosystem. This allows it to be consumed directly from libraries such as Leaflet, OpenLayers or GDAL. Available datasets include CartoCiudad addresses, hydrography, transport networks and the geographical gazetteer.
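OGC API Features responses are paged: the limit parameter caps the page size and, following the standard, a page can include a link with rel="next" that points at the following page. The sketch below follows those links until none remain. iter_features, next_link and get_json are hypothetical helpers built on the standard library, and the sketch assumes the server returns GeoJSON when asked with f=json.

```python
import json
import urllib.request
from typing import Callable, Iterator, Optional


def get_json(url: str) -> dict:
    """Fetch a URL and decode its JSON body."""
    with urllib.request.urlopen(url, timeout=30) as resp:
        return json.load(resp)


def next_link(page: dict) -> Optional[str]:
    """Return the href of the rel="next" link, if the page has one."""
    for link in page.get("links", []):
        if link.get("rel") == "next":
            return link.get("href")
    return None


def iter_features(first_url: str,
                  fetch: Callable[[str], dict] = get_json) -> Iterator[dict]:
    """Yield every feature of a collection, page by page."""
    url: Optional[str] = first_url
    while url:
        page = fetch(url)
        yield from page.get("features", [])
        url = next_link(page)
```

Passing an items URL with a larger limit, such as the one built in the CNIG example, would then stream the whole collection instead of the first five features.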

Example in Python: query geographic features via OGC API:

```python
import requests

# OGC API Features - Basic Geographical Gazetteer of Spain
base = "https://api-features.idee.es/collections"
collection = "falls"  # waterfalls

url = f"{base}/{collection}/items?limit=5&f=json"
resp = requests.get(url, timeout=30).json()

for feat in resp["features"]:
    props = feat["properties"]
    coords = feat["geometry"]["coordinates"]  # point: [longitude, latitude]
    # 'nombre' (name) attribute; it can be null for some features
    print(f"{props.get('nombre')}: ({coords[0]:.4f}, {coords[1]:.4f})")
```

Output:

```
None: (-6.2132, 42.8982)
Cascada del Cervienzo: (-6.2572, 42.9763)
El Xaral Waterfall: (-6.3815, 42.9881)
Rexiu Waterfall: (-7.2256, 42.5743)
Santalla Waterfall: (-7.2543, 42.6510)
```

MITECO: Open Data Portal (CKAN)

The Ministry for the Ecological Transition (MITECO) maintains a CKAN-based portal that exposes three access layers: the CKAN Action API for metadata and dataset search, the Datastore API (OpenAPI) for live queries on tabular resources, and RDF/JSON-LD endpoints compliant with DCAT-AP and GeoDCAT-AP. Its catalogue includes data on air quality, emissions and climate change, water (status of water bodies and river basin planning), biodiversity and protected areas, waste, energy and environmental assessment.

Featured datasets include Natura 2000 Network protected areas, bodies of water, and greenhouse gas emissions projections.
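package_search returns results in pages controlled by rows and start, and reports the total number of matches in result.count. A sketch that walks through every page could look like the following. search_all is a hypothetical helper, the page size of 100 is an arbitrary choice, and the HTTP call is injected as a function (by default a standard-library one) so the paging logic can be checked offline.

```python
import json
import urllib.parse
import urllib.request
from typing import Callable, Iterator


def get_json(url: str, params: dict) -> dict:
    """GET url?params and decode the JSON response."""
    full = f"{url}?{urllib.parse.urlencode(params)}"
    with urllib.request.urlopen(full, timeout=30) as resp:
        return json.load(resp)


def search_all(base: str, query: str,
               fetch: Callable[[str, dict], dict] = get_json,
               page_size: int = 100) -> Iterator[dict]:
    """Yield every dataset matching `query` via the CKAN Action API."""
    start = 0
    while True:
        body = fetch(f"{base}/api/3/action/package_search",
                     {"q": query, "rows": page_size, "start": start})
        if not body.get("success"):
            raise RuntimeError(f"CKAN error: {body.get('error')}")
        result = body["result"]
        yield from result["results"]
        start += page_size
        if start >= result["count"]:
            break
```

Called with the catalogue base URL, `list(search_all(base, "natura 2000"))` would collect every match instead of only the first three.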

Example in Python: search for datasets:

```python
import requests

BASE = "https://catalogo.datosabiertos.miteco.gob.es/catalogo"

# Search for datasets containing 'natura 2000' (CKAN Action API)
busqueda = requests.get(
    f"{BASE}/api/3/action/package_search",
    params={"q": "natura 2000", "rows": 3},
    timeout=30,
).json()

for ds in busqueda["result"]["results"]:
    print(f"{ds['title']} ({ds['num_resources']} resources)")
```

Output:

```
Protected Areas of the Natura 2000 Network (13 resources)
Database of Natura 2000 Network Protected Areas of Spain (CNTRYES) (1 resources)
Protected Areas of the Natura 2000 Network - API - High Value Data (1 resources)
```

Technical comparison

| Organisation | Protocol | Format | Authentication | Rate limit | HVD |
|---|---|---|---|---|---|
| INE | REST | JSON | None | Undeclared | Yes (statistics) |
| AEMET | REST | JSON | API key (free) | 50 req/min | Yes (meteorological) |
| CNIG/IDEE | OGC API/WFS | GeoJSON/GML | None | Undeclared | Yes (geospatial) |
| MITECO | CKAN/REST | JSON/RDF | None | Undeclared | Yes (environment) |

Figure 1. Comparative table of the APIs from various public agencies discussed in this post. Source: Compiled by the author – datos.gob.es.

The availability of public APIs isn't just a matter of technical convenience. From a data perspective, these interfaces enable three critical capabilities:

- Pipeline automation: the periodic ingestion of public data can be orchestrated with standard tools (Airflow, Prefect, cron) without manual intervention or file downloads.

- Reproducibility: API URLs act as static references to authoritative sources, facilitating auditing and traceability in analytics projects.

- Interoperability: the use of open standards (REST, OGC API, DCAT-AP) allows heterogeneous sources to be crossed without depending on proprietary formats.
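On the reproducibility point, one lightweight practice (an assumption here, not something these APIs provide themselves) is to store, next to each ingested payload, the URL it came from, when it was retrieved and a content hash, so later runs can show whether the source changed. A minimal sketch with hypothetical provenance_record and changed helpers:

```python
import hashlib
from datetime import datetime, timezone
from typing import Optional


def provenance_record(url: str, payload: bytes,
                      retrieved_at: Optional[datetime] = None) -> dict:
    """Describe one download so it can be audited and compared later."""
    ts = retrieved_at or datetime.now(timezone.utc)
    return {
        "source_url": url,
        "retrieved_at": ts.isoformat(),
        "sha256": hashlib.sha256(payload).hexdigest(),
        "size_bytes": len(payload),
    }


def changed(old: dict, new: dict) -> bool:
    """True if the same URL returned different content."""
    return (old["source_url"] == new["source_url"]
            and old["sha256"] != new["sha256"])
```

In an orchestrated pipeline (cron, Airflow, Prefect), each run can write this record alongside the data, which makes audits and comparisons between runs straightforward.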

The public API ecosystem in Spain shows different levels of development depending on the body and the sector. While entities such as the INE and AEMET have consolidated, well-documented interfaces, in other cases access is provided through CKAN portals or traditional OGC services. The High-Value Datasets (HVD) regulation is driving the progressive adoption of REST standards, although implementation is advancing at different rates. For data professionals, these APIs are already a fully operational source, increasingly integrated into data architectures in analytical and engineering environments.

Content produced by Juan Benavente, a senior industrial engineer and expert in technologies related to the data economy. The content and views expressed in this publication are the sole responsibility of the author.

The Organisation for Economic Co-operation and Development (OECD) has published the main findings of the 2025 edition of the Open, Useful and Re-usable Data Index (OURdata) and the Digital Government Index (DGI), two indices that assess how governments perform in areas related to digital transformation.

Both studies start from a central idea: "digital transformation is no longer optional for governments: it is an absolute necessity". It enables better services, smarter decision-making and collaboration across borders, but for this to work, a bold and balanced vision is needed, supported by strong and reliable foundations. The analysis offered by the two OECD indices makes it possible to guide policies, prioritise investments and measure the progress of digital transformation in the public sector.

Specifically, the indices assess:

- OURdata Index: national efforts to design and implement useful and reusable open data policies.

- Digital Government Index (DGI): Governments' progress in building the foundations for a coherent and people-centred digital transformation.

Both analyses are based on data collected during the first half of 2025, covering initiatives and policies implemented between 1 January 2023 and 31 December 2024. Their results will also feed into the OECD Digital Government Outlook 2026, which will include more in-depth analysis, key trends and country notes.

Keys to the OURdata Index 2025

The OURdata Index 2025 shows important progress in the opening and reuse of public data in OECD countries. In this index, Spain is in the top 5, consolidating its position among the countries with the best open data policies.

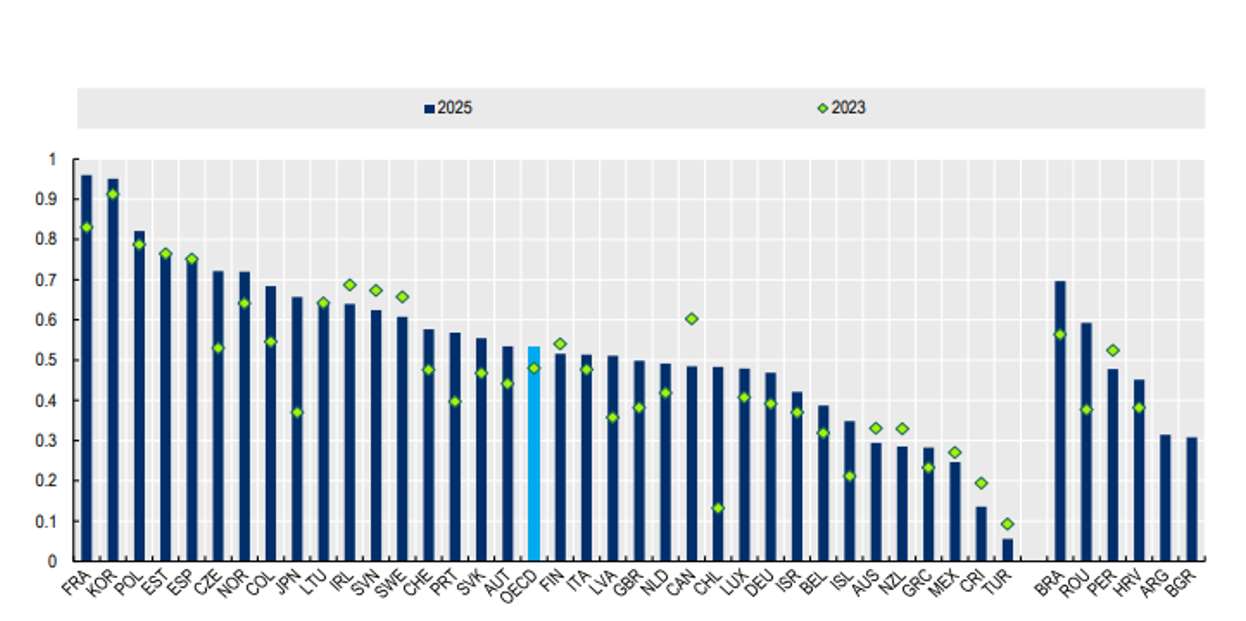

The OECD average rises from 0.48 to 0.53 out of a total score of 1, with almost 60% of countries exceeding the 0.50 threshold. France leads the ranking, followed by South Korea, Poland, Estonia and the aforementioned Spain, as can be seen in the following graph.

Figure 1. Result by country of the Open, Useful and Re-usable Data Index (OURdata). Source: 2025 Open, Useful and Re-usable Data Index (OURdata), OECD.

To arrive at these results, the report analyzes three pillars, as in the 2023 edition:

- Pillar 1: Data availability. It measures the extent to which governments have adopted and implemented formal requirements for publishing open data. It also assesses the involvement of relevant actors in identifying demand for data, and the availability of high-value datasets as open data. It should be noted that, although the report talks about high-value datasets, this is not the same concept used by the EU: the OECD also takes into account other high-impact categories such as health, education, crime and justice, or public finances, among others.

- Pillar 2: Data accessibility. It assesses the existence of requirements to offer open data in reusable formats. In addition, it focuses on the degree to which high-value government datasets are published in a timely manner, in open formats, with standardized and detailed metadata, and through Application Programming Interfaces (APIs). It also analyzes the participation of relevant actors (stakeholders) in the central open data portal and in initiatives to improve its quality.

- Pillar 3: Government support for data reuse. It measures the extent to which governments play a proactive role in promoting the reuse of open data both inside and outside the public sector. Specifically, it analyzes whether partnerships exist and events are organised to raise awareness of open data and promote its reuse; whether public officials are involved in publishing open data and in data analysis and reuse activities; and whether impact assessments of open data are carried out and examples of reuse are collected.

The results show that, as in previous editions, OECD countries perform better in Data Availability (Pillar 1) and Data Accessibility (Pillar 2) than in Government Support for Data Reuse (Pillar 3). Spain, however, is an exception: it ranks third (0.91) in government support, which covers promoting the creation of public value from open data and measuring its real impact. In the other two pillars (1 and 2), it is in 14th position, still above the OECD average.

Keys to the Digital Government Index

The 2025 edition of the DGI assesses the digital maturity of governments. To do this, it analyzes whether they have the necessary foundations to leverage data and technology in a comprehensive transformation of the public sector focused on people.

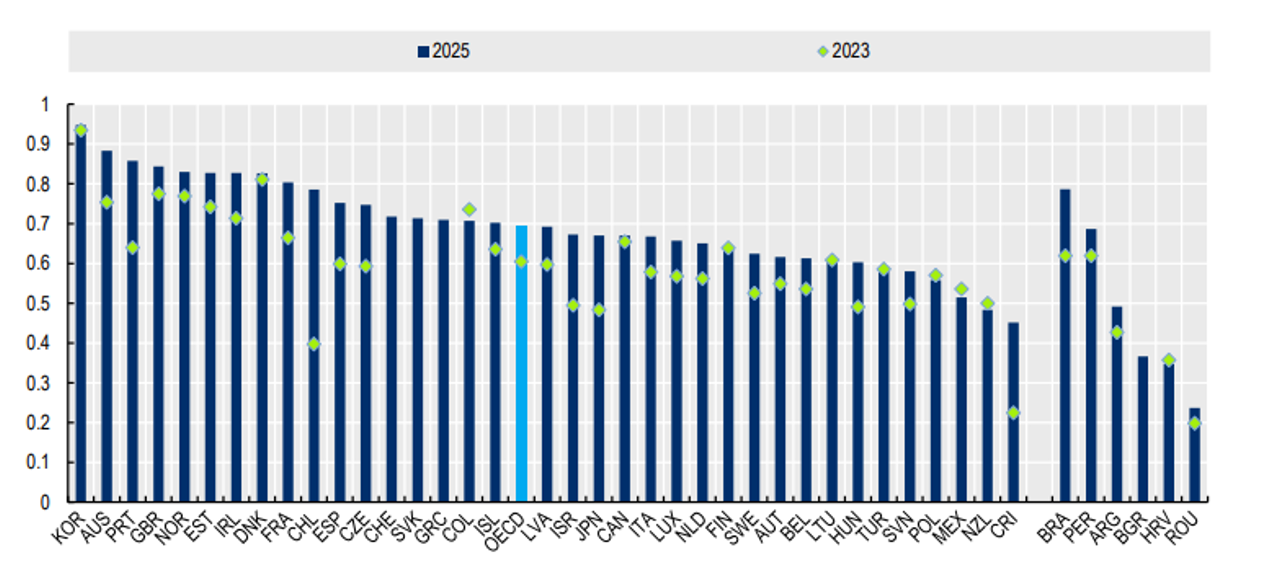

As with the OURdata index, the DGI score is based on the same methodology used in the 2023 edition, which allows a longitudinal evaluation and a comparison of progress between that year and 2025. In this period, the OECD average DGI score rose from 0.61 (out of 1) in 2023 to 0.70 in 2025, an increase of roughly 0.08 to 0.09 points, or about 14%. Almost all governments exceeded the 0.50 threshold, and 17 of them, including Spain, were above the OECD average.

The ranking is headed by South Korea, Australia, Portugal, the United Kingdom and Norway, with Spain in twelfth position, as shown in the following graph.

Figure 2. Result by country of the Digital Government Index. Source: 2025 Digital Government Index (DGI), OECD.

The DGI measures the maturity of digital government along six dimensions:

- Dimension 1: Digital by design. It assesses how digital government policies enable the public sector to use digital tools and data consistently to transform services.

- Dimension 2: Data-driven. It assesses progress in governance and in the enablers for data access, sharing and reuse in the public sector.

- Dimension 3: Government as a platform. It measures the deployment of common components such as guides, tools, data, digital identity, and software to drive consistent transformation of processes and services.

- Dimension 4: Open by default. It assesses openness beyond open data, including the use of technologies and data to communicate and engage with different actors.

- Dimension 5: User-centered. It measures the ability of governments to place people's needs at the centre of the design and delivery of policies and services.

- Dimension 6: Proactivity. It analyzes the ability to anticipate the needs of users and service providers to proactively offer public services.

The DGI assessment focuses on both the strategic and operational levels. Therefore, for each dimension, it examines four cross-cutting facets of the policy cycle: strategic approach (strategies and general frameworks), policy levers (resources and tools), implementation (concrete practices), and monitoring (monitoring and evaluation).

While countries have made progress compared to 2023, the 2025 results show that there is still room to increase the pace and depth of digital government policies. As in 2023, OECD countries excel in the Digital by Design, Data-Driven Public Sector, Government as a Platform and User-Centred dimensions, with widespread improvements in their scores. These advances are explained by stronger governance and use of data, the development of digital infrastructure (such as digital identity systems and service platforms), the consolidation of digital talent in public administrations and the adoption of service standards.

In contrast, the Proactivity and Open by Default dimensions continue to show lower performance, as was already the case in 2023. This is due to weaker results in the use and governance of artificial intelligence in the public sector, in service design and delivery practices, and in open data. Even so, improvements are observed in areas such as the availability of governance instruments for the trustworthy use of AI and the expansion of tools to test and monitor whether services meet users' needs.

In this case, Spain follows the general trend and stands out especially in Digital by Design, where it enters the top 10 in ninth position. The exception is that it also obtains a good score in Proactivity, ranking 12th. In the remaining dimensions it is fairly stable, between positions 13 and 19.

Conclusion

Governments around the world face a common challenge: rigid structures, slow processes, and rules that sometimes make it difficult to respond with agility to today's challenges. As a result, digital modernization has become a strategic necessity.

Embracing digital technologies, connecting data and working with agile methodologies allows governments to be faster, more efficient and more proactive while remaining accountable, and it facilitates collaboration between institutions and countries. The OECD studies help countries identify their areas for improvement, supporting informed decision-making on digital infrastructure, data and the use of AI.

To find out more about the details of Spain's position, we will have to wait for the country notes to be published in the OECD Digital Government Outlook 2026, but for now, we can take note of our strengths (government support for the reuse of data or the development of digital government policies) and the challenges to be faced (continuing to promote the accessibility and availability of data).

Since its origins, the open data movement has focused mainly on promoting the openness of data and promoting its reuse. The objective that has articulated most of the initiatives, both public and private, has been to overcome the obstacles to publishing increasingly complete data catalogues and to ensure that public sector information is available so that citizens, companies, researchers and the public sector itself could create economic and social value.

However, as we have taken steps towards an economy that is increasingly dependent on data and, more recently, on artificial intelligence – and in the near future on the possibilities that autonomous agents bring us through agentic artificial intelligence – priorities have been changing and the focus has been shifting towards issues such as improving the quality of published data.

It is no longer enough for datasets to be published on an open data portal following good practices, or even for the data to meet quality standards at the time of publication. Publication must also meet service levels that turn mere provision into an operational commitment, mitigating the uncertainties that often hinder reuse.

When a developer integrates a real-time transportation data API into their mobility app, or when a data scientist works on an AI model with historical climate data, they are taking a risk if they are uncertain about the conditions under which the data will be available. If at any given time the published data becomes unavailable because the format changes without warning, because the response time skyrockets, or for any other reason, the automated processes fail and the data supply chain breaks, causing cascading failures in all dependent systems.

In this context, the adoption of service level agreements (SLAs) could be the next step for open data portals to evolve from the usual "best effort" model to become critical, reliable and robust digital infrastructures.

What are an SLA and a Data Contract in the context of open data?

In the context of site reliability engineering (SRE), an SLA is a contract negotiated between a service provider and its customers to set the level of quality of the service provided. It is, therefore, a tool that helps both parties reach a consensus on aspects such as response time, availability or the documentation provided.

In an open data portal, where there is often no direct financial consideration, an SLA could help answer questions such as:

- How long will the portal and its APIs be available?

- What response times can we expect?

- How often will the datasets be updated?

- How are changes to metadata, links, and formatting handled?

- How will incidents, changes and notifications to the community be managed?

In addition, in this transition towards greater operational maturity, the still immature concept of the data contract emerges. If the SLA is an agreement that defines service level expectations, the data contract is an implementation that formalises this commitment. A data contract would not only specify the schema and format, but would also act as a safeguard: if a system update attempts to introduce a change that breaks the promised structure or degrades the quality of the data, the data contract makes it possible to detect and block that anomaly before it affects end users.
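To make the idea concrete, a data contract can be as small as a declared set of fields and types against which every release is checked. The sketch below is one possible reading of that idea, not a standard format: CONTRACT, its field names and both helpers are hypothetical. breaking_changes compares two schema versions, and check_records rejects a batch that does not honour the promised structure.

```python
from typing import Dict, List

# Hypothetical contract: field name -> expected Python type
CONTRACT: Dict[str, type] = {"station_id": str, "date": str, "tmax": float}


def breaking_changes(old: Dict[str, type], new: Dict[str, type]) -> List[str]:
    """Fields removed or retyped between two schema versions."""
    problems = []
    for field, typ in old.items():
        if field not in new:
            problems.append(f"removed: {field}")
        elif new[field] is not typ:
            problems.append(f"type changed: {field}")
    return problems  # added fields are treated as compatible


def check_records(records: List[dict], contract: Dict[str, type]) -> None:
    """Raise before publication if any record breaks the contract."""
    errors = []
    for i, rec in enumerate(records):
        for field, typ in contract.items():
            if field not in rec:
                errors.append(f"record {i}: missing {field}")
            elif not isinstance(rec[field], typ):
                errors.append(f"record {i}: {field} is not {typ.__name__}")
    if errors:
        raise ValueError("; ".join(errors))
```

Run as a gate in the publication pipeline, a non-empty result from breaking_changes or an exception from check_records would stop the release, which is exactly the "detect and block" behaviour described above.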

INSPIRE as a starting point: availability, performance and capacity

The European Union's Infrastructure for Spatial Information (INSPIRE) has established one of the world's most rigorous quality-of-service frameworks for geospatial data. Directive 2007/2/EC, known as INSPIRE, currently in its version 5.0, includes technical obligations that could serve as a reference for any modern data portal. In particular, Regulation (EC) No 976/2009 sets out criteria that could well serve as a standard for any strategy for publishing high-value data:

- Availability: Infrastructure must be available 99% of the time during normal operating hours.

- Performance: For a discovery service, the initial response must arrive in no more than 3 seconds.

- Capacity: For a discovery service, the minimum number of simultaneous requests served with guaranteed performance must be 30 per second.
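To get a feel for what the availability figure means in practice, the 1% margin can be turned into a downtime budget. The sketch below assumes, for illustration only, a 30-day month measured around the clock (the regulation's own measurement rules may differ), and estimates availability as the share of successful periodic probes. Both helper names are hypothetical.

```python
from typing import Sequence


def downtime_budget_hours(target: float, period_hours: float) -> float:
    """Hours of unavailability allowed by an availability target."""
    return (1 - target) * period_hours


def measured_availability(probes: Sequence[bool]) -> float:
    """Share of probes (e.g. one per minute) that found the service up."""
    return sum(probes) / len(probes)


month = 30 * 24  # hours
print(f"{downtime_budget_hours(0.99, month):.1f} h")  # 7.2 h
probes = [True] * 995 + [False] * 5
print(measured_availability(probes) >= 0.99)  # True
```

A 99% target therefore tolerates about 7.2 hours of unavailability in such a month, which is a useful figure when planning maintenance windows.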

To help comply with these service standards, the European Commission offers tools such as the INSPIRE Reference Validator. This tool helps not only to verify syntactic interoperability (that the XML or GML is well formed), but also to ensure that network services comply with the technical specifications that allow those SLAs to be measured.

At this point, the demanding SLAs of the European spatial data infrastructure raise the question of whether we should aim for the same level for critical health, energy or mobility data, or for any other high-value dataset.

What an SLA could cover on an open data platform

When we talk about open datasets in the broad sense, the availability of the portal is a necessary condition, but not sufficient. Many issues that affect the reuser community are not complete portal crashes, but more subtle errors such as broken links, datasets that are not updated as often as indicated, inconsistent formats between versions, incomplete metadata, or silent changes in API behavior or dataset column names.

Therefore, it would be advisable to complement the SLAs of the portal infrastructure with "data health" SLAs that can be based on already established reference frameworks such as:

- Quality models such as ISO/IEC 25012, which breaks data quality down into dimensions such as accuracy (the data represents reality), completeness (no necessary values are missing) and consistency (there are no contradictions between tables or formats), and allows them to be converted into measurable requirements.

- The FAIR principles (Findable, Accessible, Interoperable and Reusable). These principles emphasise that digital assets should not only be available, but also findable through persistent identifiers, accessible under clear protocols, interoperable through the use of standard vocabularies, and reusable thanks to clear licences and documented provenance. The FAIR principles can be put into practice by systematically measuring the quality of the metadata that makes discovery, access and interoperability possible. For example, the Metadata Quality Assurance (MQA) service of data.europa.eu automatically evaluates catalog metadata, calculates metrics and provides recommendations for improvement.
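As an example of turning one of those ISO/IEC 25012 dimensions into a number, completeness can be measured as the share of required values that are actually present. This is a simplified sketch: the list of required fields and the rule that None or an empty string counts as missing are assumptions, and the helper name is hypothetical.

```python
from typing import Iterable, Sequence


def completeness(records: Iterable[dict], required: Sequence[str]) -> float:
    """Fraction of required cells that are neither absent, None nor ''."""
    total = present = 0
    for rec in records:
        for field in required:
            total += 1
            value = rec.get(field)
            if value is not None and value != "":
                present += 1
    return present / total if total else 1.0
```

A "data health" SLO could then be phrased as, for instance, completeness of at least a given threshold for the fields each dataset declares as mandatory.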

To make these concepts operational, we can focus on four examples where establishing specific service commitments would provide a differential value:

- Catalog compliance and currency: The SLA could ensure that the metadata is always aligned with the data it describes. A compliance commitment would ensure that the portal undergoes periodic validations (following specifications such as DCAT-AP-ES or HealthDCAT-AP) to prevent the documentation from becoming obsolete with respect to the actual resource.

- Schema stability and versioning: One of the biggest enemies of automated reuse is the "silent change". If a column is renamed or a data type changes, data ingestion flows fail immediately. A service level commitment might include a versioning policy: any change that breaks compatibility would be announced in advance and, preferably, the previous version would be kept available in parallel for a reasonable period.

- Freshness and refresh frequency: It's not uncommon to find datasets labelled as daily that were last modified months ago. A good practice could be to define publication latency indicators. A possible SLA would set the average time between updates and rely on alert systems that automatically notify when a dataset has not been refreshed at the frequency declared in its metadata.

- Success rate: In the world of data APIs, receiving an HTTP 200 (OK) code is not enough to determine whether a response is valid. If the response is, for example, a JSON document with no content, the service is not useful. The service level would have to measure the rate of successful responses with valid content, ensuring that the endpoint not only responds but also delivers the expected information.
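Two of these commitments lend themselves to automatic checks. The sketch below flags a dataset as stale when its last modification is older than its declared update frequency allows, and counts a response as a success only if it is an HTTP 200 carrying a non-empty JSON body. The frequency labels, the 12-hour grace period and the function names are assumptions chosen for illustration.

```python
import json
from datetime import datetime, timedelta, timezone

# Hypothetical declared frequencies and the maximum gap they allow
MAX_AGE = {
    "daily": timedelta(days=1),
    "weekly": timedelta(weeks=1),
    "monthly": timedelta(days=31),
}


def is_stale(frequency: str, last_modified: datetime, now: datetime,
             grace: timedelta = timedelta(hours=12)) -> bool:
    """True if the dataset has not been refreshed as its metadata promises."""
    return now - last_modified > MAX_AGE[frequency] + grace


def is_valid_success(status: int, body: bytes) -> bool:
    """An HTTP 200 alone is not enough: the body must be non-empty JSON."""
    if status != 200:
        return False
    try:
        data = json.loads(body)
    except ValueError:  # includes json.JSONDecodeError
        return False
    # empty list, empty object, empty string and null count as failures
    return data not in (None, "", [], {})


now = datetime.now(timezone.utc)
print(is_stale("daily", now - timedelta(days=90), now))  # True
```

Feeding is_valid_success into the success-rate indicator, rather than the raw status code, is what distinguishes "the endpoint responds" from "the endpoint delivers data".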

A first step with SLI, SLO and SLA: measure before committing

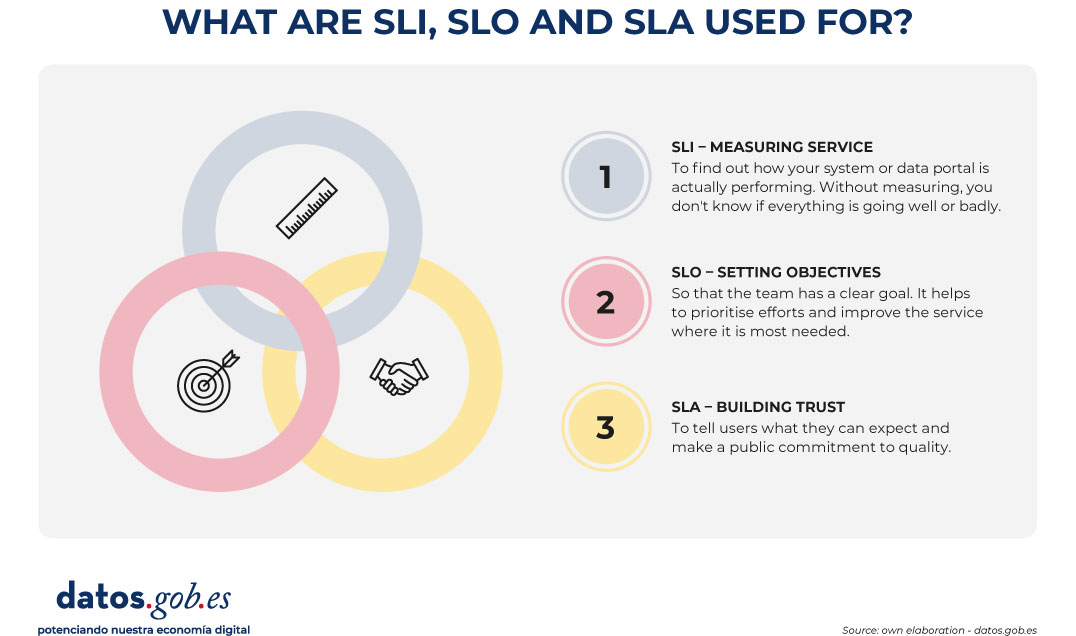

Since establishing these types of commitments is genuinely complex, one way to move forward gradually is to adopt a pragmatic approach based on industry best practices. For example, reliability engineering proposes a hierarchy of three concepts that helps avoid unrealistic commitments:

- Service Level Indicator (SLI): it is the measurable, quantitative indicator. It represents the technical reality at a given moment. Examples of SLIs in open data could be the "percentage of successful API requests", the "p95 latency" (the response time within which 95% of requests complete) or the "percentage of download links that do not return an error".

- Service Level Objective (SLO): this is the internal objective set for this indicator. For example: "we want 99.5% of downloads to work correctly" or "p95 latency must be less than 800ms". It is the goal that guides the work of the technical team.

- Service Level Agreement (SLA): is the public and formal commitment to those objectives. This is the promise that the data portal makes to its community of reusers and that includes, ideally, the communication channels and the protocols for action in the event of non-compliance.

Figure 1. Visual to explain the difference between SLI, SLO and SLA. Source: own elaboration - datos.gob.es.
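The hierarchy can be sketched in a few lines: SLIs are computed from a log of requests and then compared with SLOs. The thresholds below are the example SLOs quoted above (99.5% success, p95 below 800 ms); the Request log format is an assumption, and p95 uses the nearest-rank definition, one of several in common use.

```python
from typing import List, NamedTuple


class Request(NamedTuple):
    ok: bool           # e.g. HTTP 200 with valid content
    latency_ms: float


SLO_SUCCESS_RATE = 0.995
SLO_P95_MS = 800.0


def success_rate(log: List[Request]) -> float:
    return sum(r.ok for r in log) / len(log)


def p95(values: List[float]) -> float:
    """Nearest-rank 95th percentile of a non-empty sample."""
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) in integers
    return ordered[rank - 1]


def evaluate(log: List[Request]) -> dict:
    """Compute the SLIs and check each one against its SLO."""
    rate = success_rate(log)
    latency = p95([r.latency_ms for r in log])
    return {
        "success_rate": rate,
        "p95_ms": latency,
        "meets_success_slo": rate >= SLO_SUCCESS_RATE,
        "meets_latency_slo": latency < SLO_P95_MS,
    }
```

Publishing only the SLO results internally at first, and promoting them to an SLA once they are consistently met, mirrors the gradual path described in the text.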

This distinction is especially valuable in the open data ecosystem due to the hybrid nature of a service in which not only an infrastructure is operated, but the data lifecycle is managed.

In many cases, the first step might be not so much to publish an ambitious SLA right away as to start by defining SLIs and setting SLOs. Once measurement is automated and service levels have become stable and predictable, it would be time to turn them into a public commitment (SLA).

Ultimately, implementing service levels in open data could have a multiplier effect. Not only would it reduce technical friction for developers and improve reuse rates, it would also make it easier to integrate public data into AI systems and autonomous agents. New uses such as evaluating generative AI systems, generating and validating synthetic datasets, or even improving the quality of open data itself would benefit greatly.

Establishing a data SLA would, above all, be a powerful message: it would mean that the public sector not only publishes data as an administrative act, but operates it as a digital service that is highly available, reliable, predictable and, ultimately, prepared for the challenges of the data economy.

Content created by Jose Luis Marín, Senior Consultant in Data, Strategy, Innovation & Digitalisation. The content and views expressed in this publication are the sole responsibility of the author.

The Open Data Maturity Report is an annual evaluation that since 2015 has analysed the development and evolution of open data initiatives in the European Union. Coordinated by the European Data Portal (data.europa.eu) and carried out in collaboration with the European Commission, this report assesses 36 participating countries: the 27 EU Member States, 3 European Free Trade Association countries (Iceland, Norway and Switzerland) and 6 candidate countries.

The report assesses four key dimensions:

- Policy (strategies and regulatory frameworks)

- Portal (functionalities and usability)

- Quality (metadata and data standards)

- Impact (reuse and benefits generated)

In the 2025 edition, Spain stood out with a score of 100% in the impact dimension, compared with a European average of 82.1%. Overall, it ranks fifth among EU countries with a total score of 95.6%, placing it in the group of trendsetter countries.

A distinctive aspect of this edition of the report is the incorporation of a descriptive and contextual approach that complements the traditional normative model, creating clusters of countries to allow fairer comparisons. These clusters group countries with similar economic, social, political and digital characteristics, and are based on profiles that explain how open data policies are implemented, not just what results are obtained. The aim is to invite countries to look at their peers, learn from comparable experiences and promote peer-to-peer learning that is more effective than learning based solely on general rankings.

Beyond the scores, the report includes use cases and good practices carried out by countries to open and reuse public sector data. In this post, we highlight some of them that can serve as inspiration to keep improving our open data ecosystem.

Croatia's inclusive and coordinated governance

One of the most noteworthy aspects of the 2025 report is how some countries have managed to establish strong governance structures that ensure coordination between different levels of administration and multi-stakeholder participation.

Croatia stands out for having established in 2025 the Coordination for the Implementation of the Open Data Policy, a multisectoral body that monitors regulatory compliance, improves data accessibility and supports authorities. This model guarantees broad participation and keeps national and local initiatives aligned. The national portal functions as a central hub, complemented by local portals such as the one for the city of Zagreb. In addition, knowledge exchange is encouraged through coordination meetings, regular updates and collaborations with universities, such as the Faculty of Electrical and Computer Engineering at the University of Zagreb.

France's complete data governance structure

France leads the Open Data Maturity Report ranking thanks, among other factors, to its comprehensive governance model, which integrates open data roles at all administrative levels. At the national level, the General Data Administrator coordinates public data policy and oversees a network of chief data officers in each ministry. Etalab, the national open data and digital innovation unit, manages this network and provides technical support.

At the ministerial level, each chief data officer manages the ministry's data policy (openness, quality and reuse), supported by Etalab. Some ministries also appoint dedicated open data officers and data stewards who handle the technical and organizational aspects of publication. At the local level, each regional representative (préfet) designates a contact person for data, algorithms and source code. The Interministerial Digital Directorate also coordinates a network of API managers to enable dynamic access to data. France also ensures that its metadata complies with DCAT-AP, as Spain does.

Effective implementation: from strategy to action in Italy

Italian public administrations are obliged to adopt data publication plans, following national guidelines, which prioritise high-value datasets, dynamic data and user-requested information. The implementation is supported by a robust monitoring system. The Agency for Digital Italy (AgID) tracks progress through its Digital Transformation Dashboard, which reports the growth of datasets in dati.gov.it.

Policies are updated regularly: the latest three-year plan (2024-2026) was adopted in December 2024. To assist data holders and officials, AgID provides guidance, conducts webinars, and launched the AgID Academy to strengthen digital competencies.

Culture of reuse in Poland and Ukraine

A crucial aspect of encouraging open data is providing practical resources that guide public organizations throughout the process. Poland stands out for its open data handbook, the second edition of which was published by the Ministry of Digital Affairs.

This updated handbook introduces new categories of data, explains how regulations shape open data policies and presents Poland's national data portal.

The handbook works as a practical guide for public offices: it walks them through their open data responsibilities, fosters a culture of reuse and includes tools such as an openness checklist for compliance.

Ukraine has also adopted an approach focused on reuse and on producing resources that encourage it. The Ministry of Digital Transformation has developed a comprehensive set of resources and tools, including detailed technical documentation and templates that help prepare and publish datasets aligned with national standards, covering metadata structuring, licensing and compliance with the DCAT-AP standard.

The national portal includes functionalities for tracking the publication and reuse of datasets. Data publishers receive feedback on the quality and completeness of their metadata, which helps them identify areas for improvement. In addition, regular training sessions and workshops are organized to build publishers' skills and promote a shared understanding of open data principles and technical requirements.
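The kind of metadata feedback described above can be illustrated with a minimal sketch. It assumes a dataset record written as a plain Python dict with compact JSON-LD-style keys, and it checks only a simplified subset of DCAT-AP: `dct:title` and `dct:description` as mandatory for a dataset, a few recommended properties, and `dcat:accessURL` on every distribution. The function name `metadata_feedback` and the sample record are hypothetical; this is not how the Ukrainian portal actually implements its checks.

```python
from __future__ import annotations

from typing import Any

# Simplified subset of DCAT-AP rules for a dcat:Dataset (assumption: not the full profile).
MANDATORY = ["dct:title", "dct:description"]
RECOMMENDED = ["dcat:contactPoint", "dcat:distribution", "dcat:keyword", "dct:publisher", "dcat:theme"]


def metadata_feedback(dataset: dict[str, Any]) -> dict[str, list]:
    """Report missing properties, in the spirit of portal quality feedback (hypothetical helper)."""

    def missing(props: list[str]) -> list[str]:
        # A property counts as missing if it is absent or empty.
        return [p for p in props if not dataset.get(p)]

    return {
        "missing_mandatory": missing(MANDATORY),
        "missing_recommended": missing(RECOMMENDED),
        # Each dcat:Distribution should carry a dcat:accessURL; report the offending positions.
        "distributions_without_accessURL": [
            i
            for i, dist in enumerate(dataset.get("dcat:distribution", []))
            if not dist.get("dcat:accessURL")
        ],
    }


# Illustrative record for a hypothetical dataset (not real data).
record = {
    "@type": "dcat:Dataset",
    "dct:title": "Bus stops",
    "dct:description": "Locations of public bus stops (illustrative record).",
    "dcat:keyword": ["mobility", "transport"],
    "dcat:distribution": [
        {"@type": "dcat:Distribution", "dcat:accessURL": "https://example.org/bus-stops.csv"},
        {"@type": "dcat:Distribution"},
    ],
}

feedback = metadata_feedback(record)
```

For this record, the feedback lists `dcat:contactPoint`, `dct:publisher` and `dcat:theme` as missing recommended properties and flags the second distribution for lacking an access URL. A production validator would normally check RDF against the SHACL shapes published with DCAT-AP instead of inspecting dict keys.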

Albania: comprehensive redesign of the portal

This country exemplifies the maturity improvements that can be achieved through a comprehensive update of the national open data portal. The large-scale revamp of the portal improved usability, transparency, and user engagement.

The updated portal now features a dataset rating system (1 to 5 stars), a dedicated news section on open data topics and multiple notification options, including RSS and Atom feeds and email. Users can track the progress of their data requests, which are actively monitored, with responses summarized in publicly available reports.

To better understand and respond to user needs, the portal team tracks search keywords, analyzes traffic, and conducts user surveys and workshops.

Lithuania: official monitoring methodology

One of the key practices highlighted in the report is the adoption of formal frameworks and structured methodologies that provide a systematic way to assess the impact of open data. Lithuania stands out for a comprehensive methodology that defines how institutions should report on their open data activities, ensuring consistency, accountability and compliance across the public sector.

In addition, the Ministry of Economy and Innovation estimated the economic impact of open data. This analysis provides quantifiable evidence of the contribution of open data to innovation, productivity and job creation. The results show that open data in Lithuania creates a market value of approximately €566 million (around 1.2% of GDP) and supports close to 8,000 value-added jobs.

Germany: systematic funding for collaboration

Germany's mFund initiative provides structured financial support for mobility-related data projects, fostering partnerships beyond government.

An example is the miki (mobil im Kiez) project, which develops navigation and orientation solutions for people with limited mobility through the active engagement of civil society. The team created a national prototype with visualizations for cities such as Cologne, Kassel, Munich, Potsdam and Saarbrücken, showing building barriers and road surfaces. These visualizations will be integrated into Wheelmap.org, helping individuals with mobility disabilities.

Conclusion

In conclusion, the Open Data Maturity Report 2025 shows that the European countries with the most mature open data ecosystems share common characteristics: inclusive and well-structured governance, effective implementation supported by planning and monitoring, practical support for data publishers, continuous technical innovation in portals and, crucially, systematic impact measurement.

The good practices highlighted here are transferable and adaptable. We invite Spanish public administrations to explore these experiences, adapt them to their local contexts and share their own innovations, thus contributing to an increasingly robust and impact-oriented European open data ecosystem.

At the crossroads of the 21st century, cities are facing challenges of enormous magnitude. Explosive population growth, rapid urbanization and pressure on natural resources are generating unprecedented demand for innovative solutions to build and manage more efficient, sustainable and livable urban environments.

Added to these challenges is the impact of climate change on cities. As the world experiences alterations in weather patterns, cities must adapt and transform to ensure long-term sustainability and resilience.

One of the most direct manifestations of climate change in the urban environment is the increase in temperatures. The urban heat island effect, aggravated by the concentration of buildings and asphalt surfaces that absorb and retain heat, is intensified by the global increase in temperature. Not only does this affect quality of life by increasing cooling costs and energy demand, but it can also lead to serious public health problems, such as heat stroke and the aggravation of respiratory and cardiovascular diseases.

Changing precipitation patterns are another critical effect of climate change on cities. Heavy rainfall episodes and more frequent and severe storms can lead to urban flooding, especially in areas with insufficient or outdated drainage infrastructure. Flooding causes significant structural damage, disrupts daily life, affects the local economy and increases public health risks through the spread of waterborne diseases.

In the face of these challenges, urban planning and design must evolve. Cities are adopting sustainable urban planning strategies that include the creation of green infrastructure, such as parks and green roofs, capable of mitigating the heat island effect and improving water absorption during episodes of heavy rainfall. In addition, the integration of efficient public transport systems and the promotion of non-motorised mobility are essential to reduce carbon emissions.

The challenges described also influence building regulations and building codes. New buildings must meet higher standards of energy efficiency, resistance to extreme weather conditions and reduced environmental impact. This involves the use of sustainable materials and construction techniques that not only reduce greenhouse gas emissions, but also offer safety and durability in the face of extreme weather events.

In this context, urban digital twins have established themselves as one of the key tools to support planning, management and decision-making in cities. Their potential is wide and cross-cutting: from simulating urban growth scenarios to analysing climate risks, evaluating regulatory impacts or optimizing public services. However, beyond the technological discourse and the 3D visualizations, the real viability of an urban digital twin depends on a fundamental data governance issue: the availability, quality and consistent use of standardized open data.

What do we mean by urban digital twin?

An urban digital twin is not simply a three-dimensional model of the city or an advanced visualization platform. It is a structured and dynamic digital representation of the urban environment, which integrates:

- The geometry and semantics of the city (buildings, infrastructures, plots, public spaces).

- Geospatial reference data (cadastre, planning, networks, environment).

- Temporal and contextual information, which allows the evolution of the territory to be analysed and scenarios to be simulated.

- In certain cases, updatable data streams from sensors, municipal information systems or other operational sources.

From a standards perspective, an urban digital twin can be understood as an ecosystem of interoperable data and services, where different models, scales and domains (urban planning, building, mobility, environment, energy) are connected in a coherent way. Its value lies not so much in the specific technology used as in its ability to align heterogeneous data under common, reusable and governable models.

In addition, the integration of real-time data into digital twins allows for more efficient city management in emergency situations. From natural disaster management to coordinating mass events, digital twins provide decision-makers with a real-time view of the urban situation, facilitating a rapid and coordinated response.

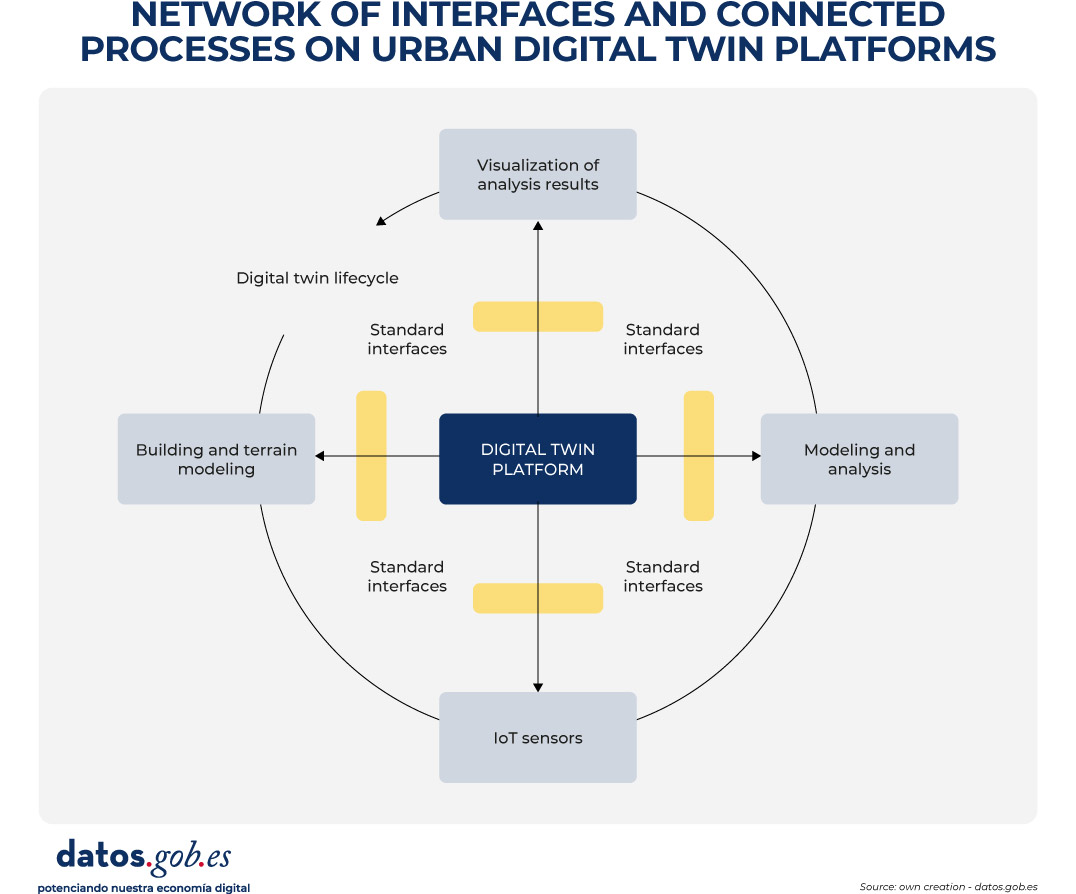

In order to contextualize the role of standards and facilitate the understanding of the inner workings of an urban digital twin, Figure 1 presents a conceptual diagram of the network of interfaces, data models, and processes that underpin it. The diagram illustrates how different sources of urban information – geospatial reference data, 3D city models, regulatory information and, in certain cases, dynamic flows – are integrated through standardised data structures and interoperable services.

Figure 1. Conceptual diagram of the network of interfaces and connected processes in urban digital twin platforms. Source: own elaboration – datos.gob.es.

In these environments, CityGML and CityJSON act as urban information models that describe the city digitally in a structured and understandable way. In practice, they work as "common languages" for representing buildings, infrastructures and public spaces, not only in terms of their shape (geometry) but also in terms of their meaning (for example, whether an object is a residential building, a public road or a green area). As a result, these models form the basis for urban analyses and for simulating different scenarios.
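To make the distinction between geometry and meaning concrete, here is a minimal sketch that works with a CityJSON document as plain JSON. The document below is hypothetical and only loosely follows the CityJSON 2.0 layout (`CityObjects`, integer `vertices` decoded with `transform`, a `MultiSurface` boundary of vertex indices); it has not been validated against the official schema, and the helper functions are illustrative names, not part of any CityJSON library.

```python
from collections import Counter

# Hypothetical, minimal CityJSON-style document (illustrative values, not real data).
# Vertices are stored as integers; "transform" converts them to real coordinates.
city = {
    "type": "CityJSON",
    "version": "2.0",
    "transform": {"scale": [0.001, 0.001, 0.001], "translate": [430000.0, 4580000.0, 0.0]},
    "CityObjects": {
        "building-1": {
            "type": "Building",
            "attributes": {"function": "residential", "measuredHeight": 12.5},
            # One surface with one ring, referencing vertex indices 0..3.
            "geometry": [{"type": "MultiSurface", "lod": "1", "boundaries": [[[0, 1, 2, 3]]]}],
        },
        "building-2": {
            "type": "Building",
            "attributes": {"function": "office"},
            "geometry": [{"type": "MultiSurface", "lod": "1", "boundaries": [[[0, 1, 2, 3]]]}],
        },
        "road-1": {
            "type": "Road",
            "geometry": [{"type": "MultiSurface", "lod": "1", "boundaries": [[[0, 1, 2, 3]]]}],
        },
    },
    "vertices": [[0, 0, 0], [10000, 0, 0], [10000, 8000, 0], [0, 8000, 0]],
}


def count_by_type(cm):
    """Semantics: how many objects of each class (Building, Road, ...)."""
    return Counter(obj["type"] for obj in cm["CityObjects"].values())


def buildings_with_function(cm, function):
    """Filter buildings by a semantic attribute rather than by their shape."""
    return [
        oid
        for oid, obj in cm["CityObjects"].items()
        if obj["type"] == "Building" and obj.get("attributes", {}).get("function") == function
    ]


def decode_vertex(cm, index):
    """Geometry: real coordinate = integer vertex * scale + translate."""
    scale = cm["transform"]["scale"]
    translate = cm["transform"]["translate"]
    return tuple(v * s + t for v, s, t in zip(cm["vertices"][index], scale, translate))
```

Calling `buildings_with_function(city, "residential")` returns `["building-1"]`, a query about meaning, while `decode_vertex(city, 2)` recovers a real-world coordinate, a query about shape. Both answers come from the same shared model, which is what lets different applications reuse it.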

In order for these three-dimensional models to be visualized in an agile way in web browsers and digital applications, especially when dealing with large volumes of information, 3D Tiles can be incorporated. This standard allows urban models to be divided into manageable fragments, facilitating their progressive loading and interactive exploration, even on devices with limited capacities.
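The progressive loading that 3D Tiles enables rests on a tree of tiles, each with a `geometricError` that shrinks as detail increases. The sketch below uses a hypothetical `tileset.json` structure, with illustrative URIs and bounding boxes, and a simplified selection rule: with `REPLACE` refinement, descend into children while a tile is coarser than a fixed error threshold. Real viewers instead compute a screen-space error from the camera distance and viewport, and they also cull tiles outside the view frustum. This is a deliberate simplification, not the algorithm of any particular renderer.

```python
# Hypothetical tileset.json content (3D Tiles 1.1 layout, illustrative URIs and boxes).
tileset = {
    "asset": {"version": "1.1"},
    "geometricError": 500.0,
    "root": {
        "boundingVolume": {"box": [0, 0, 0, 1000, 0, 0, 0, 1000, 0, 0, 0, 100]},
        "geometricError": 100.0,
        "refine": "REPLACE",
        "content": {"uri": "city.glb"},
        "children": [
            {
                "boundingVolume": {"box": [-500, 0, 0, 500, 0, 0, 0, 1000, 0, 0, 0, 100]},
                "geometricError": 10.0,
                "content": {"uri": "district_a.glb"},
            },
            {
                "boundingVolume": {"box": [500, 0, 0, 500, 0, 0, 0, 1000, 0, 0, 0, 100]},
                "geometricError": 20.0,
                "content": {"uri": "district_b.glb"},
                "children": [
                    {
                        "boundingVolume": {"box": [500, 0, 0, 250, 0, 0, 0, 500, 0, 0, 0, 100]},
                        "geometricError": 0.0,
                        "content": {"uri": "block_b1.glb"},
                    }
                ],
            },
        ],
    },
}


def select_tiles(tile, max_error):
    """Simplified REPLACE refinement: refine a tile while it is too coarse and has children."""
    children = tile.get("children", [])
    if tile["geometricError"] > max_error and children:
        selected = []
        for child in children:
            selected.extend(select_tiles(child, max_error))
        return selected
    # Tile is detailed enough (or cannot be refined further): load its own content.
    return [tile["content"]["uri"]] if "content" in tile else []
```

A loose threshold such as `select_tiles(tileset["root"], 200.0)` loads only `city.glb`. A stricter one such as `15.0` loads `district_a.glb` and `block_b1.glb`. This is how a low-capacity device can start with a coarse model and fetch detail only where it is needed.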

The access, exchange and reuse of all this information is usually articulated through OGC APIs, which can be understood as standardised interfaces that allow different applications to consult and combine urban data in a consistent way. These interfaces make it possible, for example, for an urban planning platform, a climate analysis tool or a citizen viewer to access the same data without the need to duplicate or transform it in a specific way.
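As an illustration of how an application might consume such an interface, the sketch below builds a request to the `/collections/{collectionId}/items` resource defined by OGC API Features, filtered with the standard `bbox` and `limit` parameters, and follows `rel="next"` links to page through results. The server URL `https://example.org/ogcapi`, the `buildings` collection and the sample response are hypothetical; only `fetch_all` performs network requests.

```python
import json
from urllib.parse import quote, urlencode
from urllib.request import urlopen

BASE_URL = "https://example.org/ogcapi"  # hypothetical server; replace with a real endpoint


def items_url(base, collection, bbox, limit=100):
    """Build an /items request filtered by bounding box (lon/lat order for the default CRS84)."""
    params = {"bbox": ",".join(str(v) for v in bbox), "limit": limit}
    return f"{base}/collections/{quote(collection)}/items?{urlencode(params)}"


def next_page(feature_collection):
    """Return the href of the rel="next" link, or None on the last page."""
    for link in feature_collection.get("links", []):
        if link.get("rel") == "next":
            return link.get("href")
    return None


def fetch_all(url):
    """Follow rel="next" links and yield every GeoJSON feature (performs network requests)."""
    while url:
        with urlopen(url) as response:
            page = json.load(response)
        yield from page.get("features", [])
        url = next_page(page)


# Hypothetical first page of a response, shaped as a GeoJSON FeatureCollection.
sample_page = {
    "type": "FeatureCollection",
    "features": [
        {"type": "Feature", "id": "b1", "geometry": None, "properties": {"use": "residential"}}
    ],
    "links": [
        {"rel": "self", "href": "https://example.org/ogcapi/collections/buildings/items"},
        {"rel": "next", "href": "https://example.org/ogcapi/collections/buildings/items?page=2"},
    ],
}
```

The same `items_url(BASE_URL, "buildings", (2.17, 41.39, 2.2, 41.41), limit=10)` request could serve a planning platform, a climate tool or a citizen viewer alike. Because the interface is standardized, none of them needs a custom export of the data.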

In this way, the diagram reflects the flow of data from the original sources to the final applications, showing how the use of open standards allows for a clear separation of data, services, and use cases. This separation is key to ensuring interoperability between systems, the scalability of digital solutions and the sustainability of the urban digital twin over time, aspects that are addressed transversally in the rest of the document.

Real example: Urban regeneration project in Barcelona

An example of the impact of urban digital twins on urban construction and management can be found in the urban regeneration project of the Plaza de las Glòries Catalanes, in Barcelona (Spain). This project aimed to transform one of the city's most iconic urban areas into a more accessible, greener and sustainable public space.

Figure 2. General view. Image by the joint venture Fuses Viader + Perea + Mansilla + Desvigne.

By using digital twins from the initial phases of the project, the design and planning teams were able to create detailed digital models that represented not only the geometry of existing buildings and infrastructure, but also the complex interactions between different urban elements, such as traffic, public transport and pedestrian areas.

These models not only facilitated the visualization and communication of the proposed design among all stakeholders, but also allowed different scenarios to be simulated and their impact on mobility, air quality, and walkability to be assessed. As a result, more informed decisions could be made, contributing decisively to the overall success of the urban regeneration initiative.

The critical role of open data in urban digital twins

In the context of urban digital twins, open data should not be understood as an optional complement or as a one-off action of transparency, but as the structural basis on which sustainable, interoperable and reusable digital urban systems are built over time. An urban digital twin can only fulfil its function as a planning, analysis and decision-support tool if the data that feeds it is available, well defined and governed according to common principles.

When a digital twin develops without a clear open data strategy, it tends to become a closed system and dependent on specific technology solutions or vendors. In these scenarios, updating information is costly and complex, reuse in new contexts is limited, and the twin quickly loses its strategic value, becoming obsolete in the face of the real evolution of the city it intends to represent. This lack of openness also hinders integration with other systems and reduces the ability to adapt to new regulatory, social or environmental needs.

One of the main contributions of urban digital twins is their ability to base public decisions on traceable and verifiable data. When supported by accessible and understandable open data, these systems allow us to understand not only the outcome of a decision, but also the data, models and assumptions that support it, integrating geospatial information, urban models, regulations and, in certain cases, dynamic data. This traceability is key to accountability, the evaluation of public policies and the generation of trust at both the institutional and citizen levels. Conversely, in the absence of open data, the analyses and simulations that support urban decisions become opaque, making it difficult to explain how and why a certain conclusion has been reached and weakening confidence in the use of advanced technologies for urban management.

Urban digital twins also require the collaboration of multiple actors – administrations, companies, universities and citizens – and the integration of data from different administrative levels and sectoral domains. Without an approach based on standardized open data, this collaboration is hampered by technical and organizational barriers: each actor tends to use different formats, models, and interfaces, which increases integration costs and slows down the creation of reuse ecosystems around the digital twin.

Another significant risk associated with the absence of open data is the increase in technological dependence and the consolidation of information silos. Digital twins built on non-standardized or restricted access data are often tied to proprietary solutions, making it difficult to evolve, migrate, or integrate with other systems. From the perspective of data governance, this situation compromises the sovereignty of urban information and limits the ability of administrations to maintain control over strategic digital assets.

Conversely, when urban data is published as standardised open data, the digital twin can evolve as a public data infrastructure, shared, reusable and extensible over time. This implies not only that the data is available for consultation or visualization, but that it follows common information models, with explicit semantics, coherent geometry and well-defined access mechanisms that facilitate its integration into different systems and applications.

This approach allows the urban digital twin to act as a shared data foundation on which multiple use cases can be built (urban planning, licence management, environmental assessment, climate risk analysis, mobility or citizen participation) without duplicating effort or creating inconsistencies. The systematic reuse of information not only optimises resources but also guarantees coherence between the different public policies that affect the territory.

From a strategic perspective, urban digital twins based on standardised open data also make it possible to align local policies with the European principles of interoperability, reuse and data sovereignty. The use of open standards and common information models facilitates the integration of digital twins into wider initiatives, such as sectoral data spaces or digitalisation and sustainability strategies promoted at European level. In this way, cities do not develop isolated solutions, but digital infrastructures coherent with higher regulatory and strategic frameworks, reinforcing the role of the digital twin as a transversal, transparent and sustainable tool for urban management.

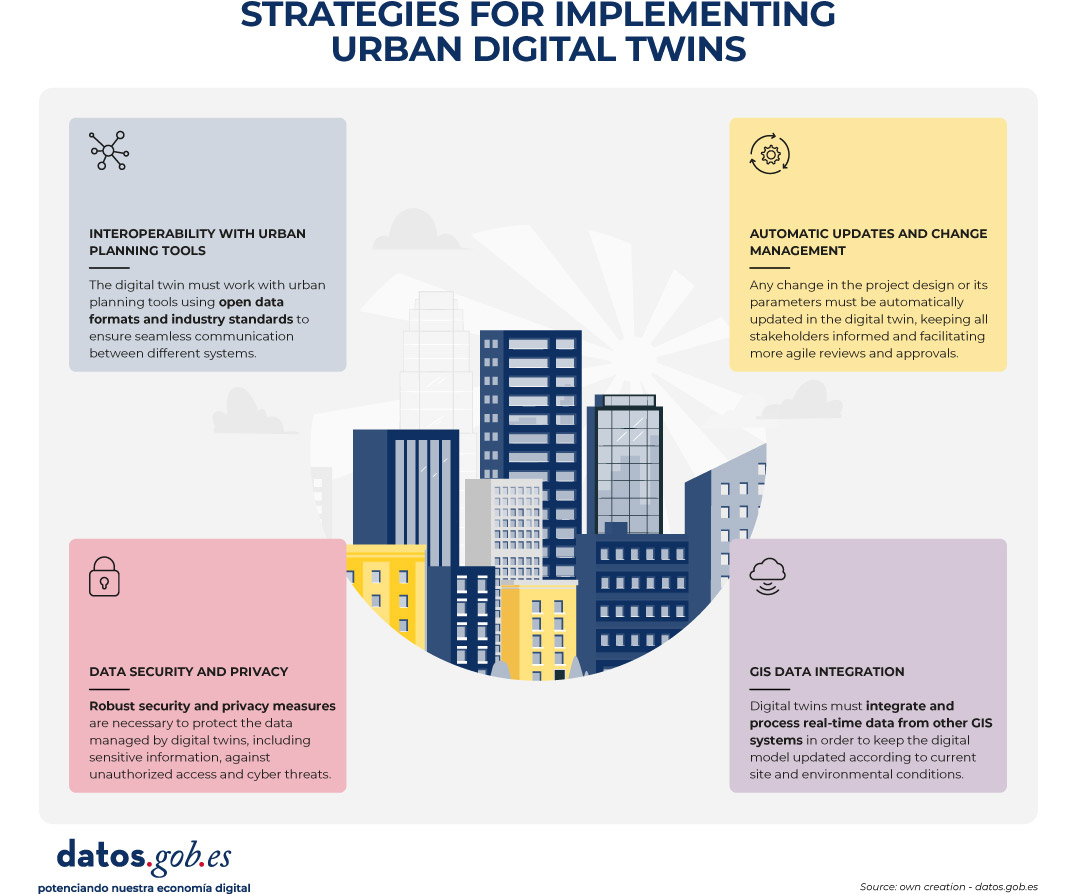

Figure 3. Strategies to implement urban digital twins. Source: own elaboration – datos.gob.es.

Conclusion

Urban digital twins represent a strategic opportunity to transform the way cities plan, manage and make decisions about their territory. However, their true value lies not in the technological sophistication of the platforms or the quality of the visualizations, but in the robustness of the data approach on which they are built.

Urban digital twins can only be consolidated as useful and sustainable tools when they are supported by standardised, well-governed open data designed from the ground up for interoperability and reuse. In the absence of these principles, digital twins risk becoming closed, difficult to maintain, poorly reusable solutions that are disconnected from the actual processes of urban governance.

The use of common information models, open standards and interoperable access mechanisms allows the digital twin to evolve as a public data infrastructure, capable of serving multiple public policies and adapting to social, environmental and regulatory changes affecting the city. This approach reinforces transparency, improves institutional coordination, and facilitates decision-making based on verifiable evidence.

In short, betting on urban digital twins based on standardised open data is not only a technical decision, but also a public policy decision in terms of data governance. It is this vision that will enable digital twins to contribute effectively to addressing major urban challenges and generating lasting public value for citizens.

The Cabildo Insular de Tenerife has announced the II Open Data Contest: Development of APPs, an initiative that rewards the creation of web and mobile applications that take advantage of the datasets available on its datos.tenerife.es portal. This call represents a new opportunity for developers, entrepreneurs and innovative entities that want to transform public information into digital solutions of value for society. In this post, we tell you the details about the competition.

A growing ecosystem: from ideas to applications

This initiative is part of the Cabildo de Tenerife's Open Data project, which promotes transparency, citizen participation and the generation of economic and social value through the reuse of public information.

The Cabildo has designed a strategy in two phases:

- The I Open Data Contest: Reuse Ideas (already held), which focused on identifying creative proposals.

- The II Contest: Development of APPs (current call), which gives continuity to the process and seeks to turn ideas into functional applications.

This progressive approach makes it possible to build an innovation ecosystem that accompanies participants from conceptualization to the complete development of digital solutions.

The objective is to promote the creation of digital products and services that generate social and economic impact, while identifying new opportunities for innovation and entrepreneurship in the field of open data.

Awards and financial endowment

This contest has a total endowment of 6,000 euros distributed in three prizes:

- First prize: 3,000 euros

- Second prize: 2,000 euros

- Third prize: 1,000 euros

Who can participate?

The call is open to:

- Natural persons: individual developers, designers, students or anyone interested in the reuse of open data.

- Legal entities: startups, technology companies, cooperatives, associations or other entities.

In either case, participants must submit an application developed with open data from the Cabildo de Tenerife. The same natural or legal person may submit as many applications as they wish, either individually or jointly.

What kind of applications can be submitted?

Proposals must be web or mobile applications that use at least one dataset from the datos.tenerife.es portal. Some ideas that can serve as inspiration are:

- Applications to optimize transport and mobility on the island.

- Tools for visualising tourism or environmental data.

- Real-time citizen information services.

- Solutions to improve accessibility and social participation.

- Economic or demographic data analysis platforms.

Evaluation criteria: what does the jury assess?

The jury will evaluate the proposals considering the following criteria:

- Use of open data: degree of exploitation and integration of the datasets available on the portal.

- Impact and usefulness: value that the application brings to society and its ability to solve real problems or improve existing services.

- Innovation and creativity: originality of the proposal and innovative nature of the proposed solution.

- Technical quality: code robustness, good programming practices, scalability and maintainability of the application.

- Design and usability: user experience (UX), attractive and intuitive visual design, and digital accessibility on Android and iOS devices.

How to participate: deadlines and form of submission

Applications can be submitted until March 10, 2026, three months after the publication of the call in the Official Gazette of the Province.

Regarding the required documentation, proposals must be submitted in digital format and include:

- Detailed technical description of the application.

- Report justifying the use of open data.

- Specification of the technological environments used.

- Video demonstration of how the application works.

- Complete source code.

- Technical summary sheet.

The organising institution recommends electronic submission through the Electronic Office of the Cabildo de Tenerife, although applications can also be submitted in person at the authorised official registers. The full rules of the contest and the official application form are available at the Cabildo's Electronic Office.

With this second call, the Cabildo de Tenerife consolidates its commitment to transparency, the reuse of public information and the creation of a digital innovation ecosystem. Initiatives like this demonstrate how open data can become a catalyst for entrepreneurship, citizen participation, and local economic development.

In the last six months, the open data ecosystem in Spain has seen intense activity, marked by regulatory and strategic advances, the implementation of new platforms and functionalities in data portals and the launch of innovative solutions based on public information.

In this article, we review some of those advances, so you can stay up to date. We also invite you to review the article on the news of the first half of 2025 so that you can have an overview of what has happened this year in the national data ecosystem.

Cross-cutting strategic, regulatory and policy developments

Data quality, interoperability and governance have been placed at the heart of both the national and European agenda, with initiatives seeking to foster a robust framework for harnessing the value of data as a strategic asset.

One of the main developments has been the launch by the European Commission of a new digital package aimed at consolidating a robust, secure and competitive European data ecosystem. This package includes a digital omnibus to simplify the application of the Artificial Intelligence (AI) Act. It is complemented by the new Data Union Strategy, which is structured around three pillars:

- Expand access to quality data to drive artificial intelligence and innovation.

- Simplify the existing regulatory framework to reduce barriers and bureaucracy.

- Protect European digital sovereignty from external dependencies.

Its implementation will take place gradually over the coming months, and only then will we be able to assess its effects on Spain and the rest of the EU.

Activity in Spain has also been - and will be - marked by the V Open Government Plan 2025-2029, approved last October. This plan has more than 200 initiatives and contributions from both civil society and administrations, many of them related to the opening and reuse of data. Spain's commitment to open data has also been evident in its adherence to the International Open Data Charter, a global initiative that promotes the openness and reuse of public data as tools to improve transparency, citizen participation, innovation and accountability.

Along with the promotion of data openness, work has also been done on the development of data sharing spaces. In this regard, the UNE 0087 standard was presented. It complements the existing UNE specifications on data and, for the first time in Spain, defines the key principles and requirements for creating and operating data spaces, improving their interoperability and governance.

More innovative data-driven solutions

Spanish bodies continue to harness the potential of data as a driver of solutions and policies that optimise the provision of services to citizens. Some examples are:

- The Ministry of Health and the citizen science initiative Mosquito Alert are using artificial intelligence and automated image analysis to improve real-time detection and tracking of tiger mosquitoes and other invasive species.

- The Valenciaport Foundation, together with other European organisations, has launched a free tool that allows the benefits of installing wind and photovoltaic energy systems in ports to be assessed.

- The Cabildo de La Palma has opted for smart agriculture with the new Smart Agro website, where farmers receive personalised irrigation recommendations based on climate and location. The Cabildo has also launched a viewer to monitor mobility on the island.

- The City Council of Segovia has implemented a digital twin that centralizes high-value applications and geographic data, allowing the city to be visualized and analyzed in an interactive three-dimensional environment. It improves municipal management and promotes transparency and citizen participation.

- Vila-real City Council has launched a digital application that integrates public transport, car parks and tourist spots in real time. The project seeks to optimize urban mobility and promote sustainability through smart technology.

- Sant Boi City Council has launched an interactive map made with open data that centralises information on urban transport, parking and sustainable options on a single platform, in order to improve urban mobility.

- The DataActive International Research Network has been inaugurated, an initiative funded by the Higher Sports Council that seeks to promote the design of active urban environments through the use of open data.

Public bodies are not the only ones reusing open data: universities are also working on digital innovation projects based on public information:

- Students from the Universitat de València have designed projects that use AI and open data to prevent natural disasters.

- Researchers from the University of Castilla-La Mancha have shown that it is feasible to reuse air quality prediction models in different areas of Madrid using transfer learning.

In addition to solutions, open data can also be used to shape other types of products, including sculptures. This is the case of "The skeleton of climate change", a figure presented by the National Museum of Natural Sciences, based on data on changes in global temperature from 1880 to 2024.

New portals and functionalities to extract value from data

The solutions and innovations mentioned above are possible thanks to multiple platforms for opening or sharing data, which continually incorporate new datasets and functionalities to extract value from them. Some of the developments we have seen in this regard in recent months are:

- The National Observatory of Technology and Society (ONTSI) has launched a new website. One of its new features is Ontsi Data, a tool for preparing reports with indicators from both its portal and third parties.

- The General Council of Notaries has launched a Housing Statistical Portal, an open tool with reliable and up-to-date data on the real estate market in Spain.

- The Spanish Agency for Food Safety and Nutrition (AESAN) has inaugurated on its website an open data space with microdata on the composition of food and beverages marketed in Spain.

- The Centre for Sociological Research (CIS) launched a renewed website, adapted to any device and with a more powerful search engine to facilitate access to its studies and data.

- The National Geographic Institute (IGN) has presented a new website for SIOSE, the Information System on Land Occupation in Spain, with a more modern, intuitive and dynamic design. In addition, it has made available to the public a new version of the Geographic Reference Information of Transport Networks (IGR-RT), segmented by provinces and modes of transport, and available in Shapefile and GeoPackage.

- The AKIS Advisors Platform, promoted by the Ministry of Agriculture, Fisheries and Food, has launched a new open data API that allows registered users to download and reuse content related to the agri-food sector in Spain.

- The Government of Catalonia launched a new corporate website that centralises key aspects of European funds, public procurement, transparency and open data in a single point. It has also launched a website where it collects information on the AI systems it uses.

- PortCastelló has published its 2024 Annual Report in open data format. All of the port's management, traffic, infrastructure and economic data are now accessible and reusable by any citizen.

- Researchers from the Universitat Oberta de Catalunya and the Institute of Photonic Sciences have created an open library with data on 140 biomolecules, a pioneering resource that promotes open science and the use of open data in biomedicine.

- CitriData, a federated space for data, models and services in the Andalusian citrus value chain, was also presented. Its goal is to transform the sector through the intelligent and collaborative use of data.

Other organisations are still working on new developments. For example, Aguas de Alicante will soon launch its new Open Data Portal, which will provide public access to key information on water management and promote the development of solutions based on Big Data and AI.

These months have also seen strategic advances linked to improving the quality and use of data, such as the Data Governance Model of the Generalitat Valenciana and the Roadmap for the Provincial Artificial Intelligence Strategy of the Provincial Council of Castellón.

Datos.gob.es also introduced a new platform designed to optimise both data publishing and data access. To learn more about this and other news from the Aporta Initiative in 2025, we invite you to read this post.

Encouraging the use of data through events, resources and citizen actions

Many public bodies chose the second half of 2025 to launch competitions aimed at promoting the reuse of the data they publish. These included the Junta de Castilla y León, the Madrid City Council, the Valencia City Council and the Provincial Council of Bizkaia. Spain has also taken part in international events such as the NASA Space Apps Challenge.

The events that helped spread the power of open data included the Open Government Partnership (OGP) Global Summit, the Iberian Conference on Spatial Data Infrastructures (JIIDE), the International Congress on Transparency and Open Government and ASEDIE's 17th International Conference on the Reuse of Public Sector Information, among many others.

Work has also been done on reports that highlight the impact of data on specific sectors, such as the DATAGRI Chair 2025 Report of the University of Cordoba, focused on the agri-food sector. Other published documents seek to help improve data management, such as "Fundamentals of Data Governance in the context of data spaces", led by DAMA Spain, in collaboration with Gaia-X Spain.

Citizen participation is also critical to the success of data-driven innovation. In this regard, we have seen activities aimed both at encouraging the publication of data and at improving existing datasets or promoting their reuse:

- The Barcelona Open Data Initiative requested citizen help to draw up a ranking of digital solutions based on open data to promote healthy ageing. They also organized a participatory activity to improve the iCuida app, aimed at domestic and care workers. This app allows you to search for public toilets, climate shelters and other points of interest for the day-to-day life of caregivers.

- The Spanish Space Agency launched a survey to find out the needs and uses of Earth Observation images and data within the framework of strategic projects such as the Atlantic Constellation.

In conclusion, the activities carried out in the second half of 2025 highlight the consolidation of the open data ecosystem in Spain as a driver of innovation, transparency and citizen participation. Regulatory and strategic advances, together with the creation of new platforms and solutions based on data, show a firm commitment on the part of institutions and society to take advantage of public information as a key resource for sustainable development, the improvement of services and the generation of knowledge.

As always, this article is just a small sample of the activities carried out. We invite you to share any others you know about in the comments.

The recent Meeting Forum between the Government of Spain and the Autonomous Communities has marked a turning point in how public administrations approach digital transformation. For the first time, the debate did not focus on making the case for data or for modernising processes, but on executing a coherent strategy that allows AI to be deployed to its full potential. Throughout, participants stressed the importance of a solid foundation of well-governed data that is useful to citizens.

The conclusions of the meeting, articulated in specialized working groups, outline a roadmap that confirms the maturity reached. Far from focusing solely on technological aspects, the forum has focused on where the challenge really lies: on the cultural, organisational and governance obstacles that will determine the success or failure of this transformation in the coming years. The debate took place in three working tables:

- Table 1: Unlocking data, from the standard to the practice
- Table 2: Orchestration of data, symphony or cacophony of roles?
- Table 3: Sectoral data spaces, the public boost to market value

In this post we tell you the main conclusions.

Data as a strategic asset: from theory to practice

The starting point of the first table of the forum was to understand that the main challenge to turn data into a strategic asset is no longer technological. Administrations today have robust, stable and capable solutions. The real obstacle is cultural: overcoming the vision of data as a burden and consolidating it as an engine of innovation and public service.

According to the forum participants, breaking this inertia requires decisive leadership capable of aligning the regulatory framework with technological capabilities. In this change, artificial intelligence is emerging as a catalyst, both because it reveals the hidden value of data and, above all, because it cannot work unless the traditional administrative silos that still fragment public information are overcome.

One of the most repeated messages at this table was the still widespread fear of sharing information between organisations. Reluctance to take responsibility creates barriers that limit the potential of public data. To reverse this situation, participants underlined the need to combine clear mandates with strong incentives. Issuing orders is not enough; organisations must be persuaded by showing real benefits. Attractive, mutually beneficial use cases are therefore a fundamental tool for fostering collaboration.

In relation to this, legal certainty also occupied a large part of the debate. Although it is often used as a reason to stop projects, participants stressed that it should not become an excuse for paralysis. The way forward is to clarify, simplify and harmonise the rules, evolving from an excessively legalistic approach to a model based on trust and the social value generated by the responsible use of data.

In addition, the key role of public-private collaboration was highlighted. Companies don't just bring technology, they can also accelerate innovation if they feel part of a stable and trusted ecosystem. To this end, administrations can offer guarantees of sovereignty and utility, and, in the event of a lack of reciprocity, resort to public procurement regulation to ensure participation.

Coordinating roles so that the orchestra does not go out of tune

On the other hand, the second table addressed one of the great challenges of the Public Administration: coordinating the multiple profiles necessary to manage, protect and exploit data in a context that is increasingly oriented towards AI. Nowadays, any administration can hire the same cloud platforms or analysis tools. Technology has been democratized. What really sets one organization apart from another is the richness, quality, and governance of its data.

For the organisation to function like a well-tuned orchestra, the roles must be synchronised. In this regard, the table underlined the need for senior strategic leadership from the CDO (Chief Data Officer), capable of setting business priorities and coordinating the team. This role's legitimacy must come from the highest levels of the organisation, because without that support it is difficult to drive the required organisational and cultural changes. The CDO is not merely a technical role: it also plays a key part in steering data governance towards usefulness and impact.

The roles traditionally associated with regulatory compliance must also evolve. The Data Protection Officer (DPO) must become a strategic partner, co-responsible for risk and an active participant in decision-making. Only in this way will it be able to accompany the deployment of innovative projects based on data.

One of the most relevant consensuses was the central role of data quality. Although it is often perceived as a barrier that slows down innovation, the reality is just the opposite: quality is a non-negotiable requirement for developing ethical, robust and valid algorithms. AI cannot be built on opaque, inconsistent, or untraceable data without putting public trust at risk.

In addition, the value provided by historically consolidated disciplines within the Administration, such as statistics, cartography or open data, was highlighted. Far from being an anchor that slows down modernization, these specialties are a driver: their integration from the origin of the processes ensures that AI systems are fed with verified, traceable and top-quality data.

In conclusion, the table proposed moving towards multidisciplinary teams where engineers, business experts and legal managers work together throughout the data life cycle, avoiding the traditional compartmentalizations that weigh down digital projects so much.

Sectoral Data Spaces: from public impetus to the real market

The third table focused its analysis on a key element for the European data economy: the Sectoral Data Spaces. The Spanish public administrations showed a firm commitment to these developments, betting on a role of promoters, facilitators and guarantors of trust.

The message was direct: these spaces must evolve towards sustainable business models. Public subsidies can serve as an initial impetus, but they cannot sustain projects that do not generate real value for the market. Demand, and not just the supply of funding, must validate the viability of these initiatives in the medium term.

One of the challenges identified is scaling projects that start at regional level up to national scale. Achieving significant impact requires a shared vision and close collaboration between Autonomous Communities (ACs), something the Forum has reinforced precisely through meetings of this kind. One of the key objectives of the Artificial Intelligence Strategy 2024 and the recent Data Union Strategy is the active involvement of SMEs. This requires lowering technical barriers and communicating the value proposition clearly, in business-oriented rather than technical language.

Finally, an optimistic message was delivered about talent. Although there is concern about the ability of the public sector to attract and retain specialized profiles in competition with the private sector, the table rejected the idea of resignation. The Administration is not condemned to a secondary role if it is able to strengthen and enhance its internal talent. Digital transformation requires leadership from the public sphere, and this leadership is possible with the right structures, opportunities for growth, and a shared vision.

Conclusion: a qualitative leap towards maturity

The 2025 Autonomous Communities Forum has served to consolidate a collective and mature vision of the role of data in the Administration. Overcoming silos, coordinating roles, simplifying standards, guaranteeing data quality and generating sustainable business models are essential steps for AI and the data economy to generate real value for citizens.

Spain is moving towards a model in which administrations shift their focus from tools to utility: a model in which collaboration (between agencies, with the private sector and between territories) is the key to unlocking the true potential of public data.

The reuse of open data makes it possible to generate innovative solutions that improve people's lives, boost citizen participation and strengthen public transparency. Proof of this are the competitions promoted this year by the Junta de Castilla y León and the Madrid City Council.

In the ninth edition of the Castilla y León competition and the first edition of the Madrid competition, both administrations have awarded prizes to the selected projects, recognising students, startups, professionals and researchers who have turned public data into useful tools and knowledge. In this post, we review the award-winning projects in each competition and the context behind them.

Castilla y León: ninth edition of consolidated awards in a more open administration