The Spanish Data Protection Agency (AEPD), through its own Innovation and Technology section, carries out an essential didactic task by providing a documentary corpus that translates the legal obligations of the General Data Protection Regulation (GDPR) into specific technological realities. Its value lies in its ability to offer legal certainty and technical guidelines in areas where regulations are still finding their practical fit, such as artificial intelligence or biometrics.

These are reference guides, articles and other teaching materials aimed especially at SMEs and entrepreneurs. In this post we present some of the most recent, ordered by sector and subject.

The new trends in artificial intelligence and its secure deployment

The evolution of artificial intelligence towards increasingly autonomous systems poses new challenges in terms of data protection. For this reason, the Spanish Data Protection Agency has developed various guides and documents aimed at facilitating a secure and responsible deployment of this technology. In general, AI is one of the areas in which the AEPD publishes most actively, owing to its cross-cutting impact. The Agency's resources range from internal management to state-of-the-art technologies.

- Guide to agentic artificial intelligence from the perspective of data protection: so-called agentic AI is AI capable of making decisions and acting with a certain degree of independence. Unlike purely reactive models, agentic AI can carry out multiple tasks autonomously and make intermediate decisions during complex processes. This guide discusses the risks of loss of human control and sets out criteria to ensure that decision traceability is not lost in automation.

- General policy for the use of generative AI in AEPD administrative processes: generative artificial intelligence (GenAI, or IAG by its Spanish acronym) is a type of AI capable of producing new content, such as text, images, audio or code, from learned patterns. This document establishes an internal policy for its responsible use in administrative processes.

- Implementation annex of the AEPD's general GenAI policy: this annex to the above document includes the permitted use cases, the type of systems recommended (external, internal or ad hoc), the level of risk associated with each application and the specific obligations of review, human control, security and data protection.

- Basic summary of obligations and recommendations for the management of generative AI: this is a synthesized outline on aspects of governance, design and development of use cases, processing of personal data and sensitive information, transparency and explainability, and responsible use of tools, among others.

- Federated learning report: federated learning is an AI approach that allows models to be trained collaboratively without centralizing data, which improves privacy and helps align with the GDPR. This report explains what it consists of, where personal data may be processed and what benefits and challenges it brings for data protection.
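
As a rough illustration of the idea behind federated learning, the following sketch simulates federated averaging in plain Python: each client fits a one-parameter linear model on its own data and shares only its updated weight, never the raw records. The client datasets, function names and learning rate are hypothetical and chosen for illustration; real deployments add secure aggregation and other safeguards that this sketch omits.

```python
# Minimal federated averaging sketch (illustrative assumptions throughout).
# Model: y ≈ w * x, trained by gradient descent on mean squared error.

def local_update(w, data, lr=0.01, epochs=5):
    """Run a few gradient steps on one client's private data; return the new weight."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_round(w_global, clients):
    """One round: every client trains locally, the server averages the weights."""
    total = sum(len(data) for data in clients)
    updates = [(local_update(w_global, data), len(data)) for data in clients]
    # Weighted average by number of local samples; raw data never leaves a client.
    return sum(w * n for w, n in updates) / total


# Hypothetical private datasets held by three clients (all follow y = 3x).
clients = [
    [(1.0, 3.0), (2.0, 6.0)],
    [(3.0, 9.0)],
    [(0.5, 1.5), (1.5, 4.5), (2.5, 7.5)],
]

w = 0.0
for _ in range(50):
    w = federated_round(w, clients)

print(round(w, 2))
```

After enough rounds the shared weight approaches 3, the slope underlying all three datasets, even though the server only ever sees weights. Note that model updates can themselves leak information about the training data, which is one reason the data protection analysis does not end at "the data stays local".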

To complement this information, users can also visit the AEPD's blog, which serves as a trend observatory where the visible and invisible risks of consumer technologies are analyzed. Some of the topics covered are:

- Image and voice processing: Analyses have been published on AI voice transcription and the use of services that convert photos to other formats (such as animations). These articles warn about the processing of biometric data and the ownership of data in the cloud.

- Algorithmic literacy: resources such as "Addressing AI Misconceptions" seek to raise the level of critical judgment of users and managers in the face of the opacity of algorithms.

- Balance of rights: notable pieces include the analysis of the protection of minors in the digital environment and of the design of public contracts that integrate privacy by design.

European Digital Identity Wallet

The evolution towards an interconnected Europe requires robust identity standards and security measures accessible to all levels of business.

Building a secure, interoperable and trustworthy digital identity is one of the pillars of digital transformation in Europe. The future European Digital Identity Wallet is a project that aims to allow citizens to identify themselves electronically and share personal attributes in a controlled way across multiple services, both public and private.

To analyse its implications from the point of view of privacy, the Spanish Data Protection Agency has published a series of four monographic articles throughout 2025. In them, the Agency breaks down the relationship between the new digital identity wallet and the GDPR.

These contents address key issues such as:

- Data minimisation and the principle of proportionality in information exchange: explains how the eIDAS2 Regulation drives the European Digital Identity Wallet. This regulation establishes a framework for secure, interoperable and user-centric electronic identification, aligned with the GDPR to ensure the control and protection of personal data across the EU.

- The risks associated with interoperability between systems: delves into how to prevent the use of the European Digital Identity Wallet from tracking citizens when they present credentials in different public or private services, highlighting the need for advanced cryptographic solutions.

- The need to ensure user control over their credentials: examines identification threats in digital identity wallets under eIDAS2, highlighting that, without strong safeguards such as pseudonymization and unlinkability, even selective disclosure of data can allow for the improper identification and profiling of users.

- The security measures needed to prevent misuse or data breaches: addresses the threats of inaccuracy in digital identity wallets under eIDAS2, highlighting how outdated data or linkable cryptographic mechanisms can lead to erroneous decisions and compromise privacy. To solve this, it stresses the need for solutions that guarantee both reliability and plausible deniability (that is, that there is no technical evidence to prove that a person has carried out a specific action with their wallet or digital credential).
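
To make selective disclosure more concrete, the sketch below shows one common building block, salted hash commitments, using only Python's standard library. It is not the wallet's actual protocol: the attribute names, the helper functions and the use of an HMAC as a stand-in for the issuer's digital signature are all assumptions made for illustration. It also hints at why linkable mechanisms are a concern: the authenticated digest list is identical in every presentation, so on its own it could serve as a tracking identifier.

```python
import hashlib
import hmac
import json
import secrets

# Hypothetical issuer secret. A real scheme would use an asymmetric signature,
# so that verifiers only need the issuer's public key.
ISSUER_KEY = secrets.token_bytes(32)


def digest(salt: str, name: str, value: str) -> str:
    """Salted hash commitment to one attribute."""
    return hashlib.sha256(json.dumps([salt, name, value]).encode()).hexdigest()


def issue(attributes: dict) -> dict:
    """Issuer: commit to every attribute and authenticate the sorted digest list."""
    salts = {name: secrets.token_hex(16) for name in attributes}
    digests = sorted(digest(salts[n], n, v) for n, v in attributes.items())
    tag = hmac.new(ISSUER_KEY, json.dumps(digests).encode(), hashlib.sha256).hexdigest()
    return {"attributes": attributes, "salts": salts, "digests": digests, "tag": tag}


def present(credential: dict, reveal: list) -> dict:
    """Holder: disclose only the chosen attributes together with their salts."""
    disclosed = {n: (credential["salts"][n], credential["attributes"][n]) for n in reveal}
    return {"digests": credential["digests"], "tag": credential["tag"], "disclosed": disclosed}


def verify(presentation: dict) -> bool:
    """Verifier: check the issuer tag, then that each disclosed value matches a digest."""
    payload = json.dumps(presentation["digests"]).encode()
    expected = hmac.new(ISSUER_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, presentation["tag"]):
        return False
    return all(
        digest(salt, name, value) in presentation["digests"]
        for name, (salt, value) in presentation["disclosed"].items()
    )


credential = issue({"name": "Ana", "birth_year": "1990", "over_18": "true"})
presentation = present(credential, ["over_18"])
print(verify(presentation), list(presentation["disclosed"]))
```

Here the verifier learns only that the holder is over 18, while the name and birth year stay hidden behind their salted digests.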

This series provides a progressive overview that helps to understand both the potential of European digital identity and the challenges posed by its implementation from a data protection perspective.

Encryption to protect personal data in SMEs

For many small and medium-sized businesses, ensuring the security of personal data remains a challenge, especially due to a lack of technical resources or specialized knowledge. In this context, encryption is presented as a fundamental tool to protect the confidentiality and integrity of information.

With the aim of bringing this concept closer to a non-expert audience, the Spanish Data Protection Agency has published the Encryption Guide for the self-employed and SMEs, accompanied by an explanatory infographic.

These resources explain in a clear and practical way:

- What encryption is and why it matters for data protection.

- What types of encryption exist and in which cases they apply.

- How to implement encryption measures in common situations, such as sending emails or storing information.

- Which tools can be used without advanced knowledge.

Scientific research and the European legal framework

For profiles that require a more in-depth and academic analysis, the Agency has promoted the publication of scientific articles in various international media, which connect technology with ethics and law. Some examples are:

- Addictive patterns: analysis of how interface design affects human behavior.

- Neurotechnology: study on the risks of brain-computer interfaces.

- Algorithmic governance: A comprehensive analysis that aligns the GDPR with the European Artificial Intelligence Regulation (AI Act), the Digital Services Act (DSA), and the Cyber Resilience Act.

The didactic value of these materials lies in their ability to offer a 360-degree view of the data. From cutting-edge academic research to encryption infographics for a small business, the AEPD provides the building blocks for innovation that doesn't sacrifice privacy.

Together, these materials shared by the Spanish Data Protection Agency help to incorporate effective security measures and comply with the requirements of the General Data Protection Regulation in a proportionate and accessible way. All of them, and some others, are compiled and ordered by theme on its website.

On May 8, 2026, a new edition of the National Open Data Meeting (ENDA) will take place, this time in Pamplona and organized by the Government of Navarre. It is a key event for those working in public innovation, data reuse and digital entrepreneurship to exchange knowledge, experiences and good practices.

Under the slogan "Learn and undertake", this year's edition focuses on the role of data in education and in the promotion of new business projects, highlighting the importance of data literacy and the potential of open data as a driver of innovation, learning and the creation of job opportunities.

An open approach focused on practical experiences

This edition's agenda has been designed to address the main challenges and opportunities posed by the use of open data in this specific area. Throughout the day, the sessions will explore issues such as the reuse of data in education, the possibilities that data offers in the workplace and the role of public administrations as drivers of this data ecosystem.

The session will begin at 9:00 a.m. with the inauguration by Javier Remírez Apesteguía, First Vice-President, Minister of the Presidency and Equality and spokesman for the Government of Navarre. It will be followed by the keynote speech "AI and open data: new ways of exploiting, understanding and creating value" by Mikel Galar Idoiate, professor in the area of Computer Science and Artificial Intelligence at the Public University of Navarra.

The event will then continue with several round tables that will explore different topics in depth from a practical perspective, with real examples and experiences shared by professionals who work with data on a daily basis.

- Table 1: Relationship between education, entrepreneurship and employment

- Table 2: The role of Public Administrations in the reuse of data

- Table 3: Entrepreneurship and open data: vision and future

- Table 4: The power of data in education

- Table 5: Evolution of Open Data Policies

Datos.gob.es will participate in the last of these round tables, contributing its experience as a national reference platform for opening and reusing public sector information. For its part, the General Directorate of Data will share its vision at table 2. In both cases, they will share trends and the lines of work being developed to promote a data culture throughout the country.

A new challenge to face

Since its first edition, ENDA has been a meeting place for those who work with open data from very diverse perspectives. Each year, the event has been consolidating a broader and more mature community, capable of generating projects, methodologies and alliances that transcend the meeting itself. In this sense, within the framework of each meeting, a central space is dedicated to the identification of a challenge that must be addressed in order to consolidate a more solid, useful and sustainable data ecosystem. These challenges, defined collaboratively, allow public policies and reuse initiatives to be oriented towards a more mature and impact-oriented model. The challenges addressed in previous editions have been:

- CHALLENGE 1. Generate data exchanges and facilitate their openness, where participants reached a series of conclusions to promote inter-administrative collaboration.

- CHALLENGE 2. Increase capacities for open data, where work was done on a competency framework so that public employees acquire the knowledge and skills necessary to promote open data.

- CHALLENGE 3. How to measure the impact of open data, where a methodological proposal was made for the development of a systematic mapping of initiatives that try to measure the impact of open data.

- CHALLENGE 4. Prioritization in the opening of public data, where the datasets to be published by public administrations (local, regional or national) were identified. To this end, a methodological proposal and a tool were developed to determine the level of organizational maturity in data openness policies.

We will have to wait until the fifth ENDA is held to learn what this year's challenge is.

Registration now open

The conference is open to both those who work with data in their day-to-day work and those who want to discover new opportunities in the educational, professional or entrepreneurial field. Whatever your case, in order to attend it is necessary to register through the event's website. The form will remain available until April 30, 2026.

After four editions held in different territories, ENDA continues to grow as an itinerant meeting that promotes collaboration between administrations, universities, companies and citizen organizations, consolidating a diverse community committed to open data. An opportunity to grow and continue learning.

If you want more information, the official website offers the contents, materials and lessons learned from the four previous editions, which have helped to strengthen the national open data ecosystem.

This library aims to highlight and preserve the historical memory of Villel de Mesa by promoting research, preservation, and the dissemination of all knowledge related to the town, while providing open access to everyone.

To achieve this, it pursues the following objectives:

📚 To catalogue, organize, and digitize documents and cultural resources related to Villel de Mesa.

🖥️ To facilitate access to documentary resources through a virtual platform.

🔎 To encourage research and interest in the history and culture of Villel de Mesa.

🤝 To establish collaborations with individuals and cultural institutions in order to expand the available resources.

In addition, it includes a substantial documentary repository that links to resources from other libraries, archives, and institutions. It also holds a modest digitized collection of its own documents, as well as a set of monographs that deal extensively with the history, heritage, natural environment, and social context of Villel de Mesa.

Data visualization is not a recent discipline. For centuries, people have used graphs, maps, and diagrams to represent complex information. Classic examples such as the statistical maps of the nineteenth century or the graphs used in the press show that the need to "see" the data in order to understand it has always existed.

For a long time, creating visualizations required specialized knowledge and access to professional tools, which limited their production to very specific profiles. However, the digital and technological revolution has profoundly transformed this landscape. Today, anyone with access to a computer and data can create visualizations. Tools have been democratized, many of them are free or open source, and visualization work has extended beyond design to integrate into areas such as statistics, data science, academic research, public administration, or education.

Today, data visualization is a cross-cutting skill that allows citizens to explore public information, institutions to better communicate their policies, and reusers to generate new services and knowledge from open data. In this post we present some of the most accessible and widely used options in data visualization.

A broad and diverse ecosystem of tools

The ecosystem of data visualization tools is broad and diverse, both in functionalities and levels of complexity. There are options designed for a first exploration of the data, others aimed at in-depth analysis and some designed to create interactive visualizations or complex digital narratives.

This variety allows you to tailor the visualization to different contexts and goals, from gaining a first understanding of a dataset to publishing interactive charts, dashboards, or maps on the web.

The Data Visualization Society's annual survey reflects this diversity and shows how the use of certain tools evolves over time, consolidating some widely known options and giving way to new solutions that respond to emerging needs. These are some of the tools mentioned in the survey, ordered according to usage profiles.

The following criteria have been taken into account for the preparation of this list:

- Degree of use and maturity of the tool.

- Open access: free of charge or with open versions.

- Usefulness for projects related to public data.

- Priority given to open tools or tools with free versions.

Simple tools to get started

These tools are characterized by visual interfaces, a low learning curve, and the ability to create basic charts quickly. They are especially useful for getting started exploring open datasets or for outreach activities.

- Excel: one of the most widespread and well-known tools. It makes it easy to create basic charts and carry out an initial exploration of the data. While not specifically designed for advanced visualization, it is still a common gateway to working with data and its graphical representation.

- Google Sheets: works as a free and collaborative alternative to Excel. Its main advantage is the ability to work in a shared way and publish simple graphics online, which facilitates the dissemination of basic visualizations.

- Datawrapper: widely used in public communication and data journalism. It allows you to create clear graphs, maps, and interactive tables without the need for technical knowledge. It is particularly suitable for explaining data in a way that is understandable to a wide audience.

- RAWGraphs: free software tool aimed at visual exploration. It allows you to experiment with less common types of charts and discover new ways to represent data. It is especially useful in exploratory phases.

- Canva: while its approach is geared more towards communication than analysis, it can be useful for creating simple visual pieces that integrate basic charts with design elements. It is suitable for visual communication of results, not so much for data analysis.

Data exploration and analysis tools

This group of tools is geared towards profiles that want to go beyond basic charts and perform more structured analysis. Many of them are open and widely consolidated in the field of data analysis.

- R: free programming language widely used in statistics and data analysis. It has a wide ecosystem of packages that allow you to work with public data in a reproducible and transparent way.

- ggplot2: visualization library for the R language. It is one of the most powerful tools for creating rigorous and well-structured graphs, both for analysis and for communicating results.

- Python (Matplotlib and Plotly): Python is one of the most widely used languages in data analysis. Matplotlib allows you to create customizable static charts, while Plotly makes it easy to create interactive visualizations. Together they offer a good balance between power and flexibility.

- Apache Superset: Open source platform for data analysis and dashboard creation. It has a more institutional and scalable approach, making it suitable for organizations that work with large volumes of public data.

This block is especially relevant for open data reusers and intermediate technical profiles who seek to combine analysis and visualization in a systematic way.

Tools for interactive and web visualization

These tools allow you to create advanced visualizations for publication in web environments. Although they require greater technical knowledge, they offer great flexibility and expressive possibilities.

- D3.js: it is one of the benchmarks in web visualization. It is based on open standards and allows full control over the visual representation of data. Its flexibility is very high, although so is its complexity.

In this practical exercise you can see how to use this library

- Vega and Vega-Lite: declarative languages for visualization that simplify the use of D3. They allow you to define graphics in a structured and reproducible way, offering a good balance between power and simplicity.

- Observable: interactive environment closely linked to D3 and Vega. It's especially useful for creating educational examples, prototypes, and exploratory visualizations that combine code, text, and graphics.

- Three.js and WebGL: technologies aimed at advanced and three-dimensional visualizations. Their use is more experimental and is usually linked to outreach projects or visual research.

In this section, it should be noted that, although the technical barriers are greater, these tools allow for the creation of rich interactive experiences that can be very effective in communicating complex public data.
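
To illustrate what a declarative definition looks like in practice, here is a minimal sketch that builds a Vega-Lite bar chart specification as a Python dictionary and serializes it to JSON. The dataset values and field names are invented for the example; the resulting JSON could be pasted into the online Vega editor or embedded in a web page.

```python
import json

# Hypothetical sample data: number of datasets published per category.
rows = [
    {"category": "Environment", "datasets": 120},
    {"category": "Transport", "datasets": 85},
    {"category": "Health", "datasets": 60},
]

# Declarative spec: we describe WHAT to draw (one bar per category),
# not HOW to draw it. Vega-Lite derives scales and axes from the encoding.
spec = {
    "$schema": "https://vega.github.io/schema/vega-lite/v5.json",
    "description": "Datasets per category (illustrative data)",
    "data": {"values": rows},
    "mark": "bar",
    "encoding": {
        "x": {"field": "category", "type": "nominal"},
        "y": {"field": "datasets", "type": "quantitative"},
    },
}

print(json.dumps(spec, indent=2))
```

Because the whole chart is plain data, the specification can be versioned, shared and reproduced like any other JSON file.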

Geospatial data and mapping tools

Geographic visualization is especially relevant in the field of open data, since a large part of public information has a territorial dimension. In this field, free software has a prominent weight and is closely aligned with use in public administrations.

- QGIS: a benchmark in free software for geographic information systems (GIS). It is widely used in public administrations and allows spatial data to be analysed and visualised in great detail.

- ArcGIS: very widespread in the institutional field. Although it is not free software, its use is well established and is part of the regular ecosystem of many public organizations.

- Mapbox: platform aimed at creating interactive web maps. It is widely used in online visualization projects and allows geographic data to be integrated into web applications.

- Leaflet: A popular open-source library for creating interactive maps on the web. It is lightweight, flexible, and widely used in geographic open data reuse projects.

This toolkit facilitates the territorial representation of data and its reuse in local, regional or national contexts.

In conclusion, the choice of a visualization tool depends largely on the goal being pursued. Learning and experimenting is not the same as analyzing data in depth or communicating results to a wide audience. Therefore, it is useful to reflect beforehand on the type of data available, the audience to which the visualization is aimed and the message you want to convey.

Betting on accessible and open tools allows more people to explore, interpret and communicate public data. In this sense, visualising data is also a way of bringing information closer to citizens and encouraging its reuse.

Data visualizations act as bridges between complex information and human understanding. A well-designed graph can communicate in seconds data that would take minutes or even hours to decipher in tabular format. What's more, interactive visualizations allow each user to explore data from their own perspective, filtering, comparing, and uncovering personalized insights.

To achieve these ends there are multiple tools, some of which we have covered on previous occasions. Today we look at a new example: the free library D3.js. In this post, we explain how it allows you to generate useful and attractive data visualizations together with the open source tool Observable.

What is D3?

D3.js (Data-Driven Documents) is a JavaScript library that allows you to create custom data visualizations in web browsers. Unlike tools that offer predefined charts, D3.js provides the fundamental elements to build virtually any type of visualization imaginable.

The library is completely free and open source, published under a permissive license (BSD in earlier releases, ISC in recent ones), which means that any person or organization can use, modify, and distribute it with minimal conditions. This feature has contributed to its widespread adoption: international media such as The New York Times, The Guardian and the Financial Times, as well as Spanish media such as El País or ABC, use D3.js to create journalistic visualizations that help tell stories with data.

D3.js works by manipulating the browser's DOM (Document Object Model). In practical terms, this means that it takes information (e.g., a CSV file with population data) and transforms it into visual elements (circles, bars, lines) that the browser can display. The power of D3.js lies in its flexibility: it doesn't impose a specific way to visualize data, but rather provides the tools to create exactly what is needed.
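
The data-to-elements mapping described above can be sketched outside the browser as well. The following Python example is not D3.js and does not use its API; it only mimics the underlying idea with the standard library: read CSV rows, map values to pixel lengths with a linear scale, and emit one SVG rectangle per row. The CSV content, municipality names and function names are made up for illustration.

```python
import csv
import io

# Hypothetical CSV with population data (illustrative values).
CSV_TEXT = """municipality,population
Alfa,1200
Beta,3000
Gamma,600
"""


def linear_scale(domain_max: float, range_max: float):
    """Return a function mapping [0, domain_max] to [0, range_max]."""
    return lambda value: value / domain_max * range_max


def bars_to_svg(csv_text: str, width: int = 300, bar_height: int = 20) -> str:
    """Turn each CSV row into one horizontal SVG bar."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    values = [int(row["population"]) for row in rows]
    scale = linear_scale(max(values), width)
    rects = [
        f'<rect x="0" y="{i * bar_height}" width="{scale(v):.0f}" '
        f'height="{bar_height - 2}"><title>{row["municipality"]}</title></rect>'
        for i, (row, v) in enumerate(zip(rows, values))
    ]
    height = bar_height * len(rows)
    return (
        f'<svg xmlns="http://www.w3.org/2000/svg" width="{width}" height="{height}">'
        + "".join(rects)
        + "</svg>"
    )


svg = bars_to_svg(CSV_TEXT)
print(svg)
```

In D3.js the same three steps (load data, define a scale, bind data to elements) happen directly against the live page, which is what makes the results interactive.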

What is Observable?

Observable is a web-based platform for creating and sharing code, specially designed to work with data and visualizations. Although it offers a freemium service with some free and some paid features, it maintains an open-source philosophy that is particularly relevant for working with public data.

The distinguishing feature of Observable is its "notebook" format. Similar to tools like Jupyter Notebooks in Python, an Observable notebook combines code, visualizations, and explanatory text into a single interactive document. Each cell in the notebook can contain JavaScript code that runs immediately, displaying results instantly. This creates an ideal experimentation environment for exploring data.

You can see it in practice in this data science exercise that we have published in datos.gob.es

Observable integrates naturally with D3.js and other visualization libraries. In fact, the creator of D3.js is also one of the founders of Observable, so both tools work together smoothly. Observable notebooks can be shared publicly, allowing other users to view both the code and the results, fork them to create their own versions, or integrate them into their own projects.

Advantages of these tools for working with all types of data

Both D3.js and Observable have features that can be useful for working with data, including open data:

- Transparency and reproducibility: by publishing a visualization created with these tools, it is possible to share both the final result and the entire data transformation process. Anyone can inspect the code, verify the calculations, and reproduce the results. This transparency is essential when working with public information, where trust and verifiability are key.

- No licensing costs: Both D3.js and the free version of Observable allow you to create and publish visualizations without the need to purchase software licenses. This removes economic barriers for organizations, journalists, researchers, or citizens who want to work with open data.

- Standard web formats: The created visualizations work directly in web browsers without the need for plugins or additional software. This makes it easy to integrate them into institutional websites, newspaper articles or digital reports, making them accessible from any device.

- Community and resources: There is a large community of users who share examples, tutorials, and solutions to common problems. Observable, in particular, houses thousands of public notebooks that serve as examples and reusable templates.

- Technical flexibility: Unlike tools with predefined options, these libraries allow you to create completely customized visualizations that are exactly tailored to the specific needs of each dataset or story you want to tell.

It is important to note that these tools require programming knowledge, specifically JavaScript. For people with no programming experience, there is a learning curve that can be steep initially. Other tools such as spreadsheets or visualization software with graphical interfaces may be more appropriate for users looking for quick results without writing code.

For those looking for open source alternatives with a gentle learning curve, there are visual interface-based tools that don't require programming. For example, RAWGraphs allows you to create complex visualizations by simply dragging and dropping files, while Datawrapper is an excellent and very intuitive option for generating ready-to-publish charts and maps.

In addition, there are numerous open source and commercial alternatives for visualizing data: Python with libraries such as Matplotlib or Plotly, R with ggplot2, Tableau Public, Power BI, among many others. In the didactic section of visualization and data science exercises of datos.gob.es you can find practical examples of how to use some of them.

In summary, the choice of tools should always be based on an assessment of specific requirements, available resources, and project objectives. The important thing is that open data is transformed into accessible knowledge, and there are multiple ways to achieve this goal. D3.js and Observable offer one of these paths, particularly suited to those looking to combine technical flexibility with principles of openness and transparency. If you know of any other tool or would like us to delve into another topic, please send it to us through our social networks or in the contact form.

Christmas returns every year as an opportunity to stop, breathe and reconnect with what inspires us. From datos.gob.es, we take advantage of these dates to share our traditional letter of recommendations: a selection of books that invite us to better understand the digital world, reflect on the impact of artificial intelligence, improve our professional skills and look at the ethical dilemmas that mark our time.

In this post, we compile works on technological ethics, digital society, AI engineering, quantum computing, machine learning, and data governance. A varied list, with titles in both Spanish and English, which combines academic rigour, dissemination and critical vision. If you are looking for a book that will make you grow, surprise a loved one or simply feed your curiosity, here you will find options for all tastes.

The ethics of artificial intelligence by Sara Degli-Esposti

- What is it about? This specialist in privacy and data analysis offers a clear and rigorous vision of the risks and opportunities of AI applied to areas such as health, safety, public policy or digital communication. Her proposal stands out for integrating legal, socio-technical and fundamental rights perspectives.

- Who is it for? Readers looking for a solid introduction to the international debate on AI ethics and regulation.

Available for free here: https://ciec.edu.co/wp-content/uploads/2024/12/LA-ETICA-DE-LA-INTELIGENCIA-ARTIFICIAL.pdf

Understand Technology by Nate Gentile

- What is it about? In this book, the popularizer and specialist in hardware and digital culture Nate Gentile debunks myths, explains technical concepts in an accessible way and offers a critical look at how the technology we use every day really works. From processor performance and system architectures to the business models of large platforms, the book combines technical rigor with a relatable style, accompanied by practical examples and anecdotes from the technology community.

- Who is it for? Curious readers who want to better understand the inner workings of devices, professionals who are looking for an informative but precise approach, and anyone who wants to lose their fear of technology and understand it more consciously.

Clicks Against Humanity by James Williams

- What is it about? This journalistic work analyzes how digital platforms shape our attention, our beliefs and our decisions. Through true stories, the book shows the impact of algorithmic design on politics, public opinion, and daily life. An incisive and timely read, especially at a time of growing concern about misinformation and the attention economy.

- Who is it for? Those who want to understand how platforms work and what effects they can have on society.

The Spring of Artificial Intelligence by Carmen Torrijos and José Carlos Sánchez

- What is it about? Based on everyday examples, the authors explain what language models really are, how technologies such as ChatGPT or computer vision work, what transformations they are generating in sectors such as education, creativity or communication, and what their current limits are. In addition, they provide a critical reflection on the ethical, social and labour challenges associated with this new wave of innovation.

- Who is it for? Readers who want to understand the current phenomenon of AI without the need for technical knowledge, teachers and professionals looking to contextualize its impact on their sector, and anyone interested in understanding what is behind the generative AI boom and how it can influence our immediate future.

Design of Machine Learning Systems by Chip Huyen

- What is it about? It's a modern, practical primer that explains, from start to finish, how to design, deploy, and maintain robust machine learning systems. Chip Huyen combines technical expertise with informative clarity, covering everything from data engineering to model monitoring and MLOps. A highly valued work in the industry.

- Who is it for? Technical professionals, teams implementing AI-based products, and students who want to understand how real systems are built in production.

Ethics in Artificial Intelligence and Information Technologies by Gabriela Arriagada-Bruneau, Claudia López and Marcelo Mendoza

- What is it about? This book addresses one of the great challenges of our time: how to develop AI systems that respect people and society. The authors analyze principles of equity, transparency, accountability, and governance, combining philosophical foundations with practical examples. Its Ibero-American approach is particularly valuable in understanding how these challenges affect this region.

- Who is it for? Students, public officials, technology professionals and anyone who wants to understand the ethical dilemmas behind the algorithms we use every day.

Generative Deep Learning: Teaching Machines to Paint, Write, Compose, and Play by David Foster

- What is it about? This work, which is already a classic in its field, introduces the most important generative architectures (GANs, VAEs, diffusion models) with examples and reproducible code. The second edition updates concepts and provides new practical cases related to text, sound and image.

- Who is it for? Those who want to learn how to create generative models from scratch or understand the technologies behind automatic content creation.

Quantum Supremacy by Michio Kaku

- What is it about? Quantum computing has ceased to be science fiction and has become a global strategic field. Michio Kaku, a renowned popularizer, explains clearly what a qubit is, how quantum computers work, what limits they present and what transformations they will promote in cryptography, chemical simulation or artificial intelligence.

- Who is it for? Ideal for science lovers, the technologically curious and readers who want to peek into the future of computing without the need for advanced mathematical knowledge.

Data Governance based on UNE specifications by Ismael Caballero, Fernando Gualo and Mario G. Piattini

- What is it about? This book is a complete guide to understanding and applying UNE standards relating to data governance in organisations. It clearly explains how to structure roles, processes, policies, and controls to manage data as a strategic asset. It includes case studies, maturity models, implementation examples and guidance to align governance with international standards and with the real needs of the public and private sectors. A particularly useful resource at a time when data quality, traceability and interoperability are critical to driving digital transformation.

- Who is it for? Data Managers (CDOs), analytics and architecture teams, government professionals, consultants, and any organization that wants to implement a formal data governance model based on recognized standards.

While we'd love to include many more titles, this selection is already a great starting point for exploring the big themes that will shape the digital transformation of the coming years. If you feel like giving knowledge as a gift this holiday season, these works will be a sure hit: they combine current affairs, critical reflection, practical applications and a broad look at the technological future.

Remember that, although here we mention titles that you can find in online bookstores, we always encourage you to check your neighborhood bookstore first. It's a great way to support small businesses and help keep the cultural ecosystem alive and vibrant.

Would you recommend another title?

At datos.gob.es we love to discover new readings. If you know of a book on data, AI, technology or digital society that deserves to be in future editions, you can share it in the comments or write to us through this form. Happy holidays and happy reading!

Data visualization is a fundamental practice to democratize access to public information. However, creating effective graphics goes far beyond choosing attractive colors or using the latest technological tools. As Alberto Cairo, an expert in data visualization and professor at the academy of the European Open Data Portal (data.europa.eu), points out, "every design decision must be deliberate: inevitably subjective, but never arbitrary." Through a series of three webinars that you can watch again here, the expert offered innovative tips to be at the forefront of data visualization.

When working with data visualization, especially in the context of public information, it is crucial to debunk some myths ingrained in our professional culture. Phrases like "data speaks for itself," "a picture is worth a thousand words," or "show, don't tell" sound good, but they hide an uncomfortable truth: charts do not communicate automatically.

The reality is more complex. A design professional may want to communicate something specific, but readers may interpret something completely different. How can you bridge the gap between intent and perception in data visualization? In this post, we offer some keys to the training series.

A structured framework for designing with purpose

Rather than following rigid "rules" or applying predefined templates, the course proposes a framework of thinking based on five interrelated components:

- Content: the nature, origin, and limitations of the data

- People: the audience we are targeting

- Intention: the purposes we define

- Constraints: the limitations we face

- Results: how the graph is received

This holistic approach forces us to constantly ask ourselves: what do our readers really need to know? For example, when communicating information about hurricane or health emergency risks, is it more important to show exact trajectories or communicate potential impacts? The correct answer depends on the context and, above all, on the information needs of citizens.

The danger of over-aggregation

Even without losing sight of the purpose, it is important not to fall into aggregating the data too much or presenting only averages. Imagine, for example, a dataset on citizen security at the national level: an average may hide the fact that most localities are very safe, while a few with extremely high rates distort the national indicator.
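The effect is easy to reproduce with a handful of numbers. The following is a minimal sketch in Python; the incident rates are invented purely for illustration and do not come from any real dataset.

```python
from statistics import mean, median

# Hypothetical incident rates per 1,000 inhabitants for ten localities.
# Eight localities are very safe; two have extremely high rates.
rates = [2, 3, 2, 4, 3, 2, 3, 2, 45, 54]

national_mean = mean(rates)      # the single "national indicator"
typical = median(rates)          # what a typical locality looks like
above_mean = [r for r in rates if r > national_mean]

print(f"mean: {national_mean}, median: {typical}")
print(f"localities above the mean: {len(above_mean)} of {len(rates)}")
```

In this made-up case the mean is four times the median, and only two of the ten localities are above it. Showing the distribution (for example, one dot per locality) makes that visible where a single averaged bar would not.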

As Claus O. Wilke explains in his book "Fundamentals of Data Visualization," this practice can hide crucial patterns, outliers, and paradoxes that are precisely the most relevant to decision-making. To avoid this risk, the training proposes to visualize a graph as a system of layers that we must carefully build from the base:

1. Encoding

- It's the foundation of everything: how we translate data into visual attributes. Research in visual perception shows us that not all "visual channels" are equally effective. The hierarchy would be:

- Most effective: position, length and height

- Moderately effective: angle, area and slope

- Less effective: color, saturation, and shape

How do we put this into practice? For example, for accurate comparisons, a bar chart will almost always be a better choice than a pie chart. However, as the training materials point out, "effective" does not always mean "appropriate". A pie chart can be perfect when we want to express the idea of a "whole and its parts", even if accurate comparisons are more difficult.

2. Arrangement

- The positioning, ordering, and grouping of elements profoundly affects perception. Do we want the reader to compare between categories within a group, or between groups? The answer will determine whether we organize our visualization with grouped or stacked bars, with multiple panels, or in a single integrated view.

3. Scaffolding

- Titles, introductions, annotations, scales and legends are fundamental. At datos.gob.es we've seen how interactive visualizations can condense complex information, but without proper scaffolding, interactivity can confuse rather than clarify.

The value of a correct scale

One of the most delicate – and often most manipulable – technical aspects of a visualization is the choice of scale. A simple modification in the Y-axis can completely change the reader's interpretation: a mild trend may seem like a sudden crisis, or sustained growth may go unnoticed.

As mentioned in the second webinar in the series, scales are not a minor detail: they are a narrative component. Deciding where an axis begins, what intervals are used, or how time periods are represented involves making choices that directly affect one's perception of reality. For example, if an employment graph starts the Y-axis at 90% instead of 0%, the decline may seem dramatic, even if it's actually minimal.
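The size of this distortion can be estimated with simple arithmetic. The sketch below, in Python, compares how much a bar appears to shrink when the Y-axis starts at 0% versus 90%; the employment figures and the helper names `drawn_height` and `apparent_change` are hypothetical and serve only as illustration.

```python
def drawn_height(value: float, axis_start: float) -> float:
    """Height of a bar as drawn when the Y-axis begins at axis_start."""
    return value - axis_start


def apparent_change(before: float, after: float, axis_start: float) -> float:
    """Relative change in drawn bar height between two values."""
    h_before = drawn_height(before, axis_start)
    h_after = drawn_height(after, axis_start)
    return (h_after - h_before) / h_before


# Hypothetical employment rates (%), chosen only for illustration.
before, after = 95.0, 93.0

honest = apparent_change(before, after, axis_start=0.0)
truncated = apparent_change(before, after, axis_start=90.0)

print(f"axis from 0%:  bar shrinks by {abs(honest):.1%}")
print(f"axis from 90%: bar shrinks by {abs(truncated):.1%}")
print(f"visual exaggeration: about {truncated / honest:.0f}x")
```

With these assumed values, a decline of roughly 2% in the data is drawn as a 40% drop in bar height once the axis is truncated, which is the kind of gap between data and perception the webinar warns about.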

Therefore, scales must be honest with the data. Being "honest" doesn't mean giving up on design decisions, but rather clearly showing what decisions were made and why. If there is a valid reason for starting the Y-axis at a non-zero value, it should be explicitly explained in the graph or in its footnote. Transparency must prevail over drama.

Visual integrity not only protects the reader from misleading interpretations, but also reinforces the credibility of the communicator. In the field of public data, this honesty is not optional: it is an ethical commitment to the truth and to citizen trust.

Accessibility: Visualize for everyone

Another aspect that is often forgotten is accessibility. About 8% of men and 0.5% of women have some form of color blindness. Tools like Color Oracle allow you to simulate what our visualizations look like for people with different types of color vision deficiency.

In addition, the webinar mentioned the Chartability project, a methodology to evaluate the accessibility of data visualizations. In the Spanish public sector, where web accessibility is a legal requirement, this is not optional: it is a democratic obligation. Under this premise, the Spanish Federation of Municipalities and Provinces published a Data Visualization Guide for Local Entities.

Visual Storytelling: When Data Tells Stories

Once the technical issues have been resolved, we can address the narrative aspect that is increasingly important to communicate correctly. In this sense, the course proposes a simple but powerful method:

- Write a long sentence that summarizes the points you want to communicate.

- Break that phrase down into components, taking advantage of natural pauses.

- Transform those components into sections of your infographic.

This narrative approach is especially effective for projects like those found on data.europa.eu, where visualizations are combined with contextual explanations to communicate the value of high-value datasets, or in the data science and visualization exercises on datos.gob.es.

The future of data visualization also includes more creative and user-centric approaches. Projects that incorporate personalized elements, that allow readers to place themselves at the center of information, or that use narrative techniques to generate empathy, are redefining what we understand by "data communication".

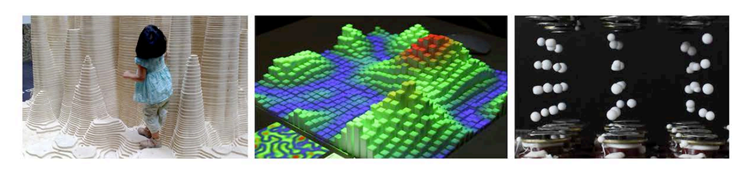

Alternative forms of "data sensification" are even emerging: physicalization (creating three-dimensional objects with data) and sonification (translating data into sound) open up new possibilities for making information more tangible and accessible. The Spanish company Tangible Data, which we echo in datos.gob.es because it reuses open datasets, is proof of this.

Figure 1. Examples of data sensification. Source: https://data.europa.eu/sites/default/files/course/webinar-data-visualisation-episode-3-slides.pdf

By way of conclusion, we can emphasize that integrity in design is not a luxury: it is an ethical requirement. Every graph we publish on official platforms influences how citizens perceive reality and make decisions. That is why mastering technical tools such as libraries and visualization APIs, which are discussed in other articles on the portal, is so relevant.

The next time you create a visualization with open data, don't just ask yourself "what tool do I use?" or "which chart looks best?". Ask yourself: what does my audience really need to know? Does this visualization respect data integrity? Is it accessible to everyone? The answers to these questions are what transform a beautiful graphic into a truly effective communication tool.

Education has the power to transform lives. Recognized as a fundamental right by the international community, it is a key pillar for human and social development. However, according to UNESCO data, 272 million children and young people still do not have access to school, 70% of countries spend less than 4% of their GDP on education, and 69 million more teachers are still needed to achieve universal primary and secondary education by 2030. In the face of this global challenge, open educational resources and open access initiatives are presented as decisive tools to strengthen education systems, reduce inequalities and move towards inclusive, equitable and quality education.

Open educational resources (OER) offer three main benefits: they harness the potential of digital technologies to solve common educational challenges; they act as catalysts for pedagogical and social innovation by transforming the relationship between teachers, students and knowledge; and they contribute to improving equitable access to high-quality educational materials.

What are Open Educational Resources (OER)

According to UNESCO, open educational resources are "learning, teaching and research materials in any format and medium that reside in the public domain or are under copyright that have been released under an open license." The concept, coined at the forum held in Paris in 2002, has as its fundamental characteristic that these resources permit "no-cost access, re-use, re-purpose, adaptation and redistribution by others".

OER encompasses a wide variety of formats, from full courses, textbooks, and curricula to maps, videos, podcasts, multimedia applications, assessment tools, mobile apps, databases, and even simulations.

Open educational resources are made up of three elements that work inseparably:

- Educational content: includes all kinds of material that can be used in the teaching-learning process, from formal objects to external and social resources. This is where open data would come in, which can be used to generate this type of resource.

- Technological tools: software that allows content to be developed, used, modified and distributed, including applications for content creation and platforms for learning communities.

- Open licenses: differentiating element that respects intellectual property while providing permissions for the use, adaptation and redistribution of materials.

Therefore, OER are mainly characterized by their universal accessibility, eliminating economic and geographical barriers that traditionally limit access to quality education.

Educational innovation and pedagogical transformation

Pedagogical transformation is one of the main impacts of open educational resources in the current educational landscape. OER are not simply free digital content, but catalysts for innovation that are redefining teaching-learning processes globally.

Combined with appropriate pedagogical methodologies and well-designed learning objectives, OER offer innovative new teaching options to enable both teachers and students to take a more active role in the educational process and even in the creation of content. They foster essential competencies such as critical thinking, autonomy and the ability to "learn to learn", overcoming traditional models based on memorization.

Educational innovation driven by OER is materialized through open technological tools that facilitate their creation, adaptation and distribution. Programs such as eXeLearning allow you to develop digital educational content in a simple way, while LibreOffice and Inkscape offer free alternatives for the production of materials.

The interoperability achieved through open standards, such as the IMS Global specifications or SCORM, ensures that these resources can be integrated into different platforms and therefore remain accessible to all users, including people with disabilities.

Another promising innovation for the future of OER is the combination of decentralized technologies like Nostr with authoring tools like LiaScript. This approach solves the dependency on central servers, allowing an entire course to be created and distributed over an open, censorship-resistant network. The result is a single, permanent link (a Nostr URI) that encapsulates all the material, giving the creator full sovereignty over its content and ensuring its durability. In practice, this is a revolution for universal access to knowledge. Educators share their work with the assurance that the link will always be valid, while students access the material directly, without the need for platforms or intermediaries. This technological synergy is a fundamental step to materialize the promise of a truly open, resilient and global educational ecosystem, where knowledge flows without barriers.

The potential of Open Educational Resources is realized thanks to the communities and projects that develop and disseminate them. Institutional initiatives, collaborative repositories and programmes promoted by public bodies and teachers ensure that OER are accessible, reusable and sustainable.

Collaboration and open learning communities

The collaborative dimension represents one of the fundamental pillars that support the open educational resources movement. This approach transcends borders and connects education professionals globally.

The educational communities around OER have created spaces where teachers share experiences, agree on methodological aspects and resolve doubts about the practical application of these resources. Coordination between professionals usually occurs on social networks or through digital channels such as Telegram, in which both users and content creators participate. This "virtual cloister" facilitates the effective implementation of active methodologies in the classroom.

Beyond the spaces that have arisen at the initiative of the teachers themselves, different organizations and institutions have promoted collaborative projects and platforms that facilitate the creation, access and exchange of Open Educational Resources, thus expanding their reach and impact on the educational community.

OER projects and repositories in Spain

In the case of Spain, Open Educational Resources have a consolidated ecosystem of initiatives that reflect the collaboration between public administrations, educational centres, teaching communities and cultural entities. Platforms such as Procomún, content creation projects such as EDIA (Educational, Digital, Innovative and Open) or CREA (Creation of Open Educational Resources), and digital repositories such as Hispana show the diversity of approaches adopted to make educational and cultural resources available to citizens in open access. Here's a little more about them:

- The EDIA (Educational, Digital, Innovative and Open) Project, developed by the National Center for Curriculum Development in Non-Proprietary Systems (CEDEC), focuses on the creation of open educational resources designed to be integrated into environments that promote digital competences and that are adapted to active methodologies. The resources are created with eXeLearning, which facilitates editing, and include templates, guides, rubrics and all the necessary documents to bring the didactic proposal to the classroom.

- The Procomún network was born as a result of the Digital Culture in School Plan launched in 2012 by the Ministry of Education, Culture and Sport. This repository currently has more than 74,000 resources and 300 learning itineraries, along with a multimedia bank of 100,000 digital assets under Creative Commons licenses, which can therefore be reused to create new materials. It also has a mobile application. Procomún also uses eXeLearning and the LOM-ES standard, which ensures a homogeneous description of the resources and facilitates their search and classification. In addition, it is built on semantic web technologies, which means that it can connect with existing communities through the Linked Open Data Cloud.

The autonomous communities have also promoted the creation of open educational resources. An example is CREA, a programme of the Junta de Extremadura aimed at the collaborative production of open educational resources. Its platform allows teachers to create, adapt and share structured teaching materials, integrating curricular content with active methodologies. The resources are generated in interoperable formats and are accompanied by metadata that facilitates their search, reuse and integration into different platforms.

There are similar initiatives, such as the REA-DUA project in Andalusia, which brings together more than 250 educational resources for primary, secondary and baccalaureate, with attention to diversity. For its part, Galicia launched cREAgal in the 2022-23 academic year; its portal currently has more than 100 primary and secondary education resources. This project focuses on inclusion and promotes the personal autonomy of students. In addition, some regional departments of education make open educational resources available, as is the case of the Canary Islands.

Hispana, the portal for access to Spanish cultural heritage

In addition to these initiatives aimed at the creation of educational resources, others have emerged that promote the collection of content that was not created for an educational purpose but that can be used in the classroom. This is the case of Hispana, a portal for aggregating digital collections from Spanish libraries, archives and museums.

To provide access to Spanish cultural and scientific heritage, Hispana collects and makes accessible the metadata of digital objects, allowing these objects to be viewed through links to the pages of the owner institutions. In addition to acting as a collector, Hispana also adds the content of institutions that wish to do so to Europeana, the European digital library, which allows increasing the visibility and reuse of resources.

Hispana is an OAI-PMH repository, which means that it uses the Open Archives Initiative – Protocol for Metadata Harvesting, an international standard for the collection and exchange of metadata between digital repositories. Thus, Hispana collects the metadata of the Spanish archives, museums and libraries that exhibit their collections with this protocol and sends them to Europeana.
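To give an idea of what this harvesting looks like in practice, here is a minimal sketch in Python. It builds a `ListRecords` request (one of the standard OAI-PMH verbs) with the `oai_dc` Dublin Core metadata prefix and extracts titles from a response. The endpoint URL, the helper names and the sample record are hypothetical and are not taken from Hispana; the response is an inline string so that the example runs without network access.

```python
from urllib.parse import urlencode
import xml.etree.ElementTree as ET

# Hypothetical endpoint; replace with a repository's real OAI-PMH base URL.
BASE_URL = "https://repository.example.org/oai"

# XML namespaces defined by OAI-PMH 2.0 and Dublin Core.
NS = {
    "oai": "http://www.openarchives.org/OAI/2.0/",
    "dc": "http://purl.org/dc/elements/1.1/",
}


def list_records_url(base_url: str, metadata_prefix: str = "oai_dc") -> str:
    """Build a ListRecords request, the verb a harvester uses to pull metadata."""
    query = urlencode({"verb": "ListRecords", "metadataPrefix": metadata_prefix})
    return f"{base_url}?{query}"


def extract_titles(xml_text: str) -> list[str]:
    """Return the Dublin Core titles of every record in a ListRecords response."""
    root = ET.fromstring(xml_text)
    titles = []
    for record in root.iterfind(".//oai:record", NS):
        for title in record.iterfind(".//dc:title", NS):
            if title.text:
                titles.append(title.text)
    return titles


# Made-up response with a single record, for illustration only.
SAMPLE_RESPONSE = """
<OAI-PMH xmlns="http://www.openarchives.org/OAI/2.0/">
  <ListRecords>
    <record>
      <header><identifier>oai:example.org:1</identifier></header>
      <metadata>
        <oai_dc:dc xmlns:oai_dc="http://www.openarchives.org/OAI/2.0/oai_dc/"
                   xmlns:dc="http://purl.org/dc/elements/1.1/">
          <dc:title>Sample digitized map</dc:title>
          <dc:type>image</dc:type>
        </oai_dc:dc>
      </metadata>
    </record>
  </ListRecords>
</OAI-PMH>
""".strip()

request_url = list_records_url(BASE_URL)
titles = extract_titles(SAMPLE_RESPONSE)
print(request_url)
print(titles)
```

A real harvester would also follow the `resumptionToken` that repositories return when a result set is split across several responses; that step is omitted here to keep the sketch short.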

International initiatives and global cooperation

At the global level, it is important to highlight the role of UNESCO through the Dynamic Coalition on OER, which seeks to coordinate efforts to increase the availability, quality and sustainability of these assets.

In Europe, ENCORE+ (European Network for Catalysing Open Resources in Education) seeks to strengthen the European OER ecosystem. Among its objectives is to create a network that connects universities, companies and public bodies to promote the adoption, reuse and quality of OER in Europe. ENCORE+ also promotes interoperability between platforms, metadata standardization and cooperation to ensure the quality of resources.

In Europe, other interesting initiatives have been developed, such as EPALE (Electronic Platform for Adult Learning in Europe), an initiative of the European Commission aimed at specialists in adult education. The platform contains studies, reports and training materials, many of them under open licenses, which contributes to the dissemination and use of OER.

In addition, there are numerous projects that generate and make available open educational resources around the world. In the United States, OER Commons functions as a global repository of educational materials of different levels and subjects. This project uses Open Author, an online editor that makes it easy for teachers without advanced technical knowledge to create and customize digital educational resources directly on the platform.

Another outstanding project is Plan Ceibal, a public program in Uruguay that represents a model of technological inclusion for equal opportunities. In addition to providing access to technology, it generates and distributes OER in interoperable formats, compatible with standards such as SCORM and structured metadata that facilitate its search, integration into learning platforms and reuse by teachers.

Along with initiatives such as these, there are others that, although they do not directly produce open educational resources, do encourage their creation and use through collaboration between teachers and students from different countries. This is the case for projects such as eTwinning and Global Classroom.

The strength of OER lies in their contribution to the democratization of knowledge, their collaborative nature, and their ability to promote innovative methodologies. By breaking down geographical, economic, and social barriers, open educational resources bring the right to education one step closer to becoming a universal reality.

The open data sector is very active. To keep up to date with everything that happens, at datos.gob.es we publish a compilation of news covering new technological applications, legislative advances and other related developments.

Six months ago, we published the final compilation for 2024. On this occasion, we are going to summarize some innovations, improvements and achievements of the first half of 2025.

Regulatory framework: new regulations that transform the landscape

One of the most significant developments is the publication of the Regulation on the European Health Data Space by the European Parliament and the Council. This regulation establishes a common framework for the secure exchange of health data between member states, facilitating both medical research and the provision of cross-border health services. In addition, this milestone represents a paradigmatic shift in the management of sensitive data, demonstrating that it is possible to reconcile privacy and data protection with the need to share information for the common good. The implications for the Spanish healthcare system are considerable, as it will allow greater interoperability with other European countries and facilitate the development of collaborative research projects.

On the other hand, the entry into force of the European AI Act establishes clear rules for the development of this technology, guaranteeing security, transparency and respect for human rights. These types of regulations are especially relevant in the context of open data, where algorithmic transparency and the explainability of AI models become essential requirements.

In Spain, the commitment to transparency is materialised in initiatives such as the new Digital Rights Observatory, which has the participation of more than 150 entities and 360 experts. This platform is configured as a space for dialogue and monitoring of digital policies, helping to ensure that the digital transformation respects fundamental rights.

Technological innovations in Spain and abroad

One of the most prominent milestones in the technological field is the launch of ALIA, the public infrastructure for artificial intelligence resources. This initiative seeks to develop open and transparent language models that promote the use of Spanish and Spanish co-official languages in the field of AI.

ALIA is not only a response to the hegemony of Anglo-Saxon models, but also a strategic commitment to technological sovereignty and linguistic diversity. The first models already available have been trained in Spanish, Catalan, Galician, Valencian and Basque, setting an important precedent in the development of inclusive and culturally sensitive technologies.

In relation to this innovation, the practical applications of artificial intelligence are multiplying in various sectors. For example, in the financial field, the Tax Agency has adopted an ethical commitment in the design and use of artificial intelligence. Within this framework, the community has even developed a virtual chatbot trained with its own data that offers legal guidance on fiscal and tax issues.

In the healthcare sector, a group of Spanish radiologists is working on a project for the early detection of oncological lesions using AI, demonstrating how the combination of open data and advanced algorithms can have a direct impact on public health.

Projects related to environmental sustainability have also been developed by combining AI with open data. One model developed in Spain combines AI and open weather data to predict solar energy production over the next 30 years, providing crucial information for national energy planning.

Another relevant sector in terms of technological innovation is that of smart cities. In recent months, Las Palmas de Gran Canaria has digitized its municipal markets by combining WiFi networks, IoT devices, a digital twin and open data platforms. This comprehensive initiative seeks to improve the user experience and optimize commercial management, demonstrating how technological convergence can transform traditional urban spaces.

Zaragoza, for its part, has developed a vulnerability map using artificial intelligence applied to open data, providing a valuable tool for urban planning and social policies.

Another relevant case is the project of the Open Data Barcelona Initiative, #iCuida, which stands out as an innovative example of reusing open data to improve the lives of caregivers and domestic workers. This application demonstrates how open data can target specific groups and generate direct social impact.

Last but not least, at a global level, this semester DeepSeek has launched DeepSeek-R1, a new family of generative models specialized in reasoning, publishing both the models and their complete training methodology in open source, contributing to the democratic advancement of AI.

New open data portals and improvement tools

In all this maelstrom of innovation and technology, the landscape of open data portals has been enriched with new sectoral initiatives. The Association of Commercial and Property Registrars of Spain has presented its open data platform, allowing immediate access to registry data without waiting for periodic reports. This initiative represents a significant change in the transparency of the registry sector.

In the field of health, the 'I+Health' portal of the Andalusian public health system collects and disseminates resources and data on research activities and results from a single site, facilitating access to relevant scientific information.

Beyond making data available, visualizing it makes it more accessible to the general public. The University of Granada has developed 'UGR in figures', an open-access space with an open data section that facilitates the exploration of official statistics and stands as a fundamental piece in university transparency.

For its part, IDENA, the new tool of the Navarre Geoportal, incorporates advanced functionalities to search, navigate, embed maps, share data and download geographical information, and it works on any device.

Training for the future: events and conferences

The training ecosystem in this field is strengthened every year with events such as the Data Management Summit in Tenerife, which addresses interoperability in public administrations and artificial intelligence. Another benchmark open data event, also held in the Canary Islands, was the National Open Data Meeting.

Beyond these events, collaborative innovation has also been promoted through specialized hackathons, such as the one dedicated to generative AI solutions for biodiversity or the Merkle Datathon in Gijón. These events not only generate innovative solutions, but also create communities of practice and foster emerging talent.

Once again, the open data competitions of Castilla y León and the Basque Country have awarded projects that demonstrate the transformative potential of the reuse of open data, inspiring new initiatives and applications.

International perspective and global trends: the fourth wave of open data

The Open Data Policy Lab spoke at the EU Open Data Days about what is known as the "fourth wave" of open data, closely linked to generative AI. This evolution represents a quantum leap in the way public data is processed, analyzed, and used, where natural language models allow for more intuitive interactions and more sophisticated analysis.

Overall, the open data landscape in 2025 reveals a profound transformation of the ecosystem, where the convergence between artificial intelligence, advanced regulatory frameworks, and specialized applications is redefining the possibilities of transparency and public innovation.

Artificial intelligence is no longer a thing of the future: it is here and can become an ally in our daily lives. From making tasks easier for us at work, such as writing emails or summarizing documents, to helping us organize a trip, learn a new language, or plan our weekly menus, AI adapts to our routines to make our lives easier. You don't have to be tech-savvy to take advantage of it; while today's tools are very accessible, understanding their capabilities and knowing how to ask the right questions will maximize their usefulness.

Passive and Active Users of AI

Artificial intelligence applications are transforming our daily lives and already reach many areas of our routines. Virtual assistants, such as Siri or Alexa, are among the best-known tools that incorporate artificial intelligence, and are used to answer questions, schedule appointments, or control devices.

Many people use tools or applications with artificial intelligence on a daily basis, even if it operates imperceptibly to the user and does not require their intervention. Google Maps, for example, uses AI to optimize routes in real time, predict traffic conditions, suggest alternative routes or estimate the time of arrival. Spotify applies it to personalize playlists or suggest songs, and Netflix to make recommendations and tailor the content shown to each user.

But it is also possible to be an active user of artificial intelligence using tools that interact directly with the models. Thus, we can ask questions, generate texts, summarize documents or plan tasks. AI is no longer a hidden mechanism but a kind of digital co-pilot that assists us in our day-to-day lives. ChatGPT, Copilot or Gemini are tools that allow us to use AI without having to be experts. This makes it easier for us to automate daily tasks, freeing up time to spend on other activities.

AI in Home and Personal Life

Virtual assistants respond to voice commands: they tell us the time or the weather, or play the music we want to listen to. But their possibilities go much further, as they are able to learn from our habits to anticipate our needs. They can control the different devices we have at home in a centralized way, such as heating, air conditioning, lights or security devices. It is also possible to configure custom actions that are triggered by a voice command. One example is a "good morning" routine that turns on the lights and informs us of the weather forecast and the traffic conditions.

When we have lost the manual of one of the appliances or electronic devices we have at home, artificial intelligence is a good ally. If we send it a photo of the device, it will help us interpret the instructions, set the device up, or troubleshoot basic issues.

If you want to go further, AI can do some everyday tasks for you. Through these tools we can plan our weekly menus, indicating needs or preferences, such as dishes suitable for celiacs or vegetarians, prepare the shopping list and obtain the recipes. It can also help us choose between the dishes on a restaurant's menu taking into account our preferences and dietary restrictions, such as allergies or intolerances. Through a simple photo of the menu, the AI will offer us personalized suggestions.

Physical exercise is another area of our personal lives in which these digital co-pilots are very valuable. We can ask them, for example, to create exercise routines adapted to different physical conditions, goals and available equipment.

Planning a vacation is another of the most interesting features of these digital assistants. If we provide them with a destination, a number of days, interests, and even a budget, we will have a complete plan for our next trip.

Applications of AI in studies

AI is profoundly transforming the way we study, offering tools that personalize learning. Helping the little ones in the house with their schoolwork, learning a language or acquiring new skills for our professional development are just some of the possibilities.

There are platforms that generate personalized content in just a few minutes and didactic material made from open data that can be used both in the classroom and at home to review. Among university students or high school students, some of the most popular options are applications that summarize or make outlines from longer texts. It is even possible to generate a podcast from a file, which can help us understand and become familiar with a topic while playing sports or cooking.

But we can also create our own applications to study or even to simulate exams. Without any programming knowledge, it is possible to generate an application to learn multiplication tables, irregular verbs in English or whatever else we can think of.

How to Use AI in Work and Personal Finance

In the professional field, artificial intelligence offers tools that increase productivity. In fact, it is estimated that in Spain 78% of workers already use AI tools in the workplace. By automating processes, we save time to focus on higher-value tasks. These digital assistants summarize long documents, generate specialized reports in a field, compose emails, or take notes in meetings.

Some platforms already incorporate the transcription of meetings in real time, something that can be very useful if we do not master the language. Microsoft Teams, for example, offers useful options through Copilot from the "Summary" tab of the meeting itself, such as transcription, a summary or the possibility of adding notes.

The management of personal finances has also evolved thanks to applications that use AI, allowing us to control expenses and manage a budget. But we can also create our own personal financial advisor using an AI tool, such as ChatGPT. When we provide it with information about our income, fixed and variable expenses, and savings goals, it analyzes the data and creates personalized financial plans.

Prompts and creation of useful applications for everyday life

We have seen the great possibilities that artificial intelligence offers us as a co-pilot in our day-to-day lives. But to make it a good digital assistant, we must know how to ask it and give it precise instructions.

A prompt is a basic instruction or request that is made to an AI model to guide it, with the aim of providing us with a coherent and quality response. Good prompting is the key to getting the most out of AI. It is essential to ask well and provide the necessary information.

To write effective prompts we have to be clear and specific, and avoid ambiguities. We must indicate what the objective is, that is, what we want the AI to do: summarize, translate, generate an image, etc. It is also key to provide it with context, explaining who the response is aimed at or why we need it, as well as what we expect the response to look like. This can include the tone of the message, the formatting, the sources used to generate it, etc.

Here are some tips for creating effective prompts:

- Use short, direct and concrete sentences. The clearer the request, the more accurate the answer. Avoid expressions such as "please" or "thank you", as they only add unnecessary noise and consume more resources. Instead, use words like "must," "do," "include," or "list." To reinforce the request, you can capitalize those words. These expressions are especially useful for fine-tuning a first response from the model that doesn't meet your expectations.

- Indicate the audience to which the answer is addressed. Specify whether it is aimed at an expert audience, an inexperienced audience, children, adolescents, adults, etc. When we want a simple answer, we can, for example, ask the AI to explain it to us as if we were ten years old.

- Use delimiters. Separate the parts of the prompt using a symbol, such as slashes (//) or quotation marks, to help the model understand the instruction better. For example, if you want it to do a translation, use delimiters to separate the command ("Translate into English") from the phrase it is supposed to translate.

- Indicate the role that the model should adopt to generate the response. Telling it whether it should act like an expert in finance or nutrition, for example, will help generate more specialized answers, as it will adapt both the content and the tone.

- Break down complex requests into simple requests. If you're going to make a complex request that requires an excessively long prompt, it's a good idea to break it down into simpler steps. If you need detailed explanations, use expressions like "Think step by step" to get a more structured answer.

- Use examples. Include examples of what you're looking for in the prompt to guide the model to the answer.

- Provide positive instructions. Instead of asking the model not to do or include something, state the request in the affirmative. For example, instead of "Don't use long sentences," say, "Use short, concise sentences." Positive instructions avoid ambiguities and make it easier for the AI to understand what it needs to do, because negative prompts require extra effort from the model, which has to deduce what the opposite action is.

- Offer tips or penalties. This serves to reinforce desired behaviors and restrict inappropriate responses. For example, "If you use vague or ambiguous phrases, you will lose 100 euros."

- Ask the model to ask you for what it needs. If we instruct it to ask us for additional information, we reduce the possibility of hallucinations, as we are improving the context of our request.

- Request that it respond like a human. If the texts seem too artificial or mechanical, specify in the prompt that the response should be more natural or read as if it had been written by a human.

- Provide the start of the answer. This simple trick is very useful in guiding the model towards the response we expect.

- Define the sources to use. If we narrow down the type of information the model should use to generate the answer, we will get more refined answers. Ask, for example, that it only use data from after a specific year.

- Request that it mimic a style. We can provide it with an example so that its response is consistent with the style of the reference, or ask it to follow the style of a famous author.
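
Several of the tips above (role, audience, positive instructions, delimiters, start of the answer) can be combined in a reusable template. The following is only a minimal sketch in Python: the function `build_prompt` and its parameter names are hypothetical, invented here for illustration, and the resulting text would still have to be pasted into whichever AI tool we use.

```python
def build_prompt(task, text, role=None, audience=None, style_rules=(), answer_start=None):
    """Assemble a prompt from the parts recommended in the tips (hypothetical helper)."""
    parts = []
    if role:
        # Role the model should adopt.
        parts.append(f"Act as {role}.")
    if audience:
        # Audience the answer is aimed at.
        parts.append(f"The answer is aimed at {audience}.")
    # The objective: what we want the AI to do.
    parts.append(task)
    # Positive instructions about tone or format.
    parts.extend(style_rules)
    # Delimiters (//) separate the instruction from the material to work on.
    parts.append(f"//\n{text}\n//")
    if answer_start:
        # Optional start of the answer to guide the model.
        parts.append(answer_start)
    return "\n".join(parts)


prompt = build_prompt(
    task="Translate into English the text between the slashes.",
    text="La inteligencia artificial ya está aquí.",
    role="an expert translator",
    audience="adults with no technical background",
    style_rules=["Use short, concise sentences."],
)
print(prompt)
```

Keeping each element in its own parameter makes it easy to adjust a single part, for example the audience, when the first response does not meet our expectations.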

While it is possible to generate functional code for simple tasks and applications without programming knowledge, it is important to note that developing more complex or robust solutions at a professional level still requires programming and software development expertise. To create, for example, an application that helps us manage our pending tasks, we can ask an AI tool to generate the code, explaining in detail what we want the application to do, how we expect it to behave, and what it should look like. From these instructions, the tool will generate the code and guide us to test, modify and implement it. We can also ask it how and where to run the code for free, and ask for help making improvements.
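
To give an idea of the kind of code such a request might produce, here is a minimal sketch of a pending-task manager in Python. It is an illustrative assumption, not the output of any particular tool: the names `Task`, `TaskList`, `add`, `complete` and `pending` are hypothetical, and tasks are kept only in memory.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    title: str
    done: bool = False


@dataclass
class TaskList:
    tasks: list = field(default_factory=list)

    def add(self, title):
        """Register a new pending task."""
        self.tasks.append(Task(title))

    def complete(self, title):
        """Mark the first task with this title as done; return False if not found."""
        for task in self.tasks:
            if task.title == title:
                task.done = True
                return True
        return False

    def pending(self):
        """Return the titles of the tasks that are still pending."""
        return [task.title for task in self.tasks if not task.done]


todo = TaskList()
todo.add("Buy groceries")
todo.add("Book flights")
todo.complete("Buy groceries")
print(todo.pending())  # prints ['Book flights']
```

From a starting point like this, we could ask the tool for improvements, such as saving the tasks to a file or adding due dates.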

As we've seen, the potential of these digital assistants is enormous, but their true power lies in large part in how we communicate with them. Clear and well-structured prompts are the key to getting accurate answers without needing to be tech-savvy. AI not only helps us automate routine tasks, but also expands our capabilities, allowing us to do more in less time. These tools are redefining our day-to-day lives, making them more efficient and leaving us time for other things. And best of all: they are now within our reach.