Training is one of the pillars that support the open data ecosystem in Europe. Publishing data is essential, but just as important is that there are capabilities to understand, reuse and manage it properly. In this context, the European Open Data Portal (data.europa.eu) offers an online training programme that allows you to become familiar with the open data ecosystem from different angles: basic concepts, legal frameworks, emerging trends, success stories or good practices of publication and reuse.

This program has incorporated a relevant novelty in 2026: learning paths or structured learning itineraries, which allow you to advance step by step in the domain of open data.

From datos.gob.es we want to publicize this update, which reinforces the European training offer and complements already consolidated initiatives. We tell you about it in this post.

What changes in 2026? Step-by-step itineraries

The main novelty is the incorporation of learning paths, conceived as structured training paths that group content (readings, videos and quizzes) in a logical and progressive order.

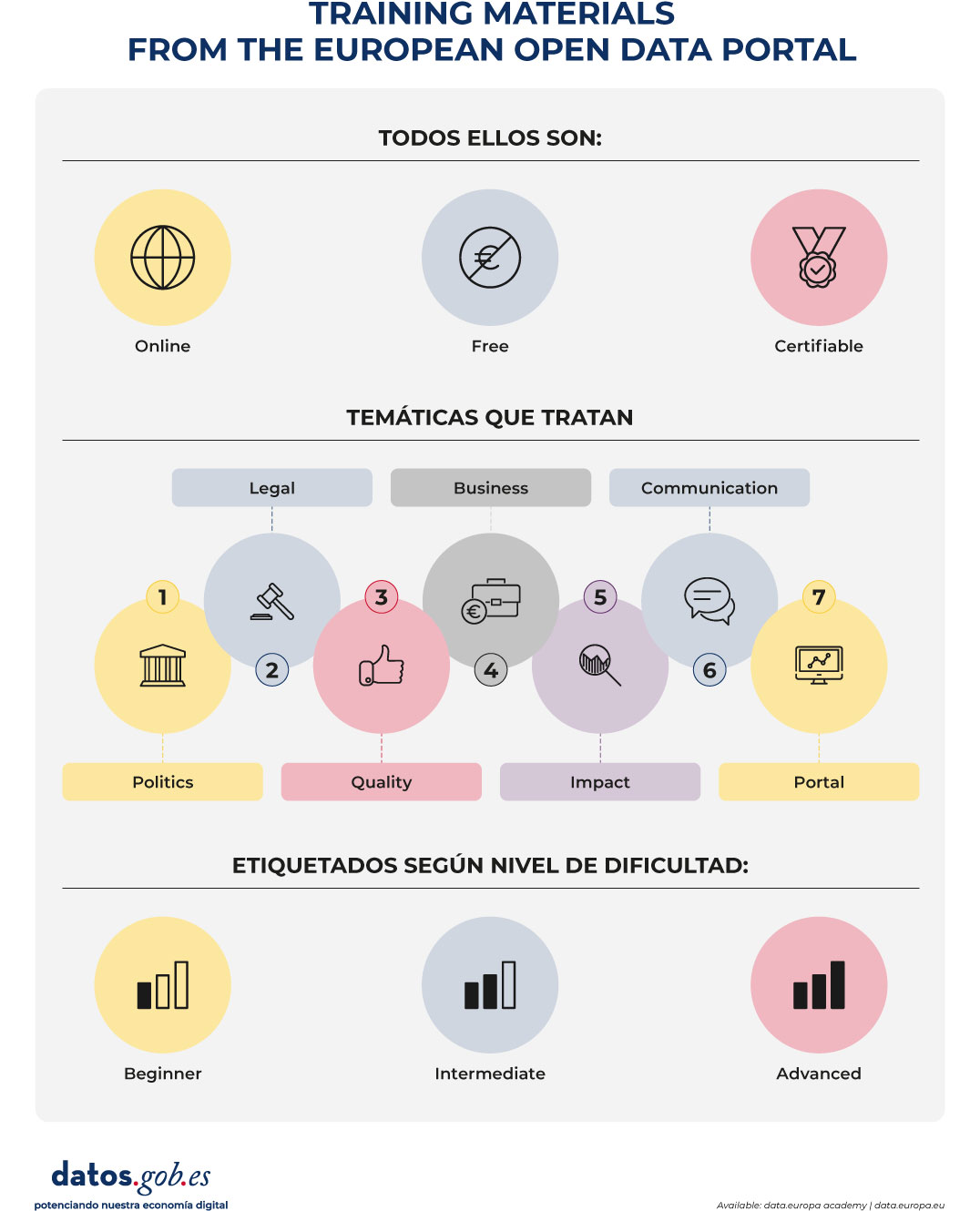

Until now, the academy allowed free access to courses organized by subject (Policy, Legal, Quality, Business, Impact, Communication and Portal) and level (beginner, intermediate or advanced). With the new itineraries, learning becomes a more guided experience:

- An itinerary is chosen according to the level of experience.

- Progress is made sequentially.

- Activities and questionnaires are carried out.

- A certification is obtained at the end.

This structure makes learning particularly easy for those who are looking for an orderly training, with clear objectives and a defined progression.

The new itineraries are especially aimed at the public sector, although anyone interested can take them. They are organized into three levels.

Figure 1. Free training process in open data via data.europe.eu

1. Beginner Level: The Basics of Open Data

Approximate duration: 4 hours and 23 minutes.

This itinerary provides a solid foundation for understanding:

- What is open data?

- What are its fundamental principles?

- How they are published.

- What benefits they generate for innovation, transparency and reuse.

It is intended for people who are new to working with data or want to understand the general framework of open data. It is also useful for non-technical profiles that need a strategic vision. The goal is to build a robust conceptual foundation before addressing more complex aspects.

2. Intermediate level: the legal and strategic framework

Approximate duration: 7 hours and 3 minutes.

The second itinerary delves into the legal and public policy aspects that underpin the European data strategy. Among the topics covered are:

- The European regulatory framework on data.

- The legal implications of information sharing.

- Reuse licenses.

- Regulatory compliance.

This level is especially relevant for transparency managers, legal advisors, portal managers and profiles involved in data governance.

Understanding the legal framework is a requirement to publish data with guarantees and encourage its reuse in a secure way and in accordance with European regulations.

3. Advanced level: quality and interoperability

Approximate duration: 4 hours and 39 minutes.

The third itinerary addresses two critical issues for the success of open data: quality and interoperability.

Content includes:

- Data quality principles and metrics.

- Interoperability methodologies.

- Standardization guidelines.

- Advanced metadata management.

- Application of European standards such as DCAT-AP.

This level is aimed at technical or strategic profiles that want to improve the coherence, accessibility and reuse of published data.

In a European context where cross-border interoperability is essential, adopting common standards is a condition for generating real impact.

Digital Certificates & Badges

One of the most attractive elements of the update is the possibility of obtaining official certificates upon completion of each training itinerary.

To get them, the process is simple:

- Complete all the modules of the itinerary.

- Pass the final quiz.

- Download the corresponding certificate.

In addition, the academy allows you to earn digital badges as you progress through the content. These credentials can be shared in professional profiles and are a tangible way to accredit open data competencies.

In a work environment where data literacy is increasingly in demand, having European certificates reinforces the professional profile and demonstrates commitment to continuous training.

Figure 2. Training materials from the European open data portal. Source: own elaboration

Continuous training as a strategic element

One of the strengths of the academy is its applied approach. The contents show how open data is connected to specific challenges such as improving public services, promoting innovation and economic development or transparency and the evaluation of public policies.

In addition, as it is a free and accessible online platform, it eliminates economic barriers and facilitates participation from any territory.

In this sense, learning paths represent a step forward towards a more structured, coherent and recognizable training. Because, by integrating content, evaluation and certification in a single journey, the academy reinforces the value of learning and makes it easier for each person to advance at their own pace.

The European data ecosystem is evolving rapidly. The European data strategy, sectoral data spaces and common interoperability standards require trained professionals aligned with a shared vision.

The incorporation of structured itineraries in the data.europa.eu academy is a commitment to strengthen the skills necessary for open data to generate public value. Because these new training itineraries define a clearer, more progressive and accessible learning path for the entire community. The academy update will roll out throughout 2026. From datos.gob.es we will continue to share relevant information for the Spanish open data community.

The construction of the ecosystem for the secondary use of e-health data in the European Health Data Space (EHDS) poses a significant scenario of opportunities for Spanish research, innovation and entrepreneurship. To this end, the European Union is promoting a multitude of strategic projects in which hospitals, health research foundations, universities, research centres and Spanish companies participate. The list of projects is extensive and aims to satisfy at least two objectives: to promote the generation of infrastructures capable of generating quality datasets and to promote conditions for their reuse.

The role of Spain. Strengths in the deployment of the European Health Area

Spain offers significantly favourable conditions not only to participate but also to contribute significantly to the tasks of creating the EHDS

- First, our public health system is characterized by a high level of integration and structuring. Unlike systems based on reimbursement mechanisms, in which there may be an atomisation in the field of service provision, in our system we have a clear frame of reference in primary care, medical specialities and hospital services.

- On the other hand, the experience deployed by our health environments from the General Data Protection Regulation (GDPR) and, particularly, the lessons learned from the seventeenth additional provision on health data processing of Organic Law 3/2018, of 5 December, on the Protection of Personal Data and guarantee of digital rights (LOPDGDD) they constitute a valuable experience.

- The opening of the National Health Data Space promoted by the Government of Spain and promoted by the Ministry for Digital Transformation and Public Function, the Ministry of Health and the Autonomous Communities allows the deployment of an essential infrastructure for the EHDS.

The National Health Data space was presented on January 29. The event highlighted how this project represents a paradigm shift that revolutionizes the management of health data, promoting a federated, secure and ethical model that preserves the sovereignty and privacy of information while facilitating its use for research, innovation and public policies. Its operation is based on a federated catalog of metadata and a rigorous process of access and analysis in secure environments, which seeks to promote open science and scientific and technological advances, benefiting patients, researchers, managers and industry.

Lessons learned from European Projects

The path taken by Regulation (EU) 2025/327 of the European Parliament and of the Council of 11 February 2025 on the European Health Data Space, amending Directive 2011/24/EU and Regulation (EU) 2024/2847 (EEDSR), poses significant challenges that are addressed in research projects funded by European and national funds. The lessons learned in some of them can be extraordinarily useful for the research and entrepreneurship community in our country. We cannot forget that we start from significant strengths.

1.-Compliance by design

The existence of a new regulation requires a rigorous analysis of the state of the art in our organizations, not only to implement its deployment but also to ensure the preconditions of legal reliability of the datasets and the research that is proposed.

2.-Accountability: proactive responsibility and documentary strength

In our country we come from a long tradition of accountability. The EEDSR will impose on the data requester a set of relevant documentary requirements, such as, for example, having provided safeguards to prevent any misuse of electronic health data. This issue cannot be neglected from the point of view of data holders, who will also have to meet certain requirements. For example, proving that data is legitimate and reusable is an ethical and legally documentable issue; and the simplified procedure for accessing electronic health data through a trusted health data holder requires the latter to document the security of its data space or capabilities to evaluate requests for access to health data.

One of the main obstacles we face in this intermediate period of implementation of the EHDS lies precisely in the organizational culture for the generation of verifiable evidence. As standardization and the set of common rules of the EEDS scale, it will be necessary to deepen the dynamics of proactive responsibility understood as demonstrated responsibility.

3. Secure processing environments

In our country, health environments by their very definition must be safe environments. The deployment of the National Security Scheme (ENS) and the GDPR have allowed the entire health system, public or private, to adopt maturity models that are perfectly consistent with the conditions of the secure processing environments defined by the EEDSR.

Challenges of the Spanish system

Along with the inherent strengths of our system, it is necessary to consider those aspects that present themselves as challenges.

1. Anonymisation and pseudonymisation

In the national context, the aforementioned seventeenth additional provision of Organic Law 3/2018, of 5 December, on the Protection of Personal Data and guarantee of digital rights, defines specific conditions for pseudonymisation. These consist of the functional separation between the teams that pseudonymize and those that reuse data, and the definition of a secure environment that prevents any attempt at re-identification. In addition, there are legal guarantees in terms of individual commitments not to re-identify, deployment of the impact assessment tool related to data protection and supervision by ethics committees. The challenge of anonymization is more demanding, since it implies the impossibility of linking health data with those of the original patient under any conditions.

2. Reeskilling of teams

The European Health Data Space (EHDS) will pose an unprecedented training challenge that will cut across all sectors involved in the health data ecosystem. Research ethics committees should familiarise themselves not only with the permissible secondary uses of health data, but also with the integration of the Artificial Intelligence Regulation and with the ethical principles of the ALTAI (Assessment List for Trustworthy Artificial Intelligence) framework. This need for reeskilling will also extend to health systems and health administration, where Health Data Access Bodies will require highly qualified personnel in these new ethical and regulatory frameworks, as well as reliable data holders who will safeguard sensitive information. Development staff and IT teams will also need to acquire new skills in critical technical areas, such as cataloguing, validation, and curation of data, as well as in interoperability standards that enable effective communication between systems. Perhaps the most sensitive training challenge will fall on new entrants, who will be able to take advantage of opportunities to access datasets for innovative secondary uses. This especially concerns technology startups in the health sector. To face a very demanding regulatory framework (GDPR, Regalmento de AI, EEDSR), the resources and capabilities for legal compliance in Spanish SMEs is notably limited. For this reason, it will be necessary to build a solid culture of data protection and ethical development of reliable artificial intelligence systems from the beginning.

3. Data cataloguing: the challenge of quality and standardization

In the context of the European Health Data Space, deepen the standardization of data through the most functional methodologies – such as OMOP CDM for observational clinical data, HL7 FHIR for dynamic information exchange, DICOM for medical imaging, or reference terminologies such as SNOMED CT, LOINC and RxNorm— is presented as a key strategic element for the creation and re-use of high-quality datasets. However, the adoption of these standards is not enough on its own: the processes of validation, semantic annotation and data enrichment require highly qualified human resources capable of ensuring the coherence, completeness and accuracy of the information, making this training a real precondition for effective participation in the European health data ecosystem. Alignment with the standardized cataloguing of datasets following the HealthDCAT-AP (Health Data Catalog Application Profile) standard, which allows the descriptive metadata of health data resources to be described in a homogeneous way, is presented as one of the immediate challenges, along with the implementation of the work that has been deployed in relation to the data utility quality label, a quality label that assesses the real usefulness of data for secondary uses and is becoming a seal of trust for users and researchers.

If previously in this article the very high capacities of the Spanish health system to generate health data in a systematic way and in significant volumes were highlighted, these aspects of cataloguing, standardization and quality certification will occupy an absolutely central place in designing optimal conditions of European competitiveness in their reuse, transforming the abundance of data into a real strategic advantage that allows Spain to position itself as a relevant player in the research and innovation landscape with electronic health data.

The experience of the EUCAIM project (Cancer Image EU)

The European Health Data Space Regulation aims to enable the secondary use of electronic health data across Europe through harmonised rules in a federated ecosystem. In the cancer arena, fragmented access to high-quality datasets slows down research, limits reproducibility and undermines Europe's ability to develop and validate reliable AI tools for oncology.

EUCAIM demonstrates the viability of an ecosystem for the secondary use of cancer through a federated model that allows cross-border access under harmonized rules guaranteeing adequate control of resources at the local level. And this is deployed through a set of enabling components:

1) A Secure Processing Environment (SPE) federated at European level

EUCAIM is creating a federated PES to enforce data access conditions, control processing, and support secure cross-border analysis under EEDS restrictions. This PES is fully in line with the requirements and measures laid down in Article 73 EEDSR for safe environments.

2) Overcoming the "anonymisation barrier"

EUCAIM promotes a layered anonymization strategy that combines dataholder-autonomous local anonymization processes with platform controls to enable datasets to remain useful for AI research and development. The importance of this approach lies in the fact that it aims to reconcile the protection of privacy with the practical need to have sets with large volumes of data characterized by their diversity.

3) Data cataloguing and standardization

EUCAIM aligns cataloguing with the HealthDCAT-AP principles whose main objective is to apply the FAIR principles, that is, to ensure that data is findable, accessible, interoperable and reusable.

4) Reduction of legal costs

EUCAIM has deployed its own compliance framework aimed at the General Data Protection Regulation and the Artificial Intelligence Regulation. To do this, a robust compliance framework is in place at the platform level that is deployed across complex data ecosystems. This is based on data protection impact assessments (included in the GDPR) with a particular focus on fundamental rights. It also incorporates training and professional retraining of users as a functional requirement, so that compliance capability becomes an essential feature.

5) Support for data users

EUCAIM offers significant advantages to data users, including researchers and AI developers, by establishing a transparent and well-governed environment for data access. The adoption of transparent governance criteria, clearly defined obligations and their technical application by the platform, provide data users with the guarantee that their access is adequate and lawful, fully auditable and remains stable over time. The platform's design ensures that users can leverage powerful data for advanced analytics, including federated processing in a secure environment. Through mandatory training and implementation of standardized procedures, teams benefit from less uncertainty and are better equipped to align with compliance requirements set forth by the EEDSR, GDPR, and AI governance frameworks.

6) Guarantee of patients' rights

EUCAIM's approach is based on data protection by design and by default that unites organisational safeguards with robust technical controls. This framework has been purpose-built to minimise the risk of data misuse, while supporting safe and effective cross-border cancer research and innovation. The result is a system in which the protection of privacy is not an obstacle but a fundamental element that allows the responsible use of data for the benefit of society and science. The model reinforces accountability for the secondary use of health data by combining strong governance oversight, a comprehensive record of actions, and strict and enforceable obligations for all participating entities. All actions taken with patient data are recorded and reviewed, ensuring that all uses are fully auditable. This traceability ensures that the processing of data is kept within the limits of the permitted use and that any deviations can be identified and addressed quickly.

Multi-level governance: the key to sustainable success

The most relevant lesson learned at EUCAIM concerns the imperative need for articulated, coherent and operational multilevel governance. In a broad sense, it is essential to provide effective governance tools and frameworks on three fundamental dimensions:

- Firstly, on the processes for generating datasets and their sharing conditions, establishing clear criteria on what data is generated, how it is standardised, who holds rights over it and under what licences and restrictions it can be shared with third parties.

- Second, on data access request processes, defining transparent and efficient procedures so that researchers, innovators, and policymakers can identify, request, and obtain access to the data needed for their projects, minimizing administrative burdens without compromising ethical and legal guarantees.

- Thirdly, on the processes of validating the correctness of the datasets and adherence to the system, as well as the procedures for authorising access to data, ensuring that only data of certified quality feed the infrastructure and that only authorised users with legitimate purposes access sensitive information.

This procedural governance cannot function without strategic and operational decisions regarding the definition of human resources roles and functions. To do this, it is necessary to have the necessary professional profiles such as data managers, experts in research ethics, cybersecurity specialists, data curators and quality managers. Secondly, it will be essential to define the secure processing environments where analyses are carried out on sensitive data, ensuring that these spaces comply with the highest technical standards of security, traceability, auditing and privacy preservation, and that they are designed to operate under the principle of zero trust) adapted to the health context. Only through this multi-level governance architecture, which integrates technical, organizational, ethical and legal dimensions at all levels of decision-making – from the design of national policies to the day-to-day operational management of platforms – will it be possible to build health data infrastructures that are truly sustainable, reliable and capable of generating long-term social, scientific and economic value. positioning the Spanish healthcare system as a strategic player in the European healthcare innovation ecosystem.

Content prepared by Ricard Martínez Martínez, Director of the Chair of Privacy and Digital Transformation, Department of Constitutional Law, University of Valencia. The contents and views expressed in this publication are the sole responsibility of the author.

La The European Commission has recently presented the document setting out a new EU Strategy in the field of data. Among other ambitious objectives, this initiative aims to address a transcendental challenge in the era of generative artificial intelligence: the insufficient availability of data under the right conditions.

Since the previous 2020 Strategy, we have witnessed an important regulatory advance that aimed to go beyond the 2019 regulation on open data and reuse of public sector information.

Specifically, on the one hand, the Data Governance Act served to promote a series of measures that tended to facilitate the use of data generated by the public sector in those cases where other legal rights and interests were affected – personal data, intellectual property.

On the other hand, through the Data Act, progress was made, above all, in the line of promoting access to data held by private subjects, taking into account the singularities of the digital environment.

The necessary change of focus in the regulation on access to data.

Despite this significant regulatory effort, the European Commission has detected an underuse of data , which is also often fragmented in terms of the conditions of its accessibility. This is due, in large part, to the existence of significant regulatory diversity. Measures are therefore needed to facilitate the simplification and streamlining of the European regulatory framework on data.

Specifically, it has been found that there is regulatory fragmentation that generates legal uncertainty and disproportionate compliance costs due to the complexity of the applicable regulatory framework itself. Specifically, the overlap between the General Data Protection Regulation (GDPR), the Data Governance Act, the Data Act, the Open Data Directive and, likewise, the existence of sectoral regulations specific to some specific areas has generated a complex regulatory framework which is difficult to face, especially if we think about the competitiveness of small and medium-sized companies. Each of these standards was designed to address specific challenges that were addressed successively, so a more coherent overview is needed to resolve potential inconsistencies and ultimately facilitate their practical implementation.

In this regard, the Strategy proposes to promote a new legislative instrument – the proposal for a Regulation called Digital Omnibus – which aims to consolidate the rules relating to the European single market in the field of data into a single standard. Specifically, with this initiative:

- The provisions of the Data Governance Act are merged into the regulation of the Data Act, thus eliminating duplications.

- The Regulation on non-personal data, whose functions are also covered by the Data Act, is repealed;

- Public sector data standards are integrated into the Data Act, as they were previously included in both the 2019 Directive and the Data Governance Act.

This regulation therefore consolidates the role of the Data Act as a general reference standard in the field. It also strengthens the clarity and precision of its forecasts, with the aim of facilitating its role as the main regulatory instrument through which it is intended to promote the accessibility of data in the European digital market.

Modifications in terms of personal data protection

The Digital Omnibus proposal also includes important new features with regard to the regulations on the protection of personal data, amending several provisions of Regulation (EU) 1016/679 of the European Parliament and of the Council of 27 April 2016 on the protection of natural persons with regard to the processing of personal data.

In order for personal data to be used – that is, any information referring to an identified or identifiable natural person – it is necessary that one of the circumstances referred to in Article 6 of the aforementioned Regulation is present, including the consent of the owner or the existence of a legitimate interest on the part of the person who is going to process the data.

Legitimate interest allows personal data to be processed when it is necessary for a valid purpose (improving a service, preventing fraud, etc.) and does not adversely affect the rights of the individual.

Source: Guide on legitimate interest. ISMS Forum and Data Privacy Institute. Available here: guiaintereslegitimo1637794373.pdf

Regarding the possibility of resorting to legitimate interest as a legal basis for training artificial intelligence tools, the current regulation allows the processing of personal data as long as the rights of the interested parties who own such data do not prevail.

However, given the generality of the concept of "legitimate interest", when deciding when personal data may be used under this clause , there will not always be absolute certainty, it will be necessary to analyse on a case-by-case basis: specifically, it will be necessary to carry out an activity of weighing the conflicting legal interests and, therefore, its application may give rise to reasonable doubts in many cases.

Although the European Data Protection Board has tried to establish some guidelines to specify the application of legitimate interest, the truth is that the use of open and indeterminate legal concepts will not always allow clear and definitive answers to be reached. To facilitate the specification of this expression in each case, the Strategy refers as a criterion to takeinto account the potential benefit that the processing may entail for the data subject and for society in general. Likewise, given that the consent of the owner of the data will not be necessary – and therefore, its revocation would not be applicable – it reinforces the right of opposition by the owner to the processing of their data and, above all, guarantees greater transparency regarding the conditions under which the data will be processed. Thus, by strengthening the legal position of the data subject and referring to this potential benefit, the Strategy aims to facilitate the use of legitimate interest as a legal basis for the use of personal data without the consent of the data subject, but with appropriate safeguards.

Another major data protection measure concerns the distinction between anonymised and pseudonymised data. The GDPR defines pseudonymisation as data processing that, until now, could no longer be attributed to a data subject without recourse to additional, separate information. However, pseudonymised data is still personal data and, therefore, subject to this regulation. On the other hand, anonymous data does not relate to identified or identifiable persons and therefore its use would not be subject to the GDPR. Consequently, in order to know whether we are talking about anonymous or pseudo-nimized data, it is essential to specify whether there is a "reasonable probability" of identifying the owner of the data.

However, the technologies currently available multiply the risk of re-identification of the data subject, which directly affects what could be considered reasonable, generating uncertainty that has a negative impact on technological innovation. For this reason, the Digital Omnibus proposal, along the lines already stated by the Court of Justice of the European Union, aims to establish the conditions under which pseudonymised data could no longer be considered personal data, thus facilitating its use. To this end, it empowers the European Commission, through implementing acts, to specify such circumstances, in particular taking into account the state of the art and, likewise, offering criteria that allow the risk of re-identification to be assessed in each specific case.

Scaling High-Value Datasets

The Strategy also aims to expand the catalogue of High Value Data (HVD) provided for in Implementing Regulation (EU) 2023/138. These are datasets with exceptional potential to generate social, economic and environmental benefits, as they are high-quality, structured and reliable data that are accessible under technical, organisational and semantic conditions that are very favourable for automated processing. Six categories are currently included (geospatial, Earth observation and environment, meteorology, statistics, business and mobility), to which the Commission would add, among other things, legal, judicial and administrative data.

Opportunity and challenge

The European Data Strategy represents a paradigmatic shift that is certainly relevant: it is not only a matter of promoting regulatory frameworks that facilitate the accessibility of data at a theoretical level but, above all, of making them work in their practical application, thus promoting the necessary conditions of legal certainty that allow a competitive and innovative data economy to be energized.

To this end, it is essential, on the one hand, to assess the real impact of the measures proposed through the Digital Omnibus and, on the other, to offer small and medium-sized enterprises appropriate legal instruments – practical guides, suitable advisory services, standard contractual clauses, etc. – to face the challenge that regulatory compliance poses for them in a context of enormous complexity. Precisely, this difficulty requires, on the part of the supervisory authorities and, in general, of public entities, to adopt advanced and flexible data governance models that adapt to the singularities posed by artificial intelligence, without affecting legal guarantees.

Content prepared by Julián Valero, professor at the University of Murcia and coordinator of the Innovation, Law, and Technology Research Group (iDerTec). The content and views expressed in this publication are the sole responsibility of the author.

In the era of Artificial Intelligence (AI), data has ceased to be simple records and has become the essential fuel of innovation. However, for this fuel to really drive new services, more effective public policies or advanced AI models, it is not enough to have large volumes of information: the data must be varied, of quality and, above all, accessible.

In this context, the data pooling or Data Clustering, a practice that consists of Pooling data to generate greater value from their joint use. Far from being an abstract idea, the data pooling is emerging as one of the key mechanisms for transforming the data economy in Europe and has just received a new impetus with the proposal of the Digital Omnibus, aimed at simplifying and strengthening the European data-sharing framework.

As we already analyzed in our recent post on the Data Union Strategy, the European Union aspires to build a Single Data Market in which information can flow safely and with guarantees. The data pooling it is, precisely, the Operational tool which makes this vision tangible, connecting data that is now dispersed between administrations, companies and sectors.

But what exactly does "data pooling" mean? Why is this concept being talked about more and more in the context of the European data strategy and the new Digital Omnibus? And, above all, what opportunities does it open up for public administrations, companies and data reusers? In this article we try to answer these questions.

What is data pooling, how does it work and what is it for?

To understand what data pooling is, it can be helpful to think about a traditional agricultural cooperative. In it, small producers who, individually, have limited resources decide to pool their production and their means. By doing so, they gain scale, access better tools, and can compete in markets they wouldn't reach separately.

In the digital realm, data pooling works in a very similar way. It consists of combining or grouping datasets from different organizations or sources to analyze or reuse them with a shared goal. Creating this "common repository" of information—physical or logical—enables more complex and valuable analyses that could hardly be performed from a single isolated source.

This "pooling of data" can take different forms, depending on the technical and organizational needs of each initiative:

- Shared repositories, where multiple organizations contribute data to the same platform.

- Joint or federated access, where data remains in its source systems, but can be analyzed in a coordinated way.

- Governance agreements, which set out clear rules about who can access data, for what purpose, and under what conditions.

In all cases, the central idea is the same: each participant contributes their data and, in return, everyone benefits from a greater volume, diversity and richness of information, always under previously agreed rules.

What is the purpose of sharing data?

The growing interest in data pooling is no coincidence. Sharing data in a structured way allows, among other things:

- Detect patterns that are not visible with isolated data, especially in complex areas such as mobility, health, energy or the environment.

- Enhance the development of artificial intelligence, which needs diverse, quality data at scale to generate reliable results.

- Avoiding duplication, reducing costs and efforts in both the public and private sectors.

- To promote innovation, facilitating new services, comparative studies or predictive analysis.

- Strengthen evidence-based decision-making, a particularly relevant aspect in the design of public policies.

In other words, data pooling multiplies the value of existing data without the need to always generate new sets of information.

Different types of data pooling and their value

Not all data pools are created equal. Depending on the context and the objective pursued, different models of data grouping can be identified:

- M2M (Machine-to-Machine) data pooling, very common in the Internet of Things (IoT). For example, when industrial sensor manufacturers pool data from thousands of machines to anticipate failures or improve maintenance.

- Cross-sector or cross-sector data pooling, which combines data from different sectors – such as transport and energy – to optimise services, for example, the management of electric vehicle charging in smart cities.

- Data pooling for research, especially relevant in the field of health, where hospitals or research centers share anonymized data to train algorithms capable of detecting rare diseases or improving diagnoses.

These examples show that data pooling is not a single solution, but a set of adaptable practices, capable of generating economic, social and scientific value when applied with the appropriate guarantees.

From potential to practice: guarantees, clear rules and new opportunities for data pooling

Talking about sharing data does not mean doing it without limits. For data pooling to build trust and sustainable value, it is imperative to address how to share data responsibly. This has been, in fact, one of the great challenges that have conditioned its adoption in recent years.

Among the main concerns are the Protection of personal data, ensuring compliance with the General Data Protection Regulation (GDPR) and minimizing risks of re-identification; the confidentiality and the protection of trade secrets, especially when companies are involved; as well as the Quality and interoperability of the data, as combining inconsistent information can lead to erroneous conclusions. To all this is added a transversal element: the Trust between the parties, without which no sharing mechanism can function.

For this reason, data pooling is not just a technical issue. It requires clear legal frameworks, strong governance models, and trust mechanisms, which provide security to both those who share the data and those who reuse it.

Europe's role: from sharing data to creating ecosystems

Aware of these challenges, the European Union has been working for years to build a Single Data Market, where sharing information is simpler, safer and more beneficial for all actors involved. In this context, key initiatives have emerged, such as the European Data Spaces, organized by strategic sectors (health, mobility, industry, energy, agriculture), the promotion of Standards and Interoperability, and the appearance of Data Brokers as trusted third parties who facilitate sharing.

Data pooling fits fully into this vision: it is one of the practical mechanisms that allow these data spaces to work and generate real value. By facilitating the aggregation and joint use of data, pooling acts as the "engine" that makes many of these ecosystems operational.

All this is part of the Data Union Strategy, which seeks to connect policies, infrastructures and standards so that data can flow safely and efficiently throughout Europe.

The big brake: regulatory fragmentation

Until recently, this potential was met with a major hurdle: the Complexity of the European legal framework on data. An organization that would like to participate in a data pool cross-border had to navigate between multiple rules – GDPR, Data Governance Act, Data Act, Open Data Directive and sectoral or national regulations—with definitions, obligations, and competent authorities that are not always aligned. This fragmentation generated legal uncertainty: doubts about responsibilities, fear of sanctions, or uncertainty about the real protection of trade secrets. In practice, this "normative labyrinth" has for years held back the development of many common data spaces and limited the adoption of the data pooling, especially among SMEs and medium-sized companies with less legal and technical capacity.

The Digital Omnibus: Simplifying for Data Pooling to Scale

This is where the Digital Omnibus, the European Commission's proposal to simplify and harmonise the digital legal framework, comes into play. Far from adding new regulatory layers, the objective of the Omnibus is to organize, consolidate and reduce administrative burdens, making it easier to share data in practice.

From a data pooling perspective, the message is clear: less fragmentation, more clarity, and greater trust. The Omnibus seeks to concentrate the rules in a more coherent framework, avoid duplication and remove unnecessary barriers that until now discouraged data-driven collaboration, especially in cross-border projects.

In addition, the role of data intermediation services, key actors in organizing pooling in a neutral and reliable way, is reinforced. By clarifying their role and reducing certain burdens, it favors the emergence of new models – including technology startups – capable of acting as "arbiters" of data exchange between multiple participants.

Another particularly relevant element is the strengthening of the protection of trade secrets, allowing data holders to limit or deny access when there is a real risk of misuse or transfer to environments without adequate guarantees. This point is key for industrial and strategic sectors, where trust is an essential condition for sharing data.

New opportunities for data pooling: public sector, companies and data reuse

The regulatory simplification and confidence-building introduced by the Digital Omnibus is not an end in itself. Its true value lies in the concrete opportunities that data pooling opens up for different actors in the data ecosystem, especially for the public sector, companies and information reusers.

In the case of public administrations, data pooling offers particularly relevant potential. It allows data from different sources and administrative levels to be combined to improve the design and evaluation of public policies, move towards evidence-based decision-making and offer more effective and personalised services to citizens. At the same time, it facilitates the breaking down of information silos, the reuse of already available data and the reduction of duplications, with the consequent savings in costs and efforts.

In addition, data pooling reinforces collaboration between the public sector, the research field and the private sector, always under secure and transparent frameworks. In this context, it does not compete with open data, but complements it, making it possible to connect datasets that are currently published in a fragmented way and enabling more advanced analyses that expand their social and economic value.

From a business point of view, the Digital Omnibus introduces a significant novelty by expanding the focus beyond traditional SMEs. The so-called small mid-caps, mid-cap companies that also suffer the impact of bureaucracy, are now benefiting from regulatory simplification. This significantly increases the base of organizations capable of participating in data pooling schemes and expands the volume and diversity of data available in strategic sectors such as industry, automotive or chemicals.

The economic impact of this new scenario is also relevant. The European Commission estimates significant savings in administrative and operational costs, both for companies and public administrations. But beyond the numbers, these savings represent freed up capacity to innovate, invest in new digital services, and develop more advanced AI models, fueled by data that can now be shared more securely.

In short, data pooling is consolidated as a key lever to move from the punctual sharing of data to the systematic generation of value, laying the foundations for a more collaborative, efficient and competitive data economy in Europe.

Conclusion: Cooperate to compete

The proposal of data pooling in the Digital Omnibus marks a before and after in the way we understand the ownership of information. Europe has understood that, in the global data economy, sovereignty is not defended by closing borders, but by creating secure environments where collaboration is the simplest and most profitable option.

Data pooling is at the heart of this transformation. By cutting red tape, simplifying notifications, and protecting trade secrets, the Omnibus is taking the stones out of the way so that businesses and citizens can enjoy the benefits of a true Data Union.

In short, it is a question of moving from an economy of isolated silos to one of connected networks. Because, in the world of data, sharing is not losing control, it is gaining scale.

Content created by Dr. Fernando Gualo, Professor at UCLM and Government and Data Quality Consultant. The content and views expressed in this publication are the sole responsibility of the author.

On 19 November, the European Commission presented the Data Union Strategy, a roadmap that seeks to consolidate a robust, secure and competitive European data ecosystem. This strategy is built around three key pillars: expanding access to quality data for artificial intelligence and innovation, simplifying the existing regulatory framework, and protecting European digital sovereignty. In this post, we will explain each of these pillars in detail, as well as the implementation timeline of the plan planned for the next two years.

Pillar 1: Expanding access to quality data for AI and innovation

The first pillar of the strategy focuses on ensuring that companies, researchers and public administrations have access to high-quality data that allows the development of innovative applications, especially in the field of artificial intelligence. To this end, the Commission proposes a number of interconnected initiatives ranging from the creation of infrastructure to the development of standards and technical enablers. A series of actions are established as part of this pillar: the expansion of common European data spaces, the development of data labs, the promotion of the Cloud and AI Development Act, the expansion of strategic data assets and the development of facilitators to implement these measures.

1.1 Extension of the Common European Data Spaces (ECSs)

Common European Data Spaces are one of the central elements of this strategy:

-

Planned investment: 100 million euros for its deployment.

-

Priority sectors: health, mobility, energy, (legal) public administration and environment.

-

Interoperability: SIMPL is committed to interoperability between data spaces with the support of the European Data Spaces Support Center (DSSC).

-

Key Applications:

-

European Health Data Space (EHDS): Special mention for its role as a bridge between health data systems and the development of AI.

-

New Defence Data Space: for the development of state-of-the-art systems, coordinated by the European Defence Agency.

-

1.2 Data Labs: the new ecosystem for connecting data and AI development

The strategy proposes to use Data Labs as points of connection between the development of artificial intelligence and European data.

These labs employ data pooling, a process of combining and sharing public and restricted data from multiple sources in a centralized repository or shared environment. All this facilitates access and use of information. Specifically, the services offered by Data Labs are:

-

Makes it easy to access data.

-

Technical infrastructure and tools.

-

Data pooling.

-

Data filtering and labeling

-

Regulatory guidance and training.

-

Bridging the gap between data spaces and AI ecosystems.

Implementation plan:

-

First phase: the first Data Labs will be established within the framework of AI Factories (AI gigafactories), offering data services to connect AI development with European data spaces.

-

Sectoral Data Labs: will be established independently in other areas to cover specific needs, for example, in the energy sector.

-

Self-sustaining model: It is envisaged that the Data Labs model can be deployed commercially, making it a self-sustaining ecosystem that connects data and AI.

1.3 Cloud and AI Development Act: boosting the sovereign cloud

To promote cloud technology, the Commission will propose this new regulation in the first quarter of 2026. There is currently an open public consultation in which you can participate here.

1.4 Strategic data assets: public sector, scientific, cultural and linguistic resources

On the one hand, in 2026 it will be proposed to expand the list of high-value data in English or HVDS to include legal, judicial and administrative data, among others. And on the other hand, the Commission will map existing bases and finance new digital infrastructure.

1.5 Horizontal enablers: synthetic data, data pooling, and standards

The European Commission will develop guidelines and standards on synthetic data and advanced R+D in techniques for its generation will be funded through Horizon Europe.

Another issue that the EU wants to promote is data pooling, as we explained above. Sharing data from early stages of the production cycle can generate collective benefits, but barriers persist due to legal uncertainty and fear of violating competition rules. Its purpose? Make data pooling a reliable and legally secure option to accelerate progress in critical sectors.

Finally, in terms of standardisation, the European standardisation organisations (CEN/CENELEC) will be asked to develop new technical standards in two key areas: data quality and labelling. These standards will make it possible to establish common criteria on how data should be to ensure its reliability and how it should be labelled to facilitate its identification and use in different contexts.

Pillar 2: Regulatory simplification

The second pillar addresses one of the challenges most highlighted by companies and organisations: the complexity of the European regulatory framework on data. The strategy proposes a series of measures aimed at simplifying and consolidating existing legislation.

2.1 Derogations and regulatory consolidation: towards a more coherent framework

The aim is to eliminate regulations whose functions are already covered by more recent legislation, thus avoiding duplication and contradictions. Firstly, the Free Flow of Non-Personal Data Regulation (FFoNPD) will be repealed, as its functions are now covered by the Data Act. However, the prohibition of unjustified data localisation, a fundamental principle for the Digital Single Market, will be explicitly preserved.

Similarly, the Data Governance Act (European Data Governance Regulation or DGA) will be eliminated as a stand-alone rule, migrating its essential provisions to the Data Act. This move simplifies the regulatory framework and also eases the administrative burden: obligations for data intermediaries will become lighter and more voluntary.

As for the public sector, the strategy proposes an important consolidation. The rules on public data sharing, currently dispersed between the DGA and the Open Data Directive, will be merged into a single chapter within the Data Act. This unification will facilitate both the application and the understanding of the legal framework by public administrations.

2.2 Cookie reform: balancing protection and usability

Another relevant detail is the regulation of cookies, which will undergo a significant modernization, being integrated into the framework of the General Data Protection Regulation (GDPR). The reform seeks a balance: on the one hand, low-risk uses that currently generate legal uncertainty will be legalized; on the other, consent banners will be simplified through "one-click" systems. The goal is clear: to reduce the so-called "user fatigue" in the face of the repetitive requests for consent that we all know when browsing the Internet.

2.3 Adjustments to the GDPR to facilitate AI development

The General Data Protection Regulation will also be subject to a targeted reform, specifically designed to release data responsibly for the benefit of the development of artificial intelligence. This surgical intervention addresses three specific aspects:

-

It clarifies when legitimate interest for AI model training may apply.

-

It defines more precisely the distinction between anonymised and pseudonymised data, especially in relation to the risk of re-identification.

-

It harmonises data protection impact assessments, facilitating their consistent application across the Union.

2. 4 Implementation and Support for the Data Act

The recently approved Data Act will be subject to adjustments to improve its application. On the one hand, the scope of business-to-government ( B2G) data sharing is refined, strictly limiting it to emergency situations. On the other hand, the umbrella of protection is extended: the favourable conditions currently enjoyed by small and medium-sized enterprises (SMEs) will also be extended to medium-sized companies or small mid-caps, those with between 250 and 749 employees.

To facilitate the practical implementation of the standard, a model contractual clause for data exchange has already been published , thus providing a template that organizations can use directly. In addition, two additional guides will be published during the first quarter of 2026: one on the concept of "reasonable compensation" in data exchanges, and another aimed at clarifying the key definitions of the Data Act that may generate interpretative doubts.

Aware that SMEs may struggle to navigate this new legal framework, a Legal Helpdesk will be set up in the fourth quarter of 2025. This helpdesk will provide direct advice on the implementation of the Data Act, giving priority precisely to small and medium-sized enterprises that lack specialised legal departments.

2.5 Evolving governance: towards a more coordinated ecosystem

The governance architecture of the European data ecosystem is also undergoing significant changes. The European Data Innovation Board (EDIB) evolves from a primarily advisory body to a forum for more technical and strategic discussions, bringing together both Member States and industry representatives. To this end, its articles will be modified with two objectives: to allow the inclusion of the competent authorities in the debates on Data Act, and to provide greater flexibility to the European Commission in the composition and operation of the body.

In addition, two additional mechanisms of feedback and anticipation are articulated. The Apply AI Alliance will channel sectoral feedback, collecting the specific experiences and needs of each industry. For its part, the AI Observatory will act as a trend radar, identifying emerging developments in the field of artificial intelligence and translating them into public policy recommendations. In this way, a virtuous circle is closed where politics is constantly nourished by the reality of the field.

Pillar 3: Protecting European data sovereignty

The third pillar focuses on ensuring that European data is treated fairly and securely, both inside and outside the Union's borders. The intention is that data will only be shared with countries with the same regulatory vision.

3.1 Specific measures to protect European data

-

Publication of guides to assess the fair treatment of EU data abroad (Q2 2026):

-

Publication of the Unfair Practices Toolbox (Q2 2026):

-

Unjustified location.

-

Exclusion.

-

Weak safeguards.

-

The data leak.

-

-

Taking measures to protect sensitive non-personal data.

All these measures are planned to be implemented from the last quarter of 2025 and throughout 2026 in a progressive deployment that will allow a gradual and coordinated adoption of the different measures, as established in the Data Union Strategy.

In short, the Data Union Strategy represents a comprehensive effort to consolidate European leadership in the data economy. To this end, data pooling and data spaces in the Member States will be promoted, Data Labs and AI gigafactories will be committed to and regulatory simplification will be encouraged.

The European open data portal has published the third volume of its Use Case Observatory, a report that compiles the evolution of data reuse projects across Europe. This initiative highlights the progress made in four areas: economic, governmental, social and environmental impact.

The closure of a three-year investigation

Between 2022 and 2025, the European Open Data Portal has systematically monitored the evolution of various European projects. The research began with an initial selection of 30 representative initiatives, which were analyzed in depth to identify their potential for impact.

After two years, 13 projects continued in the study, including three Spanish ones: Planttes, Tangible Data and UniversiDATA-Lab. Its development over time was studied to understand how the reuse of open data can generate real and sustainable benefits.

The publication of volume III in October 2025 marks the closure of this series of reports, following volume I (2022) and volume II (2024). This last document offers a longitudinal view, showing how the projects have matured in three years of observation and what concrete impacts they have generated in their respective contexts.

Common conclusions

This third and final report compiles a number of key findings:

Economic impact

Open data drives growth and efficiency across industries. They contribute to job creation, both directly and indirectly, facilitate smarter recruitment processes and stimulate innovation in areas such as urban planning and digital services.

The report shows the example of:

- Naar Jobs (Belgium): an application for job search close to users' homes and focused on the available transport options.

This application demonstrates how open data can become a driver for regional employment and business development.

Government impact

The opening of data strengthens transparency, accountability and citizen participation.

Two use cases analysed belong to this field:

- Waar is mijn stemlokaal? (Netherlands): platform for the search for polling stations.

- Statsregnskapet.no (Norway): website to visualize government revenues and expenditures.

Both examples show how access to public information empowers citizens, enriches the work of the media, and supports evidence-based policymaking. All of this helps to strengthen democratic processes and trust in institutions.

Social impact

Open data promotes inclusion, collaboration, and well-being.

The following initiatives analysed belong to this field:

- UniversiDATA-Lab (Spain): university data repository that facilitates analytical applications.

- VisImE-360 (Italy): a tool to map visual impairment and guide health resources.

- Tangible Data (Spain): a company focused on making physical sculptures that turn data into accessible experiences.

- EU Twinnings (Netherlands): platform that compares European regions to find "twin cities"

- Open Food Facts (France): collaborative database on food products.

- Integreat (Germany): application that centralizes public information to support the integration of migrants.

All of them show how data-driven solutions can amplify the voice of vulnerable groups, improve health outcomes and open up new educational opportunities. Even the smallest effects, such as improvement in a single person's life, can prove significant and long-lasting.

Environmental impact

Open data acts as a powerful enabler of sustainability.

As with environmental impact, in this area we find a large number of use cases:

- Digital Forest Dryads (Estonia): a project that uses data to monitor forests and promote their conservation.

- Air Quality in Cyprus (Cyprus): platform that reports on air quality and supports environmental policies.

- Planttes (Spain): citizen science app that helps people with pollen allergies by tracking plant phenology.

- Environ-Mate (Ireland): a tool that promotes sustainable habits and ecological awareness.

These initiatives highlight how data reuse contributes to raising awareness, driving behavioural change and enabling targeted interventions to protect ecosystems and strengthen climate resilience.

Volume III also points to common challenges: the need for sustainable financing, the importance of combining institutional data with citizen-generated data, and the desirability of involving end-users throughout the project lifecycle. In addition, it underlines the importance of European collaboration and transnational interoperability to scale impact.

Overall, the report reinforces the relevance of continuing to invest in open data ecosystems as a key tool to address societal challenges and promote inclusive transformation.

The impact of Spanish projects on the reuse of open data

As we have mentioned, three of the use cases analysed in the Use Case Observatory have a Spanish stamp. These initiatives stand out for their ability to combine technological innovation with social and environmental impact, and highlight Spain 's relevance within the European open data ecosystem. His career demonstrates how our country actively contributes to transforming data into solutions that improve people's lives and reinforce sustainability and inclusion. Below, we zoom in on what the report says about them.

This citizen science initiative helps people with pollen allergies through real-time information about allergenic plants in bloom. Since its appearance in Volume I of the Use Case Observatory, it has evolved as a participatory platform in which users contribute photos and phenological data to create a personalized risk map. This participatory model has made it possible to maintain a constant flow of information validated by researchers and to offer increasingly complete maps. With more than 1,000 initial downloads and about 65,000 annual visitors to its website, it is a useful tool for people with allergies, educators and researchers.

The project has strengthened its digital presence, with increasing visibility thanks to the support of institutions such as the Autonomous University of Barcelona and the University of Granada, in addition to the promotion carried out by the company Thigis.

Its challenges include expanding geographical coverage beyond Catalonia and Granada and sustaining data participation and validation. Therefore, looking to the future, it seeks to extend its territorial reach, strengthen collaboration with schools and communities, integrate more data in real time and improve its predictive capabilities.

Throughout this time, Planttes has established herself as an example of how citizen-driven science can improve public health and environmental awareness, demonstrating the value of citizen science in environmental education, allergy management, and climate change monitoring.

The project transforms datasets into physical sculptures that represent global challenges such as climate change or poverty, integrating QR codes and NFC to contextualize the information. Recognized at the EU Open Data Days 2025, Tangible Data has inaugurated its installation Tangible climate at the National Museum of Natural Sciences in Madrid.

Tangible Data has evolved in three years from a prototype project based on 3D sculptures to visualize sustainability data to become an educational and cultural platform that connects open data with society. Volume III of the Use Case Observatory reflects its expansion into schools and museums, the creation of an educational program for 15-year-old students, and the development of interactive experiences with artificial intelligence, consolidating its commitment to accessibility and social impact.

Its challenges include funding and scaling up the education programme, while its future goals include scaling up school activities, displaying large-format sculptures in public spaces, and strengthening collaboration with artists and museums. Overall, it remains true to its mission of making data tangible, inclusive, and actionable.

UniversiDATA-Lab is a dynamic repository of analytical applications based on open data from Spanish universities, created in 2020 as a public-private collaboration and currently made up of six institutions. Its unified infrastructure facilitates the publication and reuse of data in standardized formats, reducing barriers and allowing students, researchers, companies and citizens to access useful information for education, research and decision-making.

Over the past three years, the project has grown from a prototype to a consolidated platform, with active applications such as the budget and retirement viewer, and a hiring viewer in beta. In addition, it organizes a periodic datathon that promotes innovation and projects with social impact.

Its challenges include internal resistance at some universities and the complex anonymization of sensitive data, although it has responded with robust protocols and a focus on transparency. Looking to the future, it seeks to expand its catalogue, add new universities and launch applications on emerging issues such as school dropouts, teacher diversity or sustainability, aspiring to become a European benchmark in the reuse of open data in higher education.

Conclusion

In conclusion, the third volume of the Use Case Observatory confirms that open data has established itself as a key tool to boost innovation, transparency and sustainability in Europe. The projects analysed – and in particular the Spanish initiatives Planttes, Tangible Data and UniversiDATA-Lab – demonstrate that the reuse of public information can translate into concrete benefits for citizens, education, research and the environment.

Did you know that less than two out of ten European companies use artificial intelligence (AI) in their operations? This data, corresponding to 2024, reveals the margin for improvement in the adoption of this technology. To reverse this situation and take advantage of the transformative potential of AI, the European Union has designed a comprehensive strategic framework that combines investment in computing infrastructure, access to quality data and specific measures for key sectors such as health, mobility or energy.

In this article we explain the main European strategies in this area, with a special focus on the Apply AI Strategy or the AI Continent Action Plan , adopted this year in October and April respectively. In addition, we will tell you how these initiatives complement other European strategies to create a comprehensive innovation ecosystem.

Context: Action plan and strategic sectors

On the one hand, the AI Continent Action Plan establishes five strategic pillars:

- Computing infrastructures: scaling computing capacity through AI Factories, AI Gigafactories and the Cloud and AI Act, specifically:

- AI factories: infrastructures to train and improve artificial intelligence models will be promoted. This strategic axis has a budget of 10,000 million euros and is expected to lead to at least 13 AI factories by 2026.

- Gigafactorie AI: the infrastructures needed to train and develop complex AI models will also be taken into account, quadrupling the capacity of AI factories. In this case, 20,000 million euros are invested for the development of 5 gigafactories.

- Cloud and AI Act: Work is being done on a regulatory framework to boost research into highly sustainable infrastructure, encourage investments and triple the capacity of EU data centres over the next five to seven years.

- Access to quality data: facilitate access to robust and well-organized datasets through the so-called Data Labs in AI Factories.

- Talent and skills: strengthening AI skills across the population, specifically:

- Create international collaboration agreements.

- To offer scholarships in AI for the best students, researchers and professionals in the sector.

- Promote skills in these technologies through a specific academy.

- Test a specific degree in generative AI.

- Support training updating through the European Digital Innovation Hub.

- Development and adoption of algorithms: promoting the use of artificial intelligence in strategic sectors.

- Regulatory framework: Facilitate compliance with the AI Regulation in a simple and innovative way and provide free and adaptable tools for companies.

On the other hand, the recently presented, in October 2025, Apply AI Strategy seeks to boost the competitiveness of strategic sectors and strengthen the EU's technological sovereignty, driving AI adoption and innovation across Europe, particularly among small and medium-sized enterprises. How? The strategy promotes an "AI first" policy, which encourages organizations to consider artificial intelligence as a potential solution whenever they make strategic or policy decisions, carefully evaluating both the benefits and risks of the technology. In addition, it encourages a European procurement approach, i.e. organisations, particularly public administrations, prioritise solutions developed in Europe. Moreover, special importance is given to open source AI solutions, because they offer greater transparency and adaptability, less dependence on external providers and are aligned with the European values of openness and shared innovation.

The Apply AI Strategy is structured in three main sections:

Flagship sectoral initiatives

The strategy identifies 11 priority areas where AI can have the greatest impact and where Europe has competitive strengths:

- Healthcare and pharmaceuticals: AI-powered advanced European screening centres will be established to accelerate the introduction of innovative prevention and diagnostic tools, with a particular focus on cardiovascular diseases and cancer.

- Robotics: Adoption will be driven for the adoption of European robotics connecting developers and user industries, driving AI-powered robotics solutions.

- Manufacturing, engineering and construction: the development of cutting-edge AI models adapted to industry will be supported, facilitating the creation of digital twins and optimisation of production processes.

- Defence, security and space: the development of AI-enabled European situational awareness and control capabilities will be accelerated, as well as highly secure computing infrastructure for defence and space AI models.

- Mobility, transport and automotive: the "Autonomous Drive Ambition Cities" initiative will be launched to accelerate the deployment of autonomous vehicles in European cities.

- Electronic communications: a European AI platform for telecommunications will be created that will allow operators, suppliers and user industries to collaborate on the development of open source technological elements.

- Energy: the development of AI models will be supported to improve the forecasting, optimization and balance of the energy system.

- Climate and environment: An open-source AI model of the Earth system and related applications will be deployed to enable better weather forecasting, Earth monitoring, and what-if scenarios.

- Agri-food: the creation of an agri-food AI platform will be promoted to facilitate the adoption of agricultural tools enabled by this technology.

- Cultural and creative sectors, and media: the development of micro-studios specialising in AI-enhanced virtual production and pan-European platforms using multilingual AI technologies will be incentivised.

- Public sector: A dedicated AI toolkit for public administrations will be built with a shared repository of good practices, open source and reusable, and the adoption of scalable generative AI solutions will be accelerated.

Cross-cutting support measures

For the adoption of artificial intelligence to be effective, the strategy addresses challenges common to all sectors, specifically:

- Opportunities for European SMEs: The more than 250 European Digital Innovation Hubs have been transformed into AI Centres of Expertise. These centres act as privileged access points to the European AI innovation ecosystem, connecting companies with AI Factories, data labs and testing facilities.

- AI-ready workforce: Access to practical AI literacy training, tailored to sectors and professional profiles, will be provided through the AI Skills Academy.

- Supporting the development of advanced AI: The Frontier AI Initiative seeks to accelerate progress on cutting-edge AI capabilities in Europe. Through this project, competitions will be created to develop advanced open-source artificial intelligence models, which will be available to public administrations, the scientific community and the European business sector.

- Trust in the European market: Disclosure will be strengthened to ensure compliance with the European Union's AI Regulation, providing guidance on the classification of high-risk systems and on the interaction of the Regulation with other sectoral legislation.

New governance system

In this context, it is particularly important to ensure proper coordination of the strategy. Therefore, the following is proposed:

- Apply AI Alliance: The existing AI Alliance becomes the premier coordination forum that brings together AI vendors, industry leaders, academia, and the public sector. Sector-specific groups will allow the implementation of the strategy to be discussed and monitored.

- AI Observatory: An AI Observatory will be established to provide robust indicators assessing its impact on currently listed and future sectors, monitor developments and trends.

Complementary strategies: science and data as the main axes

The Apply AI Strategy does not act in isolation, but is complemented by two other fundamental strategies: the AI in Science Strategy and the Data Union Strategy.

AI in Science Strategy

Presented together with the Apply AI Strategy, this strategy supports and incentivises the development and use of artificial intelligence by the European scientific community. Its central element is RAISE (Resource for AI Science in Europe), which was presented in November at the AI in Science Summit and will bring together strategic resources: funding, computing capacity, data and talent. RAISE will operate on two pillars: Science for AI (basic research to advance fundamental capabilities) and AI in Science (use of artificial intelligence for progress in different scientific disciplines).

Data Union Strategy

This strategy will focus on ensuring the availability of high-quality, large-scale datasets, essential for training AI models. A key element will be the Data Labs associated with the AI Factories, which will bring together and federate data from different sectors, linking with the corresponding European Common Data Spaces, making them available to developers under the appropriate conditions.

In short, through significant investments in infrastructure, access to quality data, talent development and a regulatory framework that promotes responsible innovation, the European Union is creating the necessary conditions for companies, public administrations and citizens to take advantage of the full transformative potential of artificial intelligence. The success of these strategies will depend on collaboration between European institutions, national governments, businesses, researchers and developers.

With 24 official languages and more than 60 regional and minority languages, the European Union is proud of its cultural and linguistic diversity. However, this richness also represents a significant challenge in the digital and technological sphere. Advances in artificial intelligence (AI) and natural language processing have been dominated by English, creating a noticeable imbalance in the availability of language resources for most European languages.

This imbalance has direct consequences, for example:

- Asymmetric technology development: Companies and researchers have difficulty creating AI solutions adapted to specific languages because resources are limited.

- Technological dependence: Europe risks becoming dependent on language solutions developed outside its cultural and normative context.

Addressing this gap is not only a matter of inclusion, but also represents a large-scale economic opportunity, capable of generating huge gains in both trade and technological innovation. To address these challenges, the European Commission has launched the European Language Data Space (LDS), a decentralised infrastructure that promotes the secure and controlled exchange of language data among multiple actors in the European ecosystem.

Unlike a simple centralised repository, the LDS functions as a language data marketplace that allows participants to share, sell or license their data under clearly defined conditions and with full control over the use of the data.

The European Language Data Space (LDS), with a beta version operational, represents a decisive step towards democratising language technologies across all languages of the European Union. We tell you the keys to this project and the next steps.

How does this platform work?

LDS is based on a decentralised peer-to-peer (P2P) architecture that allows users to interact directly with each other, without the need for a central server or single authority, where each participant maintains control of its own data. The key elements of LDS operation are:

1. Decentralised and sovereign architecture

Each participant (whether data provider or data consumer) can locally install the LDS Connector, a software that allows interacting directly with other participants without the need for a central server.. This approach ensures:

-

Data sovereignty: owners retain full control over who can access their data and under what conditions of use.

-

Trust and security: Only eligible and authorised participants, legal entities registered in the EU, can be part of the ecosystem.

- Interoperability: is compatible with other European data spaces, following common standards.

2. Data exchange flow

The exchange process follows a structured flow between two main actors:

- The providers describe their linguistic datasets, establish access policies (licences, prices) and publish these offers in the catalogue.